- What's New

- Function Overview

- Product Bulletin

- Service Overview

- Billing

- Billing Overview

- Billing for Compute Resources

- Billing for Storage Resources

- Billing for Scanned Data

- Package Billing

- Billing Examples

- Renewing Subscriptions

- Bills

- Arrears

- Billing Termination

- Billing FAQ

- What Billing Modes Does DLI Offer?

- When Is a Data Lake Queue Idle?

- How Do I Troubleshoot DLI Billing Issues?

- Why Am I Still Being Billed on a Pay-per-Use Basis After I Purchased a Package?

- How Do I View the Usage of a Package?

- How Do I View a Job's Scanned Data Volume?

- Would a Pay-Per-Use Elastic Resource Pool Not Be Billed if No Job Is Submitted for Execution?

- Do I Need to Pay Extra Fees for Purchasing a Queue Billed Based on the Scanned Data Volume?

- How Is the Usage Beyond the Package Limit Billed?

- What Are the Actual CUs, CU Range, and Specifications of an Elastic Resource Pool?

- Change History

- Getting Started

- User Guide

- DLI Job Development Process

- Preparations

- Creating an Elastic Resource Pool and Queues Within It

- Overview of DLI Elastic Resource Pools and Queues

- Creating an Elastic Resource Pool and Creating Queues Within It

- Managing Elastic Resource Pools

- Managing Queues

- Viewing Basic Information About a Queue

- Queue Permission Management

- Allocating a Queue to an Enterprise Project

- Creating an SMN Topic

- Managing Queue Tags

- Setting Queue Properties

- Testing Address Connectivity

- Deleting a Queue

- Auto Scaling of Standard Queues

- Setting a Scheduled Auto Scaling Task for a Standard Queue

- Changing the CIDR Block for a Standard Queue

- Example Use Case: Creating an Elastic Resource Pool and Running Jobs

- Example Use Case: Configuring Scaling Policies for Queues in an Elastic Resource Pool

- Creating a Non-Elastic Resource Pool Queue (Discarded and Not Recommended)

- Creating Databases and Tables

- Data Migration and Transmission

- Overview

- Migrating Data from External Data Sources to DLI

- Overview of Data Migration Scenarios

- Using CDM to Migrate Data to DLI

- Example Typical Scenario: Migrating Data from Hive to DLI

- Example Typical Scenario: Migrating Data from Kafka to DLI

- Example Typical Scenario: Migrating Data from Elasticsearch to DLI

- Example Typical Scenario: Migrating Data from RDS to DLI

- Example Typical Scenario: Migrating Data from GaussDB(DWS) to DLI

- Configuring DLI to Read and Write Data from and to External Data Sources

- Configuring DLI to Read and Write External Data Sources

- Configuring the Network Connection Between DLI and Data Sources (Enhanced Datasource Connection)

- Using DEW to Manage Access Credentials for Data Sources

- Using DLI Datasource Authentication to Manage Access Credentials for Data Sources

- Managing Enhanced Datasource Connections

- Viewing Basic Information About an Enhanced Datasource Connection

- Enhanced Connection Permission Management

- Binding an Enhanced Datasource Connection to an Elastic Resource Pool

- Unbinding an Enhanced Datasource Connection from an Elastic Resource Pool

- Adding a Route for an Enhanced Datasource Connection

- Deleting the Route for an Enhanced Datasource Connection

- Modifying Host Information in an Elastic Resource Pool

- Enhanced Datasource Connection Tag Management

- Deleting an Enhanced Datasource Connection

- Example Typical Scenario: Connecting DLI to a Data Source on a Private Network

- Example Typical Scenario: Connecting DLI to a Data Source on a Public Network

- Configuring an Agency to Allow DLI to Access Other Cloud Services

- Submitting a SQL Job Using DLI

- Submitting a Flink Job Using DLI

- Submitting a Spark Job Using DLI

- Using Cloud Eye to Monitor DLI

- Using CTS to Audit DLI

- Permissions Management

- Common DLI Management Operations

- Best Practices

- Overview

- Data Migration

- Data Analysis

- Connections

- Developer Guide

- SQL Jobs

- Flink OpenSource SQL Jobs

- Reading Data from Kafka and Writing Data to RDS

- Reading Data from Kafka and Writing Data to GaussDB(DWS)

- Reading Data from Kafka and Writing Data to Elasticsearch

- Reading Data from MySQL CDC and Writing Data to GaussDB(DWS)

- Reading Data from PostgreSQL CDC and Writing Data to GaussDB(DWS)

- Configuring High-Reliability Flink Jobs (Automatic Restart upon Exceptions)

- Flink Jar Jobs

- Flink Job Agencies

- Spark Jar Jobs

- Using Spark Jar Jobs to Read and Query OBS Data

- Using the Spark Job to Access DLI Metadata

- Using Spark-submit to Submit a Spark Jar Job

- Submitting a Spark Jar Job Using Livy

- Using Spark Jobs to Access Data Sources of Datasource Connections

- Spark Job Agencies

- Spark SQL Syntax Reference

- Common Configuration Items

- Spark SQL Syntax

- Spark Open Source Commands

- Databases

- Tables

- Creating an OBS Table

- Creating a DLI Table

- Deleting a Table

- Viewing a Table

- Modifying a Table

- Partition-related Syntax

- Adding Partition Data (Only OBS Tables Supported)

- Renaming a Partition (Only OBS Tables Supported)

- Deleting a Partition

- Deleting Partitions by Specifying Filter Criteria (Only OBS Tables Supported)

- Altering the Partition Location of a Table (Only OBS Tables Supported)

- Updating Partitioned Table Data (Only OBS Tables Supported)

- Updating Table Metadata with REFRESH TABLE

- Backing Up and Restoring Data of Multiple Versions

- Table Lifecycle Management

- Data

- Exporting Query Results

- Datasource Connections

- Creating a Datasource Connection with an HBase Table

- Creating a Datasource Connection with an OpenTSDB Table

- Creating a Datasource Connection with a DWS Table

- Creating a Datasource Connection with an RDS Table

- Creating a Datasource Connection with a CSS Table

- Creating a Datasource Connection with a DCS Table

- Creating a Datasource Connection with a DDS Table

- Creating a Datasource Connection with an Oracle Table

- Views

- Viewing the Execution Plan

- Data Permissions

- Data Types

- User-Defined Functions

- Built-In Functions

- Date Functions

- Overview

- add_months

- current_date

- current_timestamp

- date_add

- dateadd

- date_sub

- date_format

- datediff

- datediff1

- datepart

- datetrunc

- day/dayofmonth

- from_unixtime

- from_utc_timestamp

- getdate

- hour

- isdate

- last_day

- lastday

- minute

- month

- months_between

- next_day

- quarter

- second

- to_char

- to_date

- to_date1

- to_utc_timestamp

- trunc

- unix_timestamp

- weekday

- weekofyear

- year

- String Functions

- Overview

- ascii

- concat

- concat_ws

- char_matchcount

- encode

- find_in_set

- get_json_object

- instr

- instr1

- initcap

- keyvalue

- length

- lengthb

- levenshtein

- locate

- lower/lcase

- lpad

- ltrim

- parse_url

- printf

- regexp_count

- regexp_extract

- replace

- regexp_replace

- regexp_replace1

- regexp_instr

- regexp_substr

- repeat

- reverse

- rpad

- rtrim

- soundex

- space

- substr/substring

- substring_index

- split_part

- translate

- trim

- upper/ucase

- Mathematical Functions

- Aggregate Functions

- Window Functions

- Other Functions

- SELECT

- Identifiers

- aggregate_func

- alias

- attr_expr

- attr_expr_list

- attrs_value_set_expr

- boolean_expression

- class_name

- col

- col_comment

- col_name

- col_name_list

- condition

- condition_list

- cte_name

- data_type

- db_comment

- db_name

- else_result_expression

- file_format

- file_path

- function_name

- groupby_expression

- having_condition

- hdfs_path

- input_expression

- input_format_classname

- jar_path

- join_condition

- non_equi_join_condition

- number

- num_buckets

- output_format_classname

- partition_col_name

- partition_col_value

- partition_specs

- property_name

- property_value

- regex_expression

- result_expression

- row_format

- select_statement

- separator

- serde_name

- sql_containing_cte_name

- sub_query

- table_comment

- table_name

- table_properties

- table_reference

- view_name

- view_properties

- when_expression

- where_condition

- window_function

- Operators

- Flink SQL Syntax Reference

- Flink OpenSource SQL 1.15 Syntax Reference

- Constraints and Definitions

- Overview

- Flink OpenSource SQL 1.15 Usage

- Formats

- Connectors

- DML Syntax

- Functions

- UDFs

- Type Inference

- Parameter Transfer

- Built-In Functions

- Comparison Functions

- Logical Functions

- Arithmetic Functions

- String Functions

- Temporal Functions

- Conditional Functions

- Type Conversion Functions

- Collection Functions

- JSON Functions

- Value Construction Functions

- Value Retrieval Functions

- Grouping Functions

- Hash Functions

- Aggregate Functions

- Table-Valued Functions

- Flink OpenSource SQL 1.12 Syntax Reference

- Flink OpenSource SQL 1.10 Syntax Reference

- Constraints and Definitions

- Flink OpenSource SQL 1.10 Syntax

- Data Definition Language (DDL)

- Creating a Source Table

- Creating a Result Table

- ClickHouse Result Table

- Kafka Result Table

- Upsert Kafka Result Table

- DIS Result Table

- JDBC Result Table

- GaussDB(DWS) Result Table

- Redis Result Table

- SMN Result Table

- HBase Result Table

- Elasticsearch Result Table

- OpenTSDB Result Table

- User-defined Result Table

- Print Result Table

- File System Result Table

- Creating a Dimension Table

- Data Manipulation Language (DML)

- Functions

- Historical Version

- Flink SQL Syntax (This Syntax Will Not Evolve. Use Flink OpenSource SQL Instead.)

- Constraints and Definitions

- Overview

- Creating a Source Stream

- Creating a Sink Stream

- CloudTable HBase Sink Stream

- CloudTable OpenTSDB Sink Stream

- MRS OpenTSDB Sink Stream

- CSS Elasticsearch Sink Stream

- DCS Sink Stream

- DDS Sink Stream

- DIS Sink Stream

- DMS Sink Stream

- DWS Sink Stream (JDBC Mode)

- DWS Sink Stream (OBS-based Dumping)

- MRS HBase Sink Stream

- MRS Kafka Sink Stream

- Open-Source Kafka Sink Stream

- File System Sink Stream (Recommended)

- OBS Sink Stream

- RDS Sink Stream

- SMN Sink Stream

- Creating a Temporary Stream

- Creating a Dimension Table

- Custom Stream Ecosystem

- Data Manipulation Language (DML)

- Data Types

- User-Defined Functions

- Built-In Functions

- Geographical Functions

- Configuring Time Models

- Pattern Matching

- StreamingML

- Reserved Keywords

- HetuEngine SQL Syntax Reference

- HetuEngine SQL Syntax

- Before You Start

- Data Type

- DDL Syntax

- CREATE SCHEMA

- CREATE TABLE

- CREATE TABLE AS

- CREATE TABLE LIKE

- CREATE VIEW

- ALTER TABLE

- ALTER VIEW

- ALTER SCHEMA

- DROP SCHEMA

- DROP TABLE

- DROP VIEW

- TRUNCATE TABLE

- COMMENT

- VALUES

- SHOW Syntax Overview

- SHOW SCHEMAS (DATABASES)

- SHOW TABLES

- SHOW TBLPROPERTIES TABLE|VIEW

- SHOW TABLE/PARTITION EXTENDED

- SHOW FUNCTIONS

- SHOW PARTITIONS

- SHOW COLUMNS

- SHOW CREATE TABLE

- SHOW VIEWS

- SHOW CREATE VIEW

- DML Syntax

- DQL Syntax

- Auxiliary Command Syntax

- Reserved Keywords

- SQL Functions and Operators

- Logical Operators

- Comparison Functions and Operators

- Condition Expression

- Lambda Expression

- Conversion Functions

- Mathematical Functions and Operators

- Bitwise Functions

- Decimal Functions and Operators

- String Functions and Operators

- Regular Expressions

- Binary Functions and Operators

- JSON Functions and Operators

- Date and Time Functions and Operators

- Aggregate Functions

- Window Functions

- Array Functions and Operators

- Map Functions and Operators

- URL Function

- UUID Function

- Color Function

- Teradata Function

- Data Masking Functions

- IP Address Functions

- Quantile Digest Functions

- T-Digest Functions

- Implicit Data Type Conversion

- Appendix

- API Reference

- Before You Start

- Overview

- Calling APIs

- Getting Started

- Permission-related APIs

- Global Variable-related APIs

- APIs Related to Resource Tags

- APIs Related to Enhanced Datasource Connections

- Creating an Enhanced Datasource Connection

- Deleting an Enhanced Datasource Connection

- Listing Enhanced Datasource Connections

- Querying an Enhanced Datasource Connection

- Binding a Queue

- Unbinding a Queue

- Modifying Host Information

- Querying Authorization of an Enhanced Datasource Connection

- Creating a Route

- Deleting a Route

- Datasource Authentication-related APIs

- APIs Related to Elastic Resource Pools

- Creating an Elastic Resource Pool

- Querying All Elastic Resource Pools

- Deleting an Elastic Resource Pool

- Modifying Elastic Resource Pool Information

- Querying All Queues in an Elastic Resource Pool

- Associating a Queue with an Elastic Resource Pool

- Viewing Scaling History of an Elastic Resource Pool

- Modifying the Scaling Policy of a Queue Associated with an Elastic Resource Pool

- Queue-related APIs (Recommended)

- SQL Job-related APIs

- SQL Template-related APIs

- Flink Job-related APIs

- Creating a SQL Job

- Updating a SQL Job

- Creating a Flink Jar Job

- Updating a Flink Jar Job

- Running Jobs in Batches

- Listing Jobs

- Querying Job Details

- Querying the Job Execution Plan

- Stopping Jobs in Batches

- Deleting a Job

- Deleting Jobs in Batches

- Exporting a Flink Job

- Importing a Flink Job

- Generating a Static Stream Graph for a Flink SQL Job

- APIs Related to Flink Job Templates

- Flink Job Management APIs

- Spark Job-related APIs

- APIs Related to Spark Job Templates

- Permissions Policies and Supported Actions

- Out-of-Date APIs

- Agency-related APIs (Discarded)

- Package Group-related APIs (Discarded)

- Uploading a Package Group (Discarded)

- Listing Package Groups (Discarded)

- Uploading a JAR Package Group (Discarded)

- Uploading a PyFile Package Group (Discarded)

- Uploading a File Package Group (Discarded)

- Querying Resource Packages in a Group (Discarded)

- Deleting a Resource Package from a Group (Discarded)

- Changing the Owner of a Group or Resource Package (Discarded)

- APIs Related to Spark Batch Processing (Discarded)

- SQL Job-related APIs (Discarded)

- Resource-related APIs (Discarded)

- Permission-related APIs (Discarded)

- Queue-related APIs (Discarded)

- Datasource Authentication-related APIs (Discarded)

- APIs Related to Enhanced Datasource Connections (Discarded)

- Template-related APIs (Discarded)

- Table-related APIs (Discarded)

- APIs Related to SQL Jobs (Discarded)

- APIs Related to Data Upload (Discarded)

- Cluster-related APIs

- APIs Related to Flink Jobs (Discarded)

- Public Parameters

- SDK Reference

- FAQs

- DLI Basics

- What Are the Differences Between DLI Flink and MRS Flink?

- What Are the Differences Between MRS Spark and DLI Spark?

- How Do I Upgrade the Engine Version of a DLI Job?

- Where Can Data Be Stored in DLI?

- Can I Import OBS Bucket Data Shared by Other Tenants into DLI?

- Regions and AZs

- Can a Member Account Use Global Variables Created by Other Member Accounts?

- Is DLI Affected by the Apache Spark Command Injection Vulnerability (CVE-2022-33891)?

- How Do I Manage Jobs Running on DLI?

- How Do I Change the Field Names of an Existing Table on DLI?

- DLI Elastic Resource Pools and Queues

- How Can I Check the Actual and Used CUs for an Elastic Resource Pool as Well as the Required CUs for a Job?

- How Do I Check for a Backlog of Jobs in the Current DLI Queue?

- How Do I View the Load of a DLI Queue?

- How Do I Monitor Job Exceptions on a DLI Queue?

- How Do I Migrate an Old Version Spark Queue to a General-Purpose Queue?

- What Do I Do If I Encounter a Timeout Exception When Executing DLI SQL Statements on the default Queue?

- DLI Databases and Tables

- Why Am I Unable to Query a Table on the DLI Console?

- What Do I Do If the Compression Rate of an OBS Table Is High?

- What Do I Do If Inconsistent Character Encoding Leads to Garbled Characters?

- Do I Need to Regrant Permissions to Users and Projects After Deleting and Recreating a Table with the Same Name?

- What Do I Do If Files Imported into a DLI Partitioned Table Lack Data for the Partition Columns, Causing Query Failures After the Import Is Complete?

- How Do I Fix Incorrect Data in an OBS Foreign Table Caused by Newline Characters in OBS File Fields?

- How Do I Prevent a Cartesian Product Query and Resource Overload Due to Missing "ON" Conditions in Table Joins?

- What Do I Do If I Can't Query Data After Manually Adding It to the Partition Directory of an OBS Table?

- Why Does the "insert overwrite" Operation Affect All Data in a Partitioned Table Instead of Just the Targeted Partition?

- Why Does the "create_date" Field in an RDS Table (Datetime Data Type) Appear as a Timestamp in DLI Queries?

- What Do I Do If Renaming a Table After a SQL Job Causes an Incorrect Data Size?

- How Can I Resolve Data Inconsistencies When Importing Data from DLI to OBS?

- Enhanced Datasource Connections

- What Do I Do If I Can't Bind an Enhanced Datasource Connection to a Queue?

- How Do I Resolve a Failure in Connecting DLI to GaussDB(DWS) Through an Enhanced Datasource Connection?

- What Do I Do If the Datasource Connection Is Successfully Created but the Network Connectivity Test Fails?

- How Do I Configure Network Connectivity Between a DLI Queue and a Data Source?

- Why Is Creating a VPC Peering Connection Necessary for Enhanced Datasource Connections in DLI?

- What Do I Do If Creating a Datasource Connection in DLI Gets Stuck in the "Creating" State When Binding It to a Queue?

- How Do I Resolve the "communication link failure" Error When Using a Newly Created Datasource Connection That Appears to Be Activated?

- How Do I Troubleshoot a Connection Timeout Issue That Isn't Recorded in Logs When Accessing MRS HBase Through a Datasource Connection?

- How Do I Fix the "Failed to get subnet" Error When Creating a Datasource Connection in DLI?

- What Do I Do If I Encounter the "Incorrect string value" Error When Executing insert overwrite on a Datasource RDS Table?

- How Do I Resolve the Null Pointer Error When Creating an RDS Datasource Table?

- Error Message "org.postgresql.util.PSQLException: ERROR: tuple concurrently updated" Is Displayed When the System Executes insert overwrite on a Datasource GaussDB(DWS) Table

- RegionTooBusyException Is Reported When Data Is Imported to a CloudTable HBase Table Through a Datasource Table

- What Do I Do If a Null Value Is Written into a Non-Null Field When Using a DLI Datasource Connection to Connect to a GaussDB(DWS) Table?

- What Do I Do If an Insert Operation Fails After the Schema of the GaussDB(DWS) Source Table Is Updated?

- How Do I Insert Data into an RDS Table with an Auto-Increment Primary Key Using DLI?

- SQL Jobs

- SQL Job Development

- SQL Jobs

- How Do I Merge Small Files?

- How Do I Use DLI to Access Data in an OBS Bucket?

- How Do I Specify an OBS Path When Creating an OBS Table?

- How Do I Create a Table Using JSON Data in an OBS Bucket?

- How Can I Use the count Function to Perform Aggregation?

- How Do I Synchronize DLI Table Data Across Regions?

- How Do I Insert Table Data into Specific Fields of a Table Using a SQL Job?

- How Do I Troubleshoot Slow SQL Jobs?

- How Do I View DLI SQL Logs?

- How Do I View SQL Execution Records in DLI?

- What Do I Do When Data Skew Occurs During the Execution of a SQL Job?

- Why Does a SQL Job That Has Join Operations Stay in the Running State?

- Why Is a SQL Job Stuck in the Submitting State?

- SQL Job O&M

- Why Is Error "path obs://xxx already exists" Reported When Data Is Exported to OBS?

- Why Is Error "SQL_ANALYSIS_ERROR: Reference 't.id' is ambiguous, could be: t.id, t.id.;" Displayed When Two Tables Are Joined?

- Why Is Error "The current account does not have permission to perform this operation,the current account was restricted. Restricted for no budget." Reported when a SQL Statement Is Executed?

- Why Is Error "There should be at least one partition pruning predicate on partitioned table XX.YYY" Reported When a Query Statement Is Executed?

- Why Is Error "IllegalArgumentException: Buffer size too small. size" Reported When Data Is Loaded to an OBS Foreign Table?

- Why Is Error "DLI.0002 FileNotFoundException" Reported During SQL Job Running?

- Why Is a Schema Parsing Error Reported When I Create a Hive Table Using CTAS?

- Why Is Error "org.apache.hadoop.fs.obs.OBSIOException" Reported When I Run DLI SQL Scripts on DataArts Studio?

- Why Is Error "UQUERY_CONNECTOR_0001:Invoke DLI service api failed" Reported in the Job Log When I Use CDM to Migrate Data to DLI?

- Why Is Error "File not Found" Reported When I Access a SQL Job?

- Why Is Error "DLI.0003: AccessControlException XXX" Reported When I Access a SQL Job?

- Why Is Error "DLI.0001: org.apache.hadoop.security.AccessControlException: verifyBucketExists on {{bucket name}}: status [403]" Reported When I Access a SQL Job?

- Why Am I Seeing the Error Message "The current account does not have permission to perform this operation,the current account was restricted. Restricted for no budget" When Executing a SQL Statement?

- Flink Jobs

- Flink Job Consulting

- What Data Formats and Sources Are Supported by DLI Flink Jobs?

- How Do I Authorize a Subuser to View Flink Jobs?

- How Do I Configure Auto Restart upon Exception for a Flink Job?

- How Do I Save Logs for Flink Jobs?

- Why Is Error "No such user. userName:xxxx." Reported on the Flink Job Management Page When I Grant Permission to a User?

- How Do I Restore a Flink Job from a Specific Checkpoint After Manually Stopping the Job?

- Why Is a Message Displayed Indicating That the SMN Topic Does Not Exist When I Use the SMN Topic in DLI?

- Flink SQL Jobs

- How Do I Map an OBS Table to a DLI Partitioned Table?

- How Do I Change the Number of Kafka Partitions in a Flink SQL Job Without Stopping It?

- How Do I Fix the DLI.0005 Error When Using EL Expressions to Create a Table in a Flink SQL Job?

- Why Is No Data Queried in the DLI Table Created Using the OBS File Path When Data Is Written to OBS by a Flink Job Output Stream?

- Why Does a Flink SQL Job Fail to Execute with "connect to DIS failed java.lang.IllegalArgumentException: Access key cannot be null" Displayed in the Log?

- Data Writing Fails After a Flink SQL Job Consumes Kafka Data and Sinks It to an Elasticsearch Cluster

- How Does Flink OpenSource SQL Parse Nested JSON?

- Why Is the Time Read by a Flink OpenSource SQL Job from an RDS Database Different from the RDS Database Time?

- Why Does Job Submission Fail When the failure-handler Parameter of the Elasticsearch Result Table for a Flink OpenSource SQL Job Is Set to retry_rejected?

- How Do I Configure Connection Retries for a Kafka Sink If It Is Disconnected?

- How Do I Write Data to Different Elasticsearch Clusters in a Flink Job?

- Why Does DIS Stream Not Exist During Job Semantic Check?

- Why Is Error "Timeout expired while fetching topic metadata" Repeatedly Reported in Flink JobManager Logs?

- Flink Jar Jobs

- Can I Upload Configuration Files for Flink Jar Jobs?

- Why Does a Flink Jar Package Conflict Result in Job Submission Failure?

- Why Does a Flink Jar Job Fail to Access GaussDB(DWS) with a Message Indicating Too Many Client Connections?

- Why Is Error Message "Authentication failed" Displayed While a Flink Jar Job Is Running?

- Why Is an Invalid OBS Bucket Name Error Reported After a Flink Job Submission Fails?

- Why Does the Flink Submission Fail Due to Hadoop JAR File Conflict?

- How Do I Locate a Flink Job Submission Error?

- Flink Job Performance Tuning

- What Is the Recommended Configuration for a Flink Job?

- Flink Job Performance Tuning

- How Do I Prevent Data Loss After Flink Job Restart?

- How Do I Locate a Flink Job Running Error?

- How Can I Check if a Flink Job Can Be Restored From a Checkpoint After Restarting It?

- Why Are Logs Not Written to the OBS Bucket After a DLI Flink Job Fails to Be Submitted for Running?

- Why Is the Flink Job Abnormal Due to Heartbeat Timeout Between JobManager and TaskManager?

- Spark Jobs

- Spark Job Development

- Spark Jobs

- How Do I Use Spark to Write Data into a DLI Table?

- How Do I Set the AK/SK for a Queue to Operate an OBS Table?

- How Do I View the Resource Usage of DLI Spark Jobs?

- How Do I Use Python Scripts to Access the MySQL Database If the pymysql Module Is Missing from the Spark Job Results Stored in MySQL?

- How Do I Run a Complex PySpark Program in DLI?

- How Do I Use JDBC to Set the spark.sql.shuffle.partitions Parameter to Improve the Task Concurrency?

- How Do I Read Uploaded Files for a Spark Jar Job?

- Why Can't I Find the Specified Python Environment After Adding the Python Package?

- Why Is a Spark Jar Job Stuck in the Submitting State?

- Spark Job O&M

- What Can I Do When Receiving java.lang.AbstractMethodError in the Spark Job?

- Why Do I Get "ResponseCode: 403" and "ResponseStatus: Forbidden" Errors When a Spark Job Accesses OBS Data?

- Why Do I Encounter the Error "verifyBucketExists on XXXX: status [403]" When Using a Spark Job to Access an OBS Bucket That I Have Permission to Access?

- Why Does a Job Running Timeout Occur When Processing a Large Amount of Data with a Spark Job?

- Why Does a Spark Job Fail to Execute with an Abnormal Access Directory Error When Accessing Files in SFTP?

- Why Does the Job Fail to Be Executed Due to Insufficient Database and Table Permissions?

- Why Is the global_temp Database Missing in the Job Log of Spark 3.x?

- Why Does Using DataSource Syntax to Create an OBS Table of Avro Type Fail When Accessing Metadata With Spark 2.3.x?

- DLI Resource Quotas

- DLI Permissions Management

- What Do I Do If I Receive an Error Message Stating That I Do Not Have Sufficient Permissions When Creating a Table After Upgrading the Engine Version of a Queue?

- What Is Column-Level Authorization for DLI Partitioned Tables?

- What Do I Do If I Encounter Insufficient Permissions While Updating Packages?

- Why Is Error "DLI.0003: Permission denied for resource..." Reported When I Run a SQL Statement?

- What Do I Do If I Can't Query Table Data After Being Granted Table Permissions?

- Will Granting Duplicate Permissions to a Table After Inheriting Database Permissions Cause an Error?

- Why Can't I Query a View After I'm Granted the Select Table Permission on the View?

- What Do I Do If I Receive a Message Saying I Don't Have Sufficient Permissions to Submit My Jobs to the Job Bucket?

- How Do I Resolve an Unauthorized OBS Bucket Error?

- DLI APIs

- More Documents

- User Guide (ME-Abu Dhabi Region)

- DLI Introduction

- Getting Started

- DLI Console Overview

- SQL Editor

- Job Management

- Queue Management

- Data Management

- Job Templates

- Datasource Connections

- Global Configuration

- UDFs

- Permissions Management

- Change History

- API Reference (ME-Abu Dhabi Region)

- Before You Start

- Overview

- Calling APIs

- Getting Started

- Permission-related APIs

- Queue-related APIs (Recommended)

- APIs Related to SQL Jobs

- Package Group-related APIs

- APIs Related to Flink Jobs

- Granting OBS Permissions to DLI

- Creating a SQL Job

- Updating a SQL Job

- Creating a Flink Jar Job

- Updating a Flink Jar Job

- Running Jobs in Batches

- Querying the Job List

- Querying Job Details

- Querying the Job Execution Plan

- Stopping Jobs in Batches

- Deleting a Job

- Deleting Jobs in Batches

- Exporting a Flink Job

- Importing a Flink Job

- APIs Related to Spark Jobs

- APIs Related to Flink Job Templates

- APIs Related to Enhanced Datasource Connections

- Creating an Enhanced Datasource Connection

- Deleting an Enhanced Datasource Connection

- Querying an Enhanced Datasource Connection List

- Querying an Enhanced Datasource Connection

- Binding a Queue

- Unbinding a Queue

- Modifying the Host Information

- Querying Authorization of an Enhanced Datasource Connection

- Global Variable-related APIs

- Public Parameters

- Change History

- SQL Syntax Reference (ME-Abu Dhabi Region)

- Spark SQL Syntax Reference

- Common Configuration Items of Batch SQL Jobs

- SQL Syntax Overview of Batch Jobs

- Databases

- Creating an OBS Table

- Creating a DLI Table

- Deleting a Table

- Viewing Tables

- Modifying a Table

- Syntax for Partitioning a Table

- Adding Partition Data (Only OBS Tables Supported)

- Renaming a Partition (Only OBS Tables Supported)

- Deleting a Partition

- Deleting Partitions by Specifying Filter Criteria (Only OBS Tables Supported)

- Altering the Partition Location of a Table (Only OBS Tables Supported)

- Updating Partitioned Table Data (Only OBS Tables Supported)

- Updating Table Metadata with REFRESH TABLE

- Importing Data to the Table

- Inserting Data

- Clearing Data

- Exporting Search Results

- Backing Up and Restoring Data of Multiple Versions

- Creating a Datasource Connection with an HBase Table

- Creating a Datasource Connection with an OpenTSDB Table

- Creating a Datasource Connection with a DWS Table

- Creating a Datasource Connection with an RDS Table

- Creating a Datasource Connection with a CSS Table

- Creating a Datasource Connection with a DCS Table

- Creating a Datasource Connection with a DDS Table

- Views

- Viewing the Execution Plan

- Data Permissions Management

- Data Types

- User-Defined Functions

- Built-in Functions

- Basic SELECT Statements

- Filtering

- Sorting

- Grouping

- JOIN

- Subquery

- Alias

- Set Operations

- WITH...AS

- CASE...WHEN

- OVER Clause

- Flink SQL Syntax

- SQL Syntax Constraints and Definitions

- SQL Syntax Overview of Stream Jobs

- Creating a Source Stream

- Creating a Sink Stream

- MRS OpenTSDB Sink Stream

- CSS Elasticsearch Sink Stream

- DCS Sink Stream

- DDS Sink Stream

- DIS Sink Stream

- DMS Sink Stream

- DWS Sink Stream (JDBC Mode)

- DWS Sink Stream (OBS-based Dumping)

- MRS HBase Sink Stream

- MRS Kafka Sink Stream

- Open-Source Kafka Sink Stream

- File System Sink Stream (Recommended)

- OBS Sink Stream

- RDS Sink Stream

- SMN Sink Stream

- Creating a Temporary Stream

- Creating a Dimension Table

- Custom Stream Ecosystem

- Data Type

- Built-In Functions

- User-Defined Functions

- Geographical Functions

- SELECT

- Condition Expression

- Window

- JOIN Between Stream Data and Table Data

- Configuring Time Models

- Pattern Matching

- StreamingML

- Reserved Keywords

- Identifiers

- aggregate_func

- alias

- attr_expr

- attr_expr_list

- attrs_value_set_expr

- boolean_expression

- col

- col_comment

- col_name

- col_name_list

- condition

- condition_list

- cte_name

- data_type

- db_comment

- db_name

- else_result_expression

- file_format

- file_path

- function_name

- groupby_expression

- having_condition

- input_expression

- join_condition

- non_equi_join_condition

- number

- partition_col_name

- partition_col_value

- partition_specs

- property_name

- property_value

- regex_expression

- result_expression

- select_statement

- separator

- sql_containing_cte_name

- sub_query

- table_comment

- table_name

- table_properties

- table_reference

- when_expression

- where_condition

- window_function

- Operators

- User Guide (Paris Region)

- API Reference (Paris Region)

- Before You Start

- Overview

- Calling APIs

- Getting Started

- Permission-related APIs

- Queue-related APIs (Recommended)

- APIs Related to SQL Jobs

- Package Group-related APIs

-

APIs Related to Flink Jobs

- Granting OBS Permissions to DLI

- Creating a SQL Job

- Updating a SQL Job

- Creating a Flink Jar job

- Updating a Flink Jar Job

- Running Jobs in Batches

- Querying the Job List

- Querying Job Details

- Querying the Job Execution Plan

- Stopping Jobs in Batches

- Deleting a Job

- Deleting Jobs in Batches

- Exporting a Flink Job

- Importing a Flink Job

- APIs Related to Spark jobs

- APIs Related to Flink Job Templates

-

APIs Related to Enhanced Datasource Connections

- Creating an Enhanced Datasource Connection

- Deleting an Enhanced Datasource Connection

- Querying an Enhanced Datasource Connection List

- Querying an Enhanced Datasource Connection

- Binding a Queue

- Unbinding a Queue

- Modifying the Host Information

- Querying Authorization of an Enhanced Datasource Connection

- Global Variable-related APIs

- Public Parameters

- Change History

-

SQL Syntax Reference (Paris Region)

-

Spark SQL Syntax Reference

- Common Configuration Items of Batch SQL Jobs

- SQL Syntax Overview of Batch Jobs

- Databases

- Creating an OBS Table

- Creating a DLI Table

- Deleting a Table

- Viewing Tables

- Modifying a Table

-

Syntax for Partitioning a Table

- Adding Partition Data (Only OBS Tables Supported)

- Renaming a Partition (Only OBS Tables Supported)

- Deleting a Partition

- Deleting Partitions by Specifying Filter Criteria (Only OBS Tables Supported)

- Altering the Partition Location of a Table (Only OBS Tables Supported)

- Updating Partitioned Table Data (Only OBS Tables Supported)

- Updating Table Metadata with REFRESH TABLE

- Importing Data to the Table

- Inserting Data

- Clearing Data

- Exporting Search Results

- Backing Up and Restoring Data of Multiple Versions

- Creating a Datasource Connection with an HBase Table

- Creating a Datasource Connection with an OpenTSDB Table

- Creating a Datasource Connection with a DWS table

- Creating a Datasource Connection with an RDS Table

- Creating a Datasource Connection with a CSS Table

- Creating a Datasource Connection with a DCS Table

- Creating a Datasource Connection with a DDS Table

- Views

- Viewing the Execution Plan

- Data Permissions Management

- Data Types

- User-Defined Functions

- Built-in Functions

- Basic SELECT Statements

- Filtering

- Sorting

- Grouping

- JOIN

- Subquery

- Alias

- Set Operations

- WITH...AS

- CASE...WHEN

- OVER Clause

-

Flink SQL Syntax

- SQL Syntax Constraints and Definitions

- SQL Syntax Overview of Stream Jobs

- Creating a Source Stream

-

Creating a Sink Stream

- MRS OpenTSDB Sink Stream

- CSS Elasticsearch Sink Stream

- DCS Sink Stream

- DDS Sink Stream

- DIS Sink Stream

- DMS Sink Stream

- DWS Sink Stream (JDBC Mode)

- DWS Sink Stream (OBS-based Dumping)

- MRS HBase Sink Stream

- MRS Kafka Sink Stream

- Open-Source Kafka Sink Stream

- File System Sink Stream (Recommended)

- OBS Sink Stream

- RDS Sink Stream

- SMN Sink Stream

- Creating a Temporary Stream

- Creating a Dimension Table

- Custom Stream Ecosystem

- Data Type

- Built-In Functions

- User-Defined Functions

- Geographical Functions

- SELECT

- Condition Expression

- Window

- JOIN Between Stream Data and Table Data

- Configuring Time Models

- Pattern Matching

- StreamingML

- Reserved Keywords

-

Identifiers

- aggregate_func

- alias

- attr_expr

- attr_expr_list

- attrs_value_set_expr

- boolean_expression

- col

- col_comment

- col_name

- col_name_list

- condition

- condition_list

- cte_name

- data_type

- db_comment

- db_name

- else_result_expression

- file_format

- file_path

- function_name

- groupby_expression

- having_condition

- input_expression

- join_condition

- non_equi_join_condition

- number

- partition_col_name

- partition_col_value

- partition_specs

- property_name

- property_value

- regex_expression

- result_expression

- select_statement

- separator

- sql_containing_cte_name

- sub_query

- table_comment

- table_name

- table_properties

- table_reference

- when_expression

- where_condition

- window_function

- Operators

-

Spark SQL Syntax Reference

-

User Guide (Kuala Lumpur Region)

- DLI Introduction

- Getting Started

- DLI Console Overview

- SQL Editor

- Job Management

- Queue Management

- Data Management

- Job Templates

- Datasource Connections

- Global Configuration

- UDFs

- Permissions Management

- Change History

-

API Reference (Kuala Lumpur Region)

- Before You Start

- Overview

- Calling APIs

- Getting Started

- Permission-related APIs

- Queue-related APIs (Recommended)

- APIs Related to SQL Jobs

- Package Group-related APIs

-

APIs Related to Flink Jobs

- Granting OBS Permissions to DLI

- Creating a SQL Job

- Updating a SQL Job

- Creating a Flink Jar job

- Updating a Flink Jar Job

- Running Jobs in Batches

- Querying the Job List

- Querying Job Details

- Querying the Job Execution Plan

- Stopping Jobs in Batches

- Deleting a Job

- Deleting Jobs in Batches

- Exporting a Flink Job

- Importing a Flink Job

- APIs Related to Spark jobs

- APIs Related to Flink Job Templates

-

APIs Related to Enhanced Datasource Connections

- Creating an Enhanced Datasource Connection

- Deleting an Enhanced Datasource Connection

- Querying an Enhanced Datasource Connection List

- Querying an Enhanced Datasource Connection

- Binding a Queue

- Unbinding a Queue

- Modifying the Host Information

- Querying Authorization of an Enhanced Datasource Connection

- Global Variable-related APIs

- Permissions Policies and Supported Actions

- Public Parameters

- Change History

-

SQL Syntax Reference (Kuala Lumpur Region)

-

Spark SQL Syntax Reference

- Common Configuration Items of Batch SQL Jobs

- SQL Syntax Overview of Batch Jobs

- Databases

- Creating an OBS Table

- Creating a DLI Table

- Deleting a Table

- Viewing Tables

- Modifying a Table

-

Syntax for Partitioning a Table

- Adding Partition Data (Only OBS Tables Supported)

- Renaming a Partition (Only OBS Tables Supported)

- Deleting a Partition

- Deleting Partitions by Specifying Filter Criteria (Only OBS Tables Supported)

- Altering the Partition Location of a Table (Only OBS Tables Supported)

- Updating Partitioned Table Data (Only OBS Tables Supported)

- Updating Table Metadata with REFRESH TABLE

- Importing Data to the Table

- Inserting Data

- Clearing Data

- Exporting Search Results

- Backing Up and Restoring Data of Multiple Versions

- Creating a Datasource Connection with an HBase Table

- Creating a Datasource Connection with an OpenTSDB Table

- Creating a Datasource Connection with a DWS table

- Creating a Datasource Connection with an RDS Table

- Creating a Datasource Connection with a CSS Table

- Creating a Datasource Connection with a DCS Table

- Creating a Datasource Connection with a DDS Table

- Views

- Viewing the Execution Plan

- Data Permissions Management

- Data Types

- User-Defined Functions

- Built-in Functions

- Basic SELECT Statements

- Filtering

- Sorting

- Grouping

- JOIN

- Subquery

- Alias

- Set Operations

- WITH...AS

- CASE...WHEN

- OVER Clause

-

Flink SQL Syntax

- SQL Syntax Constraints and Definitions

- SQL Syntax Overview of Stream Jobs

- Creating a Source Stream

-

Creating a Sink Stream

- CloudTable HBase Sink Stream

- CloudTable OpenTSDB Sink Stream

- MRS OpenTSDB Sink Stream

- CSS Elasticsearch Sink Stream

- DCS Sink Stream

- DDS Sink Stream

- DIS Sink Stream

- DMS Sink Stream

- DWS Sink Stream (JDBC Mode)

- DWS Sink Stream (OBS-based Dumping)

- MRS HBase Sink Stream

- MRS Kafka Sink Stream

- Open-Source Kafka Sink Stream

- File System Sink Stream (Recommended)

- OBS Sink Stream

- RDS Sink Stream

- SMN Sink Stream

- Creating a Temporary Stream

- Creating a Dimension Table

- Custom Stream Ecosystem

- Data Type

- Built-In Functions

- User-Defined Functions

- Geographical Functions

- SELECT

- Condition Expression

- Window

- JOIN Between Stream Data and Table Data

- Configuring Time Models

- Pattern Matching

- StreamingML

- Reserved Keywords

-

Identifiers

- aggregate_func

- alias

- attr_expr

- attr_expr_list

- attrs_value_set_expr

- boolean_expression

- col

- col_comment

- col_name

- col_name_list

- condition

- condition_list

- cte_name

- data_type

- db_comment

- db_name

- else_result_expression

- file_format

- file_path

- function_name

- groupby_expression

- having_condition

- input_expression

- join_condition

- non_equi_join_condition

- number

- partition_col_name

- partition_col_value

- partition_specs

- property_name

- property_value

- regex_expression

- result_expression

- select_statement

- separator

- sql_containing_cte_name

- sub_query

- table_comment

- table_name

- table_properties

- table_reference

- when_expression

- where_condition

- window_function

- Operators

-

Spark SQL Syntax Reference

-

User Guide (ME-Abu Dhabi Region)

- Videos

-

SQL Syntax Reference (To Be Offline)

- Notice on Taking This Syntax Reference Offline

-

Spark SQL Syntax Reference (Unavailable Soon)

- Common Configuration Items of Batch SQL Jobs

- SQL Syntax Overview of Batch Jobs

- Spark Open Source Commands

- Databases

- Creating an OBS Table

- Creating a DLI Table

- Deleting a Table

- Checking Tables

- Modifying a Table

-

Syntax for Partitioning a Table

- Adding Partition Data (Only OBS Tables Supported)

- Renaming a Partition (Only OBS Tables Supported)

- Deleting a Partition

- Deleting Partitions by Specifying Filter Criteria (Only Supported on OBS Tables)

- Altering the Partition Location of a Table (Only OBS Tables Supported)

- Updating Partitioned Table Data (Only OBS Tables Supported)

- Updating Table Metadata with REFRESH TABLE

- Importing Data to the Table

- Inserting Data

- Clearing Data

- Exporting Search Results

- Backing Up and Restoring Data of Multiple Versions

- Table Lifecycle Management

- Creating a Datasource Connection with an HBase Table

- Creating a Datasource Connection with an OpenTSDB Table

- Creating a Datasource Connection with a DWS table

- Creating a Datasource Connection with an RDS Table

- Creating a Datasource Connection with a CSS Table

- Creating a Datasource Connection with a DCS Table

- Creating a Datasource Connection with a DDS Table

- Creating a Datasource Connection with an Oracle Table

- Views

- Checking the Execution Plan

- Data Permissions Management

- Data Types

- User-Defined Functions

-

Built-in Functions

-

Date Functions

- Overview

- add_months

- current_date

- current_timestamp

- date_add

- dateadd

- date_sub

- date_format

- datediff

- datediff1

- datepart

- datetrunc

- day/dayofmonth

- from_unixtime

- from_utc_timestamp

- getdate

- hour

- isdate

- last_day

- lastday

- minute

- month

- months_between

- next_day

- quarter

- second

- to_char

- to_date

- to_date1

- to_utc_timestamp

- trunc

- unix_timestamp

- weekday

- weekofyear

- year

-

String Functions

- Overview

- ascii

- concat

- concat_ws

- char_matchcount

- encode

- find_in_set

- get_json_object

- instr

- instr1

- initcap

- keyvalue

- length

- lengthb

- levenshtein

- locate

- lower/lcase

- lpad

- ltrim

- parse_url

- printf

- regexp_count

- regexp_extract

- replace

- regexp_replace

- regexp_replace1

- regexp_instr

- regexp_substr

- repeat

- reverse

- rpad

- rtrim

- soundex

- space

- substr/substring

- substring_index

- split_part

- translate

- trim

- upper/ucase

- Mathematical Functions

- Aggregate Functions

- Window Functions

- Other Functions

-

Date Functions

- Basic SELECT Statements

- Filtering

- Sorting

- Grouping

- JOIN

- Subquery

- Alias

- Set Operations

- WITH...AS

- CASE...WHEN

- OVER Clause

- Flink OpenSource SQL 1.12 Syntax Reference

-

Flink Opensource SQL 1.10 Syntax Reference

- Constraints and Definitions

- Flink OpenSource SQL 1.10 Syntax

-

Data Definition Language (DDL)

- Creating a Source Table

-

Creating a Result Table

- ClickHouse Result Table

- Kafka Result Table

- Upsert Kafka Result Table

- DIS Result Table

- JDBC Result Table

- GaussDB(DWS) Result Table

- Redis Result Table

- SMN Result Table

- HBase Result Table

- Elasticsearch Result Table

- OpenTSDB Result Table

- User-defined Result Table

- Print Result Table

- File System Result Table

- Creating a Dimension Table

- Data Manipulation Language (DML)

- Functions

-

Historical Versions (Unavailable Soon)

-

Flink SQL Syntax (This Syntax Will Not Evolve. Use FlinkOpenSource SQL Instead.)

- SQL Syntax Constraints and Definitions

- SQL Syntax Overview of Stream Jobs

- Creating a Source Stream

-

Creating a Sink Stream

- CloudTable HBase Sink Stream

- CloudTable OpenTSDB Sink Stream

- MRS OpenTSDB Sink Stream

- CSS Elasticsearch Sink Stream

- DCS Sink Stream

- DDS Sink Stream

- DIS Sink Stream

- DMS Sink Stream

- DWS Sink Stream (JDBC Mode)

- DWS Sink Stream (OBS-based Dumping)

- MRS HBase Sink Stream

- MRS Kafka Sink Stream

- Open-Source Kafka Sink Stream

- File System Sink Stream (Recommended)

- OBS Sink Stream

- RDS Sink Stream

- SMN Sink Stream

- Creating a Temporary Stream

- Creating a Dimension Table

- Custom Stream Ecosystem

- Data Type

- Built-In Functions

- User-Defined Functions

- Geographical Functions

- SELECT

- Condition Expression

- Window

- JOIN Between Stream Data and Table Data

- Configuring Time Models

- Pattern Matching

- StreamingML

- Reserved Keywords

-

Flink SQL Syntax (This Syntax Will Not Evolve. Use FlinkOpenSource SQL Instead.)

-

Identifiers

- aggregate_func

- alias

- attr_expr

- attr_expr_list

- attrs_value_set_expr

- boolean_expression

- col

- col_comment

- col_name

- col_name_list

- condition

- condition_list

- cte_name

- data_type

- db_comment

- db_name

- else_result_expression

- file_format

- file_path

- function_name

- groupby_expression

- having_condition

- input_expression

- join_condition

- non_equi_join_condition

- number

- partition_col_name

- partition_col_value

- partition_specs

- property_name

- property_value

- regex_expression

- result_expression

- select_statement

- separator

- sql_containing_cte_name

- sub_query

- table_comment

- table_name

- table_properties

- table_reference

- when_expression

- where_condition

- window_function

- Operators

- General Reference

Copied.

Migrating Data from Elasticsearch to DLI

This section describes how to use the CDM data synchronization function to migrate data from a CSS Elasticsearch cluster to DLI. CDM can also synchronize data in both directions between a self-built Elasticsearch cluster and DLI.

Prerequisites

- You have created a DLI SQL queue. For details about how to create a DLI queue, see Creating a Queue.

CAUTION:

When you create a queue, set its Type to For SQL.

- You have created a CSS Elasticsearch cluster. For details about how to create a CSS cluster, see Creating a CSS Cluster.

In this example, the version of the created CSS cluster is 7.6.2, and security mode is disabled for the cluster.

- You have created a CDM cluster. For details about how to create a CDM cluster, see Creating a CDM Cluster.

NOTE:

- If the destination data source is an on-premises database, an Internet connection or Direct Connect is required. If the Internet is used, ensure that an EIP has been bound to the CDM cluster, the CDM security group allows outbound traffic from the host where the on-premises data source is located, that host can access the Internet, and the connection port has been enabled in the firewall rules.

- If the data source is a cloud service (CSS in this example), the network must meet the following requirements:

i. If the CDM cluster and the cloud service are in different regions, a public network or a dedicated connection is required for enabling communication between the CDM cluster and the cloud service. If the Internet is used for communication, ensure that an EIP has been bound to the CDM cluster, the host where the data source is located can access the Internet, and the port has been enabled in the firewall rules.

ii. If the CDM cluster and the cloud service are in the same region, VPC, subnet, and security group, they can communicate with each other by default. If the CDM cluster and the cloud service are in the same VPC but in different subnets or security groups, you must configure routing rules and security group rules.

For details about how to configure routes, see Configure routes. For details about how to configure security groups, see section Security Group Configuration Examples.

iii. The cloud service instance and the CDM cluster must belong to the same enterprise project. If they do not, you can modify the enterprise project of the workspace.

In this example, the VPC, subnet, and security group of the CDM cluster are the same as those of the CSS cluster.
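Before you create the CDM links, you may want to confirm that the port of a CSS node is reachable from the CDM network. The following is a minimal sketch that uses only Python's standard socket module. It assumes you run it from a host, such as an ECS, in the same VPC, subnet, and security group as the CDM cluster. The function name port_reachable is hypothetical.

```python
import socket


def port_reachable(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection resolves the host and performs the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name-resolution failures.
        return False
```

For example, port_reachable("192.168.0.10", 9200) uses a hypothetical node address and the default Elasticsearch HTTP port. It returns False if a security group or firewall rule blocks the port. A True result only shows that a TCP connection can be opened. It does not verify authentication or the CDM link settings.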

Step 1: Prepare Data

- Create an index for the CSS cluster and import data.

- Log in to the CSS management console and choose Clusters > Elasticsearch from the navigation pane on the left.

- On the Clusters page, click Access Kibana in the Operation column of the created CSS cluster.

- In the navigation pane of Kibana, choose Dev Tools. The Console page is displayed.

- On the displayed Console page, run the following command to create index my_test:

PUT /my_test
{
  "settings": {
    "number_of_shards": 1
  },
  "mappings": {
    "properties": {
      "productName": {
        "type": "text",
        "analyzer": "ik_smart"
      },
      "size": {
        "type": "keyword"
      }
    }
  }
}

- Run the following command to import data to the my_test index:

POST /my_test/_doc/_bulk
{"index":{}}
{"productName":"2017 Autumn New Shirts for Women", "size":"L"}
{"index":{}}
{"productName":"2017 Autumn New Shirts for Women", "size":"M"}
{"index":{}}
{"productName":"2017 Autumn New Shirts for Women", "size":"S"}
{"index":{}}
{"productName":"2018 Spring New Jeans for Women","size":"M"}
{"index":{}}
{"productName":"2018 Spring New Jeans for Women","size":"S"}
{"index":{}}
{"productName":"2017 Spring Casual Pants for Women","size":"L"}
{"index":{}}
{"productName":"2017 Spring Casual Pants for Women","size":"S"}

If the errors field in the command output is false, the data has been imported.
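The _bulk request body is newline-delimited JSON (NDJSON). Each action line and each document must be on its own line, and the body must end with a newline. If you prefer to generate the payload from a script instead of typing it in Kibana, the following minimal Python sketch shows one way to do it. The helper names build_bulk_body and bulk_succeeded are hypothetical. Sending the request is left to your HTTP client, for example a POST to /my_test/_bulk with the Content-Type: application/x-ndjson header.

```python
import json


def build_bulk_body(docs):
    """Serialize documents into an Elasticsearch _bulk body (NDJSON)."""
    lines = []
    for doc in docs:
        # Action line: index the next document with an auto-generated ID.
        lines.append(json.dumps({"index": {}}))
        # Source line: the document itself, serialized on a single line.
        lines.append(json.dumps(doc, ensure_ascii=False))
    # The _bulk API requires the body to end with a newline.
    return "\n".join(lines) + "\n"


def bulk_succeeded(response_text):
    """Return True if a _bulk response reports no item-level errors."""
    return json.loads(response_text).get("errors") is False


docs = [
    {"productName": "2017 Autumn New Shirts for Women", "size": "L"},
    {"productName": "2018 Spring New Jeans for Women", "size": "M"},
]
print(build_bulk_body(docs), end="")
```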

- Create a database and table on DLI.

- Log in to the DLI management console and click SQL Editor. On the displayed page, set Engine to spark and Queue to the created SQL queue.

Enter the following statement in the editing window to create a database for the migrated data, for example, testdb. For details about the syntax for creating a DLI database, see Creating a Database.

create database testdb;

- Create a table in the database. For details about the table creation syntax, see Creating a DLI Table Using the DataSource Syntax.

create table tablecss(size string, productname string);
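The DLI table mirrors the two fields of the my_test mapping. The field names are lowercased, and both the text and keyword fields are declared as string. The sketch below shows a hypothetical helper, create_table_ddl, that derives this statement from the mapping. The ES_TO_SPARK type table is a simplified assumption that covers only the types used in this example.

```python
# Assumed, simplified mapping from Elasticsearch field types to Spark SQL
# column types; only the types used in this example are covered.
ES_TO_SPARK = {"text": "string", "keyword": "string"}


def create_table_ddl(table, es_properties, column_order):
    """Build a DLI CREATE TABLE statement from an Elasticsearch mapping."""
    cols = []
    for field in column_order:
        es_type = es_properties[field]["type"]
        # Column names are lowercased, as in the statement above.
        cols.append(f"{field.lower()} {ES_TO_SPARK[es_type]}")
    return f"create table {table}({', '.join(cols)});"


mapping = {
    "productName": {"type": "text", "analyzer": "ik_smart"},
    "size": {"type": "keyword"},
}
print(create_table_ddl("tablecss", mapping, ["size", "productName"]))
# create table tablecss(size string, productname string);
```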


Step 2: Migrate Data

- Create CDM links to CSS and DLI.

- Create a connection to link CDM to the data source CSS.

- Log in to the CDM console and choose Cluster Management. On the displayed page, locate the created CDM cluster and click Job Management in the Operation column.

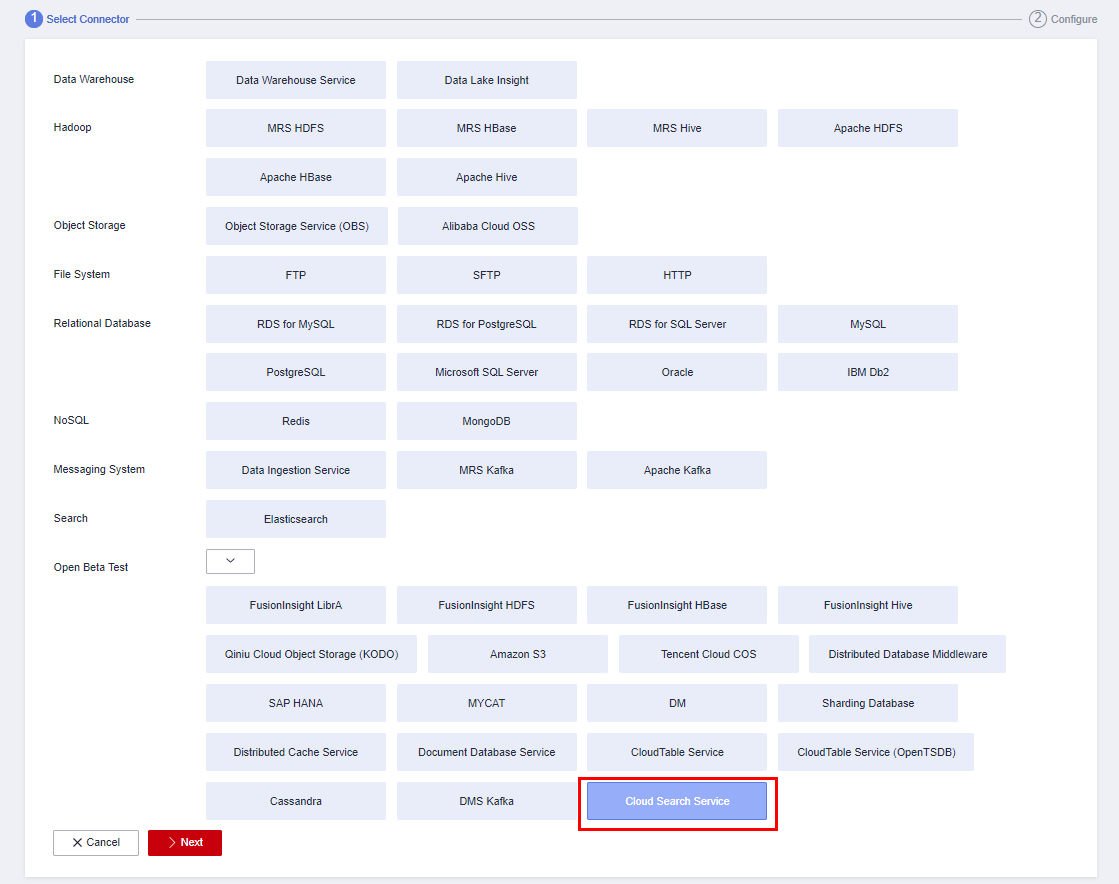

- On the Job Management page, click the Links tab, and click Create Link. On the displayed page, select Cloud Search Service and click Next.

Figure 1 Selecting the CSS connector

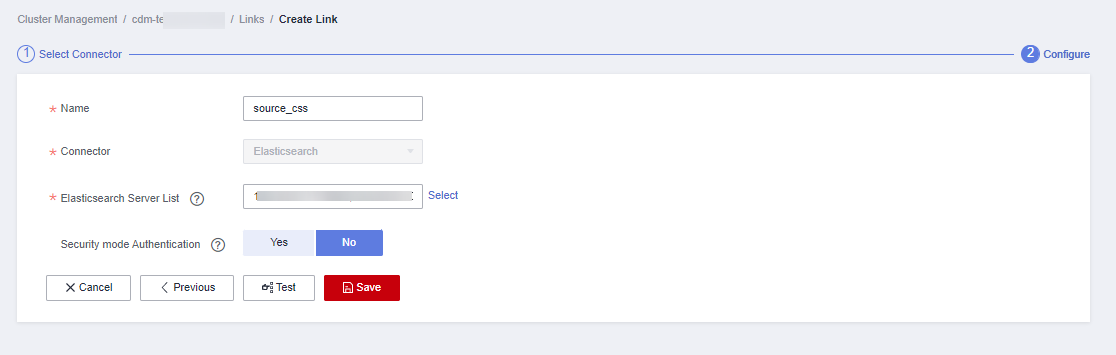

- Configure the connection. The following table describes the required parameters. For details about parameter settings, see Link to Elasticsearch/CSS.

Table 1 CSS data source configuration

| Parameter | Value |
| --- | --- |
| Name | Name of the CSS data source, for example, source_css. |
| Elasticsearch Server List | Click Select next to the text box and select the CSS cluster. The Elasticsearch server list is automatically displayed. |
| Security mode Authentication | If you have enabled the security mode for the CSS cluster, set this parameter to Yes. Otherwise, set this parameter to No. In this example, set this parameter to No. |

Figure 2 Configuring the CSS connection

- Click Save to complete the configuration.

- Create a connection to link CDM to DLI.

- Log in to the CDM console and choose Cluster Management. On the displayed page, locate the created CDM cluster and click Job Management in the Operation column.

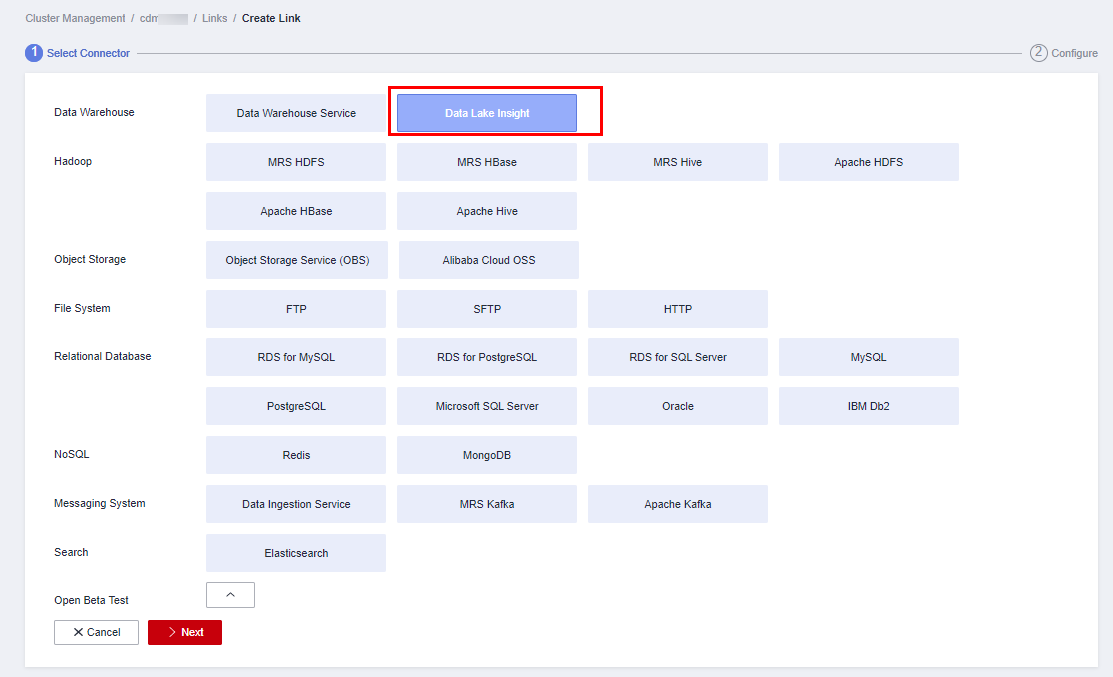

- On the Job Management page, click the Links tab, and click Create Link. On the displayed page, select Data Lake Insight and click Next.

Figure 3 Selecting the DLI connector

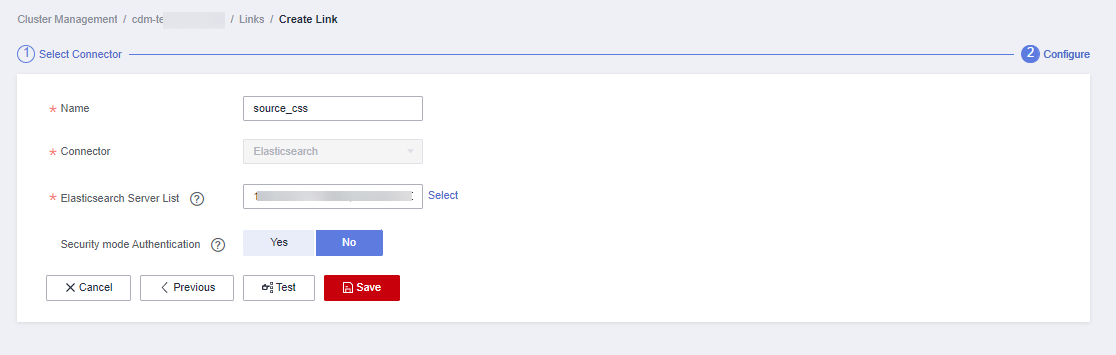

- Configure the connection parameters. For details about parameter settings, see Link to DLI.

Figure 4 Configuring connection parameters

- After the configuration is complete, click Save.


- Create a CDM migration job.

- Log in to the CDM console and choose Cluster Management. On the displayed page, locate the created CDM cluster and click Job Management in the Operation column.

- On the Job Management page, choose the Table/File Migration tab and click Create Job.

- On the Create Job page, specify job information.

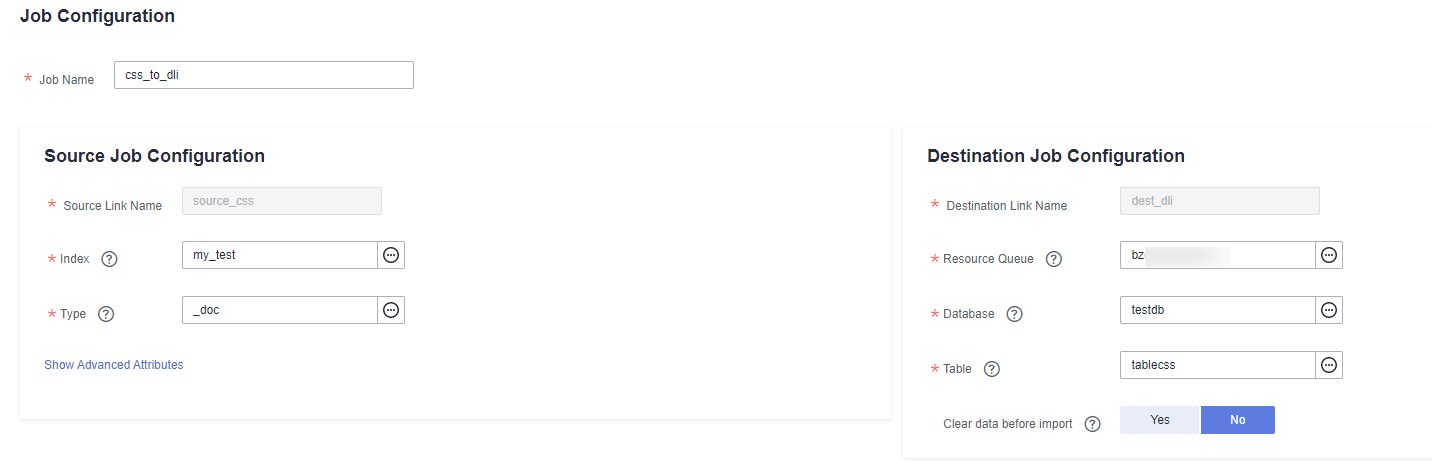

Figure 5 Configuring the CDM job

- Job Name: Name of the data migration job, for example, css_to_dli.

- Set parameters required for Source Job Configuration.

Table 2 Source job configuration parameters

| Parameter | Value |
| --- | --- |
| Source Link Name | Select the CSS link created in 1.a, for example, source_css. |
| Index | Select the Elasticsearch index created for the CSS cluster. In this example, the my_test index created in Create an index for the CSS cluster and import data is used. The index can contain only lowercase letters. |
| Type | Elasticsearch type, which is similar to a table name in a relational database. The type name can contain only lowercase letters. Example: _doc. |

For details about other parameters, see From Elasticsearch or CSS.

- Set parameters required for Destination Job Configuration.

Table 3 Destination job configuration parameters

| Parameter | Value |
| --- | --- |
| Destination Link Name | Select the DLI link created in 1.b. |
| Resource Queue | Select a created DLI SQL queue. |
| Database | Select a created DLI database. In this example, database testdb created in Create a database and table on DLI is selected. |
| Table | Select the name of a table in the database. In this example, table tablecss created in Create a database and table on DLI is selected. |
| Clear data before import | Whether to clear data in the destination table before data import. In this example, set this parameter to No. If this parameter is set to Yes, data in the destination table is cleared before the task starts. |

For details about parameter settings, see To DLI.

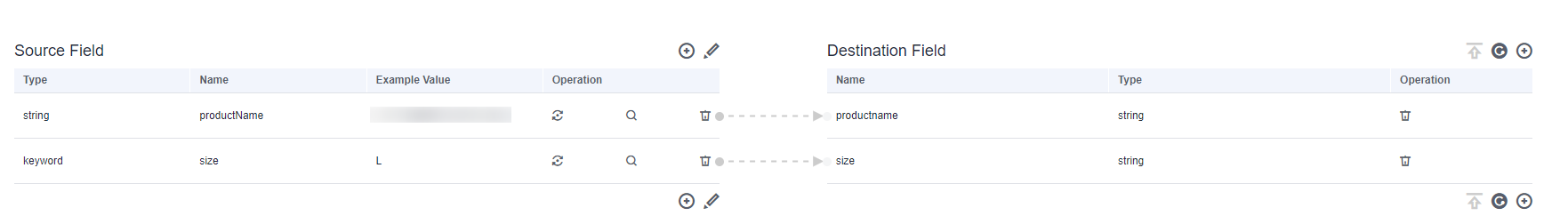

- Click Next. The Map Field page is displayed. CDM automatically matches the source and destination fields.

- If the field mapping is incorrect, you can drag the fields to adjust the mapping.

- If the type is automatically created at the migration destination, you need to configure the type and name of each field.

- CDM allows for field conversion during migration. For details, see Field Conversion.

Figure 6 Field mapping
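CDM performs the field matching itself, and its exact rules are not described here. As a hypothetical illustration only, the sketch below pairs source fields with destination columns by case-insensitive name. Under that assumption, productName pairs with productname even though tablecss declares size first. The functions match_fields and to_row are not part of CDM.

```python
def match_fields(source_fields, dest_columns):
    """Pair destination columns with same-named source fields (case-insensitive).

    Returns (mapping, unmatched): mapping maps each destination column to its
    source field; unmatched lists source fields with no same-named column.
    """
    # Index source field names by their lowercase form.
    by_lower = {f.lower(): f for f in source_fields}
    mapping = {
        col: by_lower[col.lower()]
        for col in dest_columns
        if col.lower() in by_lower
    }
    matched = set(mapping.values())
    unmatched = [f for f in source_fields if f not in matched]
    return mapping, unmatched


def to_row(doc, mapping, dest_columns):
    """Order a document's values to follow the destination column order."""
    # Unmapped columns, and fields missing from the document, become None.
    return [doc.get(mapping[col]) if col in mapping else None for col in dest_columns]


dest = ["size", "productname"]
mapping, unmatched = match_fields(["productName", "size"], dest)
print(to_row({"productName": "2018 Spring New Jeans for Women", "size": "M"}, mapping, dest))
# ['M', '2018 Spring New Jeans for Women']
```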

- Click Next and set task parameters. Generally, retain the default values of all parameters.

In this step, you can configure the following optional functions:

- Retry Upon Failure: Whether to automatically retry the job if it fails. Retain the default value Never.

- Group: Select the group to which the job belongs. The default group is DEFAULT. On the Job Management page, jobs can be displayed, started, or exported by group.

- Scheduled Execution: For details about how to configure scheduled execution, see Scheduling Job Execution. Retain the default value No.

- Concurrent Extractors: Enter the number of extractors to be concurrently executed. Retain the default value 1.

- Write Dirty Data: Specify this parameter if data that fails to be processed or filtered out during job execution needs to be written to OBS. Before writing dirty data, create an OBS link. You can view the data on OBS later. Retain the default value No so that dirty data is not recorded.

- Click Save and Run. On the Job Management page, you can view the job execution progress and result.

Figure 7 Job progress and execution result

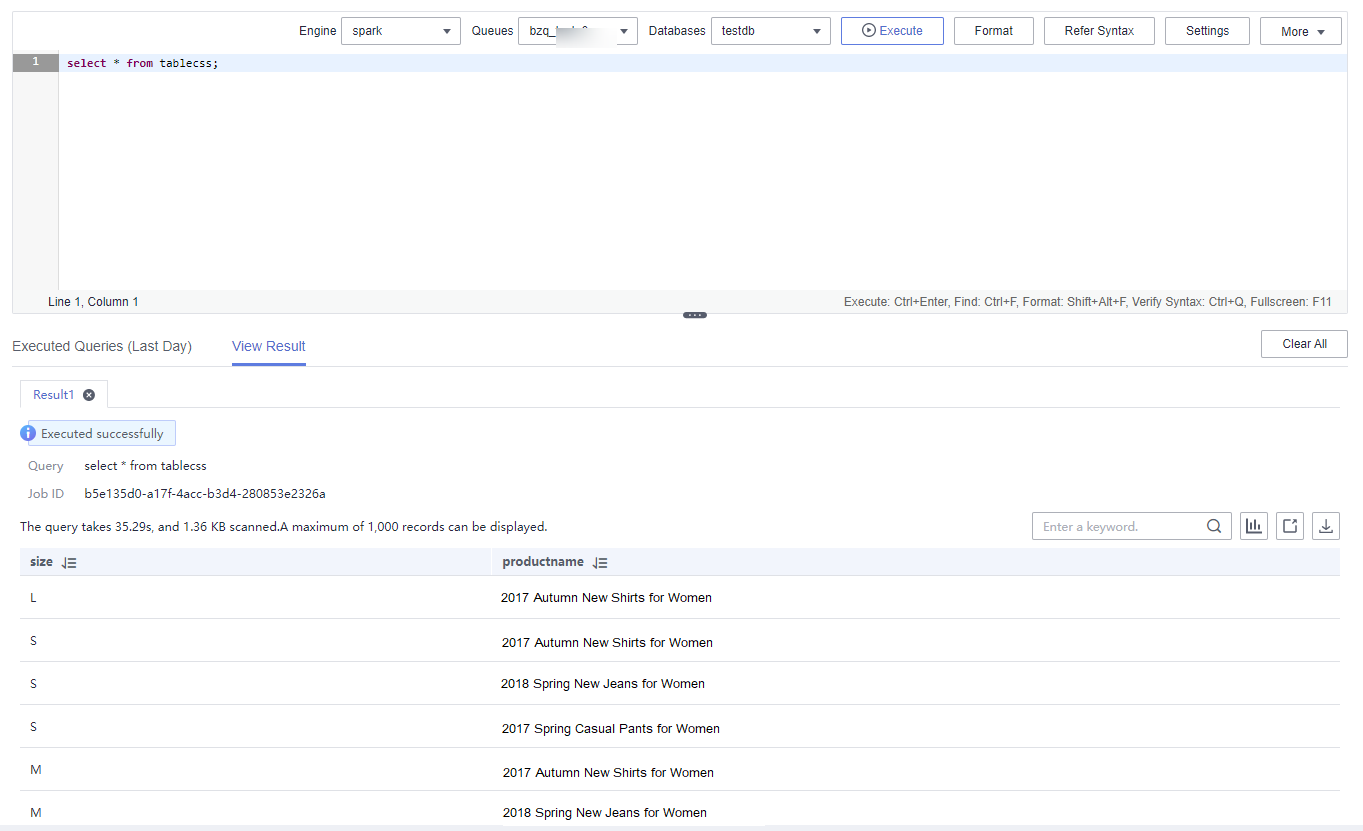

Step 3: Query Results

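After the job is complete, run the following statement in the DLI SQL editor to query the migrated data:

select * from tablecss;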
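If tablecss was empty before the job ran, the query should return the seven documents imported in Step 1. To cross-check the result, you can compare the output of select size, count(*) from tablecss group by size with counts computed from the source documents, as in this small Python sketch. The document list is copied from the bulk import command in Step 1.

```python
from collections import Counter

# The seven documents imported into my_test in Step 1, as (productName, size).
docs = [
    ("2017 Autumn New Shirts for Women", "L"),
    ("2017 Autumn New Shirts for Women", "M"),
    ("2017 Autumn New Shirts for Women", "S"),
    ("2018 Spring New Jeans for Women", "M"),
    ("2018 Spring New Jeans for Women", "S"),
    ("2017 Spring Casual Pants for Women", "L"),
    ("2017 Spring Casual Pants for Women", "S"),
]

# Expected number of rows per size in tablecss.
sizes = Counter(size for _, size in docs)
print(len(docs))              # 7
print(sorted(sizes.items()))  # [('L', 2), ('M', 2), ('S', 3)]
```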
