Using a Large Model to Create a Model on ModelArts Standard and Deploy It as a Real-Time Service

Context

Large models now have hundreds of billions or even trillions of parameters, and they keep growing. A model with hundreds of billions of parameters can exceed 200 GB, which places new demands on the platform's version management and production deployment. For example, importing such a model requires the tenant storage quota to be adjusted dynamically. Slow model loading and startup require a flexible timeout configuration in the deployment. And when a load exception causes a restart and the model must be reloaded, the service recovery time needs to be shortened.

To address these requirements, the ModelArts inference platform provides a solution for model management and service deployment in large model scenarios.

Constraints

- You need to apply for a larger model size quota and to be added to the whitelist for caching models in the local storage of the node.

- You must use the custom engine (Custom) to configure dynamic loading.

- A dedicated resource pool is required to deploy the service.

- The disk space of the dedicated resource pool must be greater than 1 TB.

Procedure

Applying for a Larger Model Size Quota and for the Whitelist for Caching in the Local Storage of the Node

During service deployment, the dynamically loaded model package is stored in the temporary disk space by default. When the service is stopped, the loaded files are deleted, and they need to be reloaded when the service is restarted. To avoid repeated loading, the platform allows the model package to be loaded from the local storage space of the node in the resource pool and keeps the loaded files valid even when the service is stopped or restarted (using the hash value to ensure data consistency).

To use a large model, you need to use a custom engine and enable dynamic loading when importing the model. Before doing so, complete the following operations:

- If the model size exceeds the default quota, submit a service ticket to increase the size quota of a single model. The default size quota of a model is 20 GB.

- Submit a service ticket to be added to the whitelist for caching models in the local storage of the node.
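
Before submitting the first ticket, it can help to measure the model package locally. The following is a minimal sketch, not a ModelArts tool: the function names are hypothetical, and it assumes that the 20 GB default quota is interpreted as 20 GiB and that the quota applies to the total size of all files in the model directory.

```python
from pathlib import Path

# Assumption: "20 GB" is read as 20 GiB; adjust if the platform counts decimal GB.
DEFAULT_MODEL_QUOTA_BYTES = 20 * 1024**3


def model_dir_size(model_dir: str) -> int:
    """Return the total size in bytes of all regular files under model_dir."""
    return sum(p.stat().st_size for p in Path(model_dir).rglob("*") if p.is_file())


def needs_quota_increase(size_bytes: int,
                         quota_bytes: int = DEFAULT_MODEL_QUOTA_BYTES) -> bool:
    """Return True if the model package is larger than the single-model quota."""
    return size_bytes > quota_bytes
```

For example, `needs_quota_increase(model_dir_size("./my_model"))` returns `True` when the hypothetical `./my_model` directory is larger than the default quota, which indicates that a service ticket is needed.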

Uploading Model Data and Verifying the Consistency of Uploaded Objects

To ensure data integrity during dynamic loading, you need to verify the consistency of uploaded objects when uploading model data to OBS. obsutil, OBS Browser+, and OBS SDKs support verification of data consistency during upload. You can select a method that meets your requirements. For details, see Verifying Data Consistency During Upload.
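
The upload tools compute the checksum themselves, but you may want to calculate it independently to compare against what is stored. The following minimal sketch computes a Content-MD5 style value (the Base64 encoding of the 128-bit MD5 digest, as defined in RFC 1864) for a local file. The function name is hypothetical, and whether your chosen tool reports the checksum in this exact form is an assumption to confirm in its documentation.

```python
import base64
import hashlib


def content_md5(path: str, chunk_size: int = 8 * 1024 * 1024) -> str:
    """Return the Base64-encoded MD5 digest of a file.

    The file is read in chunks so that multi-gigabyte model files
    do not have to fit in memory.
    """
    digest = hashlib.md5()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return base64.b64encode(digest.digest()).decode("ascii")
```

Reading in chunks keeps memory use constant regardless of file size, which matters for weight files of tens of gigabytes.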

For example, if you upload data via OBS Browser+, enable MD5 verification, as shown in Figure 1. When dynamic loading is enabled and the local persistent storage of the node is used, OBS Browser+ checks data consistency during data upload.

Creating a Dedicated Resource Pool

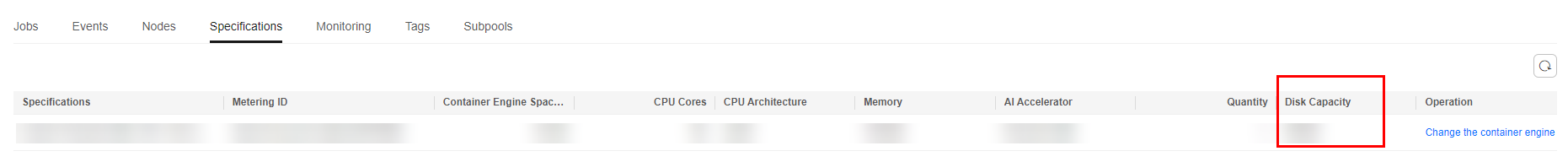

To use the local persistent storage, you need to create a dedicated resource pool whose disk space is greater than 1 TB. You can view the disk information on the Specifications tab of the Basic Information page of the dedicated resource pool. If a service fails to be deployed and the system displays a message indicating that the disk space is insufficient, see What Do I Do If Resources Are Insufficient When a Real-Time Service Is Deployed, Started, Upgraded, or Modified.

Creating a Model

If you use a large model to create a model and import the model from OBS, complete the following configurations:

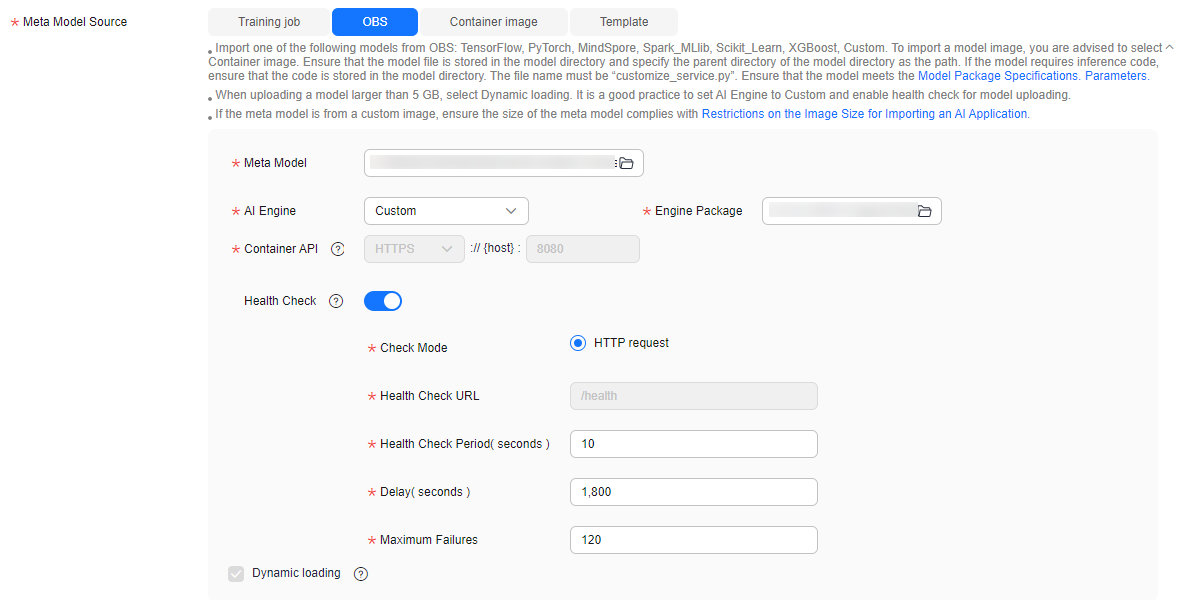

- Use a custom engine and enable dynamic loading.

To use a large model, you need to use a custom engine and enable dynamic loading when importing the model. You can create a custom engine to meet special requirements for image dependency packages and inference frameworks in large model scenarios. For details about how to create a custom engine, see Creating a Model Using a Custom Engine.

When you use a custom engine, dynamic loading is enabled by default. The model package is kept separate from the image, and the model is dynamically loaded into the service workload during service deployment.

- Configure health check.

A health check is mandatory for models created from a large model. It identifies services that are displayed as started but are actually unavailable.
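
On the image side, the health check needs an endpoint that reports healthy only after the model has finished loading. The following is a minimal sketch using only the Python standard library. The `/health` path, port 8080, and the `model_ready` flag are assumptions for illustration; the path and port must match what you configure for the model, and a real inference server would normally expose this endpoint from its own web framework.

```python
import json
import threading
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

# Set this event once the model weights are fully loaded.
# Until then, the health endpoint reports the service as not ready.
model_ready = threading.Event()


class HealthHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        # Hypothetical path; it must match the configured health check URL.
        if self.path != "/health":
            self.send_error(404)
            return
        ready = model_ready.is_set()
        body = json.dumps({"status": "ok" if ready else "loading"}).encode()
        self.send_response(200 if ready else 503)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep frequent probe requests out of the service log


def make_server(port: int = 8080) -> ThreadingHTTPServer:
    """Create the HTTP server; call serve_forever() on the result to run it."""
    return ThreadingHTTPServer(("0.0.0.0", port), HealthHandler)
```

Returning 503 while the model is still loading is what lets the platform tell a started container apart from a service that can actually answer prediction requests.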

Figure 3 Using a custom engine, enabling dynamic loading, and configuring health check

Deploying a Real-Time Service

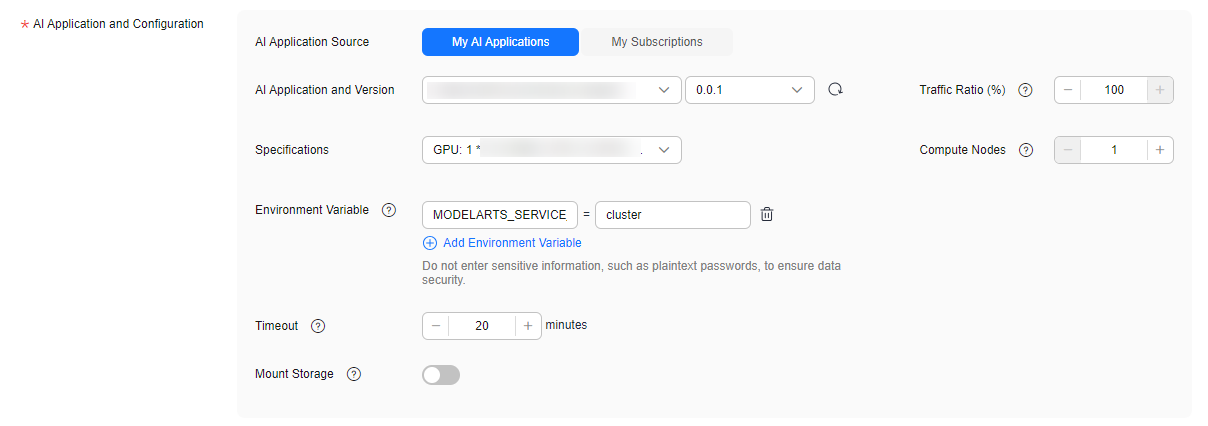

When deploying the service, complete the following configurations:

- Customize the deployment timeout interval.

Loading and starting a large model generally takes longer than for a common model. Set Timeout to a value long enough to cover model loading and startup. Otherwise, the timeout may elapse before the model finishes starting, and the deployment may fail.

- Add an environment variable.

During service deployment, add the following environment variable to set the service traffic load balancing policy to cluster affinity, preventing unready service instances from affecting the prediction success rate:

MODELARTS_SERVICE_TRAFFIC_POLICY: cluster

Figure 4 Customizing the deployment timeout interval and adding an environment variable

You are advised to deploy multiple instances to improve service reliability.
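
The two deployment settings above can be reasoned about with a small helper. The following is a rough sketch under stated assumptions, not a ModelArts API: the function names, the load throughput, the startup allowance, and the safety factor are all hypothetical, and it assumes that Timeout is entered in minutes. Only the environment variable name and value come from this page.

```python
import math

# Environment variable from this page: cluster-affinity traffic policy.
TRAFFIC_POLICY_ENV = {"MODELARTS_SERVICE_TRAFFIC_POLICY": "cluster"}


def estimate_timeout_minutes(model_size_gb: float,
                             load_throughput_gb_per_min: float,
                             startup_minutes: float = 5.0,
                             safety_factor: float = 1.5) -> int:
    """Estimate a deployment timeout from model size and measured load speed.

    All parameters are assumptions to be replaced with your own measurements.
    """
    if load_throughput_gb_per_min <= 0:
        raise ValueError("load_throughput_gb_per_min must be positive")
    load_minutes = model_size_gb / load_throughput_gb_per_min
    return math.ceil((load_minutes + startup_minutes) * safety_factor)


def deployment_envs(existing=None) -> dict:
    """Return a copy of the environment variables with the traffic policy added."""
    envs = dict(existing or {})
    envs.update(TRAFFIC_POLICY_ENV)
    return envs
```

For example, a 200 GB model loaded at an assumed 10 GB per minute, with 5 minutes of startup and a 1.5 safety factor, gives `estimate_timeout_minutes(200, 10)` = 38 minutes. Measure the real load time in a first deployment and adjust the value accordingly.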
