Creating a Custom Image and Using It to Create a Model

If you want to use an AI engine that is not supported by ModelArts, create a custom image for the engine, import the image to ModelArts, and use the image to create models. This section describes how to use a custom image to create a model and deploy it as a real-time service.

The procedure is as follows:

- Building an Image Locally: Create a custom image package locally. For details, see Specifications for Custom Images.

- Verifying the Image Locally and Uploading It to SWR: Verify the APIs of the custom image and upload the custom image to SWR.

- Creating a Model Using a Custom Image: Import the image to ModelArts model management.

- Deploying the Model as a Real-Time Service: Deploy the imported model.

Building an Image Locally

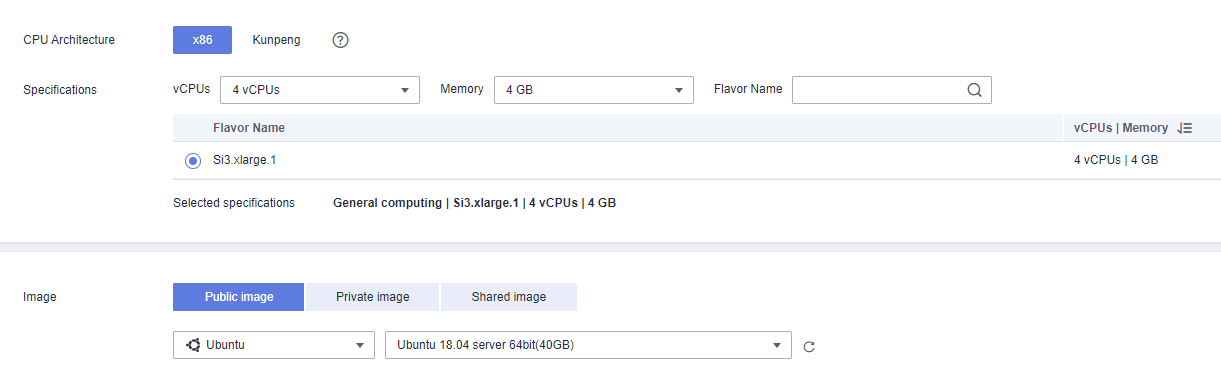

This section uses a Linux x86_64 host as an example. You can purchase an ECS with the same specifications or use an existing local host to create the custom image.

- After logging in to the host, install Docker. For details, see the official Docker documentation. Alternatively, run the following commands to install Docker:

```
curl -fsSL get.docker.com -o get-docker.sh
sh get-docker.sh
```

- Obtain the base image. Ubuntu 18.04 is used in this example.

```
docker pull ubuntu:18.04
```

- Create the self-define-images folder, and in it write the Dockerfile and test_app.py files for the custom image. In the sample code, the application runs on the Flask framework.

The file structure is as follows:

```
self-define-images/
    --Dockerfile
    --test_app.py
```

- Dockerfile

```
FROM ubuntu:18.04

# Configure the Huawei Cloud source and install Python, Python3-PIP, and Flask.
RUN cp -a /etc/apt/sources.list /etc/apt/sources.list.bak && \
    sed -i "s@http://.*security.ubuntu.com@http://repo.huaweicloud.com@g" /etc/apt/sources.list && \
    sed -i "s@http://.*archive.ubuntu.com@http://repo.huaweicloud.com@g" /etc/apt/sources.list && \
    apt-get update && \
    apt-get install -y python3 python3-pip && \
    pip3 install --trusted-host repo.huaweicloud.com -i https://repo.huaweicloud.com/repository/pypi/simple Flask

# Copy the application code to the image.
COPY test_app.py /opt/test_app.py

# Specify the boot command of the image.
CMD python3 /opt/test_app.py
```

- test_app.py

```python
from flask import Flask, request
import json

app = Flask(__name__)


@app.route('/greet', methods=['POST'])
def say_hello_func():
    print("----------- in hello func ----------")
    data = json.loads(request.get_data(as_text=True))
    print(data)
    username = data['name']
    rsp_msg = 'Hello, {}!'.format(username)
    return json.dumps({"response": rsp_msg}, indent=4)


@app.route('/goodbye', methods=['GET'])
def say_goodbye_func():
    print("----------- in goodbye func ----------")
    return '\nGoodbye!\n'


@app.route('/', methods=['POST'])
def default_func():
    print("----------- in default func ----------")
    data = json.loads(request.get_data(as_text=True))
    return '\n called default func !\n {} \n'.format(str(data))


# host must be "0.0.0.0", port must be 8080
if __name__ == '__main__':
    app.run(host="0.0.0.0", port=8080)
```


- Switch to the self-define-images folder and run the following command to build the custom image test:v1:

```
docker build -t test:v1 .
```

- Run docker images to view the custom image you have created.
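If you prefer to script the build and check steps, the following is a minimal Python sketch that wraps the same docker build and docker images commands with subprocess. It assumes the Docker CLI is on PATH and that the script runs from the directory that contains self-define-images; the helper names (build_command, has_image, build_and_check) are illustrative and are not part of ModelArts or Docker.

```python
import subprocess
from pathlib import Path

# Assumptions: the Docker CLI is on PATH, and the build context is the
# self-define-images folder created above. Helper names are illustrative.
IMAGE_REF = "test:v1"
BUILD_DIR = Path("self-define-images")


def build_command(image_ref: str) -> list[str]:
    """argv equivalent to `docker build -t <image_ref> .`."""
    return ["docker", "build", "-t", image_ref, "."]


def list_command() -> list[str]:
    """argv that prints one repository:tag line per local image."""
    return ["docker", "images", "--format", "{{.Repository}}:{{.Tag}}"]


def has_image(listing: str, image_ref: str) -> bool:
    """Return True if image_ref is one of the lines in the listing output."""
    return image_ref in {line.strip() for line in listing.splitlines()}


def build_and_check(build_dir: Path = BUILD_DIR, image_ref: str = IMAGE_REF) -> bool:
    """Build the image in build_dir, then confirm it shows up in `docker images`."""
    # check=True raises subprocess.CalledProcessError if a command fails.
    subprocess.run(build_command(image_ref), cwd=build_dir, check=True)
    result = subprocess.run(list_command(), capture_output=True, text=True, check=True)
    return has_image(result.stdout, image_ref)
```

Calling build_and_check() returns True when test:v1 is listed after the build; a failed build raises subprocess.CalledProcessError instead of returning False.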

Verifying the Image Locally and Uploading It to SWR

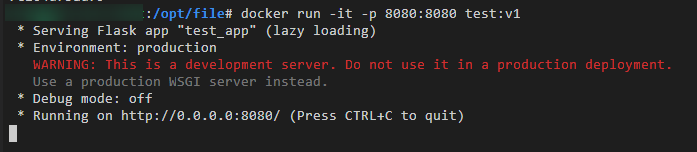

- Run the following command in the local environment to start the custom image:

```
docker run -it -p 8080:8080 test:v1
```

Figure 2 Starting a custom image

- Open another terminal and run the following commands to test the functions of the three APIs of the custom image:

```
curl -X POST -H "Content-Type: application/json" --data '{"name":"Tom"}' 127.0.0.1:8080/
curl -X POST -H "Content-Type: application/json" --data '{"name":"Tom"}' 127.0.0.1:8080/greet
curl -X GET 127.0.0.1:8080/goodbye
```

If information similar to the following is displayed, the function verification is successful.

Figure 3 Testing API functions

- Upload the custom image to SWR.

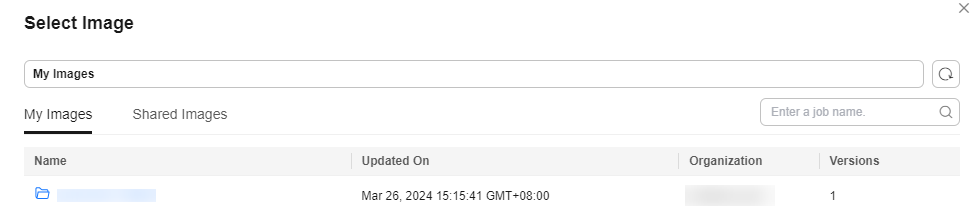

- After the custom image is uploaded, view the uploaded image on the My Images > Private Images page of the SWR console.
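The three curl checks in this section can also be scripted with the Python standard library, which is handy if you want to rerun them after every rebuild before pushing to SWR. This is a minimal sketch, assuming the container started with docker run above is still running on the same host with port 8080 published; build_request, call, and smoke_test are illustrative helper names.

```python
import json
import urllib.request
from typing import Dict, Optional

# Assumption: the container started with `docker run -it -p 8080:8080 test:v1`
# is running on this host.
BASE_URL = "http://127.0.0.1:8080"


def build_request(base_url: str, path: str, method: str,
                  payload: Optional[dict] = None) -> urllib.request.Request:
    """Build a request equivalent to one of the curl commands above."""
    data = None
    headers = {}
    if payload is not None:
        # Same body and header as curl --data ... -H "Content-Type: application/json".
        data = json.dumps(payload).encode("utf-8")
        headers["Content-Type"] = "application/json"
    return urllib.request.Request(base_url + path, data=data, headers=headers, method=method)


def call(req: urllib.request.Request, timeout: float = 5.0) -> str:
    """Send the request and return the response body as text."""
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return resp.read().decode("utf-8")


def smoke_test(base_url: str = BASE_URL) -> Dict[str, str]:
    """Call /, /greet, and /goodbye and return each response body by path."""
    requests_by_path = {
        "/": build_request(base_url, "/", "POST", {"name": "Tom"}),
        "/greet": build_request(base_url, "/greet", "POST", {"name": "Tom"}),
        "/goodbye": build_request(base_url, "/goodbye", "GET"),
    }
    return {path: call(req) for path, req in requests_by_path.items()}
```

urlopen raises urllib.error.HTTPError for non-2xx responses, so an API that fails inside the container surfaces as an exception from smoke_test().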

Creating a Model Using a Custom Image

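Import the image to ModelArts model management to create a model. Configure the following key parameters:

- Meta Model Source: Select Container image.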

- Container Image Path: Select the created private image.

Figure 4 Created private image

- Container API: the protocol and port number used to start the model. They must match those provided in the custom image. In this example, test_app.py listens on HTTP port 8080.

- Image Replication: indicates whether to copy the model image in the container image to ModelArts. This parameter is optional.

- Health Check: checks the health status of the model. This parameter is optional. Enable it only if the custom image provides a health check API; otherwise, model creation fails. The sample test_app.py does not implement a health check API.


- APIs: APIs of a custom image. This parameter is optional. The model APIs must comply with ModelArts specifications. For details, see Specifications for Editing a Model Configuration File.

For the sample image, the API configuration is as follows:

```json
[
    {
        "url": "/",
        "method": "post",
        "request": {
            "Content-type": "application/json"
        },
        "response": {
            "Content-type": "application/json"
        }
    },
    {
        "url": "/greet",
        "method": "post",
        "request": {
            "Content-type": "application/json"
        },
        "response": {
            "Content-type": "application/json"
        }
    },
    {
        "url": "/goodbye",
        "method": "get",
        "request": {
            "Content-type": "application/json"
        },
        "response": {
            "Content-type": "application/json"
        }
    }
]
```
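Because the declared APIs should correspond to the routes that test_app.py registers, a quick local consistency check can catch a mistyped path or method before the model is created. The sketch below is a hypothetical helper, not a ModelArts validator: it compares only (url, method) pairs and does not check the rest of the ModelArts configuration format. The configuration string is abbreviated to the two fields it reads; you can paste the full configuration instead.

```python
import json

# The API configuration above, abbreviated to the fields this check reads.
API_CONFIG = """
[
  {"url": "/", "method": "post"},
  {"url": "/greet", "method": "post"},
  {"url": "/goodbye", "method": "get"}
]
"""

# Routes registered in test_app.py as (path, HTTP method) pairs.
APP_ROUTES = {("/", "POST"), ("/greet", "POST"), ("/goodbye", "GET")}


def config_routes(config_text: str) -> set:
    """Return the (url, METHOD) pairs declared in an API configuration list."""
    return {(entry["url"], entry["method"].upper()) for entry in json.loads(config_text)}


def route_mismatches(config_text: str, app_routes: set) -> dict:
    """Compare declared APIs with the routes the application actually serves."""
    declared = config_routes(config_text)
    return {
        "declared_but_not_served": declared - app_routes,
        "served_but_not_declared": app_routes - declared,
    }
```

Both sets being empty means every declared API has a matching Flask route and vice versa.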

Deploying the Model as a Real-Time Service

- Deploy the model as a real-time service. For details, see Deploying a Model as a Real-Time Service.

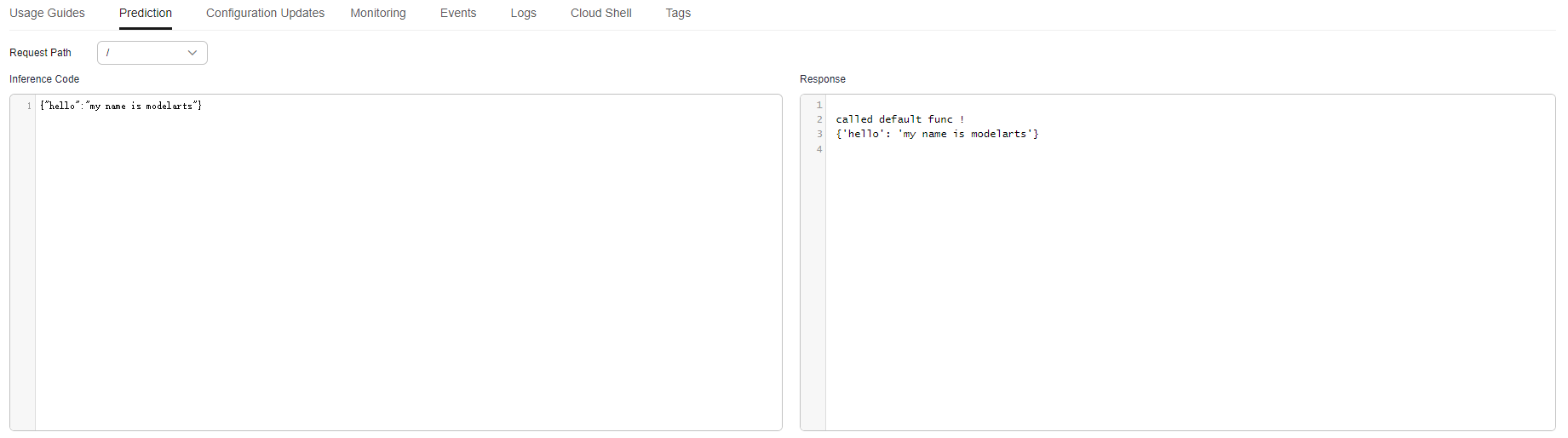

- View the details about the real-time service.

- Access the real-time service in the Prediction tab.

Figure 5 Accessing the real-time service
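Besides the Prediction tab, the deployed service can be called programmatically. The following is a minimal sketch under several assumptions that you should confirm against your service details: that the service uses token-based authentication through the X-Auth-Token header, that the custom API path (/greet) is appended to the service's API address, and that SERVICE_URL and TOKEN are placeholders you replace with your own values. The helper names are illustrative.

```python
import json
import urllib.request

# Hypothetical placeholders: use the API address shown on your real-time
# service's details page and a valid IAM token.
SERVICE_URL = "https://<your-service-api-address>"
TOKEN = "<your-iam-token>"


def build_greet_request(service_url: str, token: str, name: str) -> urllib.request.Request:
    """POST {"name": ...} to the /greet API of the deployed model."""
    body = json.dumps({"name": name}).encode("utf-8")
    headers = {
        "Content-Type": "application/json",
        # Assumption: token-based authentication via the X-Auth-Token header.
        "X-Auth-Token": token,
    }
    # Assumption: the custom API path is appended to the service address.
    url = service_url.rstrip("/") + "/greet"
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


def greet(service_url: str, token: str, name: str, timeout: float = 10.0) -> dict:
    """Send the request and decode the JSON body returned by say_hello_func."""
    req = build_greet_request(service_url, token, name)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

If the deployment behaves like the local container, greet(SERVICE_URL, TOKEN, "Tom") should return a dictionary such as {"response": "Hello, Tom!"}.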
