Managing JMeter Test Reports

Test Report Description

Real-time and offline JMeter test reports allow you to view and analyze test data at any time.

For details about the JMeter test report, see Table 1.

This report shows the response performance of the tested system in a scenario with a large number of concurrent users. To understand the report, see the following information:

- Statistical dimension: In this report, RPS, response time, and concurrency are measured for a single thread group. If a request consists of multiple packets, the request is considered successful only when every packet receives a response. The response time of the request is the sum of the response times of its packets.

- Response timeout: If the corresponding TCP connection does not return the response data within the set response timeout (customized in *.jmx files), the thread group request is counted as a response timeout. Possible causes include: the tested server is busy or crashes, or the network bandwidth is fully occupied.

- Verification failure: The response packet content or response code returned from the server does not meet the expectation (the default expected response code of HTTP/HTTPS is 200), for example, the server returns code 404 or 502. A possible cause is that the tested service cannot process requests normally under a large number of concurrent users. For example, a database bottleneck exists in the distributed system or the backend application returns an error.

- Parsing failure: Response packets are received, but some of them are lost, so the response for the entire case is incomplete. This may be caused by network packet loss.

- Bandwidth: This report collects statistics on the bandwidth of the execution end of CodeArts PerfTest. The uplink indicates the traffic sent from CodeArts PerfTest, and the downlink indicates the received traffic. In the external pressure test scenario, you need to check whether the EIP bandwidth of the executor meets the uplink bandwidth requirement and whether the bandwidth exceeds the downlink bandwidth of 1 GB.

- Requests per second (RPS): indicates the number of requests per second. Average RPS = Total number of requests in a statistical period/Statistical period.

- How to determine the quality of tested applications: According to the service quality definition of an application, the optimal status is that there are no response timeouts or verification failures. If any occur, their proportion must stay within the defined service quality range, which generally does not exceed 1%. The shorter the response time, the better. User experience is considered good if the response time is within 2s, acceptable if it is within 5s, and in need of optimization if it is over 5s. TP90 and TP99 objectively reflect the response time experienced by 90% and 99% of users.
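The average RPS formula and the quality thresholds in the list above can be sketched in a few lines of Python. This is only an illustration of the arithmetic: the function names and the default 1% failure limit are assumptions made for the example and are not part of CodeArts PerfTest.

```python
def average_rps(total_requests: int, period_seconds: float) -> float:
    """Average RPS = total requests in the statistical period / period length."""
    if period_seconds <= 0:
        raise ValueError("period_seconds must be positive")
    return total_requests / period_seconds


def rate_response_time(seconds: float) -> str:
    """Map a response time to the experience bands described above."""
    if seconds <= 2:
        return "good"
    if seconds <= 5:
        return "acceptable"
    return "needs optimization"


def failure_rate_ok(failed: int, total: int, limit: float = 0.01) -> bool:
    """Check that the failure proportion stays within the limit (assumed 1%)."""
    return total > 0 and failed / total <= limit


if __name__ == "__main__":
    print(average_rps(1200, 60))        # 1200 requests over a 60 s period
    print(rate_response_time(3.2))      # falls in the 2s to 5s band
    print(failure_rate_ok(5, 1000))     # 0.5% failures against a 1% limit
```

Replace the 1% default with the limit from your own service quality definition.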

| Parameter | Description |
|---|---|
| Total Number of Metrics | Total number of metrics in all thread groups. |
| Average RPS | Total number of requests in a statistical period / Statistical period |
| Average RT | Changes in the average response time of a pressure test task |
| Concurrent Users | Changes in the number of concurrent virtual users during testing |
| Bandwidth (kbit/s) | Real-time bandwidth usage while a pressure test task is running. |
| Response distribution statistics | Number of transactions processed per second for normal return, abnormal return, parsing failure, verification failure, response timeout, connection rejection, and other errors. This metric is related to the think time, number of concurrent users, and server response capability. For example, if the think time is 500 ms and the response time of the last request for the current user is less than 500 ms, the user sends two requests per second. |
| Response Code statistics | 1XX/2XX/3XX/4XX/5XX |
| Response Time Ratio | Ratio of response time of cases. |
| Maximum Response Time of TP (ms) | To calculate the top percentile XX (TPXX) for a request, collect all the response time values for the request over a time period (such as 10s) and sort them in ascending order. Remove the top (100 - XX)% from the list. The highest value left is the value of TPXX. |
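The TPXX calculation described in the table can be sketched as follows. The table does not say how the count of removed samples is rounded, so this example assumes it is rounded down. The function name `tp` and the sample values are made up for illustration.

```python
def tp(response_times_ms: list[float], xx: int) -> float:
    """Return TPXX: sort ascending, drop the top (100 - XX)%, take the highest value left.

    Assumption: the number of dropped samples is rounded down, so at least
    one sample always remains.
    """
    if not response_times_ms:
        raise ValueError("at least one response time is required")
    if not 0 < xx <= 100:
        raise ValueError("xx must be in the range 1..100")
    ordered = sorted(response_times_ms)
    drop = len(ordered) * (100 - xx) // 100   # samples removed from the top
    return ordered[len(ordered) - drop - 1]   # highest value left


if __name__ == "__main__":
    # Hypothetical response times (ms) collected over one statistical period.
    samples = [120, 80, 95, 300, 110, 105, 90, 100, 2500, 115]
    print("TP50:", tp(samples, 50))
    print("TP90:", tp(samples, 90))
    print("TP99:", tp(samples, 99))
```

With only ten samples, TP99 removes nothing and equals the maximum. High percentiles need a large number of requests in the period to be meaningful.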

Viewing a Real-Time Test Report

View the monitoring data of each metric during a pressure test through the real-time test report.

Prerequisites

The test task is being executed.

Procedure

- Log in to the CodeArts PerfTest console, choose JMeter Projects in the navigation pane, and click the icon in the row containing the desired test project.

- On the Performance Reports tab page, click the icon in the row containing the test plan whose real-time test report you want to view. For details about the parameters, see Table 1. Click Stop Task to stop the current task.

- You can change the test report name. In the report list, move the cursor to the target name, click the icon, and enter a new report name.

- On the Overview tab page, you can view the number of failed/total requests, average RT, maximum concurrency, success rate, bandwidth, dynamic trend, and response codes.

- On the Detail tab, you can view the logs, common test metrics, and request details of the test plan.

- Click View Log. In the displayed dialog box, view request logs, event logs, and pod information. Ten request logs are displayed based on the request name, return code, and result.

Request logs can be filtered by return code, result, or request name.

- The sampling modes displayed in the report details are equidistant sampling value and equidistant average value.

- Equidistant sampling value: Based on the case execution duration, the trend chart of cases whose execution duration is longer than 30 minutes is displayed with sampling points at a fixed interval.

- Equidistant average value: Based on the case execution duration, the trend chart of cases whose execution duration is longer than 30 minutes is displayed with average values at a fixed interval.

- Click the Data View drop-down list box and enter a keyword to search for the required thread group or request data. You can also click the thread group or request data to be displayed in the directory in the Data View drop-down list box.

- On the Detail tab page, you can also click List to go to the report metric summary page.

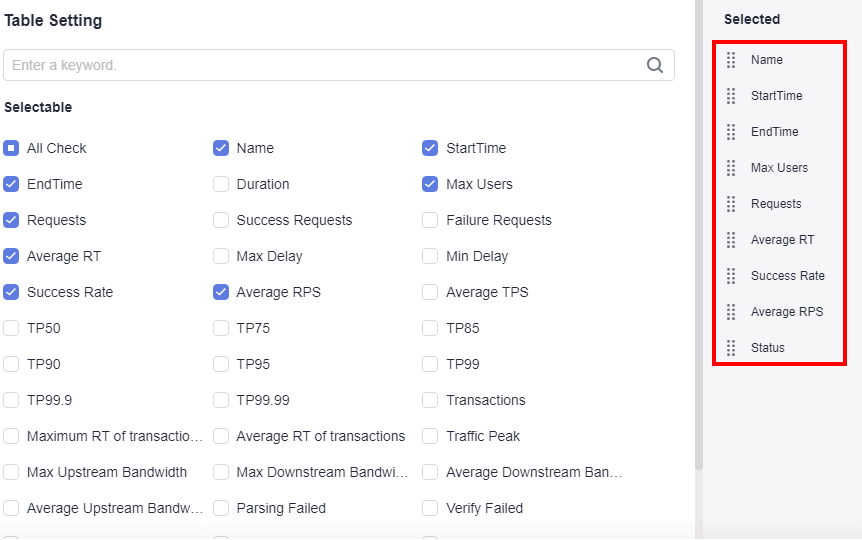

- Click Customized Column. In the displayed dialog box, select the items to be displayed, and drag the selected items in the Selected list on the right to change the item sequence.

Figure 1 Table settings

- Click the corresponding icon in the Operation column to view logs.

- Click the corresponding icon in the Operation column to edit a thread group.


- If the test plan has been associated with Application Monitor (APM) in the intelligent analysis object, the Invoke Analysis tab is displayed in the test report. On the Invoke Analysis tab, you can set search criteria to view the APM invoking details.

If the test plan has been associated with Node Monitor (AOM) in the intelligent analysis object, the Monitored Indicator tab is displayed in the test report. On the Monitored Indicator tab, you can view information such as CPU usage, memory usage, disk read speed, and disk write speed.
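The difference between the two sampling modes mentioned in the procedure above can be illustrated with a short Python sketch. The interval CodeArts PerfTest actually uses is not stated here, so the `step` parameter and both function names are assumptions for illustration only.

```python
def equidistant_sample(values: list[float], step: int) -> list[float]:
    """Keep one raw data point at every fixed interval of `step` points."""
    if step < 1:
        raise ValueError("step must be at least 1")
    return values[::step]


def equidistant_average(values: list[float], step: int) -> list[float]:
    """Replace every run of `step` points with the average of that run."""
    if step < 1:
        raise ValueError("step must be at least 1")
    chunks = (values[i:i + step] for i in range(0, len(values), step))
    return [sum(chunk) / len(chunk) for chunk in chunks]


if __name__ == "__main__":
    # Hypothetical per-interval response times (ms) from a long-running case.
    rt = [10, 20, 30, 40, 50, 60, 70]
    print(equidistant_sample(rt, 3))    # individual points, spikes may be skipped
    print(equidistant_average(rt, 3))   # smoothed values, spikes are averaged in
```

Sampling keeps real measured values but can miss short spikes between sampling points. Averaging reflects every measurement but flattens peaks.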

Viewing an Offline Test Report

After a pressure test is complete, the system generates an offline test result report.

Prerequisites

The test task is complete.

Procedure

- Log in to the CodeArts PerfTest console, choose JMeter Projects in the navigation pane, and click the icon in the row containing the desired test project.

- On the Performance Reports tab page, click the icon in the row containing the test plan whose test report you want to view. For details about the parameters, see Table 1. CodeArts PerfTest retains offline report data for one year. You can download an offline PDF report to a local PC and export the raw data (in CSV) for further processing.

- You can change the test report name. In the report list, move the cursor to the target name, click the icon, and enter a new report name.

- On the Overview tab page, you can view the number of failed/total requests, average RT, maximum concurrency, success rate, bandwidth, dynamic trend, and response codes.

- On the Detail tab, you can view the logs, common test metrics, and request details of the test plan.

- Click View Log. In the displayed dialog box, view request logs, event logs, and pod information. Ten request logs are displayed based on the request name, return code, and result.

Request logs can be filtered by return code, result, or request name.

- The sampling modes displayed in the report details are equidistant sampling value and equidistant average value.

- Equidistant sampling value: Based on the case execution duration, the trend chart of cases whose execution duration is longer than 30 minutes is displayed with sampling points at a fixed interval.

- Equidistant average value: Based on the case execution duration, the trend chart of cases whose execution duration is longer than 30 minutes is displayed with average values at a fixed interval.

- Click the Data View drop-down list box and enter a keyword to search for the required thread group or request data. You can also click the thread group or request data to be displayed in the directory in the Data View drop-down list box.

- On the Detail tab page, you can also click List to go to the report metric summary page.

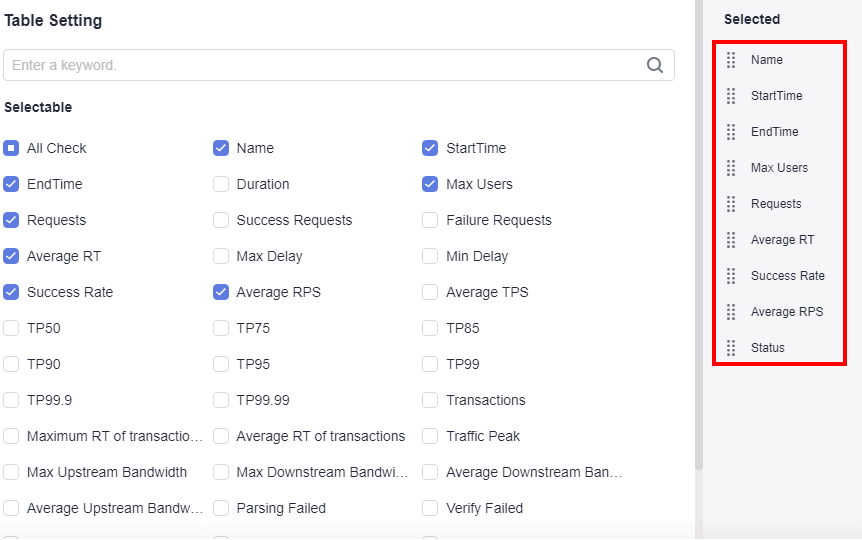

- Click Customized Column. In the displayed dialog box, select the items to be displayed, and drag the selected items in the Selected list on the right to change the item sequence.

Figure 2 Table settings

- Click the corresponding icon in the Operation column to view logs.

- Click the corresponding icon in the Operation column to edit a thread group.


- If the test plan has been associated with Application Monitor (APM) in the intelligent analysis object, the Invoke Analysis tab is displayed in the test report. On the Invoke Analysis tab, you can set search criteria to view the APM invoking details.

If the test plan has been associated with Node Monitor (AOM) in the intelligent analysis object, the Monitored Indicator tab is displayed in the test report. On the Monitored Indicator tab, you can view information such as CPU usage, memory usage, disk read speed, and disk write speed.

Report Comparison

You can compare test reports generated at different times or under different conditions to see how the test results differ.

Procedure

- Log in to the CodeArts PerfTest console, choose JMeter Projects in the navigation pane, and click the icon in the row containing the desired test project.

- On the Performance Reports tab page, click the name of the desired plan or click the icon in the Operation column.

- On the Comparison tab page, select the desired test reports.

You can select a maximum of three offline reports for comparison. The first selected report is used as the baseline report.

- Select a thread group from the Thread Group Comparison box to compare the metrics of this thread group in different reports.

- Thread group metric comparison supports metric filtering.

- Click Custom Comparison. In the displayed dialog box, select the metrics to be displayed.

- (Optional) Drag the selected items in the Selected list on the right of the dialog box to change the item sequence.

- Click OK.
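If you export the metrics and want to repeat this kind of baseline comparison outside the console, the idea can be sketched as below. The report structure, the metric names (`avg_rt_ms`, `avg_rps`), and the percentage-change output are hypothetical choices for the example. They do not describe how CodeArts PerfTest computes or displays its comparison.

```python
def compare_to_baseline(reports: list[dict]) -> list[tuple[str, dict]]:
    """Express each later report's metrics as a % change against the first report.

    Mirrors the rule above: at most three reports, the first one is the baseline.
    """
    if not 2 <= len(reports) <= 3:
        raise ValueError("select two or three reports")
    baseline, *others = reports
    result = []
    for report in others:
        delta = {}
        for metric, base_value in baseline["metrics"].items():
            value = report["metrics"].get(metric)
            if value is None or base_value == 0:
                delta[metric] = None  # metric missing or change undefined
            else:
                delta[metric] = (value - base_value) / base_value * 100
        result.append((report["name"], delta))
    return result


if __name__ == "__main__":
    # Hypothetical reports and metric values.
    reports = [
        {"name": "run-1", "metrics": {"avg_rt_ms": 200, "avg_rps": 100}},
        {"name": "run-2", "metrics": {"avg_rt_ms": 250, "avg_rps": 80}},
    ]
    for name, delta in compare_to_baseline(reports):
        print(name, delta)
```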
