Deploying an Nginx Workload in a CCE Autopilot Cluster

CCE Autopilot clusters are serverless. They support intelligent version upgrades, automatic vulnerability fixing, and intelligent parameter tuning, which reduces the manual work of keeping a cluster up to date. In addition, CCE Autopilot clusters run on a shared underlying resource pool, so you do not need to manage the allocation or expansion of underlying resources, which effectively reduces O&M costs. The resource pool also supports quick fault isolation and rectification, which helps applications run continuously and stably and improves their reliability.

Nginx is a high-performance, open-source HTTP web server and a reverse proxy for handling concurrent connections, balancing traffic, and caching static content. An Nginx workload in a cluster can serve as a load balancer and reverse proxy to effectively distribute traffic and ensure high availability and fault tolerance of applications. It also simplifies traffic management, security control, and API gateway functions in the microservice architecture, improving system flexibility and scalability.

The following describes how to create a CCE Autopilot cluster and deploy an Nginx workload in the cluster. Figure 1 shows the overall architecture.

Procedure

| Step | Description | Billing |
|---|---|---|
| Preparations | Sign up for a HUAWEI ID and make sure you have a valid payment method configured. | Billing is not involved. |
| Step 1: Enable CCE for the First Time and Perform Authorization | Obtain the required permissions for your account when you use CCE in the current region for the first time. | Billing is not involved. |
| Step 2: Create a CCE Autopilot Cluster | Create a CCE Autopilot cluster so that you can use Kubernetes more easily. | Cluster management and VPC endpoints are billed. For details, see CCE Billing. |
| Step 3: Deploy Nginx and Access It | Create an Nginx workload in the cluster and create a LoadBalancer Service so that the workload can be accessed from the public network. | Pods and load balancers are billed. For details, see CCE Billing and ELB Billing Items. |
| Follow-up Operations: Releasing Resources | To avoid additional expenditures, release resources promptly if you no longer need them. | Billing is not involved. |

Preparations

- Before you start, sign up for a HUAWEI ID and complete real-name authentication. For details, see Signing Up for a HUAWEI ID and Enabling Huawei Cloud Services and Getting Authenticated.

Step 1: Enable CCE for the First Time and Perform Authorization

When you first log in to the CCE console, CCE automatically requests permissions to access related cloud services (compute, storage, networking, and monitoring) in the region where the cluster is deployed. If you have authorized CCE in the deployment region, skip this step.

- Log in to the CCE console using your HUAWEI ID.

- Click the icon in the upper left corner of the console and select a region, for example, CN East-Shanghai1.

- Wait for the Authorization Statement dialog box to appear, carefully read the statement, and click OK.

After you agree to delegate the permissions, CCE creates an agency named cce_admin_trust in IAM to perform operations on other cloud resources and grants it the Tenant Administrator permissions. Tenant Administrator has the permissions on all cloud services except IAM. The permissions are used to call the cloud services on which CCE depends. The delegation takes effect only in the current region.

CCE depends on other cloud services. If you do not have the Tenant Administrator permissions, CCE may be unavailable due to insufficient permissions. For this reason, do not delete or modify the cce_admin_trust agency when using CCE.

CCE has updated the cce_admin_trust agency permissions to enhance security while accommodating dependencies on other cloud services. The new permissions no longer include Tenant Administrator permissions. This update is only available in certain regions. If your clusters are of v1.21 or later, a message will appear on the console asking you to re-grant permissions. After re-granting, the cce_admin_trust agency will be updated to include only the necessary cloud service permissions, with the Tenant Administrator permissions removed.

When creating the cce_admin_trust agency, CCE creates a custom policy named CCE admin policies. Do not delete this policy.

Step 2: Create a CCE Autopilot Cluster

Create a CCE Autopilot cluster so that you can use Kubernetes more easily. This example describes only some of the mandatory parameters. You can keep the default values for the other parameters. For details about them, see Buying a CCE Autopilot Cluster.

- Log in to the CCE console.

- If there is no cluster in your account in the current region, click Buy Cluster or Buy CCE Autopilot Cluster.

- If there is already a cluster in your account in the current region, choose Clusters in the navigation pane and then click Buy Cluster.

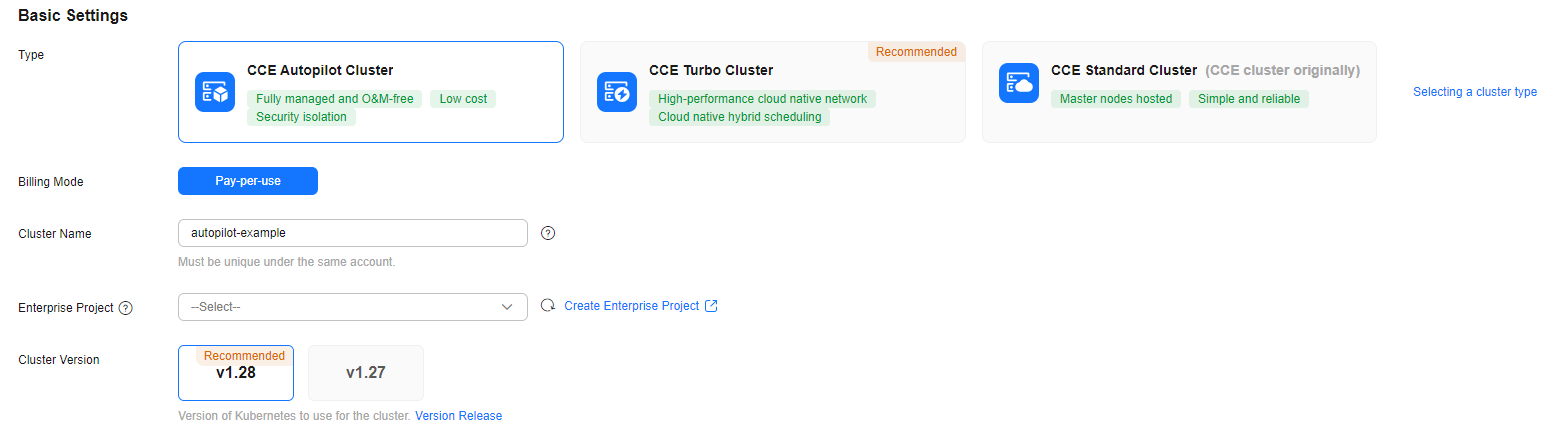

- Configure basic cluster information. For details about the parameters, see Figure 2 and Table 2.

Table 2 Basic cluster information

| Parameter | Example Value | Description |
|---|---|---|
| Type | CCE Autopilot Cluster | CCE allows you to create various types of clusters for diverse needs. CCE standard clusters provide highly reliable and secure containers for commercial use. CCE Turbo clusters use high-performance cloud native networks and provide cloud native hybrid scheduling. Such clusters have improved resource utilization and can be used in more scenarios. CCE Autopilot clusters are serverless, and you do not need to bother with server O&M. This greatly reduces O&M costs and improves application reliability and scalability. For more information about cluster types, see Cluster Comparison. |
| Cluster Name | autopilot-example | Enter a name for the cluster. Enter 4 to 128 characters. Start with a lowercase letter and do not end with a hyphen (-). Only lowercase letters, digits, and hyphens (-) are allowed. |
| Enterprise Project | default | This parameter is displayed only for enterprise users who have enabled Enterprise Project. Enterprise projects are used for cross-region resource management and make it easy to centrally manage resources by department or project team. For more information, see Project Management. Select an enterprise project as needed. If there is no special requirement, you can select default. |
| Cluster Version | v1.28 | Select the Kubernetes version for the cluster. You are advised to select the latest version. |

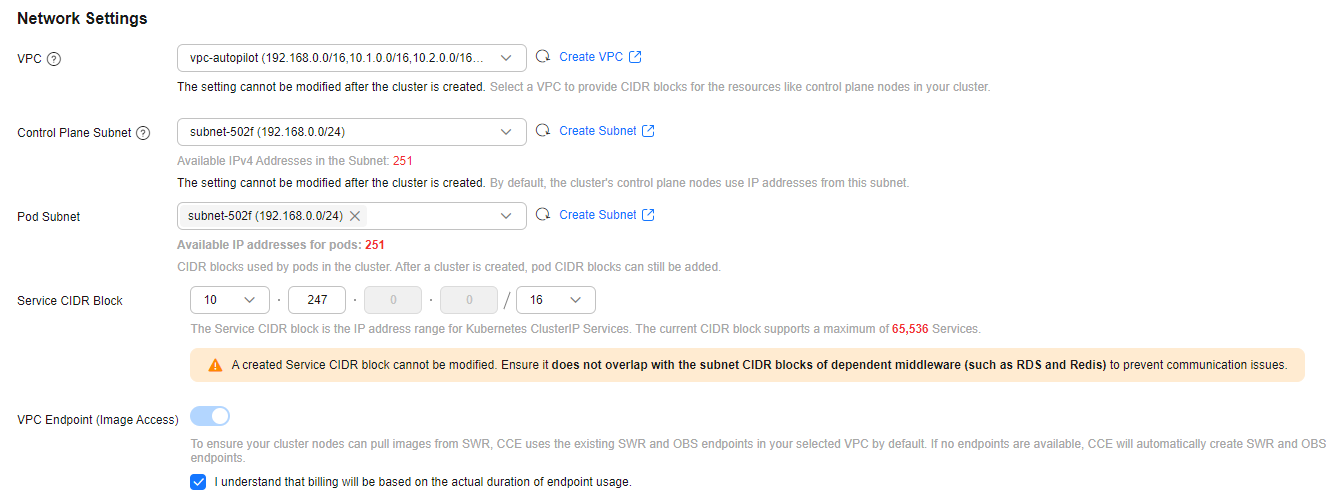

- Configure cluster network information. For details about the parameters, see Figure 3 and Table 3.

Table 3 Cluster network information

| Parameter | Example Value | Description |
|---|---|---|
| VPC | vpc-autopilot | Select a VPC where the cluster will be running. If no VPC is available, click Create VPC on the right to create one. For details, see Creating a VPC and Subnet. The VPC cannot be changed after the cluster is created. |
| Control Plane Subnet | subnet-502f | Select the subnet where the control plane is located. The cluster control plane node uses the IP address in this subnet by default. Ensure that the subnet has sufficient available IPv4 addresses. The subnet cannot be modified after being created. If no subnet is available, click Create Subnet on the right to create one. For details, see Creating a VPC and Subnet. |
| Pod Subnet | subnet-502f | Select the subnet where the pods will be running. Each pod requires a unique IP address. The number of IP addresses in a subnet determines the maximum number of pods in a cluster and the maximum number of containers. After the cluster is created, you can add subnets. If no subnet is available, click Create Subnet on the right to create one. For details, see Creating a VPC and Subnet. |
| Service CIDR Block | 10.247.0.0/16 | Select a Service CIDR block, which will be used by containers in the cluster to access each other. This CIDR block determines the maximum number of Services. After the cluster is created, the Service CIDR block cannot be changed. |
| VPC Endpoint (Image Access) | - | To ensure that your cluster nodes can pull images from SWR, existing SWR and OBS endpoints in the selected VPC are used by default. If there are no such endpoints, new SWR and OBS endpoints will be automatically created. VPC endpoints are billed. For details, see VPC Endpoint Price Calculator. |

- Deselect Enable Alarm Center because no alarms are involved in this example. Configure this item based on your requirements.

- Click Next: Select Add-on. On the page displayed, select the add-ons to be installed during cluster creation.

In this example, only the default add-ons, CoreDNS and Kubernetes Metrics Server, are installed.

- Click Next: Add-on Configuration to configure the selected add-ons. Mandatory add-ons cannot be configured.

- Click Next: Confirm Settings, check the displayed cluster resource list, and click Submit.

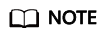

It takes about 5 to 10 minutes to create a cluster. After the cluster is created, the cluster is in the Running state.

Figure 4 A running cluster
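The cluster name rule and the Service CIDR block from the tables in this step can be checked locally before you fill in the console form. The following is a minimal Python sketch, not part of CCE: the function names are hypothetical, the regular expression is one reading of the naming rule stated above, and the address count is the raw size of the CIDR block, so the number of Services you can actually create may be lower.

```python
import ipaddress
import re

# One reading of the cluster naming rule: 4 to 128 characters, starts with a
# lowercase letter, does not end with a hyphen, and contains only lowercase
# letters, digits, and hyphens.
CLUSTER_NAME_RE = re.compile(r"[a-z][a-z0-9-]{2,126}[a-z0-9]")


def is_valid_cluster_name(name: str) -> bool:
    """Return True if the name satisfies the naming rule above."""
    return CLUSTER_NAME_RE.fullmatch(name) is not None


def address_count(cidr: str) -> int:
    """Return the total number of addresses in a CIDR block."""
    return ipaddress.ip_network(cidr, strict=True).num_addresses


if __name__ == "__main__":
    print(is_valid_cluster_name("autopilot-example"))
    print(address_count("10.247.0.0/16"))
```

With the example values in this step, the name check passes and the /16 Service CIDR block contains 65536 addresses in total.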

Step 3: Deploy Nginx and Access It

You can create an Nginx workload in the cluster and run it in containers for high resource utilization and automatic management. You also need to create a LoadBalancer Service for the workload so that it can be accessed from the public network. You can deploy and access the Nginx workload in either of two ways: on the CCE console (described first) or using kubectl (described after the console procedure).

- Log in to the CCE console and click the cluster name to access the cluster console.

- In the navigation pane, choose Workloads. In the upper right corner, click Create Workload.

- Configure basic workload information. For details about the parameters, see Figure 5 and Table 4.

In this example, only some mandatory parameters are described. You can keep the default values for other parameters. For details about other parameters, see Creating a Workload. You can select a reference document based on the workload type.

Table 4 Basic workload information

| Parameter | Example Value | Description |
|---|---|---|
| Workload Type | Deployment | Select a workload type. A workload defines the creation, status, and lifecycle of pods. By creating a workload, you can manage and control the behavior of multiple pods, such as scaling, update, and restoration. Deployment: runs a stateless application. It supports online deployment, rolling upgrade, replica creation, and restoration. StatefulSet: runs a stateful application. Each pod for running the application has an independent state, and the data can be restored when the pod is restarted or migrated, ensuring application reliability and consistency. Job: a one-off job. After the job is complete, the pods are automatically deleted. Cron Job: a time-based job that runs a specified job on a specified schedule. For more information about workloads, see Workload Overview. In this example, Nginx is deployed as a Deployment because Nginx is mainly used to forward requests, balance loads, and distribute static content. No persistent data needs to be stored locally. |
| Workload Name | nginx | Enter a name for the workload. Enter 1 to 63 characters, starting with a lowercase letter and ending with a lowercase letter or digit. Only lowercase letters, digits, and hyphens (-) are allowed. |
| Namespace | default | Select a namespace. A namespace is a conceptual grouping of resources or objects. Each namespace isolates its data from other namespaces. After a cluster is created, the default namespace is created automatically. If there is no special requirement, select default. |
| Pod Type | General computing | Select the pod type. The performance and price of each pod type are different. For details, see Specifying the Pod Type. General-computing: suitable for workloads that have high requirements on computing performance and on the scale and stability of compute. Intel CPUs are used to provide compute. General-computing-lite: provides cost-effective compute with comparable performance. |
| Pods | 1 | Enter the number of pods. Set the number based on the following considerations. High availability: To ensure high availability of the workload, at least 2 pods are required to prevent individual faults. High performance: Set the number of pods based on the workload traffic and resource requirements to avoid overload or resource waste. In this example, set the number of pods to 1. |

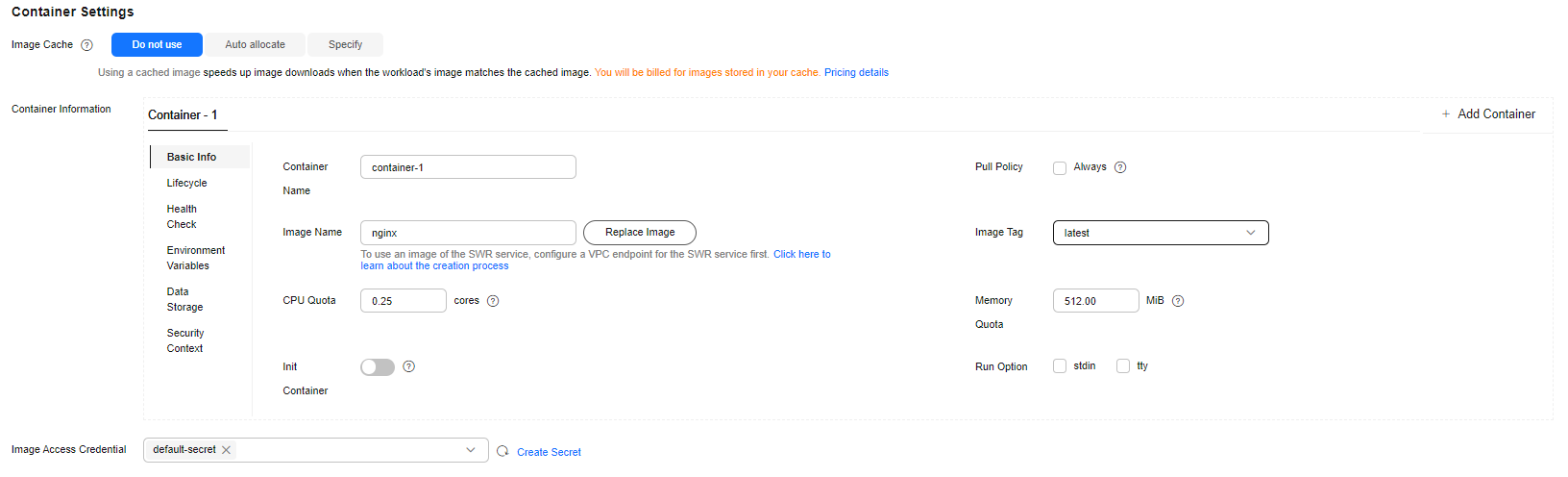

- Configure container information. For details about the parameters, see Figure 6 and Table 5.

In this example, only some mandatory parameters are described. You can keep the default values for other parameters. For details about other parameters, see Creating a Workload. You can select a reference document based on the workload type.

Table 5 Container settings

| Parameter | Example Value | Description |
|---|---|---|
| Image Name | nginx | Click Select Image. In the displayed dialog box, click the Open Source Images tab and select a public image. |
| Image Tag | latest | Select the required image tag. |
| CPU Quota | 0.25 cores | Specify the CPU limit, which defines the maximum number of CPU cores that can be used by a container. The default value is 0.25 cores. |
| Memory Quota | 512 MiB | Specify the memory limit, which is the maximum memory available for a container. The default value is 512 MiB. If the container's memory usage exceeds 512 MiB, the container will be terminated. |

- Click the icon under Service Settings. On the page that is displayed, configure the Service. For details about the parameters, see Figure 7 and Table 6.

In this example, only some mandatory parameters are described. You can keep the default values for other parameters. For details about other parameters, see Service. Select a reference document based on the Service type.

Table 6 Service settings

| Parameter | Example Value | Description |
|---|---|---|
| Service Name | nginx | Enter a name for the Service. Enter 1 to 63 characters, starting with a lowercase letter and ending with a lowercase letter or digit. Only lowercase letters, digits, and hyphens (-) are allowed. |
| Service Type | LoadBalancer | Select a Service type, which determines how the workload is accessed. ClusterIP: The workload can only be accessed using IP addresses in the cluster. LoadBalancer: The workload can be accessed from the public network through a load balancer. In this example, external access to Nginx is required, so you need to create a Service of the LoadBalancer type. For more information about the Service types, see Service. |
| Load Balancer | Dedicated; Network load balancing (TCP/UDP); Use existing; elb-nginx | Select Use existing if there is a load balancer available. NOTE: The load balancer must be in the same VPC as the cluster, must be a dedicated load balancer, and must have a private IP address bound. If there is no available load balancer, select Auto create to create one with an EIP bound. For details, see Creating a LoadBalancer Service. |
| Port | Protocol: TCP; Container Port: 80; Service Port: 8080 | Protocol: Select a protocol for the load balancer listener. Container Port: Enter the port on which the application listens. This port must be the same as the listening port provided by the application for external systems. When the nginx image is used, the container port must be set to 80 because Nginx uses port 80 to provide HTTP services by default. Service Port: Enter a custom port. The load balancer will use this port as the listening port to provide an entry for external traffic. |

- Click Create Workload.

After the Deployment is created, it is in the Running state in the Deployment list.

Figure 8 A running workload

- Click the workload name to go to the workload details page and obtain the external access address of Nginx. On the Access Mode tab, view the access address in the format of {EIP bound to the load balancer}:{Access port}. {EIP bound to the load balancer} indicates the public IP address of the load balancer, and {Access port} is the Service Port configured under Service Settings (8080 in this example).

Figure 9 Access address

- In the address box of your browser, enter <EIP-bound-to-the-load-balancer>:<access-port> to access the application.

Figure 10 Accessing Nginx
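The console settings above can also be expressed as a Kubernetes manifest. The following Python sketch builds a Deployment object from the example values and prints it as JSON, which kubectl accepts in place of YAML. The helper names are hypothetical, and mapping CPU Quota and Memory Quota to `resources.limits` is an assumption based on how Table 5 describes them; the console may set additional fields.

```python
import json


def cores_to_millicpu(cores: float) -> str:
    """Convert CPU cores to Kubernetes millicore notation, e.g. 0.25 -> '250m'."""
    return f"{round(cores * 1000)}m"


def build_deployment(name: str, image: str, replicas: int,
                     cpu_cores: float, memory_mib: int) -> dict:
    """Build a Deployment manifest whose pods are labeled app=<name>."""
    labels = {"app": name}
    # Assumption: the console quotas correspond to container resource limits.
    limits = {"cpu": cores_to_millicpu(cpu_cores), "memory": f"{memory_mib}Mi"}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [{
                        "name": name,
                        "image": image,
                        "resources": {"limits": limits},
                    }],
                    "imagePullSecrets": [{"name": "default-secret"}],
                },
            },
        },
    }


if __name__ == "__main__":
    manifest = build_deployment("nginx", "nginx:latest", 1, 0.25, 512)
    print(json.dumps(manifest, indent=2))
```

The selector and the pod template share the same label dictionary, so the Deployment always selects the pods it creates.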

To use kubectl instead of the console, command line operations are required. You can perform them in either of the following ways:

- Using CloudShell: You need to ensure kubectl has been configured in CloudShell and the cluster has been connected using CloudShell. For details, see Connecting to a Cluster Using CloudShell.

- Using an ECS: You need to prepare a Linux ECS that is in the same VPC as the cluster and has an EIP bound. For details, see Purchasing and Using a Linux ECS. You can use an existing ECS or buy a new one. You also need to install kubectl and connect to the cluster through kubectl.

The following uses the first method as an example to describe how you can use kubectl to create an Nginx workload.

- Click the cluster name to access the cluster console.

- In the upper right corner, click Kubectl Shell to access CloudShell.

Using CloudShell to connect to a cluster is only available in some regions. For details, see the management console. If your region is not supported, use an ECS instead.

Figure 11 CloudShell

- Create a YAML file for creating a workload. In this example, the file name is nginx-deployment.yaml. You can change it as needed.

A Linux file name is case sensitive and can contain letters, digits, underscores (_), and hyphens (-), but cannot contain slashes (/) or null characters (\0). To improve compatibility, do not use special characters, such as spaces, question marks (?), and asterisks (*).

    vim nginx-deployment.yaml

The file content is as follows:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nginx                # Workload name
    spec:
      replicas: 1                # Number of pods
      selector:
        matchLabels:             # Selector, which is used to select resources with specific labels. The value must be the same as that of the selector in the YAML file of the LoadBalancer Service (nginx-elb-svc.yaml).
          app: nginx
      template:
        metadata:
          labels:                # Labels
            app: nginx
        spec:
          containers:
          - image: nginx:latest  # {Image name}:{Image tag}
            name: nginx
          imagePullSecrets:
          - name: default-secret

Press Esc to exit editing mode and enter :wq to save the file.

- Run the following command to create the workload:

    kubectl create -f nginx-deployment.yaml

If information similar to the following is displayed, the workload is being created:

    deployment.apps/nginx created

- Run the following command to check the workload status:

    kubectl get deployment

If the value of READY is 1/1, the pod created for the workload is available. This means the workload has been created.

    NAME    READY   UP-TO-DATE   AVAILABLE   AGE
    nginx   1/1     1            1           4m59s

The following table describes the parameters in the command output.

Table 7 Parameters in the command output

| Parameter | Example Value | Description |
|---|---|---|
| NAME | nginx | Workload name. |
| READY | 1/1 | The number of available pods/desired pods for the workload. |
| UP-TO-DATE | 1 | The number of pods that have been updated for the workload. |
| AVAILABLE | 1 | The number of pods available for the workload. |
| AGE | 4m59s | How long the workload has run. |

- Run the following command to create the nginx-elb-svc.yaml file for configuring the LoadBalancer Service and associating the Service with the created workload. You can change the file name as needed.

In this example, an existing load balancer is used to create the Service. To have a load balancer created automatically instead, see Using kubectl to Create a Service (Automatically Creating a Load Balancer).

    vim nginx-elb-svc.yaml

The file content is as follows:

    apiVersion: v1
    kind: Service
    metadata:
      name: nginx                               # Service name
      annotations:
        kubernetes.io/elb.id: <your_elb_id>     # Load balancer ID. Replace it with the actual value.
        kubernetes.io/elb.class: performance    # Load balancer type
    spec:
      selector:                                 # The value must be the same as the value of matchLabels in the YAML file of the workload (nginx-deployment.yaml).
        app: nginx
      ports:
      - name: service0
        port: 8080
        protocol: TCP
        targetPort: 80
      type: LoadBalancer

Press Esc to exit editing mode and enter :wq to save the file.

Table 8 Parameters for using an existing load balancer

| Parameter | Example Value | Description |
|---|---|---|
| kubernetes.io/elb.id | 405ef586-0397-45c3-bfc4-xxx | ID of an existing load balancer. NOTE: The load balancer must be in the same VPC as the cluster, must be a dedicated load balancer, and must have a private IP address bound. How to Obtain: Log in to the Network Console. In the upper left corner, select the region where the cluster resides. In the navigation pane, choose Elastic Load Balance > Load Balancers. Locate the load balancer and obtain the ID below the load balancer name. Click the name of the load balancer. On the Summary tab, verify that the load balancer meets the preceding requirements. |
| kubernetes.io/elb.class | performance | Load balancer type. The value can only be performance, which means that only dedicated load balancers are supported. |
| selector | app: nginx | Selector, which is used by the Service to send traffic to pods with specific labels. |
| ports.port | 8080 | The port used by the load balancer as an entry for external traffic. You can use any port. |
| ports.protocol | TCP | Protocol for the load balancer listener. |
| ports.targetPort | 80 | Port used by a Service to access the target container. This port is closely related to the applications running in the container. If the nginx image is used, set this port to 80. |

- Run the following command to create a Service:

    kubectl create -f nginx-elb-svc.yaml

If information similar to the following is displayed, the Service has been created:

    service/nginx created

- Run the following command to check the Service:

    kubectl get svc

If information similar to the following is displayed, the workload access mode has been configured:

    NAME         TYPE           CLUSTER-IP     EXTERNAL-IP               PORT(S)          AGE
    kubernetes   ClusterIP      10.247.0.1     <none>                    443/TCP          18h
    nginx        LoadBalancer   10.247.56.18   xx.xx.xx.xx,xx.xx.xx.xx   8080:30581/TCP   5m8s

- In the address box of your browser, enter {External access address}:{Service port} to access the workload. The external access address is the first IP address displayed for EXTERNAL-IP, and the Service port is 8080.

Figure 12 Accessing Nginx
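The access address used in the last step can also be derived from the `kubectl get svc` output programmatically. The following Python sketch assumes the default six-column output shown above. The function names are hypothetical, and the IP addresses in the sample row are placeholders from documentation address ranges, not real values.

```python
def parse_service_line(line: str) -> dict:
    """Split one data row of `kubectl get svc` into its six default columns."""
    name, svc_type, cluster_ip, external_ip, ports, age = line.split()
    no_ip = external_ip in ("<none>", "<pending>")
    return {
        "name": name,
        "type": svc_type,
        "cluster_ip": cluster_ip,
        "external_ips": [] if no_ip else external_ip.split(","),
        "ports": ports,
        "age": age,
    }


def access_url(service: dict) -> str:
    """Build http://<first external IP>:<Service port> for a Service."""
    if not service["external_ips"]:
        raise ValueError(f"Service {service['name']} has no external IP")
    # PORT(S) looks like "8080:30581/TCP"; the part before ":" is the Service port.
    first_mapping = service["ports"].split(",")[0]
    service_port = first_mapping.split("/")[0].split(":")[0]
    return f"http://{service['external_ips'][0]}:{service_port}"


if __name__ == "__main__":
    # Placeholder addresses for illustration only.
    row = "nginx LoadBalancer 10.247.56.18 203.0.113.10,192.168.0.20 8080:30581/TCP 5m8s"
    print(access_url(parse_service_line(row)))
```

The sketch takes the first address listed under EXTERNAL-IP, matching the instruction in the last step, and raises an error for Services such as kubernetes that have no external address.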

Follow-up Operations: Releasing Resources

To avoid additional expenditures, release resources promptly if you no longer need them.

- Deleting a cluster will delete the workloads and Services in the cluster, and the deleted data cannot be recovered.

- VPC resources (such as endpoints, NAT gateways, and EIPs for SNAT) associated with a cluster are retained by default when the cluster is deleted. Before deleting a cluster, ensure that the resources are not used by other clusters.

- Log in to the CCE console. In the navigation pane, choose Clusters.

- Locate the cluster, click the icon in the upper right corner of the cluster card, and click Delete Cluster.

- In the displayed Delete Cluster dialog box, delete related resources as prompted.

- Enter DELETE and click Yes to start deleting the cluster.

It takes 1 to 3 minutes to delete a cluster. If the cluster name disappears from the cluster list, the cluster has been deleted.
