Creating a LoadBalancer Service

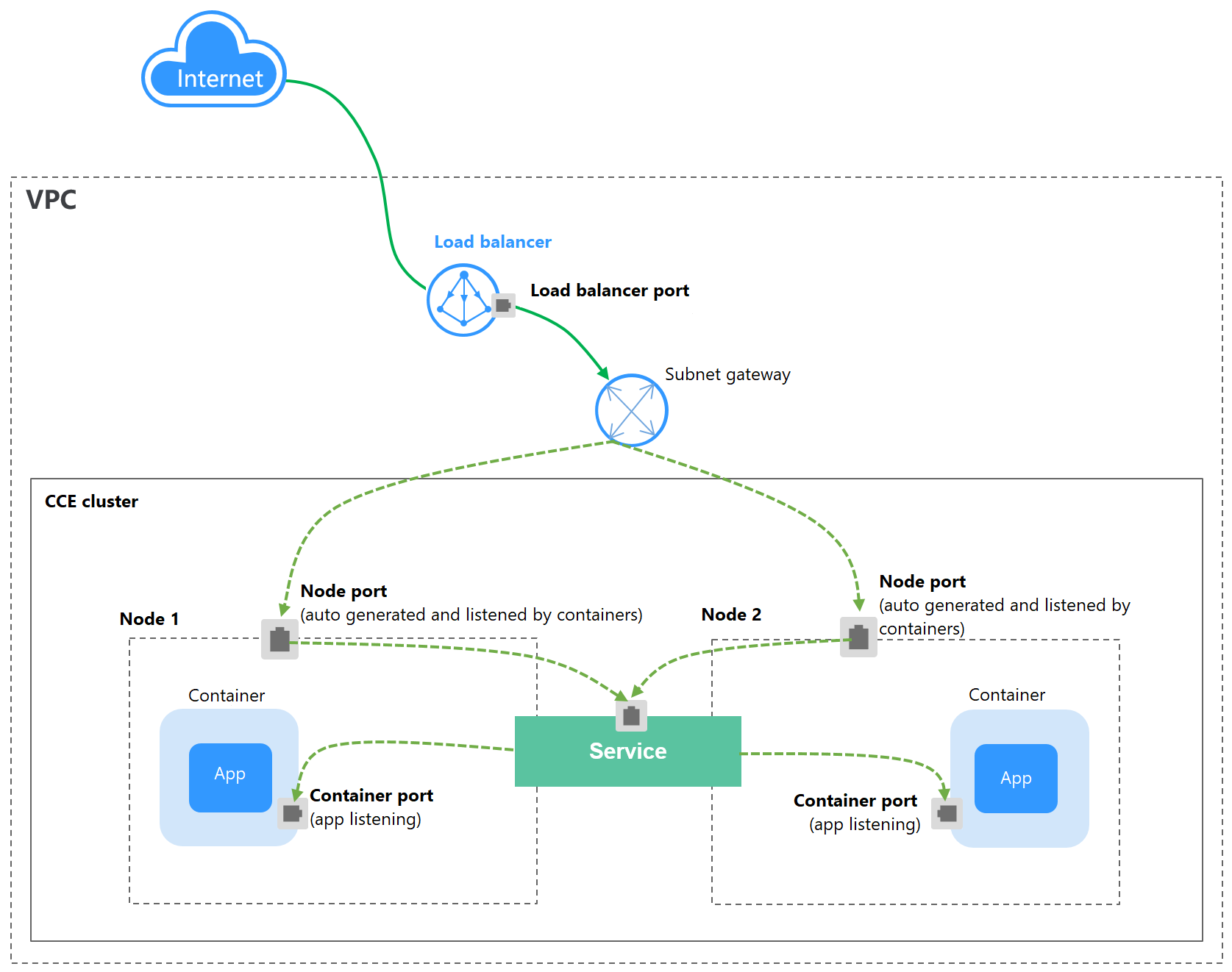

A LoadBalancer Service adds an external load balancer on top of a NodePort Service and distributes external traffic to multiple pods within a cluster. A LoadBalancer Service provides higher reliability than a NodePort Service. It automatically assigns an external IP address to allow access from clients. LoadBalancer Services process TCP and UDP traffic at Layer 4 (transport layer) of the OSI model. They can be extended to support Layer 7 (application layer) capabilities to manage HTTP and HTTPS traffic.

If cloud applications require a stable, easy-to-manage entry for external access, you can create a LoadBalancer Service. For example, in a production environment, you can use LoadBalancer Services to expose public-facing services such as web applications and API services to the Internet. These services often need to handle heavy traffic while maintaining high availability. The access address of a LoadBalancer Service is in the format of {EIP-of-load-balancer}:{access-port}, for example, 10.117.117.117:80.

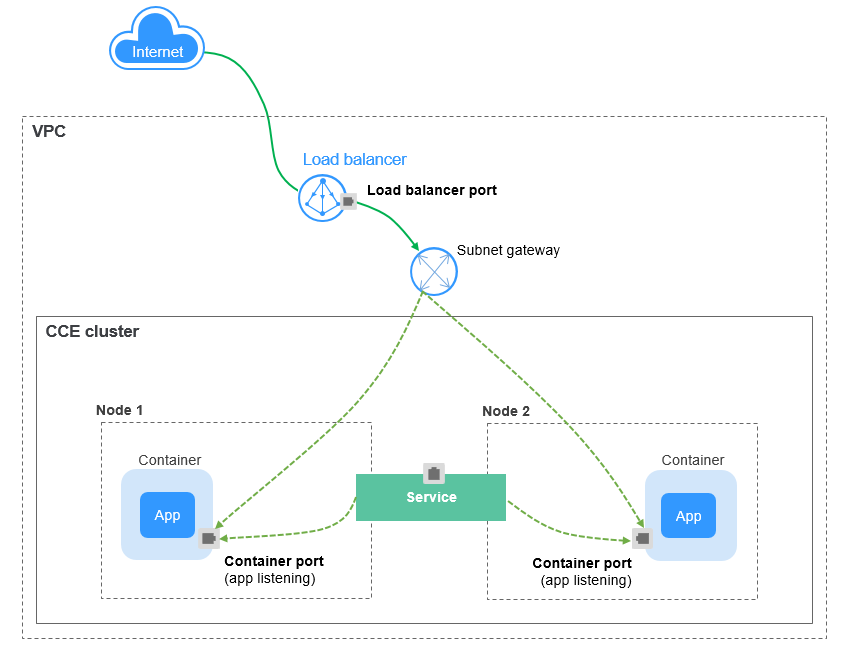

If dedicated load balancers are associated with Services in CCE Turbo clusters, passthrough networking is supported to reduce the network latency and ensure zero performance loss.

External access requests are directly forwarded from a load balancer to pods. Internal access requests can be forwarded to a pod through a Service.

Constraints

- Constraints on load balancers associated with Services:

- The load balancer automatically created based on the Service configuration cannot be associated with other resources. If you associate the load balancer with other resources, the load balancer cannot be automatically deleted when the Service is deleted, resulting in residual resources.

- The names of load balancer listeners for clusters of v1.15 or earlier cannot be changed. If you change the names, access to the load balancer may be abnormal.

- Constraints on dedicated load balancers:

- Dedicated load balancers can be used only in clusters of v1.17 and later.

- Dedicated load balancers must support private networks (with private IP addresses). If a Service needs to support HTTP, the dedicated load balancer must be of the application (HTTP/HTTPS) type.

- Constraints on the service affinity (externalTrafficPolicy) configuration of a Service:

- If the service affinity is set to the node level (externalTrafficPolicy is set to Local), access to the load balancer from within the cluster may be abnormal. For details, see Why a Service Fail to Be Accessed from Within the Cluster.

- After a Service is created, if the affinity setting is switched from the cluster level to the node level, the connection tracking table will not be cleared. Do not modify the Service affinity setting after the Service is created. To modify it, create a Service again.

- In a CCE Turbo cluster that utilizes Cloud Native Network 2.0, node-level affinity is supported only when the Service backend is connected to a hostNetwork pod.

- If you have an IPVS-backed cluster, kube-proxy mounts the Service's load balancer address to the ipvs-0 bridge, which will intercept the traffic from within the cluster to the load balancer. In this case, there may be the following access exceptions:

- If you use the same load balancer for both a LoadBalancer ingress and Service, the ipvs-0 bridge will intercept the traffic from the cluster to the ingress and redirect it to the Service, resulting in an access failure.

- If you use the same load balancer for a Service in the current cluster and a Service in another cluster, the ipvs-0 bridge will intercept the traffic to the Service in another cluster and redirect the traffic to the Service in the current cluster, resulting in an access failure. You can perform the operations in Configuring Passthrough Networking for a LoadBalancer Service to prevent kube-proxy from forwarding the Service's load balancer traffic from the cluster.

- When the cluster service forwarding (proxy) mode is IPVS, the node IP cannot be configured as the external IP of the Service. Otherwise, the node is unavailable.

Creating a LoadBalancer Service

- Log in to the CCE console and click the cluster name to access the cluster console.

- In the navigation pane, choose Services & Ingresses. In the upper right corner, click Create Service.

- Configure parameters.

- Service Name: Specify a name, which can be the same as the workload name.

- Service Type: Select LoadBalancer.

- Namespace: Select the namespace that the workload belongs to.

- Service Affinity: For details, see Service Affinity (externalTrafficPolicy).

- Cluster-level: The IP addresses and ports of all nodes in a cluster can access the workload associated with the Service. However, accessing the Service may result in decreased performance due to route redirection, and the client's source IP address may not be obtainable.

- Node-level: Only the IP address and port of the node where the workload is located can access the workload associated with the Service. Accessing the Service will not result in a performance decrease due to route redirection, and the client's source IP address can be obtained.

- Selector: Add a label and click Confirm. The Service will use this label to select pods. You can also click Reference Workload Label to use the label of an existing workload. In the dialog box that is displayed, select a workload and click OK.

- Protocol Version: This function is disabled by default. After this function is enabled, the cluster IP address of the Service can be set to an IPv6 address. For details, see Creating an IPv4/IPv6 Dual-Stack Cluster in CCE. This parameter is available only in clusters of v1.15 or later with IPv6 enabled (set during cluster creation).

- Load Balancer: Select a load balancer type and creation mode.

A load balancer can be dedicated or shared. A dedicated load balancer supports Network (TCP/UDP/TLS), Application (HTTP/HTTPS), or Network (TCP/UDP/TLS) & Application (HTTP/HTTPS).

You can select Use existing or Auto create to obtain a load balancer. For details about the configuration of different creation modes, see Table 1.

Table 1 Load balancer configuration

| How to Create | Configuration |
| --- | --- |
| Use existing | Only the load balancers in the same VPC as the cluster can be selected. If no load balancer is available, click Create Load Balancer to create one on the ELB console. |
| Auto create | - Instance Name: Enter a load balancer name.<br>- Enterprise Project: This parameter is available only for enterprise users who have enabled an enterprise project. Enterprise projects facilitate project-level management and grouping of cloud resources and users.<br>- AZ: available only to dedicated load balancers. You can create load balancers in multiple AZs to improve service availability. If disaster recovery is required, you are advised to select multiple AZs.<br>- Frontend Subnet: available only to dedicated load balancers. It is used to allocate IP addresses for load balancers to provide services externally.<br>- Backend Subnet: available only to dedicated load balancers. It is used to allocate IP addresses for load balancers to access the backend service.<br>- Network Specifications, Application-oriented Specifications, or Specifications (available only to dedicated load balancers). Fixed: applies to stable traffic, billed based on specifications.<br>- EIP: If you select Auto assign, you can configure the billing mode and bandwidth size for the public network.<br>- Resource Tag: You can add resource tags to classify resources. You can create predefined tags on the TMS console. These tags are available to all resources that support tags. You can use these tags to improve the tag creation and resource migration efficiency. |

Set ELB: You can click Edit and configure the load balancing algorithm and sticky session.

- Load balancing algorithm: You can select Weighted round robin, Weighted least connections, or Source IP hash.

- Weighted round robin: Requests are forwarded to different servers based on their weights, which indicate server processing performance. Backend servers with higher weights receive proportionately more requests, whereas equal-weighted servers receive the same number of requests. This algorithm is often used for short connections, such as HTTP services.

- Weighted least connections: In addition to the weight assigned to each server, the number of connections processed by each backend server is considered. Requests are forwarded to the server with the lowest connections-to-weight ratio. This algorithm is based on the least connections algorithm and assigns a weight to each server based on their processing capability. This algorithm is often used for persistent connections, such as database connections.

- Source IP hash: The source IP address of each request is calculated using the hash algorithm to obtain a unique hash key, and all backend servers are numbered. The generated key allocates the client to a particular server. This enables requests from different clients to be distributed in load balancing mode and ensures that requests from the same client are forwarded to the same server. This algorithm applies to TCP connections without cookies.

- Sticky Session: This function is disabled by default.

If the listener's frontend protocol is TCP or UDP, sticky sessions can use source IP addresses. This means that access requests from the same IP address will be directed to the same backend server or pod.
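The three algorithms described above can be sketched in a few lines of Python. This is only an illustration of the selection logic, not how ELB implements it: the `Backend` class, the function names, and the use of SHA-256 for hashing are all assumptions made for the example, and weights are assumed to be positive integers.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class Backend:
    name: str
    weight: int           # assumed to be a positive integer
    connections: int = 0  # active connections, used by least connections


def weighted_round_robin(backends, n):
    """Return n picks; each backend is chosen in proportion to its weight."""
    # Simplification: repeat each backend "weight" times, then cycle.
    schedule = [b.name for b in backends for _ in range(b.weight)]
    return [schedule[i % len(schedule)] for i in range(n)]


def weighted_least_connections(backends):
    """Pick the backend with the lowest connections-to-weight ratio."""
    return min(backends, key=lambda b: b.connections / b.weight).name


def source_ip_hash(backends, client_ip):
    """Map a client IP to one backend so the same client always lands on it."""
    digest = hashlib.sha256(client_ip.encode()).digest()
    index = int.from_bytes(digest[:8], "big") % len(backends)
    return backends[index].name
```

With weights 3 and 1, eight round-robin picks go to the first backend six times and to the second twice, while `source_ip_hash` returns the same backend for every request from one client address.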

- Health Check: Configure health check for the load balancer.

- Global: applies only to ports using the same protocol. If you need to configure multiple ports using different protocols, select Custom.

- Custom: applies to ports using different protocols. For details about the YAML configuration for custom health check, see Configuring Health Check on Multiple LoadBalancer Service Ports.

Table 2 Health check parameters

| Parameter | Description |
| --- | --- |
| Protocol | When the protocol of Port is set to TCP, the TCP and HTTP protocols are supported. When the protocol of Port is set to UDP, the UDP protocol is supported.<br>- Check Path: This parameter is only available for HTTP health check. It specifies the URL for health check. The check path must start with a slash (/) and contain 1 to 80 characters. |
| Port | By default, the service port (NodePort or container port of the Service) is used for health check. You can also specify another port for health check. After the port is specified, a service port named cce-healthz will be added for the Service.<br>- Node Port: If a shared or dedicated load balancer is used without an associated network interface, the node port will be used as the health check port. If the port is not specified, a random port will be used. The value ranges from 30000 to 32767.<br>- Container Port: When a dedicated load balancer is associated with a network interface, the container port is used for health check. The value ranges from 1 to 65535. |
| Check Period (s) | Specifies the maximum interval between health checks. The value ranges from 1 to 50. |
| Timeout (s) | Specifies the maximum timeout for each health check. The value ranges from 1 to 50. |
| Max. Retries | Specifies the maximum number of health check retries. The value ranges from 1 to 10. |

- Port

- Protocol: the protocol used by the Service. If a Service needs to use ELB TLS or HTTP, set Protocol to TCP and select the target frontend protocol. For details, see Protocols for Services.

- Container Port: the port that the workload listens on. For example, Nginx uses port 80 by default.

- Service Port: the port used by the Service. The port ranges from 1 to 65535.

ELB allows you to create listeners that listen on ports within specified ranges. Each listener can support up to 10 non-overlapping port ranges.

To configure port ranges for load balancer listeners, ensure the following conditions are met:

- The cluster version must be v1.23.18-r0, v1.25.13-r0, v1.27.10-r0, v1.28.8-r0, v1.29.4-r0, v1.30.1-r0, or later.

- A dedicated load balancer must be used with TCP/UDP/TLS selected.

- This function requires ELB. Before using this function, check whether ELB supports full-port listening and forwarding for layer-4 protocols in the current region.

- Frontend Protocol: Set the protocol of the load balancer listener for establishing connections with clients. When a dedicated load balancer is selected, HTTP/HTTPS can be configured only when Application (HTTP/HTTPS) is selected and TLS can be configured only when Network (TCP/UDP/TLS) is selected.

- Health Check: If Health Check is set to Custom, you can configure health check for ports using different protocols. For details, see Table 2.

When a LoadBalancer Service is created, a random node port number (NodePort) is automatically generated.
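The port range limits mentioned above (at most 10 ranges per listener, no overlaps, ports between 1 and 65535) can be checked before a configuration is submitted. The following is a minimal sketch; the function name and the tuple representation of a range are assumptions for illustration and are not part of any CCE or ELB API.

```python
def validate_port_ranges(ranges, max_ranges=10):
    """Validate listener port ranges given as (start, end) tuples.

    Returns the ranges sorted by start port, or raises ValueError.
    """
    if len(ranges) > max_ranges:
        raise ValueError(f"at most {max_ranges} port ranges per listener")
    ordered = sorted(ranges)
    for start, end in ordered:
        if not (1 <= start <= end <= 65535):
            raise ValueError(f"invalid port range {start}-{end}")
    # After sorting, two ranges overlap if one starts before the previous ends.
    for (_, prev_end), (next_start, _) in zip(ordered, ordered[1:]):
        if next_start <= prev_end:
            raise ValueError("port ranges overlap")
    return ordered
```

For example, `validate_port_ranges([(8000, 8010), (80, 90)])` returns the two ranges in ascending order, while `[(80, 90), (90, 100)]` is rejected because port 90 appears in both.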

- Listener

- SSL Authentication: Select this option if HTTPS/TLS is enabled on the listener port. This parameter is available only in clusters of v1.23.14-r0, v1.25.9-r0, v1.27.6-r0, v1.28.4-r0, or later versions.

- One-way authentication: Only the backend server is authenticated. If you also need to authenticate the identity of the client, select two-way authentication.

- Two-way authentication: Both the clients and the load balancer authenticate each other. This ensures only authenticated clients can access the load balancer. No additional backend server configuration is required if you select this option.

- CA Certificate: If SSL Authentication is set to Two-way authentication, add a CA certificate to authenticate the client. A CA certificate is issued by a Certificate Authority (CA) and is used to verify the issuer of the client's certificate. If HTTPS two-way authentication is enabled, HTTPS connections can be established only if the client provides a certificate issued by a specific CA.

- Server Certificate: If HTTPS/TLS is enabled on the listener port, you must select a server certificate. If no certificate is available, create one on the ELB console. For details, see Adding a Certificate.

- SNI: If HTTPS/TLS is enabled on the listener port, you must determine whether to add an SNI certificate. Before adding an SNI certificate, ensure the certificate contains a domain name. If no certificate is available, create one on the ELB console. For details, see Adding a Certificate.

If the server cannot find an SNI certificate matching the client-requested domain name, it will return the default server certificate.

- Security Policy: If HTTPS/TLS is enabled on the listener port, you can select a security policy. This parameter is available only in clusters of v1.23.14-r0, v1.25.9-r0, v1.27.6-r0, v1.28.4-r0, or later versions.

- Backend Protocol: If HTTPS is enabled on the listener port, HTTP or HTTPS can be used to access the backend server. The default value is HTTP. If TLS is enabled on the listener port, TCP or TLS can be used to access the backend server. The default value is TCP. This parameter is available only in clusters of v1.23.14-r0, v1.25.9-r0, v1.27.6-r0, v1.28.4-r0, or later versions.
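The SNI fallback described above (return the default server certificate when no SNI certificate matches the requested domain name) amounts to a lookup with a default. The sketch below assumes exact, case-insensitive domain matching only; wildcard handling and the certificate names are outside the source and are purely illustrative.

```python
def select_certificate(requested_domain, sni_certificates, default_certificate):
    """Return the SNI certificate for a domain, or the default server certificate.

    sni_certificates maps domain names to certificate identifiers (hypothetical).
    """
    normalized = {domain.lower(): cert for domain, cert in sni_certificates.items()}
    return normalized.get(requested_domain.lower(), default_certificate)
```

A request for a domain that has no entry in `sni_certificates` receives `default_certificate`.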

- Access Control

- Inherit ELB Configurations: CCE does not modify the existing access control configurations on the ELB console.

- Allow all IP addresses: No access control is configured.

- Trustlist: Only the selected IP address group can access the load balancer.

- Blocklist: The selected IP address group cannot access the load balancer.

For clusters of v1.25.16-r10, v1.27.16-r10, v1.28.15-r0, v1.29.10-r0, v1.30.6-r0, v1.31.1-r0, or later, when using a dedicated load balancer, you can select a maximum of five IP address groups at a time for access control.
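The effect of the trustlist and blocklist modes above can be reasoned about with a small Python sketch built on the standard `ipaddress` module. The mode names (`allow_all`, `trustlist`, `blocklist`) and the function itself are assumptions for illustration; ELB evaluates IP address groups on its own side.

```python
import ipaddress


def is_allowed(client_ip, mode, ip_group=()):
    """Decide whether a client address passes an access control mode.

    ip_group is an iterable of CIDR blocks or single addresses.
    """
    address = ipaddress.ip_address(client_ip)
    in_group = any(
        address in ipaddress.ip_network(entry, strict=False) for entry in ip_group
    )
    if mode == "allow_all":
        return True
    if mode == "trustlist":
        return in_group       # only listed addresses may connect
    if mode == "blocklist":
        return not in_group   # listed addresses are rejected
    raise ValueError(f"unknown access control mode: {mode}")
```

With the group `["10.0.0.0/24"]`, the address 10.0.0.5 is admitted by a trustlist and rejected by a blocklist.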

- Advanced Options

| Configuration | Description | Constraint |
| --- | --- | --- |
| Transfer Listener Port Number | If this function is enabled, the listening port on the load balancer can be transferred to backend servers through the HTTP header of the packet. | This parameter is available only after HTTP/HTTPS is enabled on the listener port of a dedicated load balancer. |
| Transfer Port Number in the Request | If this function is enabled, the source port of the client can be transferred to backend servers through the HTTP header of the packet. | This parameter is available only after HTTP/HTTPS is enabled on the listener port of a dedicated load balancer. |
| Rewrite X-Forwarded-Host | If this function is enabled, X-Forwarded-Host will be rewritten using the Host field in the client request header and transferred to backend servers. | This parameter is available only after HTTP/HTTPS is enabled on the listener port of a dedicated load balancer. |
| Rewrite X-Real-IP | If this function is enabled, the source IP address of the client will be rewritten into the X-Real-IP header and transferred to backend servers. | This parameter is available only after HTTP/HTTPS is enabled on the listener port of a dedicated load balancer. |
| Data Compression | If this function is enabled, specific files will be compressed. If it is not enabled, files will not be compressed. | This parameter is available only after HTTP/HTTPS is enabled on the listener port of a dedicated load balancer. |
| Idle Timeout (s) | The duration for which a client connection can remain idle before being terminated. If there are no requests reaching the load balancer during this period, the load balancer will disconnect the connection from the client and establish a new connection when there is a new request. | This configuration is not supported if the port of a shared load balancer uses UDP. |
| Request Timeout (s) | The duration within which a request from a client must be received. There are two cases:<br>- If the client fails to send a request header to the load balancer during this period, the request will be interrupted.<br>- If the interval between two consecutive request bodies reaching the load balancer exceeds the timeout duration, the connection will be disconnected. | This parameter is available only after HTTP/HTTPS is enabled on ports. |
| Response Timeout (s) | The duration within which a response from the backend server is expected. If the backend server does not respond within this period after receiving a request, the load balancer will stop waiting and return an HTTP 504 error. | This parameter is available only after HTTP/HTTPS is enabled on ports. |
| Enable HTTP/2 | Whether to enable HTTP/2 for HTTPS requests between the client and the load balancer. Request forwarding using HTTP/2 improves access performance between your application and the load balancer. However, the load balancer still uses HTTP/1.x to forward requests to the backend server. | This parameter is available only after HTTPS is enabled on ports. |


- Annotation: LoadBalancer Services expose advanced CCE functions via annotations. For details, see Configuring Advanced Load Balancing Functions Using Annotations.

- Click OK.

You can configure LoadBalancer Service access using kubectl when creating a workload. If you already have a load balancer in the same VPC and want to use it as the application access entry when creating a Service in the cluster, perform the following steps to associate the load balancer with the Service:

- Use kubectl to access the cluster. For details, see Accessing a Cluster Using kubectl.

- Create and edit the nginx-deployment.yaml file to configure the sample workload. For details, see Creating a Deployment. nginx-deployment.yaml is an example file name. You can rename it as needed.

vi nginx-deployment.yaml

File content:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nginx
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
          - image: nginx:latest
            name: nginx
          imagePullSecrets:
          - name: default-secret

- Create and edit the nginx-elb-svc.yaml file to configure Service parameters. nginx-elb-svc.yaml is an example file name. You can rename it as needed.

vi nginx-elb-svc.yaml

File content:

    apiVersion: v1
    kind: Service
    metadata:
      name: nginx
      annotations:
        kubernetes.io/elb.id: <your_elb_id>                    # Load balancer ID. Replace it with the actual value.
        kubernetes.io/elb.class: performance                   # Load balancer type
        kubernetes.io/elb.lb-algorithm: ROUND_ROBIN            # Load balancer algorithm
        kubernetes.io/elb.session-affinity-mode: SOURCE_IP     # The sticky session type is source IP address.
        kubernetes.io/elb.session-affinity-option: '{"persistence_timeout": "30"}'  # Stickiness duration, which is measured in minutes
        kubernetes.io/elb.health-check-flag: 'on'              # Enable ELB health check.
        kubernetes.io/elb.health-check-option: '{
          "protocol":"TCP",
          "delay":"5",
          "timeout":"10",
          "max_retries":"3"
        }'
    spec:
      selector:
        app: nginx
      ports:
      - name: service0
        port: 80          # Port for accessing the Service, which is also the listener port on the load balancer.
        protocol: TCP
        targetPort: 80    # Port used by a Service to access the target container. This port is closely related to the applications running in a container.
        nodePort: 31128   # Port number of the node. If this parameter is not specified, a random port number ranging from 30000 to 32767 is generated.
      type: LoadBalancer

To enable sticky sessions, ensure anti-affinity is configured for the workload pods so that the pods are deployed onto different nodes. For details, see Configuring Workload Affinity or Anti-affinity Scheduling (podAffinity or podAntiAffinity).

The preceding example uses annotations to implement some advanced functions of load balancing, such as sticky session and health check. For details, see Table 3.

For more annotations and examples related to advanced functions, see Configuring Advanced Load Balancing Functions Using Annotations.
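When Service manifests are generated by a script, the annotation rules for an existing load balancer (allowed values, the conflict between sticky sessions and SOURCE_IP, and the value ranges for the sticky session and health check options) can be enforced before the YAML is written. The following Python sketch is one possible way to do that. The helper name and its arguments are hypothetical; only the annotation keys, option names, and value ranges come from this document.

```python
import json

ALGORITHMS = {"ROUND_ROBIN", "LEAST_CONNECTIONS", "SOURCE_IP"}
ELB_CLASSES = {"union", "performance"}
HEALTH_PROTOCOLS = {"TCP", "UDP", "HTTP"}


def existing_elb_annotations(elb_id, elb_class, algorithm="ROUND_ROBIN",
                             sticky_timeout_min=None, health_check=None):
    """Build annotations for a Service that uses an existing load balancer.

    health_check is a dict with optional keys protocol, delay, timeout,
    max_retries, and path; pass None to switch the health check off.
    """
    if not elb_id:
        raise ValueError("kubernetes.io/elb.id is mandatory here")
    if elb_class not in ELB_CLASSES:
        raise ValueError(f"unsupported elb.class: {elb_class}")
    if algorithm not in ALGORITHMS:
        raise ValueError(f"unsupported lb-algorithm: {algorithm}")

    annotations = {
        "kubernetes.io/elb.id": elb_id,
        "kubernetes.io/elb.class": elb_class,
        "kubernetes.io/elb.lb-algorithm": algorithm,
    }

    if sticky_timeout_min is not None:
        # Sticky sessions cannot be combined with the source IP hash algorithm.
        if algorithm == "SOURCE_IP":
            raise ValueError("sticky sessions cannot be enabled with SOURCE_IP")
        if not 1 <= sticky_timeout_min <= 60:
            raise ValueError("persistence_timeout must be 1 to 60 (minutes)")
        annotations["kubernetes.io/elb.session-affinity-mode"] = "SOURCE_IP"
        annotations["kubernetes.io/elb.session-affinity-option"] = json.dumps(
            {"persistence_timeout": str(sticky_timeout_min)}
        )

    if health_check is None:
        annotations["kubernetes.io/elb.health-check-flag"] = "off"
        return annotations

    protocol = health_check.get("protocol", "TCP")
    delay = int(health_check.get("delay", 5))
    timeout = int(health_check.get("timeout", 10))
    max_retries = int(health_check.get("max_retries", 3))
    if protocol not in HEALTH_PROTOCOLS:
        raise ValueError(f"unsupported health check protocol: {protocol}")
    if not (1 <= delay <= 50 and 1 <= timeout <= 50 and 1 <= max_retries <= 10):
        raise ValueError("health check value out of range")

    # The option fields are typed as String, so numbers are sent as strings.
    option = {
        "protocol": protocol,
        "delay": str(delay),
        "timeout": str(timeout),
        "max_retries": str(max_retries),
    }
    if protocol == "HTTP":
        path = health_check.get("path", "/")
        if not path.startswith("/") or not 1 <= len(path) <= 80:
            raise ValueError("path must start with / and contain 1 to 80 characters")
        option["path"] = path

    annotations["kubernetes.io/elb.health-check-flag"] = "on"
    annotations["kubernetes.io/elb.health-check-option"] = json.dumps(option)
    return annotations
```

The returned dictionary can be placed under `metadata.annotations` of the Service manifest. Serializing the option objects with `json.dumps` avoids hand-written quoting mistakes in the annotation strings.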

Table 3 annotations parameters

| Parameter | Mandatory | Type | Description |
| --- | --- | --- | --- |
| kubernetes.io/elb.id | Yes | String | ID of a load balancer. Mandatory when an existing load balancer is associated.<br>How to obtain: On the management console, click Service List, and choose Networking > Elastic Load Balance. Click the name of the target load balancer. On the Summary tab page, find and copy the ID.<br>NOTE: The system preferentially connects to the load balancer based on the kubernetes.io/elb.id field. If this field is not specified, the spec.loadBalancerIP field is used (optional and available only in 1.23 and earlier versions). Do not use the spec.loadBalancerIP field to connect to the load balancer. This field will be discarded by Kubernetes. For details, see Deprecation. |
| kubernetes.io/elb.class | Yes | String | Select a proper load balancer type. Options:<br>- union: shared load balancer<br>- performance: dedicated load balancer, which can be used only in clusters of v1.17 and later. For details, see Differences Between Shared and Dedicated Load Balancers. |
| kubernetes.io/elb.lb-algorithm | No | String | Specifies the load balancing algorithm of the backend server group. The default value is ROUND_ROBIN. Options:<br>- ROUND_ROBIN: weighted round robin algorithm<br>- LEAST_CONNECTIONS: weighted least connections algorithm<br>- SOURCE_IP: source IP hash algorithm<br>NOTE: If this parameter is set to SOURCE_IP, the weight setting (weight field) of backend servers bound to the backend server group is invalid, and sticky session cannot be enabled. |
| kubernetes.io/elb.session-affinity-mode | No | String | Source IP address-based sticky session means that access requests from the same IP address are forwarded to the same backend server.<br>- To disable sticky sessions, leave this parameter unconfigured.<br>- To enable sticky sessions, ensure the frontend protocol is TCP or UDP and set the sticky session type to SOURCE_IP.<br>NOTE: When kubernetes.io/elb.lb-algorithm is set to SOURCE_IP (source IP hash), sticky session cannot be enabled. |
| kubernetes.io/elb.session-affinity-option | No | Table 4 object | Sticky session timeout. |
| kubernetes.io/elb.health-check-flag | No | String | Whether to enable the ELB health check.<br>- Enabling health check: Leave this parameter blank or set it to on.<br>- Disabling health check: Set this parameter to off.<br>If this parameter is enabled, the kubernetes.io/elb.health-check-option field must also be specified. |
| kubernetes.io/elb.health-check-option | No | Table 5 object | ELB health check configuration items. |

Table 4 elb.session-affinity-option data structure

| Parameter | Mandatory | Type | Description |
| --- | --- | --- | --- |
| persistence_timeout | Yes | String | Sticky session timeout, in minutes. This parameter is valid only when elb.session-affinity-mode is set to SOURCE_IP.<br>Value range: 1 to 60. Default value: 60 |

Table 5 elb.health-check-option data structure

| Parameter | Mandatory | Type | Description |
| --- | --- | --- | --- |
| delay | No | String | Health check interval, in seconds.<br>Value range: 1 to 50. Default value: 5 |
| timeout | No | String | Health check timeout, in seconds.<br>Value range: 1 to 50. Default value: 10 |
| max_retries | No | String | Maximum number of health check retries.<br>Value range: 1 to 10. Default value: 3 |
| protocol | No | String | Health check protocol.<br>Options: TCP, UDP, or HTTP |
| path | No | String | Health check URL. This parameter needs to be configured when the protocol is HTTP.<br>Default value: /<br>Value range: 1 to 80 characters |

- Create a workload.

kubectl create -f nginx-deployment.yaml

If information similar to the following is displayed, the workload has been created:

deployment/nginx created

Check the created workload.

kubectl get pod

If information similar to the following is displayed, the workload is running:

    NAME                     READY     STATUS    RESTARTS   AGE
    nginx-2601814895-znhbr   1/1       Running   0          15s

- Create a Service.

kubectl create -f nginx-elb-svc.yaml

If information similar to the following is displayed, the Service has been created:

service/nginx created

View the created Service.

kubectl get svc

If information similar to the following is displayed, the workload's access mode has been configured:

    NAME         TYPE           CLUSTER-IP       EXTERNAL-IP    PORT(S)        AGE
    kubernetes   ClusterIP      10.247.0.1       <none>         443/TCP        3d
    nginx        LoadBalancer   10.247.130.196   10.78.42.242   80:31540/TCP   51s

- Enter the URL in the address box of the browser, for example, 10.78.42.242:80. 10.78.42.242 indicates the IP address of the load balancer, and 80 indicates the access port displayed on the CCE console.

If the Nginx welcome page is displayed, Nginx is accessible.

Figure 3 Accessing Nginx through the LoadBalancer Service
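In scripted checks, the access address can be derived from the `kubectl get svc` output instead of being read by eye. The following Python sketch parses the default table output shown in the steps above and returns `EXTERNAL-IP:service-port` for each LoadBalancer Service. The function name is hypothetical, and the parsing assumes the default column layout with no spaces inside a column value.

```python
def access_addresses(kubectl_output):
    """Return {service name: "EXTERNAL-IP:port"} for LoadBalancer Services."""
    lines = kubectl_output.strip().splitlines()
    header = lines[0].split()
    result = {}
    for line in lines[1:]:
        row = dict(zip(header, line.split()))
        if row.get("TYPE") != "LoadBalancer":
            continue
        external_ip = row["EXTERNAL-IP"].split(",")[0]  # first address if several
        if external_ip in ("<none>", "<pending>"):
            continue  # the load balancer has no address yet
        # PORT(S) looks like "80:31540/TCP"; the part before ":" is the Service port.
        first_port = row["PORT(S)"].split(",")[0]
        service_port = first_port.split("/")[0].split(":")[0]
        result[row["NAME"]] = f"{external_ip}:{service_port}"
    return result
```

For the sample output in this procedure, the function yields `10.78.42.242:80` for the nginx Service and skips the ClusterIP Service.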

You can configure LoadBalancer Service access using kubectl when creating a workload. If no load balancer is available when you create a Service, perform the following operations to automatically create a load balancer and associate it with the Service:

- Use kubectl to access the cluster. For details, see Accessing a Cluster Using kubectl.

- Create and edit the nginx-deployment.yaml file to configure the sample workload. For details, see Creating a Deployment. nginx-deployment.yaml is an example file name. You can rename it as needed.

vi nginx-deployment.yaml

File content:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nginx
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
          - image: nginx:latest
            name: nginx
          imagePullSecrets:
          - name: default-secret

- Create and edit the nginx-elb-svc.yaml file to configure Service parameters. nginx-elb-svc.yaml is an example file name. You can rename it as needed.

vi nginx-elb-svc.yaml

To enable sticky sessions, ensure anti-affinity is configured for the workload pods so that the pods are deployed onto different nodes. For details, see Configuring Workload Affinity or Anti-affinity Scheduling (podAffinity or podAntiAffinity).

Example of a Service using a public network shared load balancer:

    apiVersion: v1
    kind: Service
    metadata:
      annotations:
        kubernetes.io/elb.class: union
        kubernetes.io/elb.autocreate: '{
          "type": "public",
          "bandwidth_name": "cce-bandwidth-1551163379627",
          "bandwidth_chargemode": "bandwidth",
          "bandwidth_size": 5,
          "bandwidth_sharetype": "PER",
          "vip_subnet_cidr_id": "*****",
          "vip_address": "**.**.**.**",
          "eip_type": "5_bgp"
        }'
        kubernetes.io/elb.enterpriseID: '0'                    # ID of the enterprise project that the load balancer belongs to
        kubernetes.io/elb.lb-algorithm: ROUND_ROBIN            # Load balancer algorithm
        kubernetes.io/elb.session-affinity-mode: SOURCE_IP     # The sticky session type is source IP address.
        kubernetes.io/elb.session-affinity-option: '{"persistence_timeout": "30"}'  # Stickiness duration, which is measured in minutes
        kubernetes.io/elb.health-check-flag: 'on'              # Enable ELB health check.
        kubernetes.io/elb.health-check-option: '{
          "protocol":"TCP",
          "delay":"5",
          "timeout":"10",
          "max_retries":"3"
        }'
        kubernetes.io/elb.tags: key1=value1,key2=value2        # ELB resource tags
      labels:
        app: nginx
      name: nginx
    spec:
      ports:
      - name: service0
        port: 80
        protocol: TCP
        targetPort: 80
      selector:
        app: nginx
      type: LoadBalancer

Example of a Service using a public network dedicated load balancer (only for clusters of v1.17 and later):

    apiVersion: v1
    kind: Service
    metadata:
      name: nginx
      labels:
        app: nginx
      namespace: default
      annotations:
        kubernetes.io/elb.class: performance
        kubernetes.io/elb.autocreate: '{
          "type": "public",
          "bandwidth_name": "cce-bandwidth-1626694478577",
          "bandwidth_chargemode": "bandwidth",
          "bandwidth_size": 5,
          "bandwidth_sharetype": "PER",
          "eip_type": "5_bgp",
          "vip_subnet_cidr_id": "*****",
          "vip_address": "**.**.**.**",
          "elb_virsubnet_ids": ["*****"],
          "ipv6_vip_virsubnet_id": "*****",
          "available_zone": [
            ""
          ],
          "l4_flavor_name": "L4_flavor.elb.s1.small"
        }'
        kubernetes.io/elb.enterpriseID: '0'                    # ID of the enterprise project that the load balancer belongs to
        kubernetes.io/elb.lb-algorithm: ROUND_ROBIN            # Load balancer algorithm
        kubernetes.io/elb.session-affinity-mode: SOURCE_IP     # The sticky session type is source IP address.
        kubernetes.io/elb.session-affinity-option: '{"persistence_timeout": "30"}'  # Stickiness duration, which is measured in minutes
        kubernetes.io/elb.health-check-flag: 'on'              # Enable ELB health check.
        kubernetes.io/elb.health-check-option: '{
          "protocol":"TCP",
          "delay":"5",
          "timeout":"10",
          "max_retries":"3"
        }'
        kubernetes.io/elb.tags: key1=value1,key2=value2        # ELB resource tags
    spec:
      selector:
        app: nginx
      ports:
      - name: cce-service-0
        targetPort: 80
        nodePort: 0
        port: 80
        protocol: TCP
      type: LoadBalancer

The preceding examples use annotations to implement some advanced functions of load balancing, such as sticky session and health check. For details, see Table 6.

For more annotations and examples related to advanced functions, see Configuring Advanced Load Balancing Functions Using Annotations.
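Because the value of kubernetes.io/elb.autocreate is a JSON object embedded in a string, generating it programmatically can help avoid quoting errors. The sketch below builds the annotation pair for a shared or dedicated load balancer using only field names that appear in the examples in this section. The helper name, its arguments, and the rule that a public load balancer needs a bandwidth name are assumptions of this sketch, not documented requirements.

```python
import json


def autocreate_annotations(elb_class, public, bandwidth_name=None,
                           bandwidth_size=5, available_zones=None,
                           backend_subnet_ids=None, l4_flavor_name=None):
    """Build the elb.class and elb.autocreate annotations for a new load balancer."""
    if elb_class not in ("union", "performance"):
        raise ValueError(f"unsupported elb.class: {elb_class}")

    config = {"type": "public" if public else "inner"}
    if public:
        # Assumption of this sketch: an EIP bandwidth name is always supplied.
        if not bandwidth_name:
            raise ValueError("a public load balancer needs a bandwidth name")
        config.update({
            "bandwidth_name": bandwidth_name,
            "bandwidth_chargemode": "bandwidth",
            "bandwidth_size": bandwidth_size,
            "bandwidth_sharetype": "PER",
            "eip_type": "5_bgp",
        })
    if elb_class == "performance":
        # Fields that appear only in the dedicated load balancer examples.
        config["available_zone"] = list(available_zones or [])
        config["elb_virsubnet_ids"] = list(backend_subnet_ids or [])
        if l4_flavor_name:
            config["l4_flavor_name"] = l4_flavor_name

    # kubernetes.io/elb.id is deliberately not set: it must not be combined
    # with kubernetes.io/elb.autocreate.
    return {
        "kubernetes.io/elb.class": elb_class,
        "kubernetes.io/elb.autocreate": json.dumps(config),
    }
```

The result can be merged into `metadata.annotations` together with the optional annotations for the algorithm, sticky session, and health check.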

Table 6 annotations parameters

Parameter

Mandatory

Type

Description

kubernetes.io/elb.class

Yes

String

Select a proper load balancer type.

Options:

- union: shared load balancer

- performance: dedicated load balancer, which can be used only in clusters of v1.17 and later. For details, see Differences Between Shared and Dedicated Load Balancers.

The default value is union.

kubernetes.io/elb.autocreate

Yes

elb.autocreate object

Whether to automatically create a load balancer associated with the Service.

CAUTION: kubernetes.io/elb.id and kubernetes.io/elb.autocreate cannot be specified at the same time.

If both annotations are specified in a Service, CCE will use an existing load balancer instead of automatically creating one. If this Service is deleted, the existing load balancer is considered as an automatically created one. If no other resources are associated with the load balancer, the load balancer will also be deleted.

Example:

- Automatically created dedicated load balancer with an EIP bound:

'{"type":"public","bandwidth_name":"cce-bandwidth-1741230802502","bandwidth_chargemode":"bandwidth","bandwidth_size":5,"bandwidth_sharetype":"PER","eip_type":"5_bgp","available_zone":["*****"],"elb_virsubnet_ids":["*****"],"l7_flavor_name":"","l4_flavor_name":"L4_flavor.elb.pro.max","vip_subnet_cidr_id":"*****"}'

- Automatically created dedicated load balancer with no EIP bound:

'{"type":"inner","available_zone":["*****"],"elb_virsubnet_ids":["*****"],"l7_flavor_name":"","l4_flavor_name":"L4_flavor.elb.pro.max","vip_subnet_cidr_id":"*****"}'

- Automatically created shared load balancer with an EIP bound:

'{"type":"public","bandwidth_name":"cce-bandwidth-1551163379627","bandwidth_chargemode":"bandwidth","bandwidth_size":5,"bandwidth_sharetype":"PER","eip_type":"5_bgp","name":"james"}'

- Automatically created shared load balancer with no EIP bound:

kubernetes.io/elb.subnet-id

N/A

String

ID of the subnet where the cluster is located. The value can contain 1 to 100 characters.

- Mandatory when a load balancer is to be automatically created for a cluster of v1.11.7-r0 or earlier.

- Optional for clusters of a version later than v1.11.7-r0.

For details about how to obtain the value, see What Is the Difference Between the VPC Subnet API and the OpenStack Neutron Subnet API?

kubernetes.io/elb.enterpriseID

No

String

This parameter is available in clusters of v1.15 or later. In clusters earlier than v1.15, load balancers are created in the default project by default.

This parameter specifies the ID of the enterprise project where a load balancer is created. It is applicable only to enterprise accounts with enterprise projects enabled. After an enterprise project ID is selected, resources can be created in the ELB enterprise project.

If this parameter is not specified or is set to 0, resources will be bound to the default enterprise project.

How to obtain:

Log in to the EPS console and click the name of the target enterprise project. On the enterprise project details page, find the ID field and copy the ID.

kubernetes.io/elb.lb-algorithm

No

String

Specifies the load balancing algorithm of the backend server group. The default value is ROUND_ROBIN.

Options:

- ROUND_ROBIN: weighted round robin algorithm

- LEAST_CONNECTIONS: weighted least connections algorithm

- SOURCE_IP: source IP hash algorithm

NOTE: If this parameter is set to SOURCE_IP, the weight setting (weight field) of backend servers bound to the backend server group does not take effect, and sticky session cannot be enabled.

kubernetes.io/elb.session-affinity-mode

No

String

Source IP address-based sticky session means that access requests from the same IP address are forwarded to the same backend server.

- To disable sticky sessions, leave this parameter unconfigured.

- To enable sticky sessions, add this parameter and set it to SOURCE_IP, indicating that the sticky session is based on the source IP address.

NOTE: When kubernetes.io/elb.lb-algorithm is set to SOURCE_IP (source IP hash), sticky session cannot be enabled.

kubernetes.io/elb.session-affinity-option

No

Table 4 object

Sticky session timeout.

kubernetes.io/elb.health-check-flag

No

String

Whether to enable the ELB health check.

- Enabling health check: Leave this parameter blank or set it to on.

- Disabling health check: Set this parameter to off.

If this parameter is enabled, the kubernetes.io/elb.health-check-option field must also be specified.

kubernetes.io/elb.health-check-option

No

Table 5 object

ELB health check configuration items.

kubernetes.io/elb.tags

No

String

Whether to add resource tags to a load balancer. This function is available only when the load balancer is automatically created, and the cluster is of v1.23.11-r0, v1.25.6-r0, v1.27.3-r0, or later.

A tag is in the format of "key=value". Use commas (,) to separate multiple tags.
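Several rules in Table 6 depend on each other, so they can be checked before a manifest is applied. The following Python sketch is illustrative only: the function names check_annotations and parse_tags are hypothetical and not part of CCE or kubectl, and the checks cover only the rules stated in Table 6. It assumes that only an explicit 'on' value of kubernetes.io/elb.health-check-flag requires kubernetes.io/elb.health-check-option, which is one possible reading of the table.

```python
PREFIX = "kubernetes.io/elb."
VALID_CLASSES = {"union", "performance"}
VALID_ALGORITHMS = {"ROUND_ROBIN", "LEAST_CONNECTIONS", "SOURCE_IP"}


def parse_tags(value):
    """Split a 'key1=value1,key2=value2' string into a dict."""
    tags = {}
    for item in value.split(","):
        key, sep, val = item.partition("=")
        if not sep or not key:
            raise ValueError(f"tag {item!r} is not in key=value format")
        tags[key] = val
    return tags


def check_annotations(annotations):
    """Return a list of problems found in a Service's ELB annotations."""

    def get(name):
        return annotations.get(PREFIX + name)

    problems = []
    # Table 6 lists union as the default class, so a missing value is accepted here.
    if (get("class") or "union") not in VALID_CLASSES:
        problems.append("elb.class must be union or performance")
    if get("id") is not None and get("autocreate") is not None:
        problems.append("elb.id and elb.autocreate cannot be specified concurrently")
    algorithm = get("lb-algorithm") or "ROUND_ROBIN"
    if algorithm not in VALID_ALGORITHMS:
        problems.append(f"unknown lb-algorithm: {algorithm}")
    if algorithm == "SOURCE_IP" and get("session-affinity-mode"):
        problems.append("sticky session cannot be enabled with the SOURCE_IP algorithm")
    # Assumption: only an explicit 'on' is treated as requiring the option.
    if get("health-check-flag") == "on" and not get("health-check-option"):
        problems.append("health-check-option is required when health-check-flag is on")
    if get("tags"):
        try:
            parse_tags(get("tags"))
        except ValueError as err:
            problems.append(str(err))
    return problems


if __name__ == "__main__":
    conflicting = {
        PREFIX + "class": "union",
        PREFIX + "lb-algorithm": "SOURCE_IP",
        PREFIX + "session-affinity-mode": "SOURCE_IP",
    }
    for problem in check_annotations(conflicting):
        print(problem)
```

An empty list means that none of the checked rules is violated. It does not guarantee that CCE accepts the Service.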

Table 7 elb.autocreate data structure

Parameter

Mandatory

Type

Description

name

No

String

Name of the automatically created load balancer.

The value can contain 1 to 64 characters. Only letters, digits, underscores (_), hyphens (-), and periods (.) are allowed.

Default: cce-lb+service.UID

type

No

String

Network type of the load balancer.

- public: public network load balancer

- inner: private network load balancer

Default: inner

bandwidth_name

Yes for public network load balancers

String

Bandwidth name. The default value is cce-bandwidth-******.

The value can contain 1 to 64 characters. Only letters, digits, underscores (_), hyphens (-), and periods (.) are allowed.

bandwidth_chargemode

Yes for public network load balancers

String

Bandwidth billing mode.

- bandwidth: billed by bandwidth

- traffic: billed by traffic

bandwidth_size

Yes for public network load balancers

Integer

Bandwidth size. The value ranges from 1 Mbit/s to 2000 Mbit/s by default. Configure this parameter based on the bandwidth range allowed in your region.

The minimum increment for bandwidth adjustment varies depending on the bandwidth range.

- The minimum increment is 1 Mbit/s if the allowed bandwidth does not exceed 300 Mbit/s.

- The minimum increment is 50 Mbit/s if the allowed bandwidth ranges from 300 Mbit/s to 1000 Mbit/s.

- The minimum increment is 500 Mbit/s if the allowed bandwidth exceeds 1000 Mbit/s.

bandwidth_sharetype

Yes for public network load balancers

String

Bandwidth sharing mode.

- PER: dedicated bandwidth

eip_type

Yes for public network load balancers

String

EIP type.

- 5_telcom: China Telecom

- 5_union: China Unicom

- 5_bgp: dynamic BGP

- 5_sbgp: static BGP

The types vary by region. For details, see the EIP console.

vip_subnet_cidr_id

No

String

The ID of the IPv4 subnet where the load balancer resides. This subnet is used to allocate IP addresses for the load balancer to provide external services. The IPv4 subnet must belong to the cluster's VPC.

If this parameter is not specified, the load balancer and the cluster will be in the same subnet by default.

This field can be specified only for clusters of v1.21 or later.

How to obtain:

Log in to the VPC console. In the navigation pane, choose Subnets. Filter the target subnet by the cluster's VPC name, click the subnet name, and copy the IPv4 Subnet ID on the Summary tab.

ipv6_vip_virsubnet_id

No

String

The ID of the IPv6 subnet where the load balancer is deployed. IPv6 must be enabled for the subnet.

This parameter is available only for dedicated load balancers.

How to obtain:

Log in to the VPC console. In the navigation pane, choose Subnets. Filter the target subnet by the cluster's VPC name, click the subnet name, and copy the Network ID on the Summary tab.

elb_virsubnet_ids

No

Array of strings

The network ID of the subnet where the load balancer is located. This subnet is used to allocate IP addresses for accessing the backend server. If this parameter is not specified, the subnet specified by vip_subnet_cidr_id will be used by default. Load balancers occupy varying numbers of subnet IP addresses based on their specifications. Do not use the subnet CIDR blocks of other resources (such as clusters or nodes) as the load balancer's CIDR block.

This parameter is available only for dedicated load balancers.

Example:

"elb_virsubnet_ids": [ "14567f27-8ae4-42b8-ae47-9f847a4690dd" ]

How to obtain:

Log in to the VPC console. In the navigation pane, choose Subnets. Filter the target subnet by the cluster's VPC name, click the subnet name, and copy the Network ID on the Summary tab.

vip_address

No

String

Private IP address of the load balancer. Only IPv4 addresses are supported.

The IP address must be in the ELB CIDR block. If this parameter is not specified, an IP address will be automatically assigned from the ELB CIDR block.

This parameter is available only in clusters of v1.23.11-r0, v1.25.6-r0, v1.27.3-r0, or later versions.

available_zone

Yes

Array of strings

AZ where the load balancer is located.

You can obtain all supported AZs by getting the AZ list.

This parameter is available only for dedicated load balancers.

l4_flavor_name

No

String

Flavor name of the Layer 4 load balancer. This parameter is mandatory when TCP, TLS, or UDP is used.

You can obtain all supported types by getting the flavor list.

This parameter is available only for dedicated load balancers.

l7_flavor_name

No

String

Flavor name of the Layer 7 load balancer. This parameter is mandatory when HTTP is used.

You can obtain all supported types by getting the flavor list.

This parameter is available only for dedicated load balancers. Its value must match that of l4_flavor_name, both of which must be either elastic flavors or fixed flavors.
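The value of kubernetes.io/elb.autocreate is a JSON string, so it can be generated instead of written by hand. The following Python sketch builds the string from the fields in Table 7 and checks the fields that are mandatory for public network load balancers. The function names build_autocreate and check_bandwidth_size and the bandwidth name cce-bandwidth-example are hypothetical. The bandwidth check uses the default range of 1 Mbit/s to 2000 Mbit/s and interprets the minimum increments as multiples of 50 or 500, which may differ from the limits in your region. Fields specific to dedicated load balancers, such as available_zone, are not validated.

```python
import json

# Fields that Table 7 marks as mandatory for public network load balancers.
PUBLIC_REQUIRED = (
    "bandwidth_name",
    "bandwidth_chargemode",
    "bandwidth_size",
    "bandwidth_sharetype",
    "eip_type",
)


def check_bandwidth_size(size):
    """Validate a bandwidth size in Mbit/s against the default range and increments."""
    if not 1 <= size <= 2000:
        raise ValueError("bandwidth_size must be between 1 and 2000 Mbit/s")
    if size <= 300:
        step = 1
    elif size <= 1000:
        step = 50
    else:
        step = 500
    # Assumption: valid sizes in each range are multiples of the minimum increment.
    if size % step != 0:
        raise ValueError(f"bandwidth_size {size} is not a multiple of {step} Mbit/s")


def build_autocreate(**fields):
    """Serialize elb.autocreate fields to the JSON string used as the annotation value."""
    lb_type = fields.setdefault("type", "inner")  # Table 7 default
    if lb_type not in ("public", "inner"):
        raise ValueError("type must be public or inner")
    if lb_type == "public":
        missing = [key for key in PUBLIC_REQUIRED if key not in fields]
        if missing:
            raise ValueError("missing fields for a public load balancer: " + ", ".join(missing))
        check_bandwidth_size(fields["bandwidth_size"])
    return json.dumps(fields, separators=(",", ":"))


if __name__ == "__main__":
    print(build_autocreate(
        type="public",
        bandwidth_name="cce-bandwidth-example",  # hypothetical name
        bandwidth_chargemode="bandwidth",
        bandwidth_size=5,
        bandwidth_sharetype="PER",
        eip_type="5_bgp",
    ))
```

The printed string can be pasted as the single-quoted value of the kubernetes.io/elb.autocreate annotation.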

- Create a workload.

kubectl create -f nginx-deployment.yaml

If information similar to the following is displayed, the workload has been created:

deployment/nginx created

Check the created workload.

kubectl get pod

If information similar to the following is displayed, the workload is running:

NAME                     READY     STATUS    RESTARTS   AGE
nginx-2601814895-znhbr   1/1       Running   0          15s

- Create a Service.

kubectl create -f nginx-elb-svc.yaml

If information similar to the following is displayed, the Service has been created:

service/nginx created

View the created Service.

kubectl get svc

If information similar to the following is displayed, the workload's access mode has been configured:

NAME         TYPE           CLUSTER-IP       EXTERNAL-IP    PORT(S)        AGE
kubernetes   ClusterIP      10.247.0.1       <none>         443/TCP        3d
nginx        LoadBalancer   10.247.130.196   10.78.42.242   80:31540/TCP   51s

- Enter the URL in the address box of the browser, for example, 10.78.42.242:80. In this example, 10.78.42.242 is the IP address of the load balancer, and 80 is the access port displayed on the CCE console.

Nginx is accessible.

Figure 4 Accessing Nginx through the LoadBalancer Service
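The access address used in the last step can also be derived from the Service object instead of being read from the table output. The following Python sketch assumes that the Service was exported with kubectl get svc nginx -o json and reads the standard status.loadBalancer.ingress and spec.ports fields. The function name access_addresses is hypothetical, and the sample object only mirrors the values shown in the example output above.

```python
import json


def access_addresses(service):
    """Return '{ip}:{port}' strings for a Service object parsed from JSON."""
    ingress = service.get("status", {}).get("loadBalancer", {}).get("ingress", [])
    ports = service.get("spec", {}).get("ports", [])
    return [
        f"{entry['ip']}:{port['port']}"
        for entry in ingress
        if "ip" in entry  # some load balancers report a hostname instead of an IP
        for port in ports
    ]


# Trimmed sample that mirrors the nginx Service from the example output.
sample = json.loads("""
{
  "spec": {
    "type": "LoadBalancer",
    "ports": [{"name": "cce-service-0", "port": 80, "targetPort": 80, "nodePort": 31540}]
  },
  "status": {"loadBalancer": {"ingress": [{"ip": "10.78.42.242"}]}}
}
""")

if __name__ == "__main__":
    print(access_addresses(sample))
```

An empty list indicates that no load balancer IP address has been assigned to the Service yet.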

Helpful Links

- LoadBalancer Services provide Layer 4 network access. If you need Layer 7 network access, create an ingress.

- If workloads in a cluster cannot be accessed through a LoadBalancer Service, you can rectify the fault by referring to How Do I Resolve Issues with a LoadBalancer Service?

- To configure more advanced features, see Configuring Advanced Load Balancing Functions Using Annotations.

- For details about the Kubernetes Service API, see the Kubernetes documentation.
