Configuring Node Fault Detection Policies

Node fault detection depends on the CCE Node Problem Detector add-on. An add-on pod runs on each node and monitors it for faults. This section describes how to enable node fault detection.

Prerequisites

The CCE Node Problem Detector add-on has been installed in the cluster.

Enabling Node Fault Detection

- Log in to the CCE console and click the cluster name to access the cluster console.

- In the navigation pane, choose Nodes and click the Nodes tab. Verify that the CCE Node Problem Detector add-on is installed in the cluster and has been upgraded to the latest version. Fault detection is available only after both conditions are met.

- When this add-on is running normally, click Fault Detection Policies to check the current fault detection items. For more details, see NPD Check Items.

- Check the node list for any abnormal metrics.

- Click Abnormal metrics and rectify the fault as prompted.

Custom Check Items

- Log in to the CCE console and click the cluster name to access the cluster console.

- In the navigation pane, choose Nodes and then click the Nodes tab. Then, click Fault Detection Policies above the node list.

- On the displayed page, view the current check items. To modify a check item, click Edit in its Operation column.

Currently, the following configurations are supported:

- Enable/Disable: Enable or disable a check item.

- Target Node: By default, check items are executed on all nodes. You can specify node labels so that a check item runs only on nodes that meet all the specified conditions. For example, the spot ECS interruption reclamation check item is executed only on spot ECS nodes, which you can select with the node label cce.io/is-spot.

- Check Interval: The default check interval is 30 seconds. You can change the value as required.

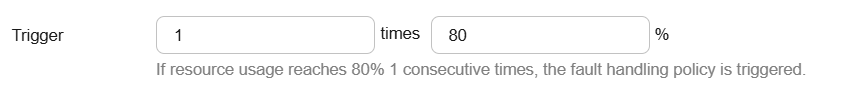

- Trigger: The CCE Node Problem Detector add-on provides default thresholds that match common fault scenarios. The type of threshold varies with the check item, for example, a number of failures or a resource usage percentage. You can change a threshold as required, for example, lower the resource usage percentage from 90% to 80%.

- Policy: Select how a detected fault is handled. The available strategies are listed in the following table.

Table 1 Troubleshooting strategies

| Troubleshooting Strategy | Effect |
| --- | --- |
| Report exception | Kubernetes events are reported. |
| Disabling scheduling | Kubernetes events are reported and the NoSchedule taint is added to the node. |
| Evict workload | Kubernetes events are reported and the NoExecute taint is added to the node. This operation will evict workloads on the node and interrupt services. Exercise caution when performing this operation. |
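The Target Node setting described above can be pictured as a label match in which a node qualifies only if it carries every required label. The following Python sketch illustrates that idea only; it is not the add-on's implementation. The function names, the node names, and the label value "true" for cce.io/is-spot are assumptions made for the example.

```python
from typing import Dict, List

def matches_target(node_labels: Dict[str, str], required: Dict[str, str]) -> bool:
    """Return True only if the node has every required label with the expected value."""
    return all(node_labels.get(key) == value for key, value in required.items())

def select_nodes(nodes: Dict[str, Dict[str, str]], required: Dict[str, str]) -> List[str]:
    """Return the names of nodes on which a check item would run."""
    return [name for name, labels in nodes.items() if matches_target(labels, required)]

# Hypothetical nodes; the label value "true" is an assumption for illustration.
nodes = {
    "node-a": {"cce.io/is-spot": "true", "zone": "az1"},
    "node-b": {"zone": "az1"},
}

# An empty filter mirrors the default behavior: the check runs on all nodes.
all_nodes = select_nodes(nodes, {})
# Filtering on the spot label keeps only the spot ECS node.
spot_only = select_nodes(nodes, {"cce.io/is-spot": "true"})
print(all_nodes, spot_only)
```

With no labels specified, every node is selected, which corresponds to the default of running check items on all nodes.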

NPD Check Items

Check items are supported only in 1.16.0 and later versions.

Check items cover events and statuses.

- Event-related

For event-related check items, when a problem occurs, NPD reports an event to the API server. The event type can be Normal (normal event) or Warning (abnormal event).

Table 2 Event-related check items

| Check Item | Function | Description |
| --- | --- | --- |
| OOMKilling | Listen to the kernel logs and check whether OOM events occur and are reported. Typical scenario: The memory used by the process in the container exceeds the limit, triggering OOM and terminating the process. | Warning event; listening object: /dev/kmsg; matching rule: "Killed process \\d+ (.+) total-vm:\\d+kB, anon-rss:\\d+kB, file-rss:\\d+kB.*" |
| TaskHung | Listen to the kernel logs and check whether taskHung events occur and are reported. Typical scenario: Disk I/O suspension causes process suspension. | Warning event; listening object: /dev/kmsg; matching rule: "task \\S+:\\w+ blocked for more than \\w+ seconds\\." |
| ReadonlyFilesystem | Check whether the Remount root filesystem read-only error occurs in the system kernel by listening to the kernel logs. Typical scenario: A user detaches a data disk from a node by mistake on the ECS, and applications continuously write data to the mount point of the data disk. As a result, an I/O error occurs in the kernel and the disk is remounted as a read-only disk. NOTE: If the rootfs of node pods is of the device mapper type, an error will occur in the thin pool if a data disk is detached. This will affect NPD and NPD will not be able to detect node faults. | Warning event; listening object: /dev/kmsg; matching rule: Remounting filesystem read-only |
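To see how the matching rules in Table 2 behave, the following Python sketch applies them to kernel log lines. It is an illustration, not NPD's own code. The patterns are the rules from the table with the doubled backslashes reduced to single ones, the sample log lines in the usage note are made up, and the classify helper is a hypothetical name.

```python
import re
from typing import Optional

# Matching rules from Table 2, written as Python raw strings.
RULES = {
    "OOMKilling": re.compile(
        r"Killed process \d+ (.+) total-vm:\d+kB, anon-rss:\d+kB, file-rss:\d+kB.*"
    ),
    "TaskHung": re.compile(r"task \S+:\w+ blocked for more than \w+ seconds\."),
    "ReadonlyFilesystem": re.compile(r"Remounting filesystem read-only"),
}

def classify(line: str) -> Optional[str]:
    """Return the name of the first check item whose rule matches the line, else None."""
    for name, pattern in RULES.items():
        if pattern.search(line):
            return name
    return None

# Made-up kernel log lines, used only to exercise the patterns.
samples = [
    "Out of memory: Killed process 4321 (java) total-vm:2048000kB, "
    "anon-rss:1024000kB, file-rss:0kB, shmem-rss:0kB",
    "INFO: task jbd2/sda1-8:345 blocked for more than 120 seconds.",
    "EXT4-fs (sda1): Remounting filesystem read-only",
    "usb 1-1: new high-speed USB device number 2",
]
for line in samples:
    print(classify(line), "<-", line)
```

Note that in the OOMKilling rule the parentheses form a regular expression group, so the group captures the process name together with its literal parentheses as printed by the kernel.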

- Status-related

For status-related check items, when a problem occurs, NPD reports an event to the API server and changes the node status synchronously. This function can be used together with Node-problem-controller fault isolation to isolate nodes.

If the check period is not specified in the following check items, the default period is 30 seconds.

Table 4 Checking system metrics

| Check Item | Function | Description |
| --- | --- | --- |
| Conntrack table full (ConntrackFullProblem) | Check whether the conntrack table is full. | Default threshold: 90%. Usage: nf_conntrack_count. Maximum value: nf_conntrack_max. |
| Insufficient disk resources (DiskProblem) | Check the usage of the system disk and CCE data disks (including the CRI logical disk and kubelet logical disk) on the node. | Default threshold: 90%. Source: df -h. Currently, additional data disks are not supported. |
| Insufficient file handles (FDProblem) | Check whether the file handles (FDs) are used up. | Default threshold: 90%. Usage: the first value in /proc/sys/fs/file-nr. Maximum value: the third value in /proc/sys/fs/file-nr. |
| Insufficient node memory (MemoryProblem) | Check whether memory is used up. | Default threshold: 80%. Usage: MemTotal-MemAvailable in /proc/meminfo. Maximum value: MemTotal in /proc/meminfo. |
| Insufficient process resources (PIDProblem) | Check whether PID process resources are exhausted. | Default threshold: 90%. Usage: denominator of the fourth value in /proc/loadavg, which is the total number of processes and threads that currently exist on the system. Maximum value: the smaller of /proc/sys/kernel/pid_max and /proc/sys/kernel/threads-max. |
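The usage and maximum values listed in Table 4 can be combined into percentages as in the following Python sketch. It only illustrates the arithmetic under the definitions in the table and is not the add-on's code. The function names are hypothetical, and each function takes the file content as a string so that it can be tried with sample text on any system.

```python
def fd_usage_percent(file_nr: str) -> float:
    """FD usage: first value over third value of /proc/sys/fs/file-nr."""
    fields = file_nr.split()
    return 100.0 * int(fields[0]) / int(fields[2])

def memory_usage_percent(meminfo: str) -> float:
    """Memory usage: (MemTotal - MemAvailable) over MemTotal from /proc/meminfo."""
    values = {}
    for line in meminfo.splitlines():
        key, _, rest = line.partition(":")
        if rest.strip():
            values[key.strip()] = int(rest.split()[0])  # drop the "kB" unit
    total = values["MemTotal"]
    return 100.0 * (total - values["MemAvailable"]) / total

def pid_usage_percent(loadavg: str, pid_max: int, threads_max: int) -> float:
    """PID usage: denominator of the fourth /proc/loadavg field over min(pid_max, threads_max)."""
    total_tasks = int(loadavg.split()[3].split("/")[1])
    return 100.0 * total_tasks / min(pid_max, threads_max)

# Sample contents, made up for illustration.
print(fd_usage_percent("1024\t0\t4096\n"))
print(memory_usage_percent("MemTotal: 8000 kB\nMemFree: 500 kB\nMemAvailable: 2000 kB\n"))
print(pid_usage_percent("0.10 0.20 0.30 2/500 12345\n", pid_max=32768, threads_max=1000))
```

On a Linux node, the same functions could be fed the real file contents, and each result compared with the default threshold for the corresponding check item.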

Table 6 Other check items

| Check Item | Function | Description |
| --- | --- | --- |
| Abnormal NTP (NTPProblem) | Check whether the node clock synchronization service ntpd or chronyd is running properly and whether the system time has drifted. | Default clock offset threshold: 8000 ms |
| Process D error (ProcessD) | Check whether the node has processes in D state. | Default threshold: 10 abnormal processes detected for three consecutive times. Source: /proc/{PID}/stat. Alternatively, you can run the ps aux command. Exceptional scenario: The ProcessD check item ignores the resident D processes (heartbeat and update) on which the SDI driver on the BMS node depends. |
| Process Z error (ProcessZ) | Check whether the node has processes in Z state. | |
| ResolvConf error (ResolvConfFileProblem) | Check whether the ResolvConf file is lost. Check whether the ResolvConf file is normal. | Definition of abnormal: No upstream domain name resolution server (nameserver) is included. Object: /etc/resolv.conf |
| Existing scheduled event (ScheduledEvent) | Check whether scheduled live migration events exist on the node. A live migration plan event is usually triggered by a hardware fault and is an automatic fault rectification method at the IaaS layer. Typical scenario: The host is faulty. For example, the fan is damaged or the disk has bad sectors. As a result, live migration is triggered for VMs. | Source: http://169.254.169.254/meta-data/latest/events/scheduled. This check item is an Alpha feature and is disabled by default. |
| The spot price node is being reclaimed (SpotPriceNodeReclaimNotification) | Check whether a spot price node is being interrupted and reclaimed due to preemption. | Default check interval: 120 seconds. Default fault handling policy: evicts node loads. |
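As an illustration of the ProcessD check item, the following Python sketch reads the process state from the content of /proc/{PID}/stat and applies a "for three consecutive times" rule. It is a sketch under assumptions, not the add-on's implementation. The threshold is read here as "at least 10 D-state processes in each of three consecutive checks", and the names process_state and ConsecutiveThreshold are hypothetical.

```python
def process_state(stat: str) -> str:
    """Return the state letter (for example R, S, D, or Z) from /proc/{PID}/stat content.

    The command name is wrapped in parentheses and may itself contain spaces
    or ')', so the state is the first field after the last ')'.
    """
    return stat[stat.rindex(")") + 1:].split()[0]

class ConsecutiveThreshold:
    """Report a fault only after the count stays at or above a minimum for several checks in a row."""

    def __init__(self, min_count: int = 10, required_rounds: int = 3) -> None:
        self.min_count = min_count
        self.required_rounds = required_rounds
        self.streak = 0

    def observe(self, count: int) -> bool:
        """Record one check result and return True if the fault condition is met."""
        if count >= self.min_count:
            self.streak += 1
        else:
            self.streak = 0  # any healthy check resets the streak
        return self.streak >= self.required_rounds

# Made-up stat lines, truncated after a few fields for brevity.
print(process_state("1234 (my proc) D 1 1234 1234 0"))
detector = ConsecutiveThreshold()
print([detector.observe(n) for n in (10, 12, 11, 3)])
```

Requiring several consecutive observations keeps a short burst of processes in uninterruptible sleep, which is common during heavy I/O, from being reported as a fault.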

The kubelet component has the following default check items, which have bugs or defects. You can fix them by upgrading the cluster or using NPD.

Table 7 Default kubelet check items

| Check Item | Function | Description |
| --- | --- | --- |
| Insufficient PID resources (PIDPressure) | Check whether PIDs are sufficient. | Interval: 10 seconds. Threshold: 90%. Defect: In community version 1.23.1 and earlier versions, this check item becomes invalid when over 65535 PIDs are used. For details, see issue 107107. In community version 1.24 and earlier versions, threads-max is not considered in this check item. |
| Insufficient memory (MemoryPressure) | Check whether the allocatable memory for the containers is sufficient. | Interval: 10 seconds. Threshold: Maximum value – 100 MiB. Allocatable = Total memory of a node – Reserved memory of a node. Defect: This check item checks only the memory consumed by containers, and does not consider that consumed by other elements on the node. |
| Insufficient disk resources (DiskPressure) | Check the disk usage and inode usage of the kubelet and Docker disks. | Interval: 10 seconds. Threshold: 90% |
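The MemoryPressure threshold in Table 7 can be worked through with a small calculation. The following Python sketch shows one reading of the table, in which pressure is reported when the memory consumed by containers exceeds the allocatable memory minus 100 MiB. The function names and the node sizes are assumptions for illustration, and the kubelet's actual evaluation may differ in detail.

```python
MIB = 1024 * 1024

def allocatable_bytes(total: int, reserved: int) -> int:
    """Allocatable = total memory of a node - reserved memory of a node."""
    return total - reserved

def memory_pressure(container_usage: int, total: int, reserved: int,
                    margin: int = 100 * MIB) -> bool:
    """Return True when container memory usage exceeds allocatable memory minus the margin."""
    return container_usage > allocatable_bytes(total, reserved) - margin

# Hypothetical node: 8 GiB total, 1 GiB reserved, so the threshold is 7068 MiB.
total, reserved = 8192 * MIB, 1024 * MIB
print(memory_pressure(7000 * MIB, total, reserved))
print(memory_pressure(7100 * MIB, total, reserved))
```

Because only container usage enters the comparison, memory consumed by other processes on the node does not move the result, which is the defect noted in the table.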
