Configuring HTTP/HTTPS for a LoadBalancer Service

By default, a Layer 4 TCP/UDP listener is created for a LoadBalancer Service. You can also configure HTTP/HTTPS listeners for more refined and diverse task scheduling and to ensure secure access to applications from external networks.

Notes and Constraints

- Clusters must meet version requirements to expose HTTP/HTTPS Services.

Table 1 Scenarios where a load balancer supports HTTP or HTTPS

| ELB Type | Application | Whether to Support HTTP or HTTPS | Description |
|---|---|---|---|
| Shared load balancer | Interconnecting with an existing load balancer | Supported | None |
| Shared load balancer | Automatically creating a load balancer | Supported | None |
| Dedicated load balancer | Interconnecting with an existing load balancer | Supported | Clusters must be of v1.19.16 or later. Requirements on ELB specifications vary depending on the following minor versions:<br>- The ELB must support both network and application types for clusters of a version earlier than v1.19.16-r50, v1.21.11-r10, v1.23.9-r10, v1.27.1-r10, or v1.25.4-r10.<br>- The ELB must support the application type for clusters of v1.19.16-r50, v1.21.11-r10, v1.23.9-r10, v1.25.4-r10, v1.27.1-r10, or later. |
| Dedicated load balancer | Automatically creating a load balancer | Supported | Clusters must be of v1.19.16 or later. Requirements on ELB specifications vary depending on the following minor versions:<br>- The ELB must support both network and application types for clusters of a version earlier than v1.19.16-r50, v1.21.11-r10, v1.23.9-r10, v1.27.1-r10, or v1.25.4-r10.<br>- The ELB must support the application type for clusters of v1.19.16-r50, v1.21.11-r10, v1.23.9-r10, v1.25.4-r10, v1.27.1-r10, or later. |

- Do not connect an ingress and a Service that uses HTTP/HTTPS to the same listener of the same load balancer. Otherwise, a port conflict occurs.

Step 1: Deploy a Sample Application

This section uses an Nginx Deployment as an example.

- Log in to the CCE console and click the cluster name to access the cluster console.

- In the navigation pane, choose Workloads. In the upper right corner, click Create Workload.

- In the Basic Info area, enter the workload name. In this example, the workload name is nginx. Retain the default settings for other parameters.

- In Container Information under Container Settings, specify the image name and tag. Retain the default settings for other parameters.

| Parameter | Example |
|---|---|
| Image Name | Click Select Image. In the displayed dialog box, click Open Source Images under SWR Shared Edition, search for nginx, select it, and click OK. |
| Image Tag | Select the latest image tag. |

- Retain the default settings for other parameters and click Create Workload.
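If you manage workloads declaratively, the console steps above correspond roughly to the following Deployment manifest. This is a minimal sketch, not the exact object the console generates. The replica count, the `nginx:latest` image reference, and the `version: v1` label are assumptions. The label is included only so that the pods also match the `app: nginx` and `version: v1` selector used in the Service manifest example later in this document.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx                 # Workload name used in this example
  namespace: default
spec:
  replicas: 1                 # Assumed value; adjust as needed
  selector:
    matchLabels:
      app: nginx
      version: v1
  template:
    metadata:
      labels:
        app: nginx            # Matched by the Service selector
        version: v1           # Assumed label so the manifest-based Service selector also matches
    spec:
      containers:
      - name: nginx
        image: nginx:latest   # Assumed image reference; the console example selects the latest tag
        ports:
        - containerPort: 80   # Nginx listens on port 80 by default
```

Saved under a file name of your choice (for example, the hypothetical nginx-deployment.yaml), the manifest could be applied with `kubectl apply -f nginx-deployment.yaml`. Whether a bare `nginx:latest` reference can be pulled depends on the registry access available to the cluster.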

Step 2: Create a LoadBalancer Service and Configure HTTP or HTTPS

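Use one of the following methods. The procedures below cover, in order, the new version of the CCE console, the old version of the CCE console, and a Service manifest (YAML).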

In this section, the sample certificate is cert-test. Replace it with the actual one.

- Log in to the CCE console and click the cluster name to access the cluster console.

- In the navigation pane, choose Services & Ingresses. In the upper right corner, click Create Service.

In this example, only mandatory parameters for configuring HTTP/HTTPS are listed. Retain the default settings for other parameters. For details, see Using the CCE Console (New Version).

- Configure basic parameters.

| Parameter | Description | Example |
|---|---|---|
| Service Type | Select LoadBalancer. | None |
| Service Name | Enter a name, which can be the same as the workload name. | nginx |
| Namespace | Select the namespace that the workload belongs to. | default |
| Selector | Add the key and value of a pod label. The Service will be associated with the workload pods based on the label and direct traffic to the pods with this label.<br>You can also click Reference Workload Label to use the label of an existing workload. In the dialog box displayed, select a workload and click OK. | app:nginx |

- Configure load balancer parameters.

| Parameter | Description | Example |
|---|---|---|
| Load Balancer | Select a load balancer type and how the load balancer will be created. To enable HTTP/HTTPS on the listener port of a dedicated load balancer, the type of the load balancer must be Application (HTTP/HTTPS) or Network (TCP/UDP/TLS) & Application (HTTP/HTTPS).<br>- Use existing: Only the load balancers in the same VPC as the cluster can be selected. If no load balancer is available, click Create Load Balancer to create one on the ELB console.<br>- Auto create: The load balancer will be created in the VPC that the cluster belongs to. For details, see Table 1. | An existing dedicated load balancer of the Network (TCP/UDP/TLS) & Application (HTTP/HTTPS) type |

- Configure access parameters.

| Parameter | Description | Example |
|---|---|---|
| Service Affinity | Whether to route external traffic to a local node or a cluster-wide endpoint. For details, see Service Affinity (externalTrafficPolicy).<br>- Cluster-level: The IP addresses and ports of all nodes in a cluster can access the workload associated with the Service. However, accessing the Service may result in decreased performance due to route redirection, and the client's source IP address may not be obtainable.<br>- Node-level: Only the IP address and port of the node where the workload is located can access the workload associated with the Service. Accessing the Service will not result in a performance decrease due to route redirection, and the client's source IP address can be obtained. | Cluster-level |
| Backend Routing Policy | - Global: All ports use the same backend routing policy. For details about the parameters, see Table 2.<br>CAUTION: When multiple ports are added, and some of them use different frontend protocols, the global backend routing policy cannot be applied across all protocols simultaneously. For any protocol to which the policy cannot be applied, CCE automatically falls back to the default configuration. For details about the mapping between the frontend and backend protocols of listeners and backend server groups, see Mapping Between Frontend and Backend Protocols for Load Balancing and Configuration Examples. Custom is recommended.<br>- Custom: Each port can have a unique backend routing policy. You can configure the policy in Port > Backend Routing Policy. | Custom |
| Port | - Protocol: the protocol used by the Service. If a Service needs to use ELB TLS or HTTP, set Protocol to TCP and select the target frontend protocol. For details, see Protocols for Services.<br>- Container Port: the port that the workload listens on. For example, Nginx uses port 80 by default.<br>- Service Port: the port used by the Service, configured in one of two modes. Listen on a port: The port ranges from 1 to 65535. Listen on ports: ELB allows you to create listeners that listen on ports within specified ranges. Each listener can support up to 10 non-overlapping port ranges. To configure port ranges for load balancer listeners, ensure the following conditions are met: (1) The cluster version must be v1.23.18-r0, v1.25.13-r0, v1.27.10-r0, v1.28.8-r0, v1.29.4-r0, v1.30.1-r0, or later. (2) A dedicated load balancer must be used with TCP/UDP/TLS selected. (3) This function requires ELB. Before using this function, check whether ELB supports full-port listening and forwarding for layer-4 protocols in the current region.<br>- Frontend Protocol: Set the protocol of the load balancer listener for establishing connections with clients. When a dedicated load balancer is selected, HTTP/HTTPS can be configured only when Application (HTTP/HTTPS) is selected and TLS can be configured only when Network (TCP/UDP/TLS) is selected.<br>- Backend Routing Policy: If Backend Routing Policy is set to Custom, you can configure backend routing policies for ports that use different protocols. For details about the parameters, see Table 2.<br>NOTE: When a LoadBalancer Service is created, a random node port number (NodePort) is automatically generated. | - Protocol: TCP<br>- Container Port: 80<br>- Service Port: 443<br>- Frontend Protocol: HTTPS |

Table 2 Parameters for configuring the backend routing policy

| Parameter | Description | Example Value |
|---|---|---|
| Backend Protocol | The service protocol used by the load balancer's backend server group. TCP, HTTP, HTTPS, TLS, QUIC, and UDP are supported. For details about the mapping between the frontend and backend protocols of listeners and backend server groups, see Mapping Between Frontend and Backend Protocols for Load Balancing and Configuration Examples. | HTTP |
| Load Balancing Algorithm | Load balancers forward requests from clients to the corresponding backend servers based on the configured traffic routing policies.<br>- Weighted round robin: Requests are forwarded to different backend servers in turn based on the server weights you define in the target group.<br>- Weighted least connections: Requests are forwarded to the backend server with the smallest connections-to-weight ratio.<br>- Source IP hash: Requests from the same source IP address are forwarded to the same backend server by hashing the source IP address. | Weighted round robin |

- Configure HTTP/HTTPS listener parameters. Retain the default settings for other parameters.

| Parameter | Description | Constraint | Example |
|---|---|---|---|
| SSL Authentication | - One-way authentication: Only the backend server is authenticated. If you also need to authenticate the identity of the client, select two-way authentication.<br>- Two-way authentication: Both the clients and the load balancer authenticate each other. This ensures only authenticated clients can access the load balancer. No additional backend server configuration is required if you select this option. | This parameter is available only when Frontend Protocol is set to HTTPS or TLS.<br>For dedicated load balancers, it is available in clusters of v1.23.14-r0, v1.25.9-r0, v1.27.6-r0, v1.28.4-r0, or later. For shared load balancers, it is available in clusters of v1.28.15-r60, v1.29.15-r20, v1.30.14-r20, v1.31.10-r20, v1.32.6-r20, v1.33.5-r10, or later. | One-way authentication |
| CA Certificate | If SSL Authentication is set to Two-way authentication, add a CA certificate to authenticate the client. A CA certificate is issued by a Certificate Authority (CA) and is used to verify the issuer of the client's certificate. If HTTPS two-way authentication is enabled, HTTPS connections can be established only if the client provides a certificate issued by a specific CA. | This parameter is available only when Frontend Protocol is set to HTTPS or TLS and SSL Authentication is set to Two-way authentication. | None |
| Server Certificate | Select a server certificate. If no certificate is available, create one on the ELB console. For details, see Adding a Certificate. | This parameter is available only when Frontend Protocol is set to HTTPS or TLS. | cert-test |

- Click Create.
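The Service Affinity setting described above maps to the standard Kubernetes `externalTrafficPolicy` field of a Service. As a rough illustration, node-level affinity could be expressed as shown below. This is a fragment, not a complete manifest, and it assumes that Cluster-level corresponds to the value `Cluster` and Node-level to the value `Local`, following the upstream meaning of the field.

```yaml
# Fragment of a Service spec; merge it into a complete Service manifest.
spec:
  type: LoadBalancer
  externalTrafficPolicy: Local   # Node-level. Use Cluster (the Kubernetes default) for cluster-level.
```

With `Local`, traffic is delivered only to pods on the node that received it, which is why the client's source IP address can be preserved.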

- Log in to the CCE console and click the cluster name to access the cluster console.

- In the navigation pane, choose Services & Ingresses. In the upper right corner, click Create Service.

- Configure Service parameters. In this example, only mandatory parameters required for using HTTP/HTTPS are listed. For details about how to configure other parameters, see Using the CCE Console (Old Version).

  - Service Name: Specify a Service name, which can be the same as the workload name.
  - Service Type: Select LoadBalancer.
  - Selector: Add a label and click Confirm. The Service will use this label to select pods. You can also click Reference Workload Label to use the label of an existing workload. In the dialog box that is displayed, select a workload and click OK.
  - Load Balancer: Select a load balancer type and creation mode.
    - A load balancer can be dedicated or shared. To enable HTTP/HTTPS on the listener port of a dedicated load balancer, the type of the load balancer must be Application (HTTP/HTTPS) or Network (TCP/UDP) & Application (HTTP/HTTPS).
    - This section uses an existing load balancer as an example. For details about the parameters for automatically creating a load balancer, see Table 4.
  - Port
    - Protocol: Select TCP. If you select UDP, HTTP and HTTPS will be unavailable.
    - Service Port: the port used by the Service. The port ranges from 1 to 65535.
    - Container Port: the port that the workload listens on. For example, Nginx uses port 80 by default.
    - Frontend Protocol: specifies whether to enable HTTP/HTTPS on the listener port. For a dedicated load balancer, to use HTTP/HTTPS, the type of the load balancer must be Application (HTTP/HTTPS).
  - Listener
    - SSL Authentication: Select this option if HTTPS is enabled on the listener port. For dedicated load balancers, this parameter is available in clusters of v1.23.14-r0, v1.25.9-r0, v1.27.6-r0, v1.28.4-r0, or later. For shared load balancers, it is available in clusters of v1.28.15-r60, v1.29.15-r20, v1.30.14-r20, v1.31.10-r20, v1.32.6-r20, v1.33.5-r10, or later.
      - One-way authentication: Only the backend server is authenticated. If you also need to authenticate the identity of the client, select two-way authentication.
      - Two-way authentication: Both the clients and the load balancer authenticate each other. This ensures only authenticated clients can access the load balancer. No additional backend server configuration is required if you select this option.
    - CA Certificate: If SSL Authentication is set to Two-way authentication, add a CA certificate to authenticate the client. A CA certificate is issued by a Certificate Authority (CA) and is used to verify the issuer of the client's certificate. If HTTPS two-way authentication is enabled, HTTPS connections can be established only if the client provides a certificate issued by a specific CA.
    - Server Certificate: If Frontend Protocol is set to HTTPS or TLS, you must select a server certificate. If no certificate is available, create one on the ELB console. For details, see Adding a Certificate.
    - SNI: If Frontend Protocol is set to HTTPS or TLS, you must determine whether to add an SNI certificate. Before adding an SNI certificate, ensure the certificate contains a domain name. If no certificate is available, create one on the ELB console. For details, see Adding a Certificate.
    - Security Policy: If Frontend Protocol is set to HTTPS, you can select a security policy. This parameter is available only in clusters of v1.23.14-r0, v1.25.9-r0, v1.27.6-r0, v1.28.4-r0, or later.
    - Backend Protocol: If Frontend Protocol is set to HTTPS, HTTP or HTTPS can be used to access the backend server. The default value is HTTP. This parameter is available only in clusters of v1.23.14-r0, v1.25.9-r0, v1.27.6-r0, v1.28.4-r0, or later.

If multiple HTTPS Services are released, all listeners will use the same certificate configuration.

Figure 1 HTTP or HTTPS

- Click OK.

If a Service uses HTTP or HTTPS, note the following configuration requirements:

- Different ELB types and cluster versions have different requirements on flavors. For details, see Table 1.

- The two ports in spec.ports must correspond to those in kubernetes.io/elb.protocol-port. In this example, ports 443 and 80 are enabled with HTTPS and HTTP, respectively.

The following uses an automatically created dedicated load balancer as an example.

```yaml
apiVersion: v1
kind: Service
metadata:
  annotations:
    # Specify the Layer 4 and Layer 7 flavors in the parameters for automatically creating a load balancer.
    kubernetes.io/elb.autocreate: '
      {
          "type": "public",
          "bandwidth_name": "cce-bandwidth-1634816602057",
          "bandwidth_chargemode": "bandwidth",
          "bandwidth_size": 5,
          "bandwidth_sharetype": "PER",
          "eip_type": "5_bgp",
          "available_zone": [
              ""
          ],
          "l7_flavor_name": "L7_flavor.elb.s2.small",
          "l4_flavor_name": "L4_flavor.elb.s1.medium"
      }'
    kubernetes.io/elb.class: performance  # A dedicated load balancer
    kubernetes.io/elb.protocol-port: "https:443,http:80"  # HTTP/HTTPS and port number, which must be the same as the port numbers in spec.ports
    kubernetes.io/elb.cert-id: "17e3b4f4bc40471c86741dc3aa211379"  # The certificate ID of the LoadBalancer Service
    kubernetes.io/elb.security-pool-protocol: 'off'  # Backend security protocol. If it is enabled, the backend protocol is HTTPS. If it is not enabled, the backend protocol is HTTP.
  labels:
    app: nginx
    name: test
  name: test
  namespace: default
spec:
  ports:
  - name: cce-service-0
    port: 443
    protocol: TCP
    targetPort: 80
  - name: cce-service-1
    port: 80
    protocol: TCP
    targetPort: 80
  selector:
    app: nginx
    version: v1
  sessionAffinity: None
  type: LoadBalancer
```

| Parameter | Type | Description |
|---|---|---|
| kubernetes.io/elb.protocol-port | String | If a Service is TLS/HTTP/HTTPS-compliant, configure the protocol and port number in the format of "protocol:port", separating multiple entries with commas. The ports must match those in spec.ports. In this example, ports 443 and 80 are enabled with HTTPS and HTTP, respectively. Therefore, the parameter value is https:443,http:80. |
| kubernetes.io/elb.cert-id | String | ID of an ELB certificate, which is used as the TLS/HTTPS server certificate. How to obtain: Log in to the ELB console and choose Certificates. In the certificate list, copy the ID under the target certificate name. |
| kubernetes.io/elb.client-ca-cert-id | String | Required only for mutual authentication. ID of the ELB certificate that serves as the CA certificate. How to obtain: Log in to the ELB console and choose Certificates. In the certificate list, copy the ID under the target certificate name. For dedicated load balancers, this parameter is available in clusters of v1.23.14-r0, v1.25.9-r0, v1.27.6-r0, v1.28.4-r0, or later. For shared load balancers, it is available in clusters of v1.28.15-r60, v1.29.15-r20, v1.30.14-r20, v1.31.10-r20, v1.32.6-r20, v1.33.5-r10, or later. |
| kubernetes.io/elb.security-pool-protocol | String | If the frontend protocol of a listener is HTTPS, you can enable the backend security protocol HTTPS. The backend security protocol of an existing listener cannot be changed. The modification takes effect only on new listeners that are created by changing the protocol or port. NOTE: When multiple ports are added, if the frontend protocols of some ports are not HTTPS, this configuration takes effect only for HTTPS ports. |

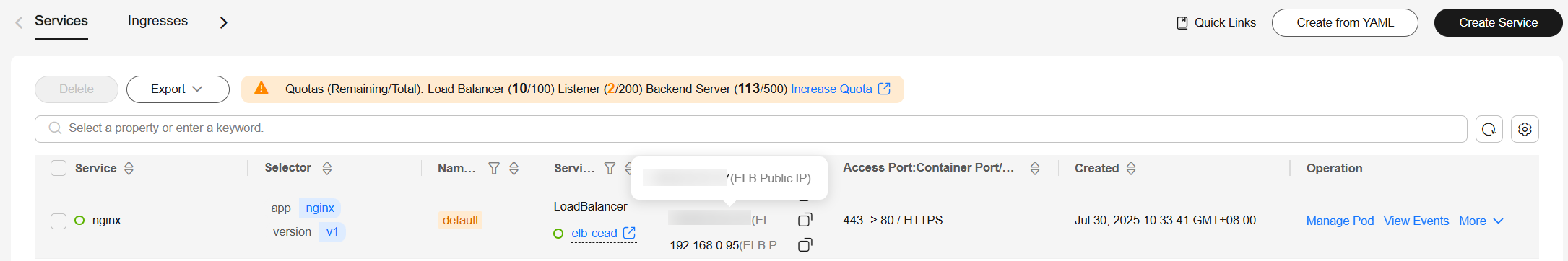

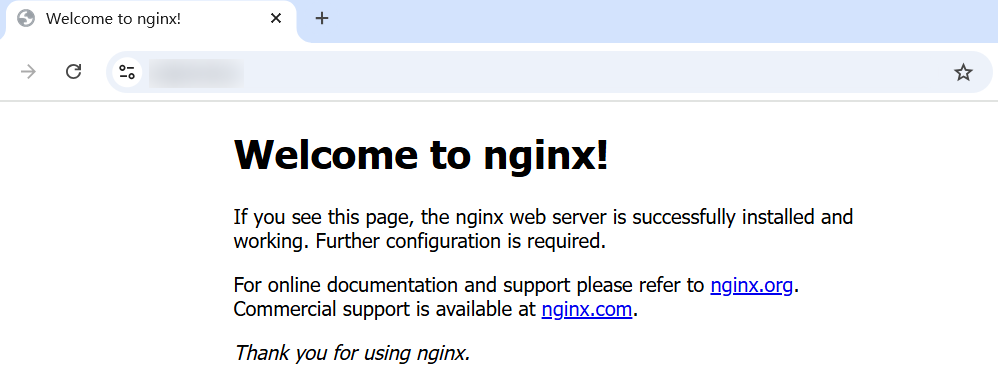
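As a variation on the automatically created load balancer shown above, the following sketch attaches the Service to an existing dedicated load balancer and enables two-way authentication on the HTTPS listener. It assumes that an existing load balancer is referenced through the `kubernetes.io/elb.id` annotation, which this section does not cover, so verify it against the CCE Service reference before relying on it. The Service name is hypothetical and all three IDs are placeholders.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: nginx-mtls                                       # Hypothetical Service name
  namespace: default
  annotations:
    kubernetes.io/elb.class: performance                 # A dedicated load balancer
    kubernetes.io/elb.id: "<your-elb-id>"                # Assumption: ID of the existing load balancer (placeholder)
    kubernetes.io/elb.protocol-port: "https:443"         # Must match the port in spec.ports
    kubernetes.io/elb.cert-id: "<server-cert-id>"        # Server certificate ID (placeholder)
    kubernetes.io/elb.client-ca-cert-id: "<ca-cert-id>"  # CA certificate ID for two-way authentication (placeholder)
spec:
  type: LoadBalancer
  selector:
    app: nginx
  ports:
  - name: https
    port: 443          # Service port exposed by the HTTPS listener
    protocol: TCP
    targetPort: 80     # Container port that Nginx listens on
```

Because `kubernetes.io/elb.client-ca-cert-id` is subject to the cluster version requirements listed in the parameter table, check the cluster version before applying a manifest like this one.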

Step 3: Access the Workload

- After the Service is created, copy the load balancer's EIP.

- Access the address via HTTPS in the browser.
