Quick Start

Environment Preparation

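- Operating system: Linux ARM64, on a server that can reach the public internet.

The Agent image must be built on an ARM64 system. An image built on an x86 system fails when the agent runtime is invoked.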

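- Install Python: make sure Python 3.10 or later is installed.

Most Linux distributions (such as Ubuntu) ship with Python preinstalled, so check first with python3 --version. If Python is not installed, install it with the following commands:

sudo apt update
sudo apt install python3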

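- Install Docker: make sure Docker 18.06 or later is installed. If Docker is not installed, install it with the following commands:

# Check the Docker version
docker --version
# Install Docker
sudo apt update
sudo apt install docker.io

The basic edition of Huawei Cloud SWR does not support the OCI image format. If you use Docker 27 or later and your images would otherwise be handled in OCI format, turn off OCI media types by setting the following environment variable:

export BUILDKIT_USE_OCI_MEDIA_TYPES=0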

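- Run the following commands to install the SDK. Installing it in a Python virtual environment is recommended, to avoid conflicts with system packages.

# Create and activate a virtual environment (Linux)
python3 -m venv venv
source venv/bin/activate
# Install the SDK
pip install agentarts-sdk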

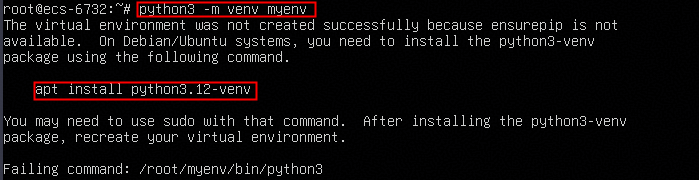

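- If the python3-venv package is missing and the virtual environment cannot be created, install python3-venv as prompted in the command output.

- If the SDK installs slowly or the download times out, see the entry on pip install agentarts-sdk timeouts in the frequently asked questions at the end of this guide.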

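- Run the following commands to configure your Huawei Cloud credentials. To obtain the credentials, see Authentication and Authorization.

export HUAWEICLOUD_SDK_AK="your-access-key"
export HUAWEICLOUD_SDK_SK="your-secret-key"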
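The prerequisites above can also be checked from a script before moving on. The following is a minimal sketch, not part of the AgentArts SDK: the function names are hypothetical, and it assumes the docker --version output contains a dotted version number such as "Docker version 27.0.3, build e953d76".

```python
import re
import sys

# Minimum versions and variable names taken from the prerequisites above.
MIN_PYTHON = (3, 10)
MIN_DOCKER = (18, 6)
REQUIRED_ENV = ("HUAWEICLOUD_SDK_AK", "HUAWEICLOUD_SDK_SK")


def parse_docker_version(output: str) -> tuple:
    """Extract (major, minor) from text such as 'Docker version 27.0.3, build e953d76'."""
    match = re.search(r"(\d+)\.(\d+)", output)
    if match is None:
        raise ValueError(f"no version number found in: {output!r}")
    return int(match.group(1)), int(match.group(2))


def missing_credentials(env) -> list:
    """Return the names of required variables that are unset or empty."""
    return [name for name in REQUIRED_ENV if not env.get(name)]


def preflight(docker_output: str, env, python_version=None) -> list:
    """Collect readable problems; an empty list means every check passed."""
    python_version = tuple(python_version or sys.version_info[:2])
    problems = []
    if python_version < MIN_PYTHON:
        problems.append("Python 3.10 or later is required")
    if parse_docker_version(docker_output) < MIN_DOCKER:
        problems.append("Docker 18.06 or later is required")
    for name in missing_credentials(env):
        problems.append(f"{name} is not set")
    return problems
```

To use it, pass the text printed by docker --version together with os.environ, for example preflight(version_text, os.environ), and print whatever problems are returned.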

Local Development

- Run the following command to install langchain and langgraph.

pip install -U langchain langgraph

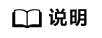

- Run the following command to initialize a langgraph project that supports basic question answering. langchain and langgraph must already be installed (pip install -U langchain langgraph).

agentarts init -n my-agent -t langgraph

When prompted, enter the Region.

When the command finishes, a project folder named my-agent is created in the current directory. Change into it:

cd my-agent

The directory contains the following files:

my-agent/
├── .agentarts_config.yaml   # Project configuration
├── agent.py                 # Agent implementation (core business code)
├── Dockerfile               # Image build file required for cloud deployment
└── requirements.txt         # Python dependencies

Run cat << 'EOF' > agent.py to overwrite the contents of agent.py with the code below.

After pasting the code, start a new line, type EOF, and press Enter to finish input and return to the shell prompt.

import os
from typing import Dict, Any, TypedDict, Annotated

from langgraph.graph.message import add_messages
from agentarts.sdk import AgentArtsRuntimeApp, RequestContext

app = AgentArtsRuntimeApp()


class State(TypedDict):
    messages: Annotated[list, add_messages]
    query: str
    response: str


class LangGraphAgent:
    def __init__(self):
        self.model_name = os.environ.get("MODEL_NAME", "deepseek-v3.2")
        self._graph = None

    def _build_graph(self):
        from langgraph.graph import StateGraph, END
        from langchain_openai import ChatOpenAI
        from langchain_core.messages import HumanMessage, AIMessage

        llm = ChatOpenAI(
            model=self.model_name,
            api_key=os.environ.get("MODEL_API_KEY"),
            base_url=os.environ.get("MODEL_URL", "https://api.modelarts-maas.com/openai/v1"),
        )

        async def process_node(state: State) -> Dict[str, Any]:
            query = state.get("query", "")
            # Fall back to the raw query when no message history was passed in.
            messages = state.get("messages", []) or [HumanMessage(content=query)]
            response = await llm.ainvoke(messages)
            return {
                "messages": [AIMessage(content=response.content)],
                "response": response.content,
            }

        # Single-node graph: entry point -> process -> END.
        workflow = StateGraph(State)
        workflow.add_node("process", process_node)
        workflow.set_entry_point("process")
        workflow.add_edge("process", END)
        return workflow.compile()

    async def run(self, query: str) -> Dict[str, Any]:
        # Build the graph on first use and reuse it afterwards.
        graph = self._graph or self._build_graph()
        self._graph = graph
        result = await graph.ainvoke({"messages": [], "query": query, "response": ""})
        return {"response": result.get("response", "")}


_agent = LangGraphAgent()


@app.entrypoint
async def handler(payload: Dict[str, Any], context: RequestContext = None) -> Dict[str, Any]:
    query = payload.get("message", "")
    return await _agent.run(query)


if __name__ == "__main__":
    app.run()

- Run cat << 'EOF' > .agentarts_config.yaml to overwrite the contents of the YAML file.

In the configuration below, fill in the value of MODEL_API_KEY with your API key for the Huawei Cloud MaaS service (see Obtaining a Model API Key for the Huawei Cloud MaaS Service). Then paste the modified configuration as the content of .agentarts_config.yaml.

After pasting the configuration, start a new line, type EOF, and press Enter to finish input and return to the shell prompt.

# AgentArts Configuration # Generated by 'agentarts init' command default_agent: my-agent agents: my-agent: base: name: my-agent entrypoint: agent:app dependency_file: requirements.txt platform: linux/arm64 language: python3 base_image: python:3.10-slim region: cn-southwest-2 swr_config: organization: agentarts-org1 repository: agent_my-agent organization_auto_create: true repository_auto_create: true runtime: invoke_config: protocol: HTTP port: 8080 network_config: network_mode: PUBLIC vpc_config: vpc_id: null subnet_id: null security_group_id: [] identity_configuration: authorizer_type: IAM authorizer_configuration: custom_jwt: discovery_url: null allowed_audience: [] allowed_clients: [] allowed_scopes: [] key_auth: api_keys: [] observability: tracing: enabled: false metrics: enabled: false logs: enabled: false artifact_source: url: swr.cn-southwest-2.myhuaweicloud.com/agentarts-org1/agent_my-agent:latest commands: [] environment_variables: - key: MODEL_API_KEY value: "Replace with the API key of your model in the Huawei Cloud MaaS service" - key: MODEL_NAME value: "deepseek-v3.2" - key: MODEL_URL value: "https://api.modelarts-maas.com/openai/v1" tags:

- In the my-agent directory, run the following commands in order to install the dependencies and start the local test server:

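# Install the dependencies the project needs
pip install -r requirements.txt
# Start the local development service (this command reads the YAML configuration automatically and starts the service)
agentarts dev

Once the service is running, open a new terminal window (leave the original one running) and test it with curl: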

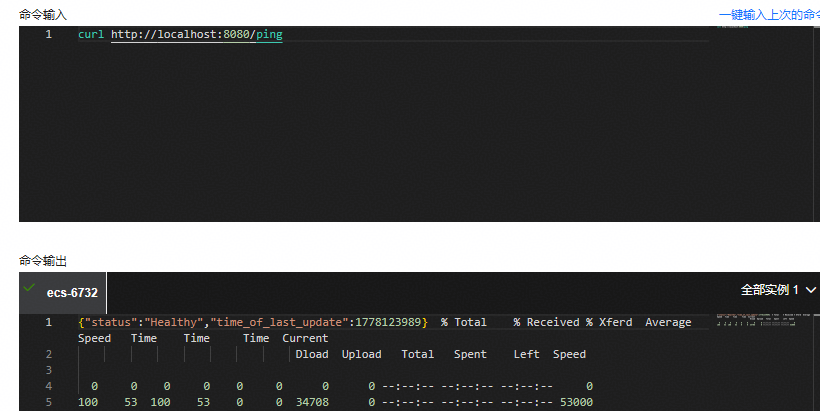

- Health check example

curl http://localhost:8080/ping

Example of a successful response

{"status":"Healthy","time_of_last_update":1778123989}

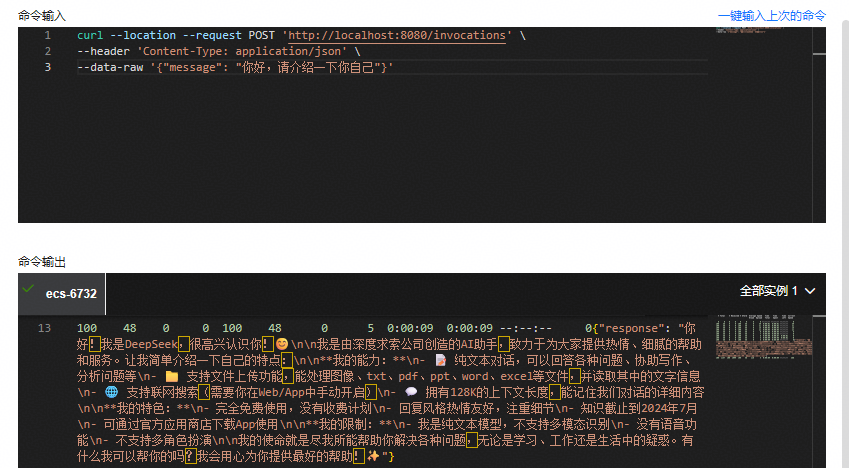

- Agent conversation example

curl --location --request POST 'http://localhost:8080/invocations' \
    --header 'Content-Type: application/json' \
    --data-raw '{"message": "Hello, please introduce yourself"}'

Example of a successful response

{"response": "Hello! I am **xxx**"}
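The same two calls can be made from Python, which is convenient for scripted smoke tests. This is a sketch using only the standard library; the helper names are hypothetical, and it assumes the local service listens on http://localhost:8080 and returns the JSON shapes shown in the sample responses above.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8080"  # address used by the curl examples above


def build_invocation(message: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """Build the POST /invocations request without sending it."""
    body = json.dumps({"message": message}, ensure_ascii=False).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/invocations",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def invoke(message: str, base_url: str = BASE_URL, timeout: float = 60.0) -> str:
    """Send the message and return the "response" field of the JSON reply."""
    request = build_invocation(message, base_url)
    with urllib.request.urlopen(request, timeout=timeout) as reply:
        return json.loads(reply.read().decode("utf-8")).get("response", "")


def is_healthy(base_url: str = BASE_URL, timeout: float = 5.0) -> bool:
    """Return True when GET /ping reports the status shown in the sample output."""
    with urllib.request.urlopen(f"{base_url}/ping", timeout=timeout) as reply:
        return json.loads(reply.read().decode("utf-8")).get("status") == "Healthy"
```

With agentarts dev running in another terminal, calling is_healthy() and then invoke("Hello, please introduce yourself") mirrors the two curl commands. Building the request separately from sending it keeps the payload easy to inspect without a running server.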


Cloud Deployment

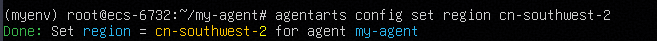

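- Once local testing passes, press Ctrl+C to stop the running local service. To deploy the Agent to Huawei Cloud, first set the deployment region.

agentarts config set region cn-southwest-2

Alternatively, run agentarts config for interactive configuration. The wizard walks you through the following settings (press Enter to accept the default):

- Deployment region: defaults to CN Southwest-Guiyang1 (cn-southwest-2)

- SWR organization: created automatically by default

- SWR repository: created automatically by default

- Dependency file: defaults to requirements.txt

For custom settings (for example, the deployment region, image repository, agent inbound authentication, or environment variables), edit .agentarts_config.yaml by hand.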

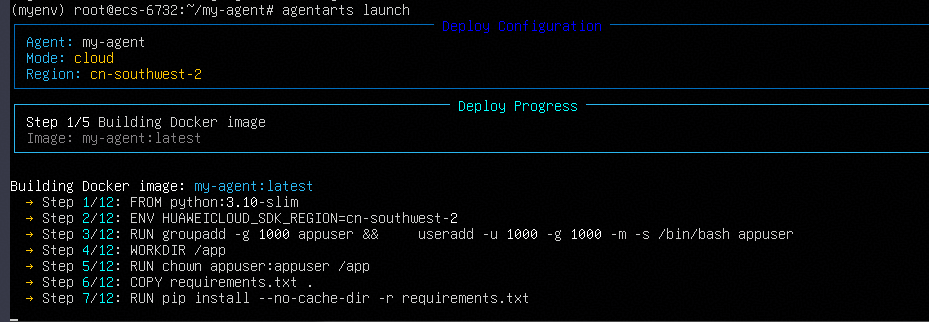

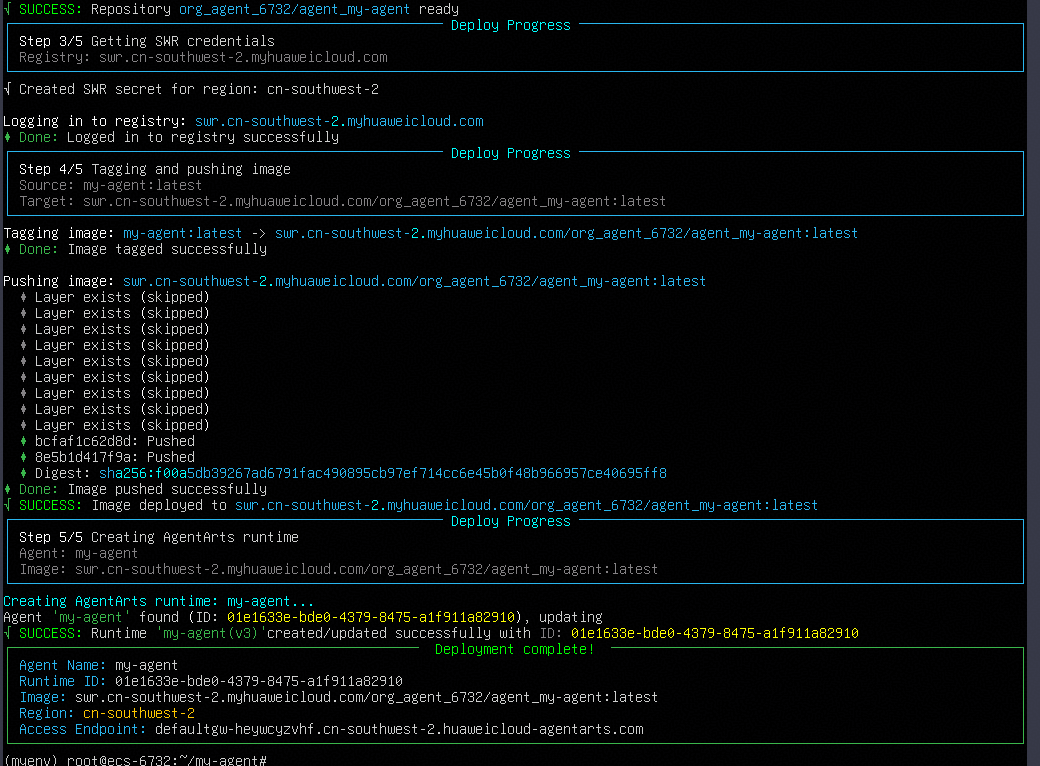

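- After configuration, deploy to the AgentArts cloud platform with a single command (Docker must be installed locally).

agentarts launch

The command performs the following steps automatically:

- Builds the Docker image locally.

- Pushes the Docker image to the Huawei Cloud SWR image repository.

- Deploys the image to the AgentArts managed runtime environment.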

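(Optional) Change the Docker registry mirror used to pull the python:{version}-slim image.

Run the following command to create a folder named docker under the system configuration directory.

sudo mkdir -p /etc/docker

Run the following command (copy the whole block and press Enter) to switch the Docker registry mirrors so that python:3.10-slim is pulled from mirror sites in mainland China.

sudo tee /etc/docker/daemon.json <<-'EOF'
{
  "registry-mirrors": [
    "https://docker.m.daocloud.net",
    "https://dockerproxy.net",
    "https://mirror.baidubce.com"
  ]
}
EOF

Run the following two commands in order to restart the Docker service:

sudo systemctl daemon-reload
sudo systemctl restart docker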

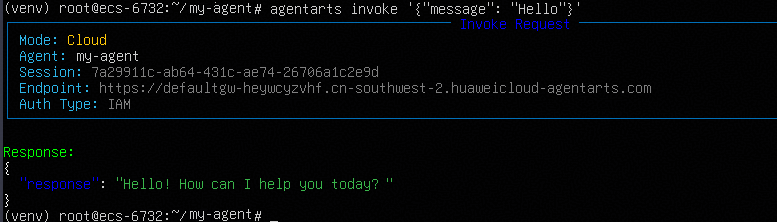

- Invoke the cloud Agent to start a conversation.

agentarts invoke '{"message": "Hello"}'

FAQ

- Running pip install agentarts-sdk fails with HTTPSConnectionPool(host='files.pythonhosted.org', port=443): Read timed out.

Run pip install agentarts-sdk again.

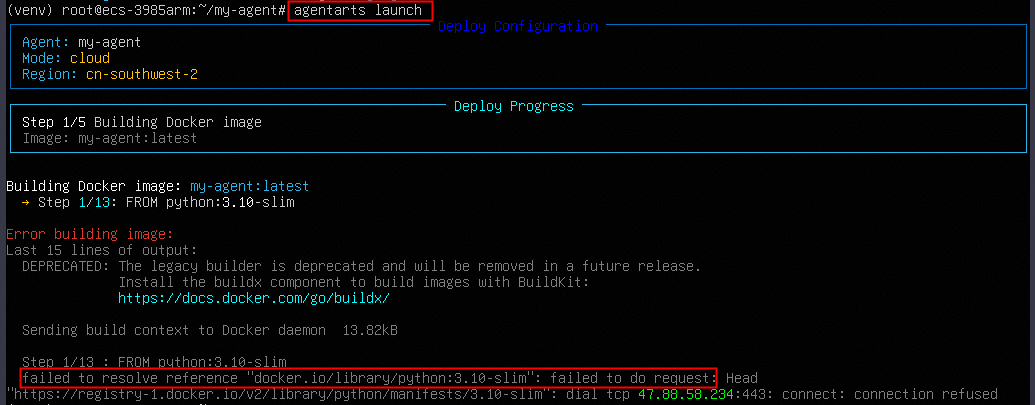

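- Running agentarts launch fails with an error like failed to resolve reference "docker.io/library/python:3.10-slim".

Symptom and cause

The connection to the official Docker registry (docker.io) timed out while downloading the python:3.10-slim base image, because the network connection is blocked or throttled.

Solution

Configure a Docker registry mirror in mainland China.

Run the following command to create a folder named docker under the system configuration directory.

sudo mkdir -p /etc/docker

Run the following command (copy the whole block and press Enter) to switch the Docker registry mirrors so that python:3.10-slim is pulled from mirror sites in mainland China.

sudo tee /etc/docker/daemon.json <<-'EOF'
{
  "registry-mirrors": [
    "https://docker.m.daocloud.net",
    "https://dockerproxy.net",
    "https://mirror.baidubce.com"
  ]
}
EOF

Run the following two commands in order to restart the Docker service:

sudo systemctl daemon-reload
sudo systemctl restart docker

After Docker is configured, run agentarts launch again to deploy the Agent.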
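Note that the tee command above replaces the whole of /etc/docker/daemon.json, so any settings already in that file are lost. If the file already holds other options, merging the mirrors in may be safer. The sketch below is one possible approach, not an official tool; merge_mirrors is a hypothetical helper that works on the file's text and leaves reading and writing the file (which needs root) to the caller.

```python
import json

# Mirror addresses copied from the daemon.json example above.
MIRRORS = [
    "https://docker.m.daocloud.net",
    "https://dockerproxy.net",
    "https://mirror.baidubce.com",
]


def merge_mirrors(existing_text: str, mirrors=MIRRORS) -> str:
    """Return daemon.json text with the mirrors added and all other keys kept."""
    config = json.loads(existing_text) if existing_text.strip() else {}
    merged = list(config.get("registry-mirrors", []))
    for url in mirrors:
        if url not in merged:  # skip mirrors that are already configured
            merged.append(url)
    config["registry-mirrors"] = merged
    return json.dumps(config, indent=2) + "\n"
```

Passing an empty string produces the same configuration as the tee command. After writing the result back to /etc/docker/daemon.json, the Docker restart commands are still required.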

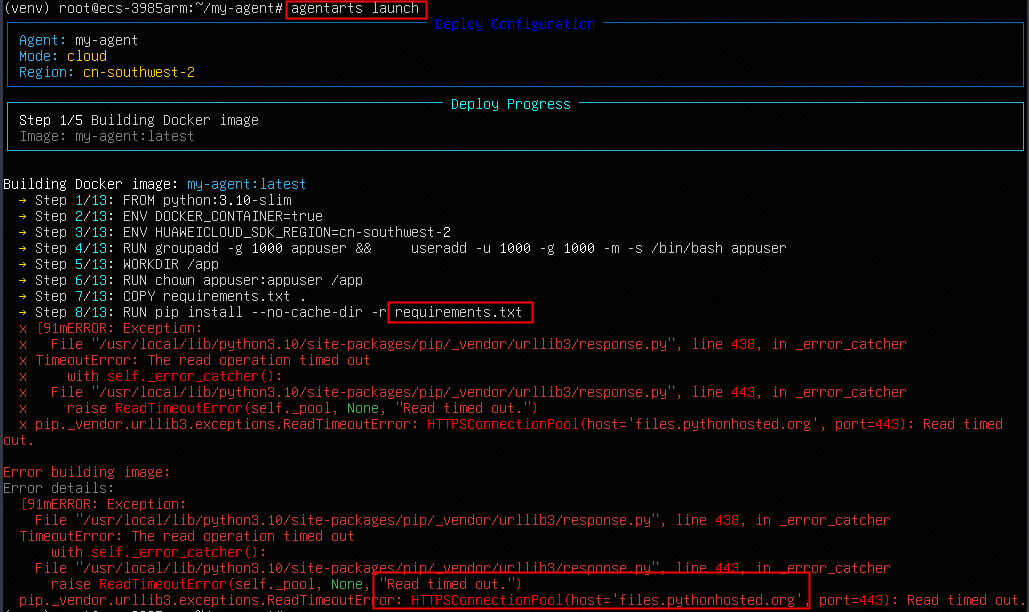

- Running agentarts launch fails during the requirements.txt step with Read timed out ... files.pythonhosted.org.

Symptom and cause

During the Docker image build, pip tries to download the Python packages listed in requirements.txt from the official PyPI server and times out because of network conditions.

Solution

Run the following command so that dependencies are installed from the Huawei Cloud PyPI mirror.

sed -i 's|pip install --no-cache-dir -r requirements.txt|pip install --no-cache-dir -r requirements.txt -i https://repo.huaweicloud.com/repository/pypi/simple --trusted-host repo.huaweicloud.com|g' Dockerfile

After the change, run agentarts launch again to deploy the Agent.
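The sed substitution above still matches the line after it has been patched, so running it a second time appends the mirror options again. The following sketch shows an equivalent edit that is safe to repeat. It assumes, as the sed command does, that the Dockerfile contains exactly the command pip install --no-cache-dir -r requirements.txt; use_pypi_mirror is a hypothetical helper name.

```python
# Command and options copied from the sed expression above.
PIP_COMMAND = "pip install --no-cache-dir -r requirements.txt"
MIRROR_ARGS = (
    " -i https://repo.huaweicloud.com/repository/pypi/simple"
    " --trusted-host repo.huaweicloud.com"
)


def use_pypi_mirror(dockerfile_text: str) -> str:
    """Append the mirror options to the pip install command, at most once."""
    if MIRROR_ARGS.strip() in dockerfile_text:
        return dockerfile_text  # already patched, leave the text untouched
    return dockerfile_text.replace(PIP_COMMAND, PIP_COMMAND + MIRROR_ARGS)
```

Read the Dockerfile, pass its text through use_pypi_mirror, and write the result back; a Dockerfile without the expected command is returned unchanged.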

- Running agentarts launch fails with Organization 'agentarts-org1' already exists or Failed to create/get organization 'agentarts-org1'.

The SWR organization name is already taken. Choose a different name and update it in two places in .agentarts_config.yaml: the organization value under swr_config and the URL under artifact_source.

Run the following commands to make the replacement, or edit the file by hand with nano .agentarts_config.yaml.

# Replace the agentarts-org1 organization name in .agentarts_config.yaml with one that is unlikely to collide, for example org_agent_6731
sed -i 's/organization: agentarts-org1/organization: org_agent_6731/g' .agentarts_config.yaml
# Update the organization name inside the artifact_source URL, again using org_agent_6731 as the example
sed -i 's|url: swr.cn-southwest-2.myhuaweicloud.com/agentarts-org1/agent_my-agent:latest|url: swr.cn-southwest-2.myhuaweicloud.com/org_agent_6731/agent_my-agent:latest|g' .agentarts_config.yaml
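The two sed commands hard-code the region, repository, and tag in the image URL. Where those differ, a text-level rename like the one below may be easier to reuse. This is a sketch under the assumption that the file contains an "organization: NAME" line and a "url: REGISTRY/NAME/REPOSITORY:TAG" line, as in the configuration shown earlier; rename_organization is a hypothetical helper, not an SDK function.

```python
import re


def rename_organization(config_text: str, old: str, new: str) -> str:
    """Replace the organization value and the organization segment of the image URL."""
    name = re.escape(old)
    # 1) "organization: <old>": match the whole value only, so "<old>0" is untouched.
    text = re.sub(
        rf"(organization:[ \t]*){name}(?=[ \t]*$)",
        lambda m: m.group(1) + new,
        config_text,
        flags=re.MULTILINE,
    )
    # 2) "url: <registry>/<old>/...": replace only the path segment after the registry host.
    return re.sub(
        rf"(url:[ \t]*[^/\s]+/){name}(?=/)",
        lambda m: m.group(1) + new,
        text,
    )
```

For example, rename_organization(text, "agentarts-org1", "org_agent_6731") applies both replacements in one pass, and keys such as organization_auto_create are left alone because they do not match "organization:".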

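- Running agentarts launch fails with SVCSCTG.SWR.4030017 or 403 Insufficient permissions.

If this error occurs when an IAM user runs agentarts launch, the IAM user lacks permission to create organizations or manage resources in SWR. Ask the owner of the main account to grant the SWRFullAccessPolicy permission. Grant it in the new IAM console, which you can switch to from the upper-right corner of the Overview page of the IAM console.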