Accessing HiveMetaStore Across Clusters

To access data stored in MRS Hive (including the scenario where Hive interconnects with HDFS and the scenario where Hive interconnects with OBS), you can refer to this tutorial to create an external schema.

Preparing the Environment

- You have created a GaussDB(DWS) 3.0 cluster, and the MRS and GaussDB(DWS) clusters are in the same region, AZ, and VPC subnet and that the clusters can communicate with each other.

- You have obtained the AK and SK.

Constraints and Limitations

- Currently, only the SELECT, INSERT, and INSERT OVERWRITE operations can be performed on tables in the Hive database through external schemas.

- MRS supports two types of data sources. For details, see Table 1.

Table 1 Operations supported by the two types of MRS data sources Data Source

Table Type

Operation

TEXT

CSV

PARQUET

ORC

HDFS

Non-partitioned table

SELECT

√

√

√

√

INSERT/INSERT OVERWRITE

x

x

x

√

Partitioned table

SELECT

√

√

√

√

INSERT/INSERT OVERWRITE

x

x

x

√

OBS

Non-partitioned table

SELECT

√

√

√

√

INSERT/INSERT OVERWRITE

x

x

x

√

Partitioned table

SELECT

x

x

√

√

INSERT/INSERT OVERWRITE

x

x

x

x

- Transaction atomicity is no longer ensured. If a transaction fails, data consistency cannot be ensured. Rollback is not supported.

- GRANT and REVOKE operations cannot be performed on tables created on Hive using external schemas.

- Concurrency support: Concurrent read and write operations on GaussDB(DWS), Hive, and Spark may cause dirty reads. Concurrent operations including INSERT OVERWRITE on the same non-partitioned table or the same partition of the same partitioned table may not ensure the expected result. Therefore, do not perform such operations.

- The HiveMetaStore features do not support the federation mechanism.

Procedure

This practice takes about 1 hour. The basic process is as follows:

- Create an MRS analysis cluster. (Select the Hive component.)

- Create a table on Hive.

- Insert data on Hive, or upload a local TXT data file to an OBS bucket then import the file to Hive through the OBS bucket, and import the file from the TXT storage table to the ORC storage table.

- Create an MRS data source connection.

- Create a foreign server.

- Create an external schema.

- Use the external schema to import data to or read data from Hive tables.

Creating an MRS Analysis Cluster

- Log in to the management console, choose Analytics > MapReduce Service, click Buy Cluster, select Custom Config, set the configuration parameters, and click Next.

Table 2 MRS configuration Parameter

Value

Region

Dublin

Cluster Name

mrs_01

Database Type

Normal

Cluster Version

MRS 3.1.3 (recommended)

NOTE:- MRS clusters support 3.0.*, 3.1.*, and later versions (* indicates a number).

Cluster Type

Analysis cluster

Metadata

Local

- Click Next to configure hardware parameters.

Table 3 Hardware configuration Parameter

Value

Billing Mode

Pay-per-use

AZ

AZ2

VPC

vpc-01

Subnet

subnet-01

Security Group

Auto create

EIP

10.x.x.x

Enterprise Project

default

Master

2

Analysis Core

3

Analysis Task

0

- Configure the advanced settings based on the following table, click Buy Now, and wait for about 15 minutes for the cluster creation to complete.

Table 4 Advanced settings Parameter

Value

Tags

test01

Hostname Prefix

(Optional) Prefix for the name of an ECS or BMS in the cluster.

Auto scaling

Retain the default value.

Bootstrap Action

Retain the default value. MRS 3.x does not support this parameter.

Agency

Retain the default value.

Data Disk Encryption

This function is disabled by default. Retain the default value.

Alarms

Retain the default value.

Rule Name

Retain the default value.

Topic Name

Select a topic.

Kerberos Authentication

This parameter is enabled by default.

Username

admin

Password

This password is used to log in to the cluster management page.

Confirm Password

Enter the password of user admin again.

Login Mode

Password

User

root

Password

This password is used to remotely log in to the ECS.

Confirm Password

Enter the password of user root again.

Agency

In Advanced Settings, set Agency to the preset agency MRS_ECS_DEFAULT_AGENCY of MRS in IAM.

Secure Communications

Select Enable.

Preparing the ORC Table Data Source of MRS

- Create a product_info.txt file on the local PC, copy the following data to the file, and save the file to the local PC.

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20

100,XHDK-A-1293-#fJ3,2017-09-01,A,2017 Autumn New Shirt Women,red,M,328,2017-09-04,715,good 205,KDKE-B-9947-#kL5,2017-09-01,A,2017 Autumn New Knitwear Women,pink,L,584,2017-09-05,406,very good! 300,JODL-X-1937-#pV7,2017-09-01,A,2017 autumn new T-shirt men,red,XL,1245,2017-09-03,502,Bad. 310,QQPX-R-3956-#aD8,2017-09-02,B,2017 autumn new jacket women,red,L,411,2017-09-05,436,It's really super nice 150,ABEF-C-1820-#mC6,2017-09-03,B,2017 Autumn New Jeans Women,blue,M,1223,2017-09-06,1200,The seller's packaging is exquisite 200,BCQP-E-2365-#qE4,2017-09-04,B,2017 autumn new casual pants men,black,L,997,2017-09-10,301,The clothes are of good quality. 250,EABE-D-1476-#oB1,2017-09-10,A,2017 autumn new dress women,black,S,841,2017-09-15,299,Follow the store for a long time. 108,CDXK-F-1527-#pL2,2017-09-11,A,2017 autumn new dress women,red,M,85,2017-09-14,22,It's really amazing to buy 450,MMCE-H-4728-#nP9,2017-09-11,A,2017 autumn new jacket women,white,M,114,2017-09-14,22,Open the package and the clothes have no odor 260,OCDA-G-2817-#bD3,2017-09-12,B,2017 autumn new woolen coat women,red,L,2004,2017-09-15,826,Very favorite clothes 980,ZKDS-J-5490-#cW4,2017-09-13,B,2017 Autumn New Women's Cotton Clothing,red,M,112,2017-09-16,219,The clothes are small 98,FKQB-I-2564-#dA5,2017-09-15,B,2017 autumn new shoes men,green,M,4345,2017-09-18,5473,The clothes are thick and it's better this winter. 150,DMQY-K-6579-#eS6,2017-09-21,A,2017 autumn new underwear men,yellow,37,2840,2017-09-25,5831,This price is very cost effective 200,GKLW-l-2897-#wQ7,2017-09-22,A,2017 Autumn New Jeans Men,blue,39,5879,2017-09-25,7200,The clothes are very comfortable to wear 300,HWEC-L-2531-#xP8,2017-09-23,A,2017 autumn new shoes women,brown,M,403,2017-09-26,607,good 100,IQPD-M-3214-#yQ1,2017-09-24,B,2017 Autumn New Wide Leg Pants Women,black,M,3045,2017-09-27,5021,very good. 350,LPEC-N-4572-#zX2,2017-09-25,B,2017 Autumn New Underwear Women,red,M,239,2017-09-28,407,The seller's service is very good 110,NQAB-O-3768-#sM3,2017-09-26,B,2017 autumn new underwear women,red,S,6089,2017-09-29,7021,The color is very good 210,HWNB-P-7879-#tN4,2017-09-27,B,2017 autumn new underwear women,red,L,3201,2017-09-30,4059,I like it very much and the quality is good. 230,JKHU-Q-8865-#uO5,2017-09-29,C,2017 Autumn New Clothes with Chiffon Shirt,black,M,2056,2017-10-02,3842,very good

- Log in to OBS Console, click Create Bucket, set the following parameters, and click Create Now.

Table 5 Bucket parameters Parameter

Value

Region

Dublin

Data Redundancy Policy

Single-AZ storage

Bucket Name

mrs-datasource

Default Storage Class

Standard

Bucket Policy

Private

Default Encryption

Disable

Direct Reading

Disable

Enterprise Project

default

Tags

-

- Wait until the bucket is created.

- Switch back to the MRS console and click the name of the created MRS cluster. On the Dashboard page, click the Synchronize button next to IAM User Sync. The synchronization takes about 5 minutes.

- Click Nodes and click a master node. On the displayed page, switch to the EIPs tab, click Bind EIP, select an existing EIP, and click OK. If no EIP is available, create one. Record the EIP.

- (Optional) Connect Hive to OBS.

Perform this step when Hive interconnects with OBS. Skip this step when Hive interconnects with HDFS.

- Go back to the MRS cluster page. Click the cluster name. On the Dashboard tab page of the cluster details page, click Access Manager. If a message is displayed indicating that EIP needs to be bound, bind an EIP first.

- In the Access MRS Manager dialog box, click OK. You will be redirected to the MRS Manager login page. Enter the username admin and its password for logging in to MRS Manager. The password is the one you entered when creating the MRS cluster.

- Interconnect Hive with OBS by referring to Interconnecting Hive with OBS.

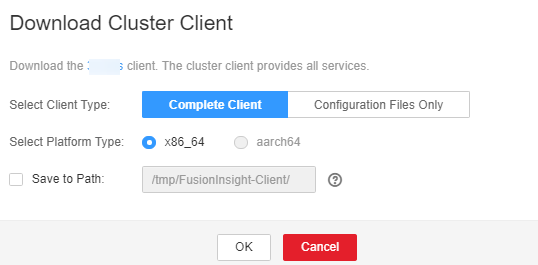

- Download the client.

- Go back to the MRS cluster page. Click the cluster name. On the Dashboard tab page of the cluster details page, click Access Manager. If a message is displayed indicating that EIP needs to be bound, bind an EIP first.

- In the Access MRS Manager dialog box, click OK. You will be redirected to the MRS Manager login page. Enter the username admin and its password for logging in to MRS Manager. The password is the one you entered when creating the MRS cluster.

- Choose Services > Download Client. Set Client Type to Only configuration files and set Download To to Server. Click OK.

- Log in to the active master node as user root and update the client configuration of the active management node.

cd /opt/client

sh refreshConfig.sh /opt/client Full_path_of_client_configuration_file_package

In this tutorial, run the following command:

sh refreshConfig.sh /opt/client /tmp/MRS-client/MRS_Services_Client.tar

- Switch to user omm and go to the directory where the Hive client is located.

su - omm

cd /opt/client

- Create the product_info table whose storage format is TEXTFILE on Hive.

- Import environment variables to the /opt/client directory.

source bigdata_env

If find: 'opt/client/Hudi': Permission denied is displayed, ignore it. This does not affect subsequent operations.

- Log in to the Hive client.

- If Kerberos authentication is enabled for the current cluster, run the following command to authenticate the current user. The current user must have the permission for creating Hive tables. . Configure a role with the required permissions. . Bind a role to the user. If Kerberos authentication is not enabled for the current cluster, you do not need to run the following command:

- Run the following command to start the Hive client:

- Run the following SQL commands in sequence to create a demo database and the product_info table:

1CREATE DATABASE demo;

1USE demo;

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18

DROP TABLE product_info; CREATE TABLE product_info ( product_price int , product_id char(30) , product_time date , product_level char(10) , product_name varchar(200) , product_type1 varchar(20) , product_type2 char(10) , product_monthly_sales_cnt int , product_comment_time date , product_comment_num int , product_comment_content varchar(200) ) row format delimited fields terminated by ',' stored as TEXTFILE;

- Import environment variables to the /opt/client directory.

- Import the product_info.txt file to Hive.

- Hive is interconnected with OBS: Go back to OBS Console, click the name of the bucket, choose Objects > Upload Object, and upload the product_info.txt file to the path of the product_info table in the OBS bucket.

- Hive is interconnected with HDFS: Import the product_info.txt file to the HDFS path /user/hive/warehouse/demo.db/product_info/. For details about how to import data to an MRS cluster, see section Managing Data Files in the MapReduce Service User Guide..

- Create an ORC table and import data to the table.

- Run the following SQL commands to create an ORC table:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18

DROP TABLE product_info_orc; CREATE TABLE product_info_orc ( product_price int , product_id char(30) , product_time date , product_level char(10) , product_name varchar(200) , product_type1 varchar(20) , product_type2 char(10) , product_monthly_sales_cnt int , product_comment_time date , product_comment_num int , product_comment_content varchar(200) ) row format delimited fields terminated by ',' stored as orc;

- Insert data in the product_info table into the Hive ORC table product_info_orc.

1insert into product_info_orc select * from product_info;

- Query whether the data import is successful.

1select * from product_info_orc;

- Run the following SQL commands to create an ORC table:

Creating an MRS Data Source Connection

- Log in to the GaussDB(DWS) console and click the created data warehouse cluster. Ensure that the GaussDB(DWS) and MRS clusters are in the same region, AZ, and VPC subnet.

- Click the MRS Data Source tab and click Create MRS Cluster Connection.

- Set the following parameters and click OK.

- Data Source: mrs_server

- Configuration Mode: MRS Account

- MRS Data Source: Select the created mrs_01 cluster.

- MRS Account: admin

- Password: Enter the password of the admin user created for the MRS data source.

Creating a Foreign Server

Perform this step only when Hive interconnects with OBS. Skip this step when Hive interconnects with HDFS.

- Use Data Studio to connect to the created GaussDB(DWS) cluster.

- Run the following statement to create a foreign server. {AK value} and {SK value} are obtained from Preparing the Environment.

Hard-coded or plaintext AK and SK are risky. For security purposes, encrypt your AK and SK and store them in the configuration file or environment variables.

1 2 3 4 5 6 7 8 9

CREATE SERVER obs_servevr FOREIGN DATA WRAPPER DFS_FDW OPTIONS ( address'obs.xxx.com:5443', //Address for accessing OBS. encrypt 'on', access_key '{AK value}', secret_access_key '{SK value}', type 'obs' );

- Check the foreign server.

1SELECT * FROM pg_foreign_server WHERE srvname='obs_server';

The server is successfully created if information similar to the following is displayed:

1 2 3 4

srvname | srvowner | srvfdw | srvtype | srvversion | srvacl | srvoptions --------------------------------------------------+----------+--------+---------+------------+--------+--------------------------------------------------------------------------------------------------------------------- obs_server | 16476 | 14337 | | | | {address=obs.xxx.com:5443,type=obs,encrypt=on,access_key=***,secret_access_key=***} (1 row)

Create an external schema.

- Obtain the internal IP address and port number of the Hive metastore service and the name of the Hive database to be accessed.

- Log in to the MRS console.

- Choose Cluster > Active Cluster and click the name of the cluster to be queried to enter the page displaying the cluster's basic information.

- Click Go to manager on the O&M Management page and enter the username and password to log in to the FusionInsight management page.

- Click Cluster, Hive, Configuration, All Configurations, MetaStore, and Port in sequence, and record the value of hive.metastore.port.

- Click Cluster, Hive, and Instance in sequence, and record the MetaStore management IP address of the host whose name contains master1.

- Create an external schema.

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19

//When interconnecting Hive with OBS: Set Server to the name of the external server created in , DATABASE to the database created on Hive, METAADDRESS to the IP address and port number of the Hive metastore service recorded in , and CONFIGURATION to the default configuration path of the MRS data source. DROP SCHEMA IF EXISTS ex1; CREATE EXTERNAL SCHEMA ex1 WITH SOURCE hive DATABASE 'demo' SERVER obs_server METAADDRESS '***.***.***.***:***' CONFIGURATION '/MRS/gaussdb/mrs_server' //When interconnecting Hive with HDFS: Set Server to mrs_server (name of the data source created in ), METAADDRESS to the IP address and port number of the Hive metastore service recorded in , and CONFIGURATION to the default configuration path of the MRS data source. DROP SCHEMA IF EXISTS ex1; CREATE EXTERNAL SCHEMA ex1 WITH SOURCE hive DATABASE 'demo' SERVER mrs_server METAADDRESS '***.***.***.***:***' CONFIGURATION '/MRS/gaussdb/mrs_server'

- View the created external schema.

1 2

SELECT * FROM pg_namespace WHERE nspname='ex1'; SELECT * FROM pg_external_namespace WHERE nspid = (SELECT oid FROM pg_namespace WHERE nspname = 'ex1');

1 2 3 4

nspid | srvname | source | address | database | confpath | ensoptions | catalog --------------------------------------------------+----------+--------+---------+------------+--------+--------------------------------------------------------------------------------------------------------------------- 16393 | obs_server | hive | ***.***.***.***:*** | demo | *** | | (1 row)

Importing Data

- Create a local table for data import.

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15

DROP TABLE IF EXISTS product_info; CREATE TABLE product_info ( product_price integer , product_id char(30) , product_time date , product_level char(10) , product_name varchar(200) , product_type1 varchar(20) , product_type2 char(10) , product_monthly_sales_cnt integer , product_comment_time date , product_comment_num integer , product_comment_content varchar(200) ) ;

- Import the target table from the Hive table.

1INSERT INTO product_info SELECT * FROM ex1.product_info_orc;

- Query the import result.

1SELECT * FROM product_info;

Exporting Data

- Create a local source table.

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16

DROP TABLE IF EXISTS product_info_export; CREATE TABLE product_info_export ( product_price integer , product_id char(30) , product_time date , product_level char(10) , product_name varchar(200) , product_type1 varchar(20) , product_type2 char(10) , product_monthly_sales_cnt integer , product_comment_time date , product_comment_num integer , product_comment_content varchar(200) ) ; INSERT INTO product_info_export SELECT * FROM product_info;

- Create a target table on Hive.

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18

DROP TABLE product_info_orc_export; CREATE TABLE product_info_orc_export ( product_price int , product_id char(30) , product_time date , product_level char(10) , product_name varchar(200) , product_type1 varchar(20) , product_type2 char(10) , product_monthly_sales_cnt int , product_comment_time date , product_comment_num int , product_comment_content varchar(200) ) row format delimited fields terminated by ',' stored as orc;

- Import the local source table to the Hive table.

1INSERT INTO ex1.product_info_orc_export SELECT * FROM product_info_export;

- Query the import result on Hive

1SELECT * FROM product_info_orc_export;

Feedback

Was this page helpful?

Provide feedbackThank you very much for your feedback. We will continue working to improve the documentation.