Concurrency

Overview

By default, each function instance processes only one request at a time. If FunctionGraph receives three concurrent requests, for example, it starts three function instances to process them. The single-instance multi-concurrency feature removes this one-request-per-instance limit by allowing multiple requests to be processed concurrently on one instance.

This feature suits functions that take a long time to initialize or that spend much of their time waiting for responses from downstream services.

The feature has the following advantages:

- Fewer cold starts and lower latency: With the default setting, FunctionGraph starts three instances to process three concurrent requests, which involves three cold starts. If you allow three concurrent requests per instance, only one instance is required, which involves only one cold start.

- Shorter processing duration and lower cost: With the default setting, the total duration of multiple requests is the sum of each request's processing time. With this feature configured, the total duration runs from the start of the first request to the end of the last request.

FunctionGraph automatically scales function instances in or out based on the number of requests. If the number of concurrent requests increases, FunctionGraph allocates more function instances to process the requests. If that number decreases, FunctionGraph allocates fewer function instances accordingly.

Number of function instances = Function concurrency/Concurrency per instance

- Function concurrency: the number of requests concurrently executed by a function at a certain time point.

- Concurrency per instance: the maximum number of concurrent requests allowed by a single instance. This is equivalent to the Max. Requests per Instance parameter on the Concurrency page.
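The formula above can be checked with a few lines of Python. This is a minimal sketch, not FunctionGraph's actual scheduler logic: the function name `instances_needed` is hypothetical, and rounding up to a whole instance is an assumption, since the formula as stated is a plain division.

```python
import math


def instances_needed(function_concurrency: int, concurrency_per_instance: int) -> int:
    """Estimate the number of instances for a given load.

    Assumption: the quotient is rounded up, because a fraction of an
    instance cannot be started.
    """
    if function_concurrency < 0:
        raise ValueError("function_concurrency must not be negative")
    if concurrency_per_instance < 1:
        raise ValueError("concurrency_per_instance must be at least 1")
    return math.ceil(function_concurrency / concurrency_per_instance)


# Three concurrent requests with one request per instance (the default).
print(instances_needed(3, 1))
# Three concurrent requests with up to five requests per instance.
print(instances_needed(3, 5))
# Twelve concurrent requests with up to five requests per instance.
print(instances_needed(12, 5))
```

Under the rounding assumption, the three calls print 3, 1, and 3.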

Comparison

If a function takes 5s to execute each time and you set Max. Requests per Instance to 1, three requests are processed on three separate instances. The total execution duration is therefore 15s (3 × 5s).

When you set Max. Requests per Instance to 5, if three requests are sent, they will be concurrently processed by one instance. The total execution time is 5s.

If the maximum number of requests per instance is greater than 1, new instances will be automatically added when this number is reached. The maximum number of instances will not exceed Max. Instances per Function you set.
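The two totals in this comparison (15s and 5s) can be reproduced with a small calculation. The sketch below is illustrative only: the function names are hypothetical, and it assumes that all requests in the multi-concurrency case run on one instance with no idle gap between them.

```python
def total_duration_single(durations):
    """One request per instance: the total is the sum of all request durations."""
    return sum(durations)


def total_duration_multi(intervals):
    """Several requests on one instance: the total runs from the start of the
    first request to the end of the last request.

    intervals is a list of (start, end) times in seconds.
    """
    starts = [start for start, _ in intervals]
    ends = [end for _, end in intervals]
    return max(ends) - min(starts)


# Three requests of 5s each, one per instance.
print(total_duration_single([5, 5, 5]))
# The same three requests arriving together on one instance.
print(total_duration_multi([(0, 5), (0, 5), (0, 5)]))
```

The first call prints 15 and the second prints 5, matching the figures in the text.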

| Comparison Item | Single-Instance Single-Concurrency | Single-Instance Multi-Concurrency |
|---|---|---|
| Log printing | None | To print logs, Node.js uses the console.info() function, Python uses the print() function, and Java uses the System.out.println() function. With one request per instance, the current request ID is included in the log content. When an instance processes multiple requests concurrently, however, the request IDs are incorrect if you continue to use these functions. In this case, use context.getLogger() to obtain a log output object. For example, in Python: `log = context.getLogger()` followed by `log.info("test")` |
| Shared variables | None | Modifying shared variables without protection will cause errors. Mutual exclusion protection is required when your function code modifies non-thread-safe variables. |
| Monitoring metrics | Perform monitoring based on the actual situation. | Under the same load, the number of function instances decreases significantly. |
| Flow control error | None | When the number of requests exceeds the processing capability of instances, FunctionGraph performs flow control on the requests. The error code in the body is FSS.0429, the status in the response header is 429, and the error message is "Your request has been controlled by overload sdk, please retry later." |

Notes and Constraints

- This feature is supported only by FunctionGraph v2.

- This feature is supported only for HTTP functions created from scratch, HTTP functions created using function templates, and functions created based on container images.

- If the number of concurrent requests per instance is set to 1 for an existing function created in another way, the value cannot be changed.

- To use the single-instance multi-concurrency feature for functions created in other ways, submit a service ticket to apply for whitelist access.

- For Python functions, threads on an instance are bound to one core because of the Python Global Interpreter Lock (GIL). As a result, concurrent requests can only be processed using a single core, not multiple cores. The function processing performance cannot be improved even if larger resource specifications are configured.

- For Node.js functions, the single-process, single-thread model of the V8 engine means that concurrent requests are processed using a single core only, not multiple cores. The function processing performance cannot be improved even if larger resource specifications are configured.
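The single-core constraint mainly limits CPU-bound work. In CPython, blocking waits such as `time.sleep()` or network I/O release the GIL, so requests that spend their time waiting on downstream services can still overlap on one instance. The sketch below is a local illustration only: `wait_for_downstream` is a hypothetical stand-in for a downstream call, and the thread pool merely imitates concurrent requests.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def wait_for_downstream(delay_s):
    """Stand-in for a blocking downstream call; sleeping releases the GIL."""
    time.sleep(delay_s)
    return delay_s


def run_concurrently(n_requests, delay_s):
    """Run n_requests waits in parallel threads and measure the wall-clock time."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=n_requests) as pool:
        results = list(pool.map(wait_for_downstream, [delay_s] * n_requests))
    elapsed = time.perf_counter() - start
    return elapsed, results


elapsed, results = run_concurrently(3, 0.2)
print(f"3 overlapping waits of 0.2s finished in about {elapsed:.2f}s")
```

Because the waits overlap, the elapsed time stays close to a single 0.2s wait rather than the 0.6s sum. A CPU-bound loop in place of the sleep would not show the same gain.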

Configuring Single-Instance Multi-Concurrency

- Log in to the FunctionGraph console. In the navigation pane, choose Functions > Function List.

- Click the name of a function.

- Choose Configuration > Concurrency.

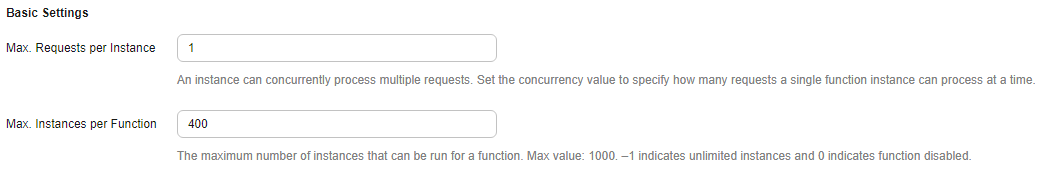

- Configure the concurrency for the function. Figure 1 Concurrency configuration

Table 2 Concurrency parameters

| Parameter | Description | Restrictions | Value Range | Default Value |
|---|---|---|---|---|
| Max. Requests per Instance | Number of concurrent requests supported by a single instance. | This parameter is only available for HTTP functions created from scratch, HTTP functions based on templates, and container image-based functions. If this parameter is set to 1, this parameter is not displayed and cannot be modified. If this parameter is set to a value greater than 1, this parameter is still displayed. | 1–1000 | 1 |
| Max. Instances per Function | Maximum number of on-demand instances that can be enabled for a function. | Requests that exceed the processing capability of instances will be discarded. Errors caused by excessive requests will not be displayed in function logs. You can obtain error details by referring to Asynchronous Notification Policy. | -1 or an integer ranging from 1 to 1000. The value -1 indicates that the number of instances is not limited. | 400 |

- Click Save.
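Once these limits are saved, requests beyond the processing capability of the instances are rejected with status 429, so a caller may want to retry with a delay. The sketch below shows one possible approach and is not an official client: `call_with_retry` and its parameters are hypothetical, `send` is any caller-supplied function that returns a `(status, body)` pair, and the exponential backoff policy is an assumption.

```python
import time


def call_with_retry(send, max_attempts=4, base_delay_s=0.1, sleep=time.sleep):
    """Call send() and retry with exponential backoff while it returns 429.

    send is a hypothetical callable that returns (status_code, body).
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        status, body = send()
        if status != 429:
            return status, body
        if attempt < max_attempts - 1:
            sleep(base_delay_s * (2 ** attempt))  # 0.1s, 0.2s, 0.4s, ...
    return status, body  # still throttled after the last attempt


# Local demonstration with a fake sender: throttled twice, then accepted.
responses = [(429, "FSS.0429"), (429, "FSS.0429"), (200, "ok")]
delays = []


def fake_send():
    return responses.pop(0)


result = call_with_retry(fake_send, sleep=delays.append)
print(result, delays)
```

Passing `sleep=delays.append` records the backoff delays instead of actually waiting, which keeps the demonstration fast. Real callers would leave the default `time.sleep`.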

Helpful Links

For details about function instance types and their usage modes, see Function Instance Types and Usage Modes.
