Containers

Containers and Docker

Containers are a kernel virtualization technology originating with Linux. They provide lightweight virtualization to isolate processes and resources. Containers became popular with the emergence of Docker, the first system to make containers fully portable, so that a container can easily be run on any machine. Docker simplifies the packaging of applications together with their libraries and dependencies. Even an entire OS file system can be bundled into a simple, portable package that can be used on any machine running Docker.

Containers isolate and allocate resources similarly to VMs. Unlike VMs, however, containers virtualize the OS rather than the hardware, which makes them more portable and efficient.

Containers have the following advantages over VMs:

- Better resource utilization

There is no overhead for virtualizing hardware and running a complete OS, which allows containers to outperform VMs in application execution speed, memory overhead, and file storage speed. As a result, a host with a given configuration can usually run more applications in containers than in VMs.

- Faster startup

A traditional VM typically takes several minutes to start an application, but a Docker container can launch an application in a few seconds, or even milliseconds. This is because containerized applications run directly on the host kernel, so there is no need to boot an entire OS. Running applications in containers therefore saves considerable time in development, testing, and deployment.

- Consistent environment

Inconsistent development, test, and production environments are a common pain point in application development. As a result, some issues cannot be detected prior to rollout. Because a Docker container image provides the complete runtime environment for an application except the kernel, the application runs in the same environment at every stage.

- Easier migration

Docker provides a consistent execution environment across many platforms, both physical and virtual. Regardless of what platform Docker is running on, the applications run the same way, which makes migrating them much easier. With Docker, you do not have to worry that an application running fine on one platform will fail in a different environment.

- Easier maintenance and extension

A Docker image is built up from a series of stacked layers. When you create a container, a writable container layer is added on top of the image layers. Because identical layers are shared and reused, application maintenance and updates become simpler, and so does extending an image from a base image. In addition, Docker collaborates with open source project teams to maintain a large number of high-quality official images. You can use them directly in production environments or build your own images on top of them, which greatly reduces the cost of creating images.

Typical Process of Using Docker Containers

Before using a Docker container, you should know the core components in Docker.

- Image: A Docker image is an executable software package that contains everything needed to run an application: the file system and other metadata, such as the path of the executable to run when the image is started.

- Image repository: A Docker image repository stores Docker images, which can be shared between different users and computers. You can run an image on the computer where it was created, or you can push it to an image repository, pull it to another computer, and then run it there. Some repositories are public, and anyone can pull images from them. Others are private and are accessible only to certain users and machines.

- Container: A Docker container is usually a Linux container created from a Docker image. A running container is a process running on the Docker host, but it is isolated from the host and all other processes running on the host. The process is also resource-limited, and it can only access and use resources (such as CPU and memory) allocated to it.
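The resource limits mentioned for containers can be set when a container is started. The following is a minimal sketch, assuming the `--cpus` and `--memory` options of `docker run`; the helper name `run_limited` and the default limit values are hypothetical and chosen for illustration only.

```shell
# Hypothetical helper: start a detached container with CPU and memory limits.
# Arguments: <container-name> <image> [cpus] [memory]
run_limited() (
  name="$1"
  image="$2"
  cpus="${3:-0.5}"      # assumed default: half of one CPU core
  memory="${4:-256m}"   # assumed default: 256 MiB of memory
  docker run -d --name "$name" --cpus "$cpus" --memory "$memory" "$image"
)
```

For example, `run_limited web nginx:latest 1 512m` would start an Nginx container limited to one CPU core and 512 MiB of memory, and `docker stats --no-stream web` would then show its usage against those limits.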

Figure 2 shows the typical process of using containers.

- A developer develops an application and creates an image on the development machine.

- The developer sends a command to Docker for pushing the image.

After receiving the command, Docker pushes the local image to the image repository.

- The developer sends an image running command to the production machine.

After the command is received, Docker pulls the image from the image repository to the machine and then launches a container based on the image.
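The process above maps onto a handful of Docker commands. A minimal sketch follows, assuming a hypothetical repository address `registry.example.com/myorg` and image name `myapp`. The `run` wrapper is an illustration aid, not part of Docker: it only prints each command unless `DRY_RUN=0` is set.

```shell
# Illustration aid: print each command, and execute it only when DRY_RUN=0.
run() {
  echo "+ $*"
  if [ "${DRY_RUN:-1}" = "0" ]; then
    "$@"
  fi
}

REPO="registry.example.com/myorg"   # hypothetical image repository
IMAGE="$REPO/myapp:v1"              # hypothetical image name and tag

# On the development machine: build the image and push it to the repository.
run docker build -t "$IMAGE" .
run docker push "$IMAGE"

# On the production machine: pull the image and launch a container from it.
run docker pull "$IMAGE"
run docker run -d "$IMAGE"
```

Running the sketch as is prints the four commands in order, which is a safe way to review them before executing anything with `DRY_RUN=0`.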

Example

In the following example, Docker packages a container image based on an Nginx image, runs an application based on the container image, and pushes the container image to an image repository.

Installing Docker

Docker is compatible with almost all OSs. Select whichever Docker version best suits your needs.

The following uses CentOS 7.5 64-bit (40 GiB) as an example to describe how to quickly install Docker.

- Add a yum repository.

yum install epel-release -y
yum clean all

- Install the required software packages.

yum install -y yum-utils device-mapper-persistent-data lvm2

- Configure the Docker yum repository.

yum-config-manager --add-repo https://mirrors.huaweicloud.com/docker-ce/linux/centos/docker-ce.repo
sed -i 's+download.docker.com+mirrors.huaweicloud.com/docker-ce+' /etc/yum.repos.d/docker-ce.repo

- Check the available Docker version.

yum list docker-ce --showduplicates | sort -r

Information similar to the following is displayed:

Loading mirror speeds from cached hostfile
Loaded plugins: fastestmirror
docker-ce.x86_64    3:26.1.4-1.el7    docker-ce-stable
docker-ce.x86_64    3:26.1.3-1.el7    docker-ce-stable
docker-ce.x86_64    3:26.1.2-1.el7    docker-ce-stable
...

- Install Docker of the specified version. To facilitate the subsequent configuration of the image accelerator, use a Docker version from 18.06.0 to 24.0.9.

sudo yum install docker-ce-24.0.9 docker-ce-cli-24.0.9 containerd.io

Docker 24.0.9 is used in this example. If you choose a different version, replace 24.0.9 with the specific version number.

- Start Docker.

systemctl enable docker  # Set Docker to start automatically upon system boot.
systemctl start docker   # Start Docker.

- Check the installation result.

docker --version

Information similar to the following is displayed:

Docker version 24.0.9, build 2936816
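The recommended version range (18.06.0 to 24.0.9) can be checked in a script rather than by eye. The following is a minimal sketch, assuming GNU `sort -V` is available, as it is on CentOS 7. The function names `docker_version_from` and `version_in_range` are hypothetical.

```shell
# Extract the version number from a line such as
# "Docker version 24.0.9, build 2936816".
docker_version_from() {
  printf '%s\n' "$1" | sed -n 's/^Docker version \([0-9][0-9.]*\).*/\1/p'
}

# Succeed when min <= version <= max, using version-aware sorting.
version_in_range() (
  v="$1"; min="$2"; max="$3"
  lowest="$(printf '%s\n%s\n' "$min" "$v" | sort -V | head -n 1)"
  highest="$(printf '%s\n%s\n' "$v" "$max" | sort -V | tail -n 1)"
  [ "$lowest" = "$min" ] && [ "$highest" = "$max" ]
)

v="$(docker_version_from "Docker version 24.0.9, build 2936816")"
if version_in_range "$v" 18.06.0 24.0.9; then
  echo "$v is within the recommended range"
else
  echo "$v is outside the recommended range"
fi
```

On a real host, the literal string would be replaced with `"$(docker --version)"`.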

Packaging a Docker Image

Docker provides a convenient way to describe how an application is packaged: a Dockerfile. The following steps use a Dockerfile to create a simple custom Nginx image.

- Configure an image accelerator. An image accelerator speeds up the download of popular open source images and avoids slow or failed downloads from third-party repositories such as Docker Hub caused by network problems. Image accelerators are only available in certain regions. To configure one, perform the following operations:

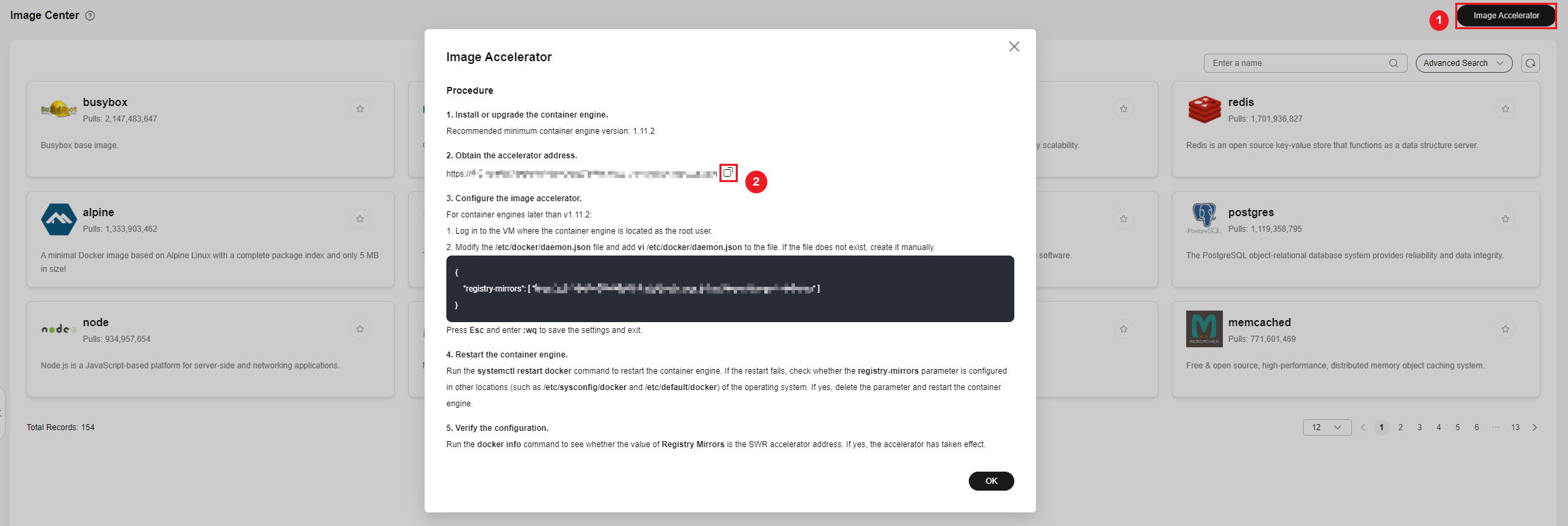

- Log in to the SWR console.

- In the navigation pane, choose Image Resources > Image Center. Ensure that Image Center is available in the current region.

- Click Image Accelerator. In the displayed dialog box, click the copy icon to copy the accelerator address.

Figure 3 Copying an accelerator address

- Modify the /etc/docker/daemon.json file.

vim /etc/docker/daemon.json

Add the following content to the file:

{
  "registry-mirrors": ["accelerator-address"]
}

- Restart the container engine.

systemctl restart docker

If the restart fails, check whether the registry-mirrors parameter is configured in another location of the OS, such as /etc/sysconfig/docker or /etc/default/docker. If the parameter is configured in another location, delete it from there and restart the container engine.

- View the Docker details.

docker info

If the value of Registry Mirrors is the accelerator address, the accelerator has been configured.

...
Registry Mirrors:
 https://xxx.mirror.swr.myhuaweicloud.com/
...
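The daemon.json edit above can also be scripted instead of done in an editor. The following is a minimal sketch, assuming the file holds no other settings, because the sketch overwrites it. `accelerator-address` remains a placeholder for the address copied from the console. The `DAEMON_JSON` variable, which defaults to a scratch path, is a hypothetical convenience so the sketch can be tried without touching /etc/docker.

```shell
# Target file: defaults to a scratch path for a trial run (assumption).
# Set DAEMON_JSON=/etc/docker/daemon.json to apply the change for real.
DAEMON_JSON="${DAEMON_JSON:-/tmp/daemon.json}"
MIRROR="accelerator-address"   # placeholder: replace with the copied address

# Write the registry mirror configuration (overwrites any existing file).
cat > "$DAEMON_JSON" <<EOF
{
  "registry-mirrors": ["$MIRROR"]
}
EOF

# Show the result for review before restarting Docker.
cat "$DAEMON_JSON"
```

After writing the real file, restart Docker and confirm the setting with `docker info` as described in the steps above.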

- Create a file named Dockerfile in the mynginx directory.

mkdir mynginx
cd mynginx
touch Dockerfile

- Edit the Dockerfile file.

vim Dockerfile

Add the following content to Dockerfile:

# Use the Nginx image as the base image.
FROM nginx:latest

# Replace the content of index.html with "hello world".
RUN echo "hello world" > /usr/share/nginx/html/index.html

# Declare that the container listens on port 80 (the port is published with -p at run time).
EXPOSE 80

- Package the image.

docker build -t hello .

-t tags the image with a name. In this example, the image name is hello. The period (.) specifies the current directory as the build context, which is where Docker looks for the Dockerfile.

- Check whether the image has been created.

docker images

If information similar to the following is displayed, the image has been created:

REPOSITORY   TAG      IMAGE ID       CREATED          SIZE
hello        latest   1ff61881be30   10 seconds ago   236MB
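The example overview mentions running an application from the image, which the steps above stop short of. The following is a minimal sketch of a smoke test, assuming `curl` is installed and host port 8080 is free. The function name `smoke_test`, the container name `hello-test`, and the one-second wait are hypothetical choices.

```shell
# Hypothetical helper: run the image, fetch the page, and compare the body.
# Arguments: <image> [host-port]
smoke_test() (
  image="$1"
  port="${2:-8080}"   # assumed free host port, mapped to container port 80

  # Start a detached container that is removed automatically once stopped.
  docker run -d --rm --name hello-test -p "$port:80" "$image" >/dev/null || exit 1
  sleep 1             # crude wait for Nginx to start accepting connections

  body="$(curl -s "http://localhost:$port")"
  docker stop hello-test >/dev/null

  # The Dockerfile wrote "hello world" into index.html.
  [ "$body" = "hello world" ]
)
```

With the image built above, `smoke_test hello && echo "image serves the expected page"` runs the check. The `-p` option is what actually publishes the container port on the host.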

Pushing the Image to an Image Repository

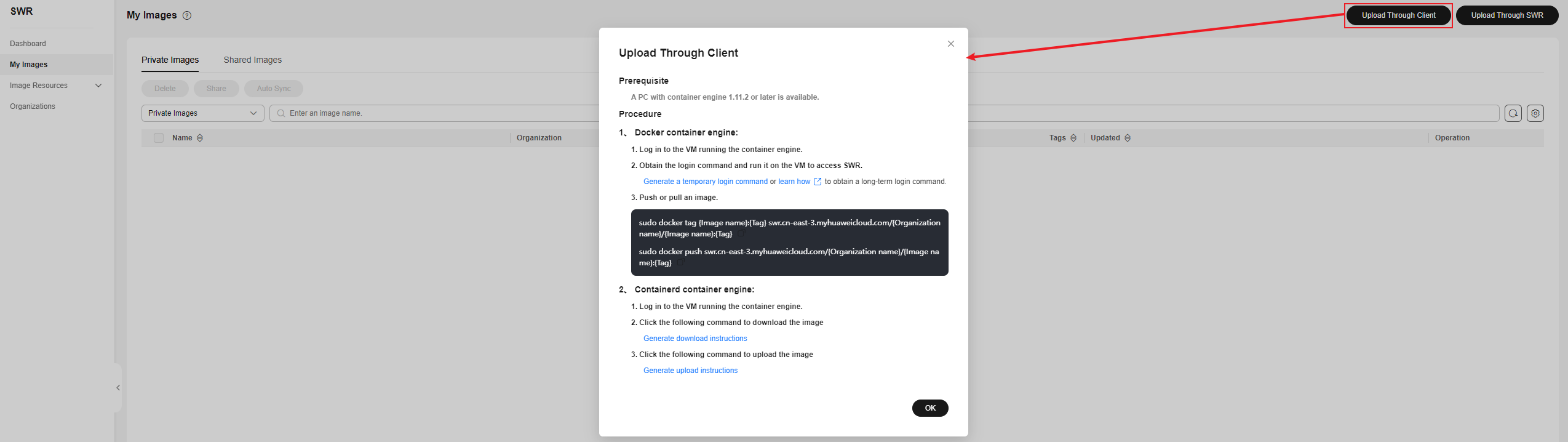

- Log in to the SWR console. In the navigation pane, choose My Images. On the page displayed, click Upload Through Client. In the dialog box displayed, click Generate a temporary login command. Then, copy the command and run it on the local host to log in to the SWR image repository.

- Before pushing an image, specify a complete name for the image.

docker tag hello swr.cn-east-3.myhuaweicloud.com/container/hello:v1

The command details are as follows:

- swr.cn-east-3.myhuaweicloud.com is the repository address, which varies with the region.

- container is the organization name. An organization is typically created in SWR. If no organizations are available, an organization will be automatically created when an image is pushed to SWR for the first time. Each organization name is unique in a single region.

- v1 is the tag allocated to the hello image.
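The parts of the image name listed above can be assembled in a script, which helps when the region or organization changes. The following is a minimal sketch; the function name `image_ref` is hypothetical.

```shell
# Compose a full image name: <repository-address>/<organization>/<image>:<tag>
image_ref() (
  registry="$1"
  org="$2"
  name="$3"
  tag="${4:-latest}"   # Docker assumes "latest" when no tag is given
  printf '%s/%s/%s:%s\n' "$registry" "$org" "$name" "$tag"
)

image_ref swr.cn-east-3.myhuaweicloud.com container hello v1
# prints: swr.cn-east-3.myhuaweicloud.com/container/hello:v1
```

The result can be passed straight to the tagging command, for example `docker tag hello "$(image_ref swr.cn-east-3.myhuaweicloud.com container hello v1)"`.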

- Push the image to SWR.

docker push swr.cn-east-3.myhuaweicloud.com/container/hello:v1

- Pull the image.

docker pull swr.cn-east-3.myhuaweicloud.com/container/hello:v1
