Configuring Destination Information

Overview

This topic describes how to configure destination information for a data integration task. ROMA Connect writes data to the destination based on this information, which includes the destination data source and the data storage. The parameters to configure vary by destination data source type.

Constraints

During data migration, if a primary key conflict occurs at the destination, data is automatically updated based on the primary key.

API

| Parameter | Description |
|---|---|
| Request Parameters | Define the parameters of the API request. For example, the data to be integrated to the destination must be defined in Body. Set this parameter based on the definition of the API data source. |
| Data Root Field | The path of the upper-layer common fields shared by all parameters in the JSON body sent to the destination. Data Root Field and Body in Request Parameters together form the request body sent to the destination API. For example, if the body parameter is {"c":"xx","d":"xx"} and Data Root Field is set to a.b, the encapsulated request data is {"a":{"b":{"c":"xx","d":"xx"}}}. |
| Mapping Rules | Configure rules that map source data fields to destination data fields so that the data obtained from the source is converted into destination data. For details, see Configuring Mapping Rules. |
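The Data Root Field behavior described in the table can be illustrated with a short sketch. It assumes only what the example states: the dotted path is split on periods and the body is nested under each segment, outermost first. The helper name wrap_with_data_root is hypothetical and is not part of ROMA Connect.

```python
from typing import Any


def wrap_with_data_root(body: Any, data_root_field: str) -> Any:
    """Nest `body` under the dotted Data Root Field path (hypothetical helper).

    An empty Data Root Field leaves the body unchanged.
    """
    if not data_root_field:
        return body
    wrapped = body
    # Wrap from the innermost segment outward: "a.b" -> {"a": {"b": body}}
    for segment in reversed(data_root_field.split(".")):
        wrapped = {segment: wrapped}
    return wrapped


if __name__ == "__main__":
    print(wrap_with_data_root({"c": "xx", "d": "xx"}, "a.b"))
    # {'a': {'b': {'c': 'xx', 'd': 'xx'}}}
```

The same helper also covers the array case, where the body is a list and the root field (for example, record) names the array.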

Description on Body Parameter Configuration

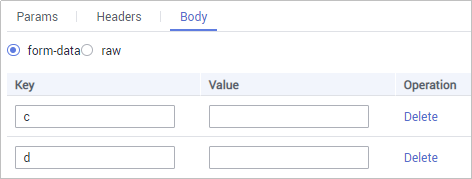

- form-data mode:

Set Key to the parameter name defined by the API data source and leave Value empty. The key will be used as the destination field name in mapping information to map and transfer the value of the source field.

Figure 1 form-data mode

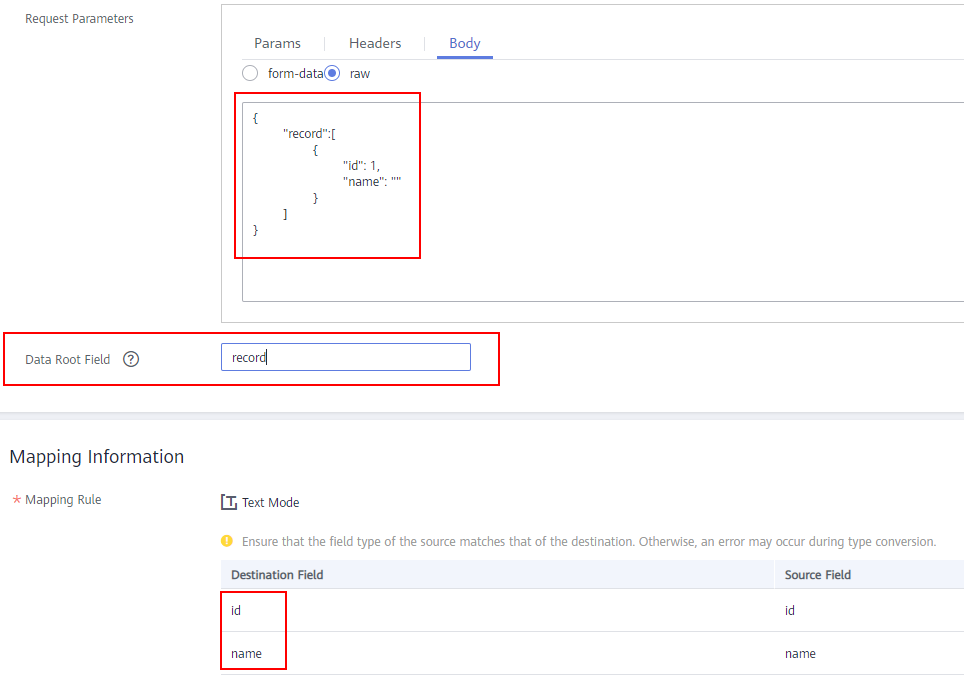

- Raw mode:

Raw mode supports the JSON, array, and nested JSON formats. Enter, in JSON format, an example of the body to be sent to the destination API. ROMA Connect replaces the parameter values in the example according to the mapping rules and then transfers the source data to the destination. Example bodies in raw mode:

- JSON format:

{ "id": 1, "name": "name1" }

Enter the body in JSON format, leave Data Root Field empty, and set the field names in Mapping Information.

- Array format:

{ "record":[ { "id": 1, "name": "" } ] }

Set Data Root Field to the name of the JSON array object, for example, record, and enter the field names in Mapping Information.

- Nested JSON format:

{ "startDate":"", "record":[ { "id": 1, "name": "" } ] }

Leave Data Root Field blank. In Mapping Information, set each JSON field (such as startDate) to its field name, and set each JSON array field to its full path, for example, record[0].id.
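As a rough illustration of how a raw-mode example body could be filled in, the sketch below copies the example body and writes mapped values into it by path, using the record[0].id style from the nested JSON example. This is an assumption about the mechanism, not ROMA Connect's actual implementation; fill_example_body and set_by_path are hypothetical names.

```python
import copy
import re
from typing import Any, Dict

# One path segment: a key name, optionally followed by an array index such as [0].
_SEGMENT = re.compile(r"^(\w+)(?:\[(\d+)\])?$")


def set_by_path(document: Any, path: str, value: Any) -> None:
    """Write `value` at a path such as "startDate" or "record[0].id" (hypothetical helper)."""
    segments = path.split(".")
    node = document
    for position, segment in enumerate(segments):
        match = _SEGMENT.match(segment)
        if match is None:
            raise ValueError(f"unsupported path segment: {segment!r}")
        name, index = match.group(1), match.group(2)
        is_last = position == len(segments) - 1
        if index is None:
            if is_last:
                node[name] = value
            else:
                node = node[name]
        elif is_last:
            node[name][int(index)] = value
        else:
            node = node[name][int(index)]


def fill_example_body(example_body: Dict[str, Any], mapped_values: Dict[str, Any]) -> Dict[str, Any]:
    """Copy the example body and overwrite the values addressed by each mapped path."""
    body = copy.deepcopy(example_body)  # keep the configured example unchanged
    for path, value in mapped_values.items():
        set_by_path(body, path, value)
    return body


if __name__ == "__main__":
    example = {"startDate": "", "record": [{"id": 1, "name": ""}]}
    mapped = {"startDate": "2024-01-01", "record[0].id": 7, "record[0].name": "name7"}
    print(fill_example_body(example, mapped))
```

Copying the example first means the configured template stays reusable for every source record.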

ActiveMQ

| Parameter | Description |
|---|---|
| Destination Type | Select the message transfer model of the ActiveMQ data source: Topic or Queue. |
| Destination Name | Enter the name of the topic or queue to which data is sent. The topic or queue must already exist. |
| Metadata | Define, in JSON format, each underlying key-value data element to be written to the destination. The number of source fields to be integrated determines the number of metadata records to define at the destination. |
| Mapping Rules | Configure rules that map source data fields to destination data fields so that the data obtained from the source is converted into destination data. For details, see Configuring Mapping Rules. |

Description on Metadata Parsing Path Configuration

- Data in JSON format does not contain arrays:

For example, in the following JSON data written to the destination, the complete paths for elements a to d are a, a.b, a.b.c, and a.b.d, respectively. Elements c and d are underlying data elements.

{ "a": { "b": { "c": "xx", "d": "xx" } } }

In this scenario, set Parsing Path of element c to a.b.c and Parsing Path of element d to a.b.d.

- Data in JSON format contains arrays:

For example, in the following JSON data written to the destination, the complete paths for elements a to d are a, a.b, a.b[i].c, and a.b[i].d, respectively. Elements c and d are underlying data elements.

{ "a": { "b": [{ "c": "xx", "d": "xx" }, { "c": "yy", "d": "yy" } ] } }

In this scenario, set Parsing Path of element c to a.b[i].c and Parsing Path of element d to a.b[i].d.
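To make the path notation concrete, the following sketch resolves a parsing path against a JSON document and returns every value it addresses. It assumes, as the examples suggest, that a segment ending in [i] means "each element of this array". The function resolve_parsing_path is hypothetical and only illustrates the notation; it is not how ROMA Connect is implemented.

```python
from typing import Any, List


def resolve_parsing_path(document: Any, path: str) -> List[Any]:
    """Return every value addressed by a path such as "a.b.c" or "a.b[i].c"."""
    nodes: List[Any] = [document]
    for segment in path.split("."):
        is_array = segment.endswith("[i]")
        name = segment[:-3] if is_array else segment
        next_nodes: List[Any] = []
        for node in nodes:
            child = node[name]
            if is_array:
                next_nodes.extend(child)  # fan out over every array element
            else:
                next_nodes.append(child)
        nodes = next_nodes
    return nodes


if __name__ == "__main__":
    flat = {"a": {"b": {"c": "xx", "d": "xx"}}}
    nested = {"a": {"b": [{"c": "xx", "d": "xx"}, {"c": "yy", "d": "yy"}]}}
    print(resolve_parsing_path(flat, "a.b.c"))       # ['xx']
    print(resolve_parsing_path(nested, "a.b[i].c"))  # ['xx', 'yy']
```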

The preceding JSON data that does not contain arrays is used as an example. The following describes the configuration when the destination is ActiveMQ:

ArtemisMQ

| Parameter | Description |
|---|---|
| Destination Type | Select the message transfer model of the ArtemisMQ data source: Topic or Queue. |
| Destination Name | Enter the name of the topic or queue to which data is sent. The topic or queue must already exist. |
| Extended Metadata | Define, in JSON format, each underlying key-value data element to be written to the destination. The number of source fields to be integrated determines the number of metadata records to define at the destination. |
| Mapping Rules | Configure rules that map source data fields to destination data fields so that the data obtained from the source is converted into destination data. For details, see Configuring Mapping Rules. |

Description on Metadata Parsing Path Configuration

- Data in JSON format does not contain arrays:

For example, in the following JSON data written to the destination, the complete paths for elements a to d are a, a.b, a.b.c, and a.b.d, respectively. Elements c and d are underlying data elements.

{ "a": { "b": { "c": "xx", "d": "xx" } } }

In this scenario, set Parsing Path of element c to a.b.c and Parsing Path of element d to a.b.d.

- Data in JSON format contains arrays:

For example, in the following JSON data written to the destination, the complete paths for elements a to d are a, a.b, a.b[i].c, and a.b[i].d, respectively. Elements c and d are underlying data elements.

{ "a": { "b": [{ "c": "xx", "d": "xx" }, { "c": "yy", "d": "yy" } ] } }

In this scenario, set Parsing Path of element c to a.b[i].c and Parsing Path of element d to a.b[i].d.

The configuration when the destination is ArtemisMQ is similar to that when the destination is ActiveMQ. For details, see ActiveMQ configuration example.

DB2

| Parameter | Description |
|---|---|
| Table | Select the data table to which data will be written. Then, click Select Table Field and select only the column fields that you want to write. |
| Batch Number Field | Select a field in the destination table whose type is String and whose length is greater than 14 characters. The batch number field cannot be any of the destination fields used in the mapping rules. ROMA Connect fills this field with a generated batch number: all data inserted in the same batch shares the same batch number, so the value can be used to locate or roll back that batch. |
| Batch Number Format | The batch number can be in yyyyMMddHHmmss or UUID format. The UUID format is recommended because batch numbers in yyyyMMddHHmmss format are not guaranteed to be unique; for example, two batches written within the same second get the same value. |
| Mapping Rules | Configure rules that map source data fields to destination data fields so that the data obtained from the source is converted into destination data. For details, see Configuring Mapping Rules. |
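The Batch Number Field and Batch Number Format parameters also appear for the DWS, MySQL, Oracle, PostgreSQL, and SQL Server destinations. The sketch below shows why the timestamp format can collide while UUIDs do not, and how one batch number is shared by every row in a batch. It assumes the UUID is written in its canonical 36-character hyphenated form; make_batch_number and stamp_batch are hypothetical names, not ROMA Connect APIs.

```python
import uuid
from datetime import datetime
from typing import Dict, List, Optional


def make_batch_number(batch_format: str, now: Optional[datetime] = None) -> str:
    """Generate a batch number in one of the two documented formats (sketch)."""
    if batch_format == "yyyyMMddHHmmss":
        # Second-level resolution: two batches in the same second collide.
        return (now or datetime.now()).strftime("%Y%m%d%H%M%S")
    if batch_format == "UUID":
        # Assumption: canonical hyphenated form, 36 characters long.
        return str(uuid.uuid4())
    raise ValueError(f"unsupported batch number format: {batch_format}")


def stamp_batch(rows: List[Dict[str, object]], batch_field: str,
                batch_number: str) -> List[Dict[str, object]]:
    """Return copies of `rows` with the same batch number written to `batch_field`."""
    return [{**row, batch_field: batch_number} for row in rows]


if __name__ == "__main__":
    same_second = datetime(2024, 5, 1, 12, 0, 0)
    first = make_batch_number("yyyyMMddHHmmss", same_second)
    second = make_batch_number("yyyyMMddHHmmss", same_second)
    print(first == second)  # True: timestamp batch numbers are not unique
    print(stamp_batch([{"id": 1}, {"id": 2}], "batch_no", make_batch_number("UUID")))
```

Because every row in a batch carries the same value, a query filtering on the batch number field selects exactly that batch for inspection or rollback.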

DWS

| Parameter | Description |
|---|---|
| Table | Select the data table to which data will be written. Then, click Select Table Field and select only the table fields that you want to write. |
| Batch Number Field | Select a field in the destination table whose type is String and whose length is greater than 14 characters. The batch number field cannot be any of the destination fields used in the mapping rules. ROMA Connect fills this field with a generated batch number: all data inserted in the same batch shares the same batch number, so the value can be used to locate or roll back that batch. |
| Batch Number Format | The batch number can be in yyyyMMddHHmmss or UUID format. The UUID format is recommended because batch numbers in yyyyMMddHHmmss format are not guaranteed to be unique; for example, two batches written within the same second get the same value. |
| Mapping Rules | Configure rules that map source data fields to destination data fields so that the data obtained from the source is converted into destination data. For details, see Configuring Mapping Rules. |

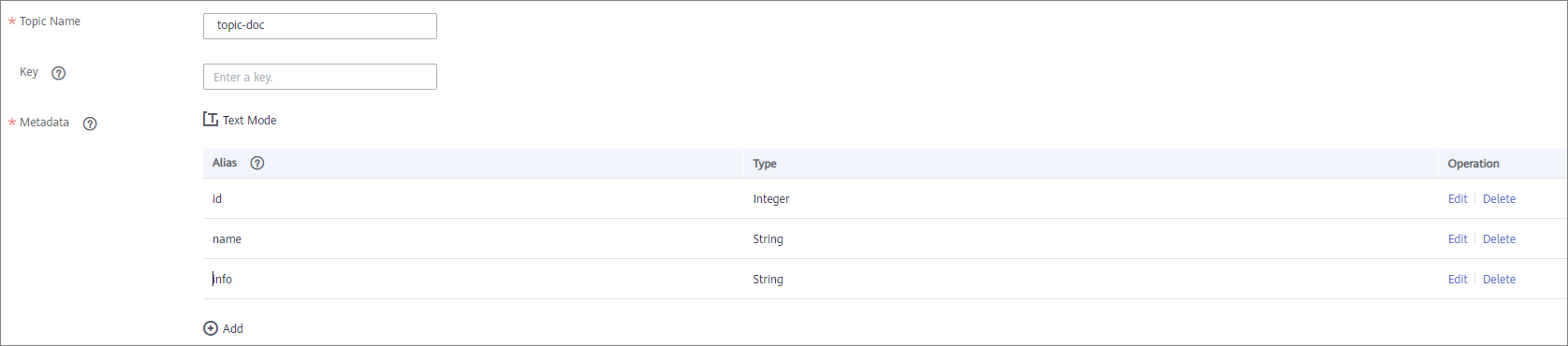

Kafka

| Parameter | Description |
|---|---|
| Topic Name | Select the name of the topic to which data is to be written. |
| Key | Enter the message key. Messages with the same key are stored in the same partition, so the partition can be used as an ordered message queue. If this parameter is left empty, messages are distributed across different partitions. |
| Metadata | Define the data fields to be written to the destination Kafka. The number of source fields to be integrated determines the number of metadata records to define at the destination. |
| Mapping Rules | Configure rules that map source data fields to destination data fields so that the data obtained from the source is converted into destination data. For details, see Configuring Mapping Rules. |

The following figure shows a configuration example when the destination is Kafka. id, name, and info are the data fields to be written to the Kafka data source.

The structure of the message written to Kafka is {"id":"xx", "name":"yy", "info":"zz"}, where xx, yy, and zz are the data values transferred from the source.
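The sketch below shows how such a message value could be assembled from the metadata fields and a source record, and why a fixed Key keeps messages in one partition. It is illustrative only: zlib.crc32 stands in for the murmur2 hash that Kafka's default partitioner uses for keyed messages, and round-robin stands in for keyless placement, which real Kafka clients handle differently. build_kafka_value and choose_partition are hypothetical names.

```python
import json
import zlib
from typing import Mapping, Optional


def build_kafka_value(field_mapping: Mapping[str, str], source_row: Mapping[str, object]) -> str:
    """Build the JSON message value; `field_mapping` maps destination field -> source field."""
    return json.dumps({dest: source_row[src] for dest, src in field_mapping.items()})


def choose_partition(key: Optional[str], num_partitions: int, sequence: int) -> int:
    """Pick a partition: the same key always hashes to the same partition.

    Assumption: crc32 replaces Kafka's murmur2, and round-robin replaces the
    client's keyless placement strategy.
    """
    if key:
        return zlib.crc32(key.encode("utf-8")) % num_partitions
    return sequence % num_partitions


if __name__ == "__main__":
    mapping = {"id": "id", "name": "name", "info": "info"}
    print(build_kafka_value(mapping, {"id": "xx", "name": "yy", "info": "zz"}))
    # {"id": "xx", "name": "yy", "info": "zz"}
```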

MySQL

| Parameter | Description |
|---|---|
| Table | Select an existing table and click Select Table Field to select only the column fields that you want to integrate. |
| Batch Number Field | Select a field in the destination table whose type is String and whose length is greater than 14 characters. The batch number field cannot be any of the destination fields used in the mapping rules. ROMA Connect fills this field with a generated batch number: all data inserted in the same batch shares the same batch number, so the value can be used to locate or roll back that batch. |
| Batch Number Format | The batch number can be in yyyyMMddHHmmss or UUID format. The UUID format is recommended because batch numbers in yyyyMMddHHmmss format are not guaranteed to be unique; for example, two batches written within the same second get the same value. |
| Mapping Rules | Configure rules that map source data fields to destination data fields so that the data obtained from the source is converted into destination data. For details, see Configuring Mapping Rules. |

MongoDB

| Parameter | Description |
|---|---|
| Table | Select the data set to which data will be written (a data set is equivalent to a table in a relational database). Then, click Select Fields in Set and select only the column fields that you want to write. |
| Upsert | Specify whether to upsert data at the destination: when enabled, data that already exists in the destination data set is updated directly, and data that does not exist is inserted. |
| Upsert key | This parameter is mandatory only if Upsert is enabled. Select the field used to match incoming data against existing data during the upsert. |
| Mapping Rules | Configure rules that map source data fields to destination data fields so that the data obtained from the source is converted into destination data. For details, see Configuring Mapping Rules. |

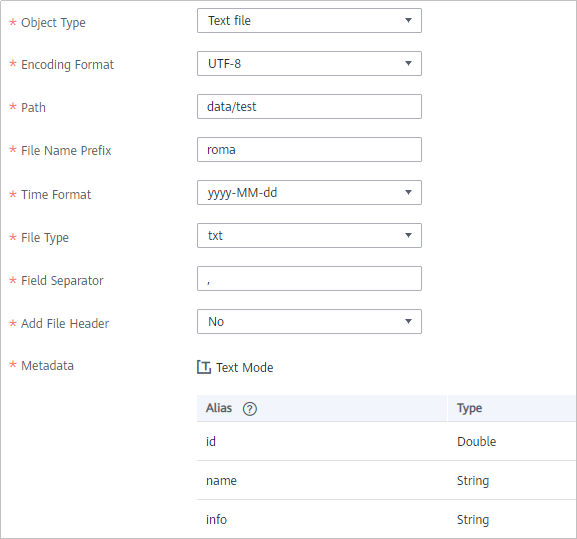
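To clarify the difference between plain inserts and upserts, the following in-memory sketch treats a list of dictionaries as a MongoDB data set. With Upsert enabled, documents whose upsert key matches an existing document update it, and the rest are inserted, roughly what MongoDB's update with the upsert option does per document. write_documents is a hypothetical helper, not a ROMA Connect or MongoDB API.

```python
from typing import Any, Dict, List, Optional

Document = Dict[str, Any]


def write_documents(collection: List[Document], incoming: List[Document],
                    upsert: bool, upsert_key: Optional[str] = None) -> List[Document]:
    """Insert or upsert `incoming` documents into an in-memory `collection`."""
    if not upsert:
        # Plain insert: every incoming document becomes a new document.
        collection.extend(dict(doc) for doc in incoming)
        return collection
    if upsert_key is None:
        raise ValueError("upsert_key is required when Upsert is enabled")
    # Index existing documents by the upsert key for matching.
    index = {doc[upsert_key]: doc for doc in collection if upsert_key in doc}
    for doc in incoming:
        existing = index.get(doc[upsert_key])
        if existing is not None:
            existing.update(doc)  # update fields of the matching document
        else:
            new_doc = dict(doc)
            collection.append(new_doc)
            index[doc[upsert_key]] = new_doc
    return collection


if __name__ == "__main__":
    data_set = [{"id": 1, "name": "a"}]
    print(write_documents(data_set, [{"id": 1, "name": "b"}, {"id": 2, "name": "c"}], True, "id"))
```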

OBS

| Parameter | Description |
|---|---|
| Object Type | Select the type of the data file to be written to the OBS data source. Currently, Text file and Binary file are supported. |
| Encoding Format | This parameter is mandatory only if Object Type is set to Text file. Select the encoding of the data file to be written to the OBS data source: UTF-8 or GBK. |
| Path | Enter the name of the object to be written to the OBS data source. The value of Path cannot end with a slash (/). |
| File Name Prefix | Enter the prefix of the file name. This parameter is used together with Time Format to define the name of the file to be written to the OBS data source. |
| Time Format | Select the time format to be used in the file name. This parameter is used together with File Name Prefix to define the name of the data file to be written to the OBS data source. |
| File Type | Select the format of the data file to be written to the OBS data source. A text file can be in TXT or CSV format, and a binary file can be in XLS or XLSX format. |
| Advanced Attributes | This parameter is mandatory only if File Type is set to csv. Select whether to configure the advanced properties of the file. |
| Newline | This parameter is mandatory only if Advanced Attributes is set to Enable. Enter the newline character used to separate data lines in the file. |
| Enclosure Character | This parameter is mandatory only if Advanced Attributes is set to Enable. If you select Use, each data field in the data file is enclosed in double quotation marks ("), so a field that contains the separator or newline character is not split into multiple fields. For example, if the source data contains the field aa\|bb and the vertical bar (\|) is set as the separator, the field is written as aa\|bb in the destination data file and is not split into aa and bb. |
| Field Separator | This parameter is mandatory only if File Type is set to txt or Advanced Attributes is set to Enable. Enter the field separator used to distinguish the fields in each row of data. |
| Add File Header | Specify whether to add a file header to the data file to be written. The file header is the first line or lines at the beginning of a file and helps identify the file content. |
| File Header | This parameter is mandatory only if Add File Header is set to Yes. Enter the file header information. Use commas (,) to separate multiple file headers. |
| Metadata | Define the data fields to be written to the destination file. The number of source fields to be integrated determines the number of metadata records to define at the destination. |
| Mapping Rules | Configure rules that map source data fields to destination data fields so that the data obtained from the source is converted into destination data. For details, see Configuring Mapping Rules. |

The following figure shows a configuration example when the destination is OBS. id, name, and info are the data fields to be written to the OBS data source.
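To illustrate how the file-related OBS parameters interact, the sketch below composes an object name and renders rows with and without the enclosure character. Several details are assumptions: the object name is taken to be Path, a slash, then File Name Prefix plus the formatted time and an extension; Time Format is given as a Python strftime pattern rather than the console's own pattern syntax; and the comma-separated File Header is treated as column names written with the field separator. object_key and render_text are hypothetical helpers.

```python
import csv
import io
from datetime import datetime
from typing import List, Optional, Sequence


def object_key(path: str, prefix: str, time_format: str, now: datetime, extension: str) -> str:
    """Compose a hypothetical object name from Path, File Name Prefix, and Time Format."""
    if path.endswith("/"):
        raise ValueError("Path cannot end with a slash (/)")
    return f"{path}/{prefix}{now.strftime(time_format)}.{extension}"


def render_text(rows: Sequence[Sequence[object]], separator: str = "|", newline: str = "\n",
                enclose: bool = True, file_header: Optional[str] = None) -> str:
    """Render rows as delimited text, optionally enclosing every field in double quotes."""
    header: List[str] = file_header.split(",") if file_header else []
    if enclose:
        buffer = io.StringIO()
        # QUOTE_ALL wraps every field in ", so embedded separators stay inside one field.
        writer = csv.writer(buffer, delimiter=separator, lineterminator=newline,
                            quoting=csv.QUOTE_ALL)
        if header:
            writer.writerow(header)
        writer.writerows(rows)
        return buffer.getvalue()
    # Without enclosure, fields are simply joined, so "aa|bb" becomes ambiguous.
    lines = [separator.join(header)] if header else []
    lines.extend(separator.join(str(value) for value in row) for row in rows)
    return newline.join(lines) + newline


if __name__ == "__main__":
    print(object_key("data/out", "orders_", "%Y%m%d%H%M%S", datetime(2024, 5, 1, 12, 0, 0), "csv"))
    print(render_text([[1, "aa|bb", "x"]], file_header="id,name,info"), end="")
    print(render_text([[1, "aa|bb", "x"]], enclose=False), end="")
```

Reading the enclosed output back with a CSV reader that uses the same separator yields aa|bb as one field, whereas the unenclosed line splits into four fields.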

Oracle

| Parameter | Description |
|---|---|
| Table | Select an existing table and click Select Table Field to select only the column fields that you want to integrate. |
| Batch Number Field | Select a field in the destination table whose type is String and whose length is greater than 14 characters. The batch number field cannot be any of the destination fields used in the mapping rules. ROMA Connect fills this field with a generated batch number: all data inserted in the same batch shares the same batch number, so the value can be used to locate or roll back that batch. |
| Batch Number Format | The batch number can be in yyyyMMddHHmmss or UUID format. The UUID format is recommended because batch numbers in yyyyMMddHHmmss format are not guaranteed to be unique; for example, two batches written within the same second get the same value. |
| Mapping Rules | Configure rules that map source data fields to destination data fields so that the data obtained from the source is converted into destination data. For details, see Configuring Mapping Rules. |

For the Oracle destination data source, if the source primary key field is empty, the record is discarded by default and no scheduling log error code is generated.
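Because such records are dropped without an error code, it can help to check source data beforehand. The sketch below separates rows that would be written from rows that would be discarded. It assumes that "empty" means a missing value, None, or an empty string; the exact rule ROMA Connect applies is not stated here, and split_by_primary_key is a hypothetical helper.

```python
from typing import Dict, Iterable, List, Tuple

Row = Dict[str, object]


def split_by_primary_key(rows: Iterable[Row], pk_field: str) -> Tuple[List[Row], List[Row]]:
    """Return (kept, dropped), where dropped rows have an empty primary key (assumed rule)."""
    kept: List[Row] = []
    dropped: List[Row] = []
    for row in rows:
        value = row.get(pk_field)
        if value is None or value == "":
            dropped.append(row)  # would be discarded silently at an Oracle destination
        else:
            kept.append(row)
    return kept, dropped


if __name__ == "__main__":
    kept, dropped = split_by_primary_key([{"id": 1}, {"id": None}, {"id": ""}, {"name": "x"}], "id")
    print(len(kept), len(dropped))  # 1 3
```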

PostgreSQL

| Parameter | Description |
|---|---|
| Auto Create Table | Specify whether to automatically create a data table. |
| Destination Table | This parameter is displayed only when Auto Create Table is enabled. Enter the name of the table to be created automatically. |
| Table | This parameter is displayed only when Auto Create Table is disabled. Select the data table to which data is to be written and click Select Table Field to select the column fields to be integrated. |
| Batch Number Field | Select a field in the destination table whose type is String and whose length is greater than 14 characters. The batch number field cannot be any of the destination fields used in the mapping rules. ROMA Connect fills this field with a generated batch number: all data inserted in the same batch shares the same batch number, so the value can be used to locate or roll back that batch. |
| Batch Number Format | The batch number can be in yyyyMMddHHmmss or UUID format. The UUID format is recommended because batch numbers in yyyyMMddHHmmss format are not guaranteed to be unique; for example, two batches written within the same second get the same value. |
| Mapping Rules | Configure rules that map source data fields to destination data fields so that the data obtained from the source is converted into destination data. For details, see Configuring Mapping Rules. |

RabbitMQ

| Parameter | Description |
|---|---|
| New Queue Creation | Specify whether to create a new queue in the RabbitMQ data source. |
| Exchange Mode | Select the routing mode that the exchange in the RabbitMQ data source uses to forward messages to the queue. If New Queue Creation is set to Yes, select the routing mode for the new queue. If New Queue Creation is set to No, select the same routing mode as that of the existing destination queue. |
| Exchange Name | Enter the name of the exchange in the RabbitMQ data source. If New Queue Creation is set to Yes, enter the exchange name for the new queue. If New Queue Creation is set to No, enter the same exchange name as that of the existing destination queue. |
| Routing Key | This parameter is mandatory only if Exchange Mode is set to Direct or Topic. RabbitMQ uses the routing key as the matching condition, and messages that meet the condition are forwarded to the queue. If New Queue Creation is set to Yes, enter the routing key of the new queue. If New Queue Creation is set to No, enter the same routing key as that of the existing destination queue. |
| Message Parameters | This parameter is mandatory only if Exchange Mode is set to Headers. RabbitMQ uses the headers as the matching condition, and messages that meet the condition are forwarded to the queue. If New Queue Creation is set to Yes, enter the headers of the new queue. If New Queue Creation is set to No, enter the same headers as those of the existing destination queue. |
| Queue Name | This parameter is mandatory only if New Queue Creation is set to Yes. Enter the name of the new queue. |
| Automatic Deletion | Specifies whether the queue is automatically deleted when no client is connected to it. |
| Persistence | Specifies whether messages in the queue are stored persistently. |
| Metadata | Define, in JSON format, each underlying key-value data element to be written to the destination. The number of source fields to be integrated determines the number of metadata records to define at the destination. |
| Mapping Rules | Configure rules that map source data fields to destination data fields so that the data obtained from the source is converted into destination data. For details, see Configuring Mapping Rules. |
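The Routing Key and Message Parameters conditions follow RabbitMQ's standard exchange semantics. The sketch below models those rules to show which messages a queue binding would receive: direct exchanges compare keys for equality, topic exchanges treat "*" as exactly one dot-separated word and "#" as zero or more words, and headers exchanges use x-match all or any. It is a simplified model for illustration (for example, it ignores empty routing keys and newer x-match variants), not RabbitMQ's implementation, and the function names are hypothetical.

```python
from typing import Dict


def direct_matches(binding_key: str, routing_key: str) -> bool:
    """Direct exchange: the routing key must equal the binding key."""
    return binding_key == routing_key


def topic_matches(binding_key: str, routing_key: str) -> bool:
    """Topic exchange: "*" matches one word, "#" matches zero or more words."""
    pattern = binding_key.split(".")
    words = routing_key.split(".")

    def match(p: int, w: int) -> bool:
        if p == len(pattern):
            return w == len(words)
        token = pattern[p]
        if token == "#":
            # "#" may absorb any number of remaining words, including none.
            return any(match(p + 1, k) for k in range(w, len(words) + 1))
        if w == len(words):
            return False
        if token == "*" or token == words[w]:
            return match(p + 1, w + 1)
        return False

    return match(0, 0)


def headers_match(binding_args: Dict[str, object], message_headers: Dict[str, object]) -> bool:
    """Headers exchange: x-match "all" (default) or "any"; keys starting with x- are ignored."""
    mode = binding_args.get("x-match", "all")
    pairs = {k: v for k, v in binding_args.items() if not k.startswith("x-")}
    hits = [k in message_headers and message_headers[k] == v for k, v in pairs.items()]
    return any(hits) if mode == "any" else all(hits)


if __name__ == "__main__":
    print(topic_matches("order.*", "order.created"))  # True
    print(topic_matches("order.*", "order"))          # False
```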

Description on Metadata Parsing Path Configuration

- Data in JSON format does not contain arrays:

For example, in the following JSON data written to the destination, the complete paths for elements a to d are a, a.b, a.b.c, and a.b.d, respectively. Elements c and d are underlying data elements.

{ "a": { "b": { "c": "xx", "d": "xx" } } }

In this scenario, set Parsing Path of element c to a.b.c and Parsing Path of element d to a.b.d.

- Data in JSON format contains arrays:

For example, in the following JSON data written to the destination, the complete paths for elements a to d are a, a.b, a.b[i].c, and a.b[i].d, respectively. Elements c and d are underlying data elements.

{ "a": { "b": [{ "c": "xx", "d": "xx" }, { "c": "yy", "d": "yy" } ] } }

In this scenario, set Parsing Path of element c to a.b[i].c and Parsing Path of element d to a.b[i].d.

The preceding JSON data that does not contain arrays is used as an example. The following describes the configuration when the destination is RabbitMQ:

SQL Server

| Parameter | Description |
|---|---|
| Table | Select an existing table and click Select Table Field to select only the column fields that you want to integrate. |
| Batch Number Field | Select a field in the destination table whose type is String and whose length is greater than 14 characters. The batch number field cannot be any of the destination fields used in the mapping rules. ROMA Connect fills this field with a generated batch number: all data inserted in the same batch shares the same batch number, so the value can be used to locate or roll back that batch. |
| Batch Number Format | The batch number can be in yyyyMMddHHmmss or UUID format. The UUID format is recommended because batch numbers in yyyyMMddHHmmss format are not guaranteed to be unique; for example, two batches written within the same second get the same value. |
| Mapping Rules | Configure rules that map source data fields to destination data fields so that the data obtained from the source is converted into destination data. For details, see Configuring Mapping Rules. |
