Monitoring a Real-Time Job

In real-time processing mode, data is processed as it arrives, which suits scenarios that require high real-time performance. A real-time job is a pipeline that consists of one or more nodes. You can configure a scheduling policy for each node, and the tasks started by nodes can keep running indefinitely. In this type of job, lines with arrows represent only service relationships, not task execution processes or data flows.

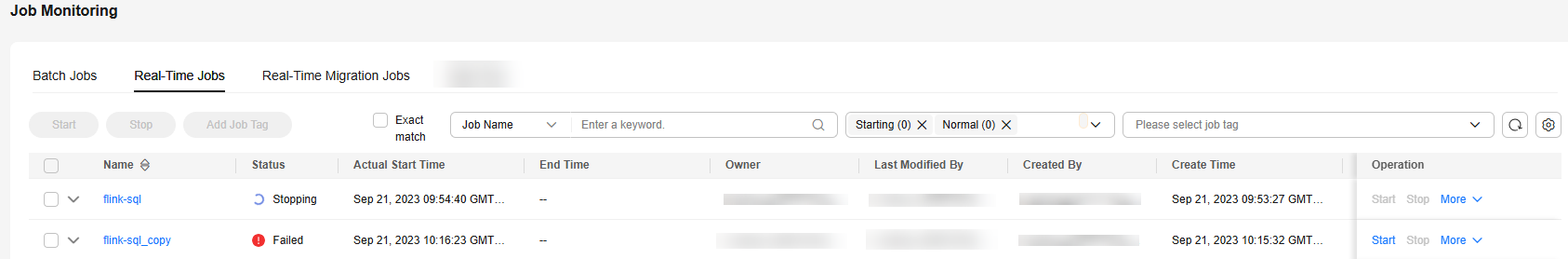

You can choose Monitor Job and click the Real-Time Job Monitoring tab to view the job status, start time, and end time, and perform the operations listed in Table 1.

| Operation | Description |
|---|---|
| Filtering jobs by Job Name, Owner, CDM Job, or Node Type | You can select Exact match to search for a job by its name. |
| Filtering jobs by job status or job tag | N/A |
| Performing operations on jobs in a batch | Select jobs and perform batch operations on them, including starting them, stopping them, and adding tags to them. |
| Viewing job instance status | Click job in front of the |
| Job status-related operations | In the Operation column of a job, you can start, stop, pause, and resume the job, and add tags to the job. NOTE: When pausing a job, you can select Apply immediately or Apply next time. Apply immediately: The current job instance is paused immediately, and no new job instance is generated. Apply next time: The current job instance continues to run, but no new job instance is generated. |
| Adding a job tag | Click Add Job Tag. The dialog box is displayed. |
| Viewing node information of a job | Click a job name. On the displayed page, click a node to view its associated job/scripts and monitoring information. NOTE: If event-driven scheduling is configured for a node in the job, the subjob monitoring page is displayed when you click the node. |
| Disabling and restoring a node | Click a job name. On the displayed page, right-click a node and select Disable. After the node is disabled, you can right-click it and select Restore to restore it in another location. For details, see Real-Time Job Monitoring: Disabling and Restoring a Node. |
| Viewing the run log of a node | Click a job name. On the displayed page, right-click a node and select View Run Log to view the logs of the node. |
| Configuring scheduling | Click a job name. On the displayed page, right-click the node where event-driven scheduling is configured and select Configure Scheduling to modify the scheduling information of the node. For details, see Real-Time Job Monitoring: Configuring Scheduling for a Node Where Event-driven Scheduling Is Configured. |
| Clearing stream messages | Click a job name. On the displayed page, right-click the node where event-driven scheduling is configured and select Clear Stream Message. |
| Viewing logs | For real-time processing single-task Flink SQL and Flink JAR jobs, you can click More and select View Log to view the logs of the jobs. NOTE: This function is unavailable if the MRS cluster version is not supported. |
| More operations | Click More and select Add Job Tag to add tags to the job. Click More and select View Log to view the run logs of Flink SQL and Flink JAR jobs. Click More and select FlinkUI. If the current node is not running, the node cannot be viewed. Click More and select Monitor Metric. The page can be displayed only if the elastic resource pool where the queue is located is associated with a Prometheus instance. Click More and select Rerun to rerun a real-time job. Click More and select Trigger Savepoint. Savepoints can be triggered only when a job is running. You can click a job name to access the job details page and set a savepoint. The storage path can be an OBS or HDFS path. After a savepoint is triggered, you can go to the State Sets page to view the savepoint. |

Click a job name. On the displayed page, view the job parameters, properties, and instances.

Click a node of a job to view the node properties, script content, node monitoring information, state set management, and historical execution records. On the Nodes tab page, you can view the run logs of the real-time job. On the Execution Records page, you can filter records by time period or job node status. In the Operation column, you can view logs and real-time monitoring metrics of the job.

- You can view the historical execution records of only DLI Flink, MRS Flink, and DLI Spark jobs.

- Execution records of the last 180 days are retained.

- You can view savepoints of MRS Flink jobs on the State Sets page. State sets include checkpoints and savepoints, and both are listed on that page. Only the five latest savepoints are retained.

- In MRS_3.3.0-LTS.0.1 and later versions, you can obtain the checkpoint list only after restarting the cluster.

- The savepoints of MRS Flink jobs are obtained through MRS management-plane APIs. If the MRS management plane is unavailable, the savepoints cannot be obtained, and you need to trigger savepoints manually. The job startup function still runs properly.
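The two retention limits in the notes above (execution records of the last 180 days, and the five latest savepoints) can be illustrated with a minimal sketch. The service applies these limits itself; the record types, field names, and function names below are hypothetical, and treating the 180-day boundary as inclusive is an assumption.

```python
from datetime import datetime, timedelta
from typing import List, NamedTuple

RECORD_RETENTION = timedelta(days=180)  # retention period stated in the notes
MAX_SAVEPOINTS = 5                      # savepoint limit stated in the notes


class ExecutionRecord(NamedTuple):
    # Hypothetical shape of an execution record, for illustration only.
    started_at: datetime
    node_name: str


class Savepoint(NamedTuple):
    # Hypothetical shape of a savepoint entry, for illustration only.
    created_at: datetime
    path: str


def visible_records(records: List[ExecutionRecord], now: datetime) -> List[ExecutionRecord]:
    """Keep records that started within the last 180 days (boundary inclusive by assumption)."""
    cutoff = now - RECORD_RETENTION
    return [r for r in records if r.started_at >= cutoff]


def retained_savepoints(savepoints: List[Savepoint]) -> List[Savepoint]:
    """Keep the five most recent savepoints, newest first."""
    newest_first = sorted(savepoints, key=lambda s: s.created_at, reverse=True)
    return newest_first[:MAX_SAVEPOINTS]
```

Under this reading, triggering a sixth savepoint would drop the oldest one from the list.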

In addition, you can view the current job version and status, start, rerun, and edit jobs, determine whether to display metric monitoring, and set the job refresh frequency.

Real-Time Job Monitoring: Disabling and Restoring a Node

You can disable a node in a real-time job and restore it in another location.

- Log in to the DataArts Studio console by following the instructions in Accessing the DataArts Studio Instance Console.

- On the DataArts Studio console, locate a workspace and click DataArts Factory.

- In the left navigation pane of DataArts Factory, choose .

- On the Real-Time Job Monitoring tab page, click a job name.

- On the displayed page, right-click the node and select Disable.

- Right-click the node and choose Resume from the shortcut menu. The Resume Node Running dialog box is displayed. Table 2 describes its parameters.

Figure 2 Resuming node running

Table 2 Resumption parameters

| Parameter | Description |
|---|---|
| Last Paused | Start time when a node is suspended. |
| Tasks Not Run | Number of tasks that are not running during node suspension. |
| Run From | Parameter for performing the tasks generated during the pause period. Position from which running restarts. Options: Paused node; The first node of the subjob. |
| Concurrent Tasks | Parameter for performing the tasks generated during the pause period. Number of tasks to be processed. |
| Task Name | Parameter for performing the tasks generated during the pause period. Task to be resumed. |

Real-Time Job Monitoring: Configuring Scheduling for a Node Where Event-driven Scheduling Is Configured

If event-driven scheduling is configured for a node in a real-time job, right-click the node on the job monitoring details page and choose Configure Scheduling from the shortcut menu to view and modify the scheduling information about the node.

- Log in to the DataArts Studio console by following the instructions in Accessing the DataArts Studio Instance Console.

- On the DataArts Studio console, locate a workspace and click DataArts Factory.

- In the left navigation pane of DataArts Factory, choose .

- On the Real-Time Job Monitoring tab page, click a job name.

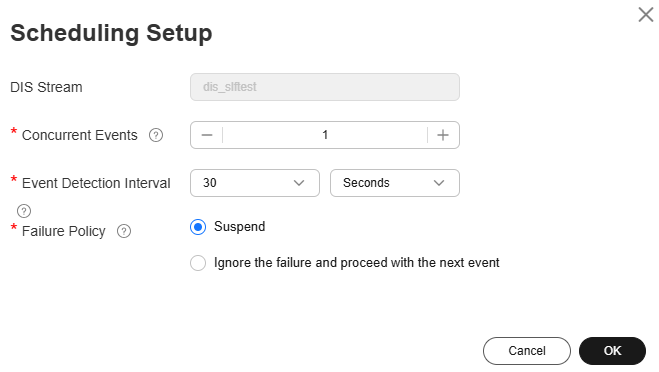

- On the displayed page, right-click the node where event-driven scheduling is configured, select Configure Scheduling, and configure the parameters shown in Table 3.

Figure 3 Configuring scheduling

Table 3 Scheduling policy parameters

| Parameter | Description |
|---|---|
| DIS Stream | Name of the DIS stream. When a new message is sent to the specified DIS stream, DataArts Factory transfers the new message to the job to trigger the job running. |
| Concurrent Events | Number of jobs that can be concurrently processed. The maximum number of concurrent events is 128. |
| Event Detection Interval | Interval for event detection. The unit of the interval can be Seconds or Minutes. |
| Failure Policy | Select a policy to be performed after scheduling fails. Options: Stop scheduling; Ignore the failure and proceed with the next event. |

Figure 4 Configuring a DIS scheduling policy
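The constraints in Table 3 can be captured in a small validation sketch. This is an illustration only, not a DataArts Factory API: the class and field names are hypothetical, and the lower bound of 1 for concurrent events and the requirement that the interval be positive are assumptions (the table states only the maximum of 128 and the allowed units).

```python
from dataclasses import dataclass
from enum import Enum

MAX_CONCURRENT_EVENTS = 128  # maximum stated in Table 3


class IntervalUnit(Enum):
    SECONDS = "Seconds"
    MINUTES = "Minutes"


class FailurePolicy(Enum):
    STOP_SCHEDULING = "Stop scheduling"
    IGNORE_AND_CONTINUE = "Ignore the failure and proceed with the next event"


@dataclass(frozen=True)
class EventSchedule:
    """Hypothetical container for the Table 3 parameters."""
    dis_stream: str
    concurrent_events: int
    detection_interval: int
    interval_unit: IntervalUnit
    failure_policy: FailurePolicy

    def __post_init__(self) -> None:
        if not self.dis_stream:
            raise ValueError("DIS Stream must not be empty")
        # Assumption: at least one concurrent event; the upper bound is from Table 3.
        if not 1 <= self.concurrent_events <= MAX_CONCURRENT_EVENTS:
            raise ValueError("Concurrent Events must be between 1 and 128")
        # Assumption: the detection interval must be a positive number.
        if self.detection_interval <= 0:
            raise ValueError("Event Detection Interval must be positive")

    def interval_seconds(self) -> int:
        """Return the detection interval normalized to seconds."""
        factor = 60 if self.interval_unit is IntervalUnit.MINUTES else 1
        return self.detection_interval * factor
```

For example, `EventSchedule("my-stream", 8, 2, IntervalUnit.MINUTES, FailurePolicy.STOP_SCHEDULING)` passes validation, while a value of 129 for concurrent events raises `ValueError`. The stream name here is a placeholder.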

SQL Statement Complexity

You can check the SQL statement complexity of a real-time processing single-task Flink SQL (including MRS Flink SQL) job.

SQL statement complexity: The system automatically collects statistics on the keywords in SQL statements and calculates SQL complexity based on the statistics.

- Collection of keywords in SQL statements

Number of keywords in a SQL statement = Quantity of JOIN + Quantity of GROUP BY + Quantity of ORDER BY + Quantity of DISTINCT + Quantity of window functions + MAX((Quantity of INSERT|Quantity of UPDATE|Quantity of DELETE), 1)

If the number of SQL keywords is much greater than 20, parsing will take a long time, and the job will be in the queue for a long time. You are advised to reduce the number of keywords in the SQL statement.

- SQL statement complexity calculation

- If the number of keywords in a SQL statement is 3 or fewer, the complexity is 1.

- If the number of keywords in a SQL statement is from 4 to 6, the complexity is 1.5.

- If the number of keywords in a SQL statement is from 7 to 19, the complexity is 2.

- If the number of keywords in a SQL statement is 20 or more, the complexity is 4.

- The following is an example SQL statement:

SELECT DISTINCT total1 FROM (SELECT id1, COUNT(f1) AS total1 FROM in1 GROUP BY id1) tmp1 ORDER BY total1 DESC LIMIT 100;

In the preceding example:

- DISTINCT quantity: 1

- GROUP BY quantity: 1

- ORDER BY quantity: 1

- MAX((INSERT quantity|UPDATE quantity|DELETE quantity), 1) = MAX(0|0|0, 1) = 1

- Number of keywords in the SQL statement = 1 + 1 + 1 + 1 = 4

The number of keywords in the SQL statement is less than or equal to 6 and greater than or equal to 4, so the SQL statement complexity is 1.5.
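The keyword count and the complexity tiers can be reproduced with a short sketch. It approximates the rules described above and is not the system's actual parser: the regular expressions, the function names, the detection of window functions through an `OVER (` clause, and the reading of `INSERT|UPDATE|DELETE` as the largest of the three counts are all assumptions made for illustration.

```python
import re

# Patterns for the keywords counted by the formula above.
# Regex matching is an approximation chosen for this sketch.
COUNTED_PATTERNS = {
    "JOIN": r"\bJOIN\b",
    "GROUP BY": r"\bGROUP\s+BY\b",
    "ORDER BY": r"\bORDER\s+BY\b",
    "DISTINCT": r"\bDISTINCT\b",
    # Assumption: a window function is recognized by its OVER ( clause.
    "WINDOW": r"\bOVER\s*\(",
}
DML_PATTERNS = (r"\bINSERT\b", r"\bUPDATE\b", r"\bDELETE\b")


def _count(pattern: str, sql: str) -> int:
    """Count case-insensitive occurrences of a pattern in the statement."""
    return len(re.findall(pattern, sql, flags=re.IGNORECASE))


def count_keywords(sql: str) -> int:
    """Apply the keyword formula to one SQL statement."""
    counted = sum(_count(p, sql) for p in COUNTED_PATTERNS.values())
    # Assumption: "INSERT|UPDATE|DELETE" means the largest of the three counts.
    dml = max(_count(p, sql) for p in DML_PATTERNS)
    return counted + max(dml, 1)


def complexity(keywords: int) -> float:
    """Map a keyword count to the complexity tiers listed above."""
    if keywords <= 3:
        return 1.0
    if keywords <= 6:
        return 1.5
    if keywords <= 19:
        return 2.0
    return 4.0


example = (
    "SELECT DISTINCT total1 FROM (SELECT id1, COUNT(f1) AS total1 "
    "FROM in1 GROUP BY id1) tmp1 ORDER BY total1 DESC LIMIT 100;"
)
print(count_keywords(example), complexity(count_keywords(example)))
```

For the example statement, the sketch counts one DISTINCT, one GROUP BY, one ORDER BY, and the minimum of 1 for the DML term, which gives 4 keywords and a complexity of 1.5, matching the walkthrough above.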
