Performing PyTorch NPU Distributed Training in a ModelArts Lite Resource Pool Using Ranktable-based Route Planning

Description

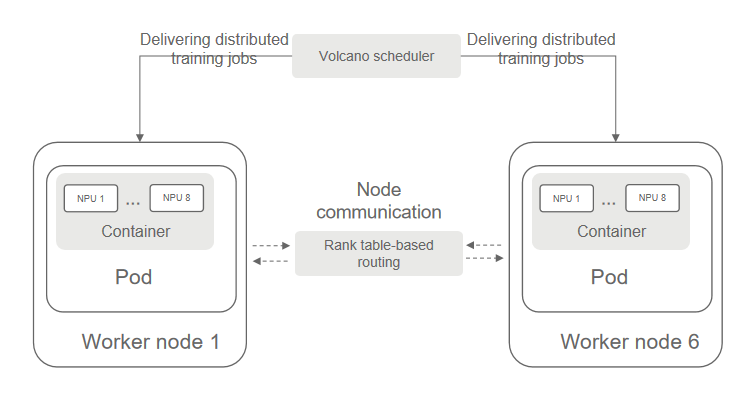

Ranktable-based route planning is a communication optimization for distributed parallel training. On NPUs, it plans network route affinity for the communication paths between nodes based on the actual switch topology, which speeds up communication between nodes.

This case describes how to run a PyTorch NPU distributed training job in a ModelArts Lite resource pool using ranktable route planning. By default, training tasks are submitted to the Lite resource pool cluster as Volcano jobs.

Constraints

- This function is available only in CN Southwest-Guiyang1. If you want to use it in another region, contact technical support.

- Huawei Cloud Volcano plug-in 1.10.12 or later must be installed in the CCE cluster that corresponds to the ModelArts Lite resource pool. For details about how to install and upgrade the Volcano scheduler, see Volcano Scheduler. Only the Huawei Cloud Volcano plug-in supports route acceleration.

- Training must use Python 3.7 or 3.9. Otherwise, ranktable route acceleration cannot be used.

- A training job must have at least three task nodes. Otherwise, ranktable routing is skipped. Ranktable routing is recommended for large model scenarios, that is, jobs with 512 or more cards.

- The script execution directory must not be a shared directory. Otherwise, ranktable routing fails.

- Ranktable routing changes the rank numbers of the nodes. Therefore, all code must use the same, reassigned rank consistently. Otherwise, training will behave abnormally.
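The numeric constraints above can be checked before a job is submitted. The following is a minimal sketch, not a ModelArts tool: the helper names are hypothetical, and the caller is assumed to pass in the Python version string and the node and card counts (for example, MA_NUM_HOSTS and MA_NUM_GPUS from the startup script).

```shell
#!/bin/bash
# Hypothetical pre-flight checks for the ranktable route constraints.
# These helpers are illustrative only; they are not part of ModelArts.

# Succeed if a "major.minor" Python version string is 3.7 or 3.9.
is_supported_python() {
  case "$1" in
    3.7|3.9) return 0 ;;
    *) return 1 ;;
  esac
}

# Succeed if the job has at least three nodes (otherwise routing is skipped).
# Non-numeric input fails quietly.
has_enough_nodes() {
  [ "$1" -ge 3 ] 2>/dev/null
}

# Print the total number of cards: nodes x cards per node.
total_cards() {
  echo $(( $1 * $2 ))
}

# Succeed if the job reaches the recommended large-model scale (>= 512 cards).
is_large_scale() {
  [ "$(total_cards "$1" "$2")" -ge 512 ]
}
```

For example, `is_supported_python "$(python -c 'import sys; print("%d.%d" % sys.version_info[:2])')"` checks the interpreter used for training, and `has_enough_nodes "$MA_NUM_HOSTS"` checks the node count. The six-node, eight-card example in config.yaml later in this case passes the node check but, at 48 cards, is below the recommended 512-card scale.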

Procedure

- Enable the cabinet plug-in of the CCE cluster corresponding to the ModelArts Lite resource pool.

  - In the ModelArts Lite dedicated resource pool list, click the resource pool name to view its details.

  - On the displayed page, click the CCE cluster.

  - In the navigation pane on the left, choose Add-ons, and search for Volcano Scheduler.

  - Click Edit and check whether {"name":"cabinet"} exists in the plugins parameter.

    - If {"name":"cabinet"} exists, go to the next step (modifying the torch_npu training startup script).

    - If {"name":"cabinet"} does not exist, add it to the plugins parameter in the advanced settings, and click Install.
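If you copy the plugins parameter text out of the Edit dialog, the presence check can be scripted. This is a sketch that assumes the parameter is JSON text such as [{"name":"gang"},{"name":"cabinet"}]; has_cabinet_plugin is a hypothetical helper, and the change itself is still made in the console as described above.

```shell
#!/bin/bash
# Hypothetical helper: succeed if a copied plugins parameter (JSON text)
# contains a plugin entry named exactly "cabinet", tolerating extra
# whitespace around the colon.
has_cabinet_plugin() {
  printf '%s\n' "$1" | grep -Eq '"name"[[:space:]]*:[[:space:]]*"cabinet"'
}
```

For example, `has_cabinet_plugin "$plugins_text" && echo "cabinet plug-in enabled"`, where plugins_text is a shell variable holding the copied parameter.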

- Modify the torch_npu training startup script.

The script must be started with torch.distributed.launch or torch.distributed.run. Otherwise, ranktable route acceleration cannot be used.

For PyTorch training, set NODE_RANK to the value of the RANK_AFTER_ACC environment variable. The following is an example training startup script (xxx_train.sh). Keep the MASTER_ADDR and NODE_RANK settings unchanged.

```shell
#!/bin/bash
# Address of the master node. Retain this value.
MASTER_ADDR="${MA_VJ_NAME}-${MA_TASK_NAME}-${MA_MASTER_INDEX}.${MA_VJ_NAME}"
# Node rank after route planning. Retain this value.
NODE_RANK="$RANK_AFTER_ACC"
NNODES="$MA_NUM_HOSTS"
NGPUS_PER_NODE="$MA_NUM_GPUS"
# User-defined port; it can be changed to any port >= 10000
MASTER_PORT="39888"
# Replace ${MA_JOB_DIR}/code/torch_ddp.py with the actual training script
PYTHON_SCRIPT=${MA_JOB_DIR}/code/torch_ddp.py
PYTHON_ARGS=""
# HCCL connection timeout, in seconds
export HCCL_CONNECT_TIMEOUT=1800
# Replace ${ANACONDA_DIR}/envs/${ENV_NAME}/bin/python with the actual Python interpreter path
CMD="${ANACONDA_DIR}/envs/${ENV_NAME}/bin/python -m torch.distributed.launch \
    --nnodes=$NNODES \
    --node_rank=$NODE_RANK \
    --nproc_per_node=$NGPUS_PER_NODE \
    --master_addr $MASTER_ADDR \
    --master_port=$MASTER_PORT \
    $PYTHON_SCRIPT \
    $PYTHON_ARGS
"
echo $CMD
$CMD
```

- Create the config.yaml file on the host.

The config.yaml file configures the pods. The following is an example, in which xxxx_train.sh is the training startup script modified in the previous step.

```yaml
apiVersion: batch.volcano.sh/v1alpha1
kind: Job
metadata:
  name: yourvcjobname                    # Job name
  namespace: default                     # Namespace
  labels:
    ring-controller.cce: ascend-1980     # Retain the default settings.
    fault-scheduling: "force"
spec:
  minAvailable: 6                        # Number of nodes used for distributed training
  schedulerName: volcano                 # Retain the default settings.
  policies:
    - event: PodEvicted
      action: RestartJob
  plugins:
    configmap1980:
      - --rank-table-version=v2          # Retain the default settings. A v2 ranktable file is generated.
    env: []
    svc:
      - --publish-not-ready-addresses=true   # Retain the default settings. Used for communication between pods; certain required environment variables are generated.
  maxRetry: 1
  queue: default
  tasks:
    - name: "worker"                     # Retain the default settings.
      replicas: 6                        # Number of tasks, that is, the number of PyTorch nodes. Set this to the value of minAvailable.
      template:
        metadata:
          annotations:
            cabinet: "cabinet"           # Retain the default settings. Enables tor-topo delivery.
          labels:
            app: pytorch-npu             # Tag
            ring-controller.cce: ascend-1980   # Retain the default settings.
        spec:
          affinity:
            podAntiAffinity:
              requiredDuringSchedulingIgnoredDuringExecution:
                - labelSelector:
                    matchExpressions:
                      - key: volcano.sh/job-name
                        operator: In
                        values:
                          - yourvcjobname   # Job name
                  topologyKey: kubernetes.io/hostname
          containers:
            - image: swr.xxxxxx.com/xxxx/custom_pytorch_npu:v1   # Image address
              imagePullPolicy: IfNotPresent
              name: pytorch-npu          # Container name
              env:
                - name: OPEN_SCRIPT_ADDRESS   # Open script address. Set region-id based on the actual scenario, for example, cn-southwest-2.
                  value: "https://mtest-bucket.obs.{region-id}.myhuaweicloud.com/acc/rank"
                - name: NAME
                  valueFrom:
                    fieldRef:
                      fieldPath: metadata.name
                - name: MA_CURRENT_HOST_IP    # Retain the default settings. IP address of the node where the current pod is deployed.
                  valueFrom:
                    fieldRef:
                      fieldPath: status.hostIP
                - name: MA_NUM_GPUS           # Number of NPUs used by each pod
                  value: "8"
                - name: MA_NUM_HOSTS          # Number of nodes used in distributed training. Set this to the value of minAvailable.
                  value: "6"
                - name: MA_VJ_NAME            # Name of the Volcano job
                  valueFrom:
                    fieldRef:
                      fieldPath: metadata.annotations['volcano.sh/job-name']
                - name: MA_TASK_NAME          # Name of the task
                  valueFrom:
                    fieldRef:
                      fieldPath: metadata.annotations['volcano.sh/task-spec']
              command:
                - /bin/bash
                - -c
                - "wget ${OPEN_SCRIPT_ADDRESS}/bootstrap.sh -q && bash bootstrap.sh; export RANK_AFTER_ACC=${VC_TASK_INDEX}; rank_acc=$(cat /tmp/RANK_AFTER_ACC 2>/dev/null); [ -n \"${rank_acc}\" ] && export RANK_AFTER_ACC=${rank_acc}; export MA_MASTER_INDEX=$(cat /tmp/MASTER_INDEX 2>/dev/null || echo 0); bash xxxx_train.sh"   # Replace xxxx_train.sh with the actual training script path.
              resources:
                requests:
                  huawei.com/ascend-1980: "8"   # Number of cards required by each node. Keep the key unchanged. Set this to the value of MA_NUM_GPUS.
                limits:
                  huawei.com/ascend-1980: "8"   # Maximum number of cards on each node. Keep the key unchanged. Set this to the value of MA_NUM_GPUS.
              volumeMounts:
                - name: ascend-driver           # Mount the driver. Retain the settings.
                  mountPath: /usr/local/Ascend/driver
                - name: ascend-add-ons          # Mount the driver add-ons directory. Retain the settings.
                  mountPath: /usr/local/Ascend/add-ons
                - name: localtime
                  mountPath: /etc/localtime
                - name: hccn                    # HCCN configuration of the driver. Retain the settings.
                  mountPath: /etc/hccn.conf
                - name: npu-smi
                  mountPath: /usr/local/sbin/npu-smi
          nodeSelector:
            accelerator/huawei-npu: ascend-1980
          volumes:
            - name: ascend-driver
              hostPath:
                path: /usr/local/Ascend/driver
            - name: ascend-add-ons
              hostPath:
                path: /usr/local/Ascend/add-ons
            - name: localtime
              hostPath:
                path: /etc/localtime
            - name: hccn
              hostPath:
                path: /etc/hccn.conf
            - name: npu-smi
              hostPath:
                path: /usr/local/sbin/npu-smi
          restartPolicy: OnFailure
```

- Run the following command to create and start the pods based on config.yaml. After the containers start, the training job runs automatically.

kubectl apply -f config.yaml
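After the pods start, each container runs the one-line command in config.yaml, which works out RANK_AFTER_ACC and MA_MASTER_INDEX before calling xxxx_train.sh; the startup script then builds MASTER_ADDR from them. The sketch below restates that logic as separate functions so it can be read and tested in isolation. The function names and file parameters are hypothetical; the default paths are the /tmp files the container command reads, which are presumably written by bootstrap.sh.

```shell
#!/bin/bash
# Sketch of the rank logic in the config.yaml container command.
# Function names and the file-path parameters are hypothetical; the
# default paths are the ones the container command reads.

# Print the node rank: the non-empty content of the rank file if it
# can be read, otherwise VC_TASK_INDEX (the Volcano task index).
resolve_rank_after_acc() {
  local rank_file="${1:-/tmp/RANK_AFTER_ACC}"
  local rank_acc
  rank_acc=$(cat "$rank_file" 2>/dev/null)
  if [ -n "$rank_acc" ]; then
    echo "$rank_acc"
  else
    echo "${VC_TASK_INDEX}"
  fi
}

# Print the master index from the index file, or 0 if it cannot be read.
resolve_master_index() {
  local index_file="${1:-/tmp/MASTER_INDEX}"
  cat "$index_file" 2>/dev/null || echo 0
}

# Build MASTER_ADDR the same way as xxx_train.sh:
# <job>-<task>-<master index>.<job>
build_master_addr() {
  local job="$1" task="$2" index="$3"
  echo "${job}-${task}-${index}.${job}"
}
```

For example, with VC_TASK_INDEX=2 and no readable /tmp/RANK_AFTER_ACC file, resolve_rank_after_acc prints 2. Assuming the task-spec annotation holds the task name worker, build_master_addr yourvcjobname worker 0 prints yourvcjobname-worker-0.yourvcjobname.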

- Run the following command to check the pod startup status. If READY is 1/1 and STATUS is Running, the pod has started successfully.

kubectl get pod

Figure 2 Command output of successful startup

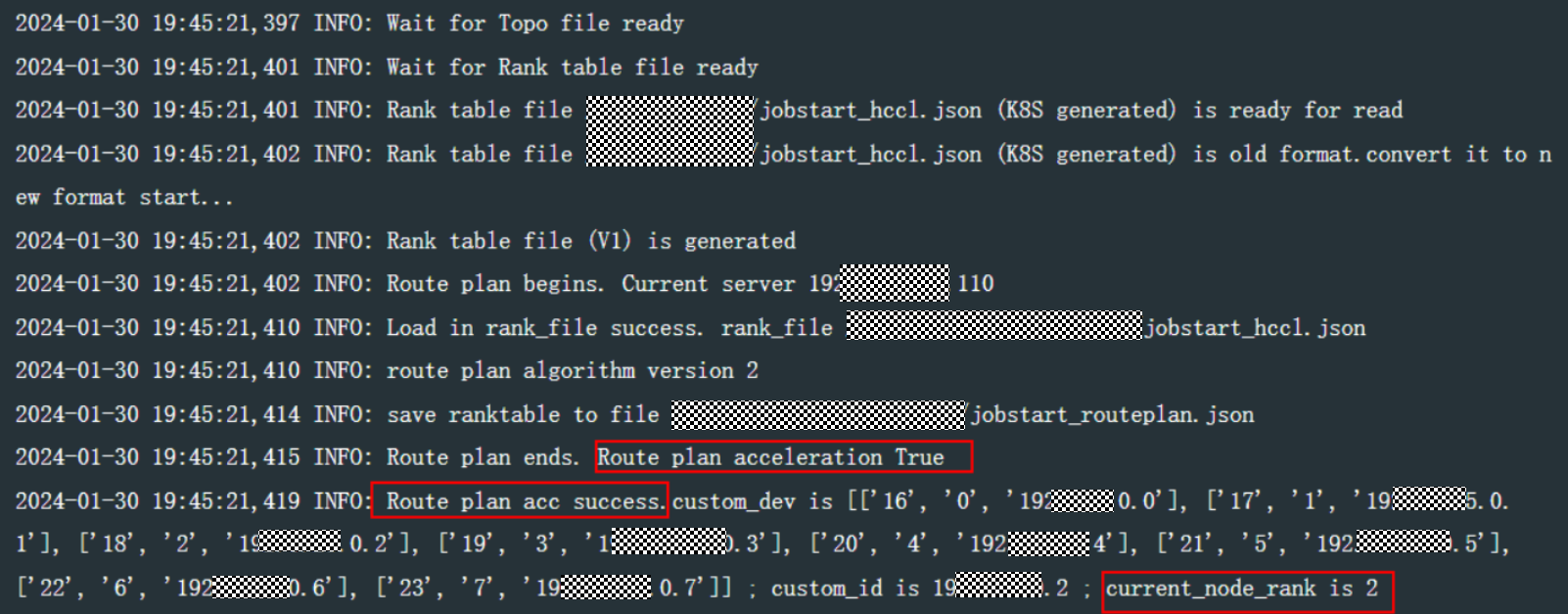
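The status check can also be scripted instead of being read from the output by eye. This minimal sketch assumes the default kubectl get pod columns (NAME, READY, STATUS, RESTARTS, AGE) and pod names of the form yourvcjobname-worker-<index>; all_pods_running is a hypothetical helper.

```shell
#!/bin/bash
# Hypothetical helper: read `kubectl get pod` output on stdin and succeed
# only if every pod whose name starts with PREFIX is READY 1/1 and Running.
# If EXPECTED is given, the number of matching pods must also equal it.
# Assumes the default column order: NAME READY STATUS RESTARTS AGE.
all_pods_running() {
  local prefix="$1" expected="${2:-}"
  awk -v p="$prefix" -v want="$expected" '
    NR == 1 { next }                  # skip the header line
    index($1, p) == 1 {
      n++
      if ($2 != "1/1" || $3 != "Running") bad++
    }
    END {
      if (n == 0 || bad > 0) exit 1
      if (want != "" && n != want + 0) exit 1
      exit 0
    }
  '
}
```

For example, `kubectl get pod | all_pods_running yourvcjobname-worker- 6` succeeds only when all six workers of the example config.yaml are running.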

- Run the following command to view the logs. If the output shown in Figure 3 is displayed, route planning has been executed.

kubectl logs {pod-name}

Replace {pod-name} with the actual pod name, which can be obtained from the kubectl get pod output in the previous step.

  - Dynamic routing is executed only if the training job has at least three training nodes.

  - If the execution fails, rectify the fault by referring to Troubleshooting: ranktable Route Optimization Fails.
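When a job has several workers, it can be convenient to view every pod's logs in one pass. The sketch below assumes that pods are named <job>-<task>-<index>, the same pattern the startup script uses for MASTER_ADDR; the helper names are hypothetical.

```shell
#!/bin/bash
# Hypothetical helpers: list and print logs for all pods of one task of a
# Volcano job, assuming the <job>-<task>-<index> pod naming pattern.

# Print one pod name per line for indexes 0..COUNT-1.
worker_pod_names() {
  local job="$1" task="$2" count="$3"
  local i
  for ((i = 0; i < count; i++)); do
    echo "${job}-${task}-${i}"
  done
}

# Print each pod's logs under a header line.
show_all_worker_logs() {
  local pod
  for pod in $(worker_pod_names "$1" "$2" "$3"); do
    echo "===== ${pod} ====="
    kubectl logs "$pod"
  done
}
```

For the example config.yaml, `show_all_worker_logs yourvcjobname worker 6` prints the logs of yourvcjobname-worker-0 through yourvcjobname-worker-5.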

Troubleshooting: ranktable Route Optimization Fails

Symptom

The container logs contain error messages.

Possible Causes

Delivery of the topo file and ranktable file is not enabled on the cluster node.

Procedure

- In the ModelArts Lite dedicated resource pool list, click the resource pool name to view its details.

- On the displayed page, click the CCE cluster.

- In the navigation pane on the left, choose Nodes, and go to the Nodes tab.

- In the node list, locate the target node, and choose More > View YAML in the Operation column.

- Check whether the cce.kubectl.kubernetes.io/ascend-rank-table field in the YAML file has a value.

As shown in the following figure, if the field has a value, delivery of the topo file and ranktable file is enabled on the node. Otherwise, contact technical support.

Figure 4 Viewing the YAML file of a node
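The field check can also be done from the command line. The sketch below reads a node's YAML (for example, from kubectl get node <node-name> -o yaml) on stdin and succeeds if the cce.kubectl.kubernetes.io/ascend-rank-table key has a non-empty value. It assumes the key and the start of its value are on one `key: value` line, as annotations and labels normally are; has_rank_table_value is a hypothetical helper, and an empty quoted string is treated as no value.

```shell
#!/bin/bash
# Hypothetical helper: read node YAML on stdin and succeed if the
# cce.kubectl.kubernetes.io/ascend-rank-table key has a non-empty value.
# Assumes the key and its value start on the same line.
has_rank_table_value() {
  awk -v sq="'" '
    /cce\.kubectl\.kubernetes\.io\/ascend-rank-table:/ {
      v = $0
      # Drop everything up to and including the key, the colon, and spaces.
      sub(/^[^:]*ascend-rank-table:[ \t]*/, "", v)
      # Treat an empty value, "" or an empty single-quoted string as unset.
      if (v != "" && v != "\"\"" && v != sq sq) found = 1
    }
    END { if (found) exit 0; exit 1 }
  '
}
```

For example, `kubectl get node <node-name> -o yaml | has_rank_table_value && echo "ranktable delivery enabled"`.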
