Using Hive to Load OBS Data and Analyze Enterprise Employee Information

Scenarios

MRS Hadoop analysis cluster provides Hive and Spark for storing, computing, and querying massive amounts of offline as well as distributed data.

This practice describes how to import and analyze raw data stored in OBS using Hive after you create an MRS cluster and how to implement elastic and low-cost big data analysis based on storage-compute decoupling.

In this practice, the raw data of employee information includes the following two tables:

|

ID |

Name |

Salary Currency |

Salary |

Tax Category |

Work Place |

Hire Date |

|---|---|---|---|---|---|---|

|

1 |

Wang |

R |

8000.01 |

personal income tax&0.05 |

China:Shenzhen |

2014 |

|

3 |

Tom |

D |

12000.02 |

personal income tax&0.09 |

America:NewYork |

2014 |

|

4 |

Jack |

D |

24000.03 |

personal income tax&0.09 |

America:Manhattan |

2015 |

|

6 |

Linda |

D |

36000.04 |

personal income tax&0.09 |

America:NewYork |

2014 |

|

8 |

Zhang |

R |

9000.05 |

personal income tax&0.05 |

China:Shanghai |

2014 |

|

ID |

Mobile Number |

Email Addresses |

|---|---|---|

|

1 |

135 XXXX XXXX |

xxxx@example.com |

|

3 |

159 XXXX XXXX |

xxxxx@example.com.cn |

|

4 |

186 XXXX XXXX |

xxxx@example.org |

|

6 |

189 XXXX XXXX |

xxxx@example.cn |

|

8 |

134 XXXX XXXX |

xxxx@example.cn |

You can perform the following analysis through a data application:

- Query contact information of employees whose salaries are paid in USD.

- Query the IDs and names of employees who were hired in 2014, and load the query results to a new table.

- Collect the number of employee information records.

- Query information about employees whose email addresses end with "cn".

Solution Architecture

Hive is a data warehouse built on Hadoop. It provides batch computing capability for the big data platform and is able to batch analyze and summarize structured and semi-structured data for data calculation. Hive operates structured data using Hive Query Language (HQL), a SQL-like language. HQL is automatically converted into MapReduce tasks for the query and analysis of massive data in the Hadoop cluster.

Hive is able to:

- Analyze massive structured data and summarizes analysis results.

- Allow complex MapReduce jobs to be compiled in SQL languages.

- Support flexible data storage formats, including JavaScript object notation (JSON), comma-separated values (CSV), TextFile, RCFile, SequenceFile, and ORC.

Hive functions as a data warehouse based on HDFS and MapReduce architecture and translates HQL statements into MapReduce jobs or HDFS operations.

- Metastore: reads, writes, and updates metadata such as tables, columns, and partitions. Its lower layer is relational databases.

- Driver: manages the lifecycle of HQL execution and participates in the entire Hive job execution.

- Compiler: translates HQL statements into a series of interdependent Map or Reduce jobs.

- Optimizer: is classified into logical optimizer and physical optimizer to optimize HQL execution plans and MapReduce jobs, respectively.

- Executor: runs Map or Reduce jobs based on job dependencies.

- ThriftServer: functions as the servers of JDBC, provides Thrift APIs, and integrates with Hive and other applications.

- Clients: include the web UI and JDBC APIs and provides APIs for user access.

Video Tutorial

This video uses MRS cluster creation in custom config mode as an example to describe how to connect to OBS by binding an IAM agency during MRS cluster creation and how to use Hive to access OBS data.

The UI may vary depending on the version. The video tutorial is for reference only.

Procedure

This practice describes how to develop a Hive data analysis application and how to run HQL statements to access Hive data stored in OBS after you connect to Hive through the client. For example, manage and query enterprise employee information. If you need to develop and build your application based on the sample code project provided by MRS, see Application Development Overview.

The operation process is as below:

Creating an MRS Offline Query Cluster

- Go to the Buy Cluster page.

- Click the Quick Config tab and set configuration parameters.

Table 3 Software parameters (for reference only) Parameter

Description

Example Value

Billing Mode

Billing mode of the cluster.

Pay-per-use

Region

Region where the MRS resources belong.

MRS clusters in different regions cannot communicate with each other over an intranet. For lower network latency and quick resource access, select the region nearest to you.

CN-Hong Kong

Cluster Name

Name of the MRS cluster.

MRS_demo

Cluster Type

Type of the cluster.

Custom

Version Type

Version type of the MRS cluster.

Normal

Cluster Version

MRS cluster version.

MRS 3.1.0

Component

Components in the MRS cluster.

Hadoop Analysis Cluster

AZ

Available AZ associated with the cluster region.

AZ1

VPC

VPC where you want to create the cluster. You can click View VPC to view the name and ID. If no VPC is available, create one.

vpc-01

Subnet

Subnet where your cluster belongs. You can access the VPC management console to view the names and IDs of existing subnets in the VPC. If no subnet is created under the VPC, click Create Subnet to create one.

subnet-01

Cluster Nodes

Cluster node details.

Default settings

Kerberos Authentication

Whether to enable Kerberos authentication when logging in to Manager.

Disabled

Username

Name of the administrator of MRS Manager. admin is used by default.

admin/root

Password

Password of the MRS Manager administrator.

Set the password for logging in to the cluster management page and ECS node, for example, Test!@12345.

Confirm Password

Enter the password of the Manager administrator again.

Enter the password again.

Enterprise Project

Enterprise project to which the cluster belongs.

default

Secure Communications

If the secure communications function is not enabled, MRS clusters cannot be created.

Select the check box.

- Click Buy Now and wait until the MRS cluster is created.

Creating an OBS Agency and Binding It to an MRS Cluster

- MRS presets MRS_ECS_DEFAULT_AGENCY in the agency list of IAM so that you can select this agency when creating a custom cluster. This agency has the OBSOperateAccess permissions and the CESFullAccess (only available for users who have enabled fine-grained policies), CES Administrator, and KMS Administrator permissions in the region where the cluster resides.

- If you want to use a custom agency, perform the following steps to create an agency. (To create or modify an agency, you must have the Security Administrator permission.)

- Log in to the Huawei Cloud management console.

- Choose Service List > Management & Governance > Identity and Access Management.

- In the navigation pane on the left, choose Agencies. On the displayed page, click Create Agency.

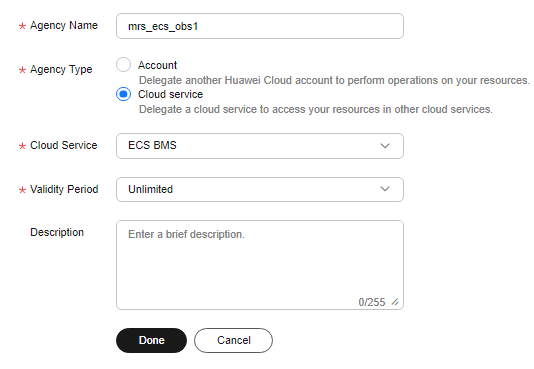

- Set Agency Name, select Cloud service for Agency Type, and select Elastic Cloud Server (ECS) and Bare Metal Server (BMS) for Cloud Service to authorize ECS or BMS to invoke OBS.

- Set Validity Period to Unlimited and click OK.

Figure 2 Creating an agency

- Click Authorize in the Operation column of the created agency, search for the OBS OperateAccess policy, and select the policy.

- Click Next. On the displayed page, select the desired scope for permissions you selected. By default, All resources is selected. You can click Show More and select Global resources.

- In the displayed dialog box, click OK to start authorization. After the message "Authorization successful." is displayed, click Finish. The agency is successfully created.

- Switch back to the MRS console and click the name of the created MRS cluster. On the Dashboard page, click Select Agency, select the created OBS agency, and click OK.

Creating a Hive Table and Loading Data from OBS

- Choose Service List > Object Storage Service. In the navigation pane on the left, choose Parallel File Systems and click Create Parallel File System, set the following parameters, and click Create Now.

Table 4 Parallel file system parameters Parameter

Description

Example Value

Region

Region where the parallel file system is deployed.

CN-Hong Kong

File System Name

Name of the parallel file system.

hiveobs

Data Redundancy Policy

- Multi-AZ storage: Data is stored in multiple AZs to achieve higher reliability.

- Single-AZ storage: Data is stored in a single AZ, with lower costs.

Single-AZ storage

Policy

Read and write policy of the parallel file system.

Private

Direct Reading

Direct reading allows you to download files from the Archive storage class without restoring them in advance.

Disable

Enterprise Project

Enterprise project where the parallel file system belongs, which facilitates unified management.

default

Tags

(Optional) Tags are used to identify and classify parallel file systems in OBS.

-

- Download the MRS cluster client, and install it, for example, in the /opt/client directory of the active master node. For details, see Installing a Client.

You can also use the cluster client provided in the /opt/Bigdata/client directory of the master node.

- Bind an EIP to the active master node and enable port 22 in the security group. Then, log in to the active master node as user root, go to the directory where the client is located, and load variables.

cd /opt/client

source bigdata_env

- Run the beeline command to go to the Hive Beeline page.

Run the following command to create an employee information data table employees_info that matches the raw data fields:

create external table if not exists employees_info

(

id INT,

name STRING,

usd_flag STRING,

salary DOUBLE,

deductions MAP<STRING, DOUBLE>,

address STRING,

entrytime STRING

)

row format delimited fields terminated by ',' map keys terminated by '&'

stored as textfile

location 'obs://hiveobs/employees_info';

Run the following command to create an employee contact information table employees_contact that matches the raw data fields:

create external table if not exists employees_contact

(

id INT,

phone STRING,

email STRING

)

row format delimited fields terminated by ','

stored as textfile

location 'obs://hiveobs/employees_contact';

- Run the following command to check whether the table is successfully created:

show tables;

+--------------------------+ | tab_name | +--------------------------+ | employees_contact | | employees_info | +--------------------------+

- Import data to the corresponding OBS table directory.

By default, a folder is created in the specified storage space for a Hive internal table. The table can read data that matches the table structure as long as the file is stored in the folder.

Log in to the OBS Console. On the Files page of the created file system, upload the local raw data to the employees_info and employees_contact folders.

The following is an example of the raw data format:

info.txt:

1,Wang,R,8000.01,personal income tax&0.05,China:Shenzhen,2014 3,Tom,D,12000.02,personal income tax&0.09,America:NewYork,2014 4,Jack,D,24000.03,personal income tax&0.09,America:Manhattan,2015 6,Linda,D,36000.04,personal income tax&0.09,America:NewYork,2014 8,Zhang,R,9000.05,personal income tax&0.05,China:Shanghai,2014

contact.txt:

1,135 XXXX XXXX,xxxx@xx.com 3,159 XXXX XXXX,xxxx@xx.com.cn 4,189 XXXX XXXX,xxxx@xx.org 6,189 XXXX XXXX,xxxx@xx.cn 8,134 XXXX XXXX,xxxx@xxx.cn

- Run the following command on the Hive Beeline client to check whether the source data is correctly loaded:

select * from employees_info;

+--------------------+----------------------+--------------------------+------------------------+-------------------------------+-------------------------+---------------------------+ | employees_info.id | employees_info.name | employees_info.usd_flag | employees_info.salary | employees_info.deductions | employees_info.address | employees_info.entrytime | +--------------------+----------------------+--------------------------+------------------------+-------------------------------+-------------------------+---------------------------+ | 1 | Wang | R | 8000.01 | {"personal income tax":0.05} | China:Shenzhen | 2014 | | 3 | Tom | D | 12000.02 | {"personal income tax":0.09} | America:NewYork | 2014 | | 4 | Jack | D | 24000.03 | {"personal income tax":0.09} | America:Manhattan | 2015 | | 6 | Linda | D | 36000.04 | {"personal income tax":0.09} | America:NewYork | 2014 | | 8 | Zhang | R | 9000.05 | {"personal income tax":0.05} | China:Shanghai | 2014 | +--------------------+----------------------+--------------------------+------------------------+-------------------------------+-------------------------+---------------------------+select * from employees_contact;

+-----------------------+--------------------------+--------------------------+ | employees_contact.id | employees_contact.phone | employees_contact.email | +-----------------------+--------------------------+--------------------------+ | 1 | 135 XXXX XXXX | xxxx@xx.com | | 3 | 159 XXXX XXXX | xxxx@xx.com.cn | | 4 | 186 XXXX XXXX | xxxx@xx.org | | 6 | 189 XXXX XXXX | xxxx@xx.cn | | 8 | 134 XXXX XXXX | xxxx@xxx.cn | +-----------------------+--------------------------+--------------------------+

Analyzing Data Using HQL

On the Hive Beeline client, run the HQL statements to analyze the raw data.

- Query contact information of employees whose salaries are paid in USD.

Run the following command to create a data table for data cleansing:

create table employees_info_v2 as select id, name, regexp_replace(usd_flag, '\s+','') as usd_flag, salary, deductions, address, entrytime from employees_info;

After the Map task is complete, run the following command:

select a.* from employees_info_v2 a inner join employees_contact b on a.id = b.id where a.usd_flag='D';

INFO : MapReduce Jobs Launched: INFO : Stage-Stage-3: Map: 1 Cumulative CPU: 2.95 sec HDFS Read: 8483 HDFS Write: 317 SUCCESS INFO : Total MapReduce CPU Time Spent: 2 seconds 950 msec INFO : Completed executing command(queryId=omm_20211022162303_c26d4f1b-a577-4d6c-919c-6cb96095b24b); Time taken: 26.259 seconds INFO : OK INFO : Concurrency mode is disabled, not creating a lock manager +-------+---------+-------------+-----------+-------------------------------+--------------------+--------------+ | a.id | a.name | a.usd_flag | a.salary | a.deductions | a.address | a.entrytime | +-------+---------+-------------+-----------+-------------------------------+--------------------+--------------+ | 3 | Tom | D | 12000.02 | {"personal income tax":0.09} | America:NewYork | 2014 | | 4 | Jack | D | 24000.03 | {"personal income tax":0.09} | America:Manhattan | 2015 | | 6 | Linda | D | 36000.04 | {"personal income tax":0.09} | America:NewYork | 2014 | +-------+---------+-------------+-----------+-------------------------------+--------------------+--------------+ 3 rows selected (26.439 seconds) - Query the IDs and names of employees who were hired in 2014, and load the query results to the partition with the hire date of 2014 in the employees_info_extended table.

Run the following to create a table:

create table if not exists employees_info_extended (id int, name string, usd_flag string, salary double, deductions map<string, double>, address string) partitioned by (entrytime string) stored as textfile;

Run the following command to write data into the table:

insert into employees_info_extended partition(entrytime='2014') select id,name,usd_flag,salary,deductions,address from employees_info_v2 where entrytime = '2014';

After data is extracted, run the following command to query the data:

select * from employees_info_extended;

+-----------------------------+-------------------------------+-----------------------------------+---------------------------------+-------------------------------------+----------------------------------+------------------------------------+ | employees_info_extended.id | employees_info_extended.name | employees_info_extended.usd_flag | employees_info_extended.salary | employees_info_extended.deductions | employees_info_extended.address | employees_info_extended.entrytime | +-----------------------------+-------------------------------+-----------------------------------+---------------------------------+-------------------------------------+----------------------------------+------------------------------------+ | 1 | Wang | R | 8000.01 | {"personal income tax":0.05} | China:Shenzhen | 2014 | | 3 | Tom | D | 12000.02 | {"personal income tax":0.09} | America:NewYork | 2014 | | 6 | Linda | D | 36000.04 | {"personal income tax":0.09} | America:NewYork | 2014 | | 8 | Zhang | R | 9000.05 | {"personal income tax":0.05} | China:Shanghai | 2014 | +-----------------------------+-------------------------------+-----------------------------------+---------------------------------+-------------------------------------+----------------------------------+------------------------------------+ - Run the following command to collect the number of employee information records:

select count(1) from employees_info_v2;

+------+ | _c0 | +------+ | 5 | +------+

- Run the following command to query information about employees whose email addresses end with "cn":

select a.*, b.email from employees_info_v2 a inner join employees_contact b on a.id = b.id where b.email rlike '.*cn$';

+-------+---------+-------------+-----------+-------------------------------+------------------+--------------+-----------------+ | a.id | a.name | a.usd_flag | a.salary | a.deductions | a.address | a.entrytime | b.email | +-------+---------+-------------+-----------+-------------------------------+------------------+--------------+-----------------+ | 3 | Tom | D | 12000.02 | {"personal income tax":0.09} | America:NewYork | 2014 | xxxx@xx.com.cn | | 6 | Linda | D | 36000.04 | {"personal income tax":0.09} | America:NewYork | 2014 | xxxx@xx.cn | | 8 | Zhang | R | 9000.05 | {"personal income tax":0.05} | China:Shanghai | 2014 | xxxx@xxx.cn | +-------+---------+-------------+-----------+-------------------------------+------------------+--------------+-----------------+

Feedback

Was this page helpful?

Provide feedbackThank you very much for your feedback. We will continue working to improve the documentation.See the reply and handling status in My Cloud VOC.

For any further questions, feel free to contact us through the chatbot.

Chatbot