Cloud Native Network 2.0

Definición del modelo

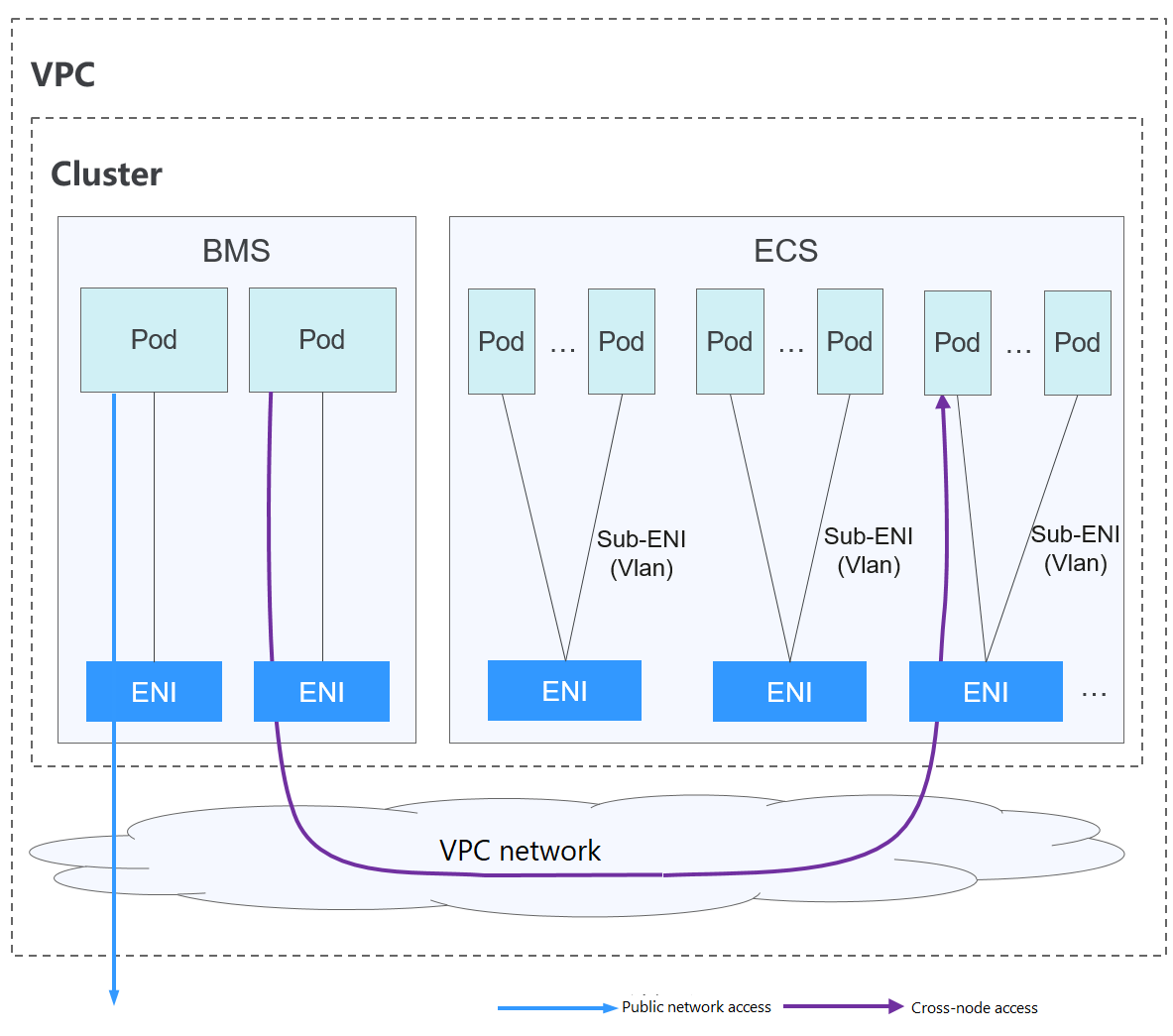

Desarrollado por CCE, Cloud Native Network 2.0 integra profundamente las interfaces de red elástica (ENI) y sub-ENI de Virtual Private Cloud (VPC). Las direcciones IP del contenedor se asignan desde el bloque CIDR de VPC. Se admite la red de paso a través de ELB para las solicitudes de acceso directo a contenedores. Los grupos de seguridad y las IP elásticas (EIP) están destinados a ofrecer un alto rendimiento.

Pod-to-Pod Communication

- Pods on BMS nodes use ENIs, whereas pods on ECS nodes use sub-ENIs. Sub-ENIs are attached to ENIs through VLAN sub-interfaces.

- On the same node: Packets are forwarded through the VPC ENI or sub-ENI.

- Across nodes: Packets are forwarded through the VPC ENI or sub-ENI.

Notes and Constraints

This network model is available only to CCE Turbo clusters.

Advantages and Disadvantages

Advantages

- Because the container network uses the VPC directly, network problems are easy to locate and performance is the highest.

- External networks in a VPC can connect directly to container IP addresses.

- The load balancing, security group, and EIP capabilities provided by VPC can be used directly.

Disadvantages

The container network uses the VPC directly and therefore occupies the VPC address space. Plan the container CIDR block properly before creating a cluster.

Application Scenarios

- High performance requirements and use of other VPC network capabilities: Cloud Native Network 2.0 uses the VPC directly and delivers almost the same performance as the VPC network. It therefore suits scenarios with demanding bandwidth and latency requirements, such as online live streaming and e-commerce flash sales (seckill).

- Large-scale networking: Cloud Native Network 2.0 supports a maximum of 2,000 ECS nodes and 100,000 containers.

Gestión de direcciones IP de contenedores

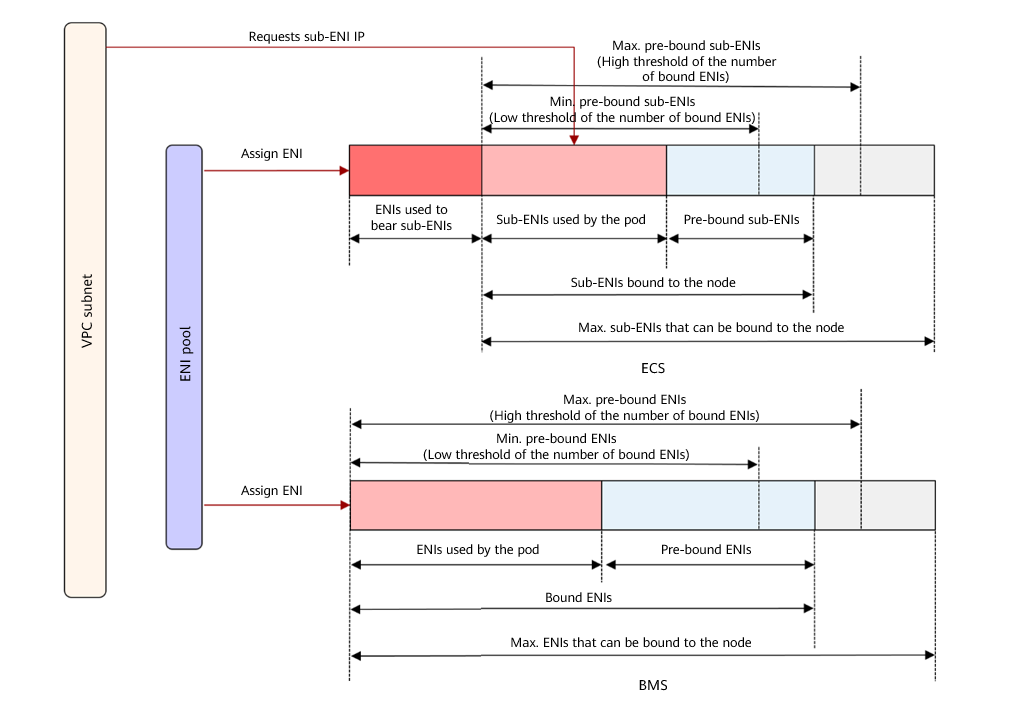

En el modelo de Cloud Native Network 2.0, los nodos de BMS usan las ENI y los nodos de ECS usan las sub-ENI.

- La dirección IP del pod se asigna directamente desde la subred de VPC configurada para la red de contenedor. No es necesario asignar un segmento de red pequeño independiente al nodo.

- Para agregar un nodo de ECS a un clúster, enlaza primero la ENI que lleva la sub-ENI. Después de enlazar la ENI, puede enlazar la sub-ENI.

- Número de las ENI enlazadas a un nodo de ECS: Número máximo de sub-ENIs que pueden enlazarse al nodo/64. El valor se redondea hacia arriba.
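The rounding rule in the last item can be checked with a short calculation. The following is a minimal Python sketch; the function name `enis_required` and the sample quotas are illustrative assumptions, and only the divisor 64 and the round-up rule come from the text above.

```python
SUB_ENIS_PER_ENI = 64  # divisor stated in the rule above


def enis_required(max_sub_enis: int) -> int:
    """Return ceil(max_sub_enis / 64) using integer arithmetic."""
    if max_sub_enis < 0:
        raise ValueError("max_sub_enis must be non-negative")
    return (max_sub_enis + SUB_ENIS_PER_ENI - 1) // SUB_ENIS_PER_ENI


if __name__ == "__main__":
    # Hypothetical sub-ENI quotas, not taken from any flavor specification.
    for quota in (64, 65, 128, 256):
        print(quota, "sub-ENIs ->", enis_required(quota), "ENI(s)")
```

Integer arithmetic is used instead of floating-point division so that the result stays exact for any quota.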

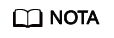

- ENIs bound to an ECS node = Number of ENIs used to carry sub-ENIs + Number of sub-ENIs currently used by pods + Number of pre-bound sub-ENIs

- ENIs bound to a BMS node = Number of ENIs currently used by pods + Number of pre-bound ENIs

- When a pod is created, an available ENI is randomly allocated from the node's pool of pre-bound ENIs.

- When the pod is deleted, the ENI is released back to the node's ENI pool.

- When a node is deleted, its ENIs are released back to the pool and its sub-ENIs are deleted.
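The allocation and release steps above can be pictured as a small per-node pool. The following Python sketch is illustrative only: the class `NodeEniPool`, its method names, and the ENI identifiers are hypothetical and do not represent the CCE network component.

```python
import random


class NodeEniPool:
    """Toy model of one node's pool of pre-bound (sub-)ENIs."""

    def __init__(self, prebound_ids):
        self.idle = set(prebound_ids)  # pre-bound, not used by any pod
        self.in_use = {}               # pod name -> ENI id

    def create_pod(self, pod: str) -> str:
        """Give the pod a randomly chosen available ENI from the pool."""
        if not self.idle:
            raise RuntimeError("no pre-bound ENI available")
        eni = random.choice(sorted(self.idle))
        self.idle.remove(eni)
        self.in_use[pod] = eni
        return eni

    def delete_pod(self, pod: str) -> None:
        """Release the pod's ENI back to the node's pool."""
        self.idle.add(self.in_use.pop(pod))

    def bound_total(self, carrier_enis: int = 0) -> int:
        """ENIs carrying sub-ENIs + interfaces in use + pre-bound interfaces.

        Pass carrier_enis=0 for a BMS node, which has no carrier ENIs.
        """
        return carrier_enis + len(self.in_use) + len(self.idle)


if __name__ == "__main__":
    pool = NodeEniPool(["sub-eni-a", "sub-eni-b", "sub-eni-c"])
    print(pool.create_pod("pod-1"), pool.bound_total(carrier_enis=1))
```

Creating or deleting a pod moves an interface between the two collections, so the total bound to the node changes only when interfaces are pre-bound or unbound.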

Currently, Cloud Native Network 2.0 supports dynamic and threshold-based ENI pre-binding policies. The following table lists the scenarios.

| Policy | Dynamic ENI pre-binding policy (default) | Threshold-based ENI pre-binding policy |
|---|---|---|
| Management policy | nic-minimum-target: minimum number of ENIs (unused + used) bound to a node. nic-maximum-target: if the number of ENIs bound to a node exceeds this value, the system does not proactively pre-bind ENIs. nic-warm-target: extra ENIs that are pre-bound to a node. nic-max-above-warm-target: ENIs are unbound and reclaimed only when the number of idle ENIs minus nic-warm-target is greater than this threshold. | Low threshold of the number of bound ENIs: minimum number of ENIs (unused + used) bound to a node. High threshold of the number of bound ENIs: maximum number of ENIs that can be bound to a node. If the number of ENIs bound to a node exceeds this value, the system unbinds idle ENIs. |
| Application scenario | Accelerates pod startup while improving IP resource utilization. This mode applies to scenarios where the number of IP addresses in the container CIDR block is insufficient. For details about the preceding parameters, see Pre-binding ENIs for CCE Turbo Clusters. | Applies to scenarios where the number of IP addresses in the container CIDR block is sufficient and the number of pods on nodes changes sharply but stays within a certain range. |

- For clusters from 1.19.16-r2, 1.21.5-r0, and 1.23.3-r0 up to 1.19.16-r4, 1.21.7-r0, and 1.23.5-r0, only the nic-minimum-target and nic-warm-target parameters are supported. The threshold-based pre-binding policy takes priority over the dynamic ENI pre-binding policy.

- For clusters of 1.19.16-r4, 1.21.7-r0, 1.23.5-r0, 1.25.1-r0, and later, all four preceding parameters are supported. The dynamic ENI pre-binding policy takes priority over the threshold-based pre-binding policy.

CCE provides four parameters for the dynamic ENI pre-binding policy. Set these parameters properly.

| Parameter | Default value | Description | Suggestion |
|---|---|---|---|
| nic-minimum-target | 10 | Minimum number of ENIs bound to a node. The value can be a number or a percentage. Set both nic-minimum-target and nic-maximum-target to the same value type (number or percentage). | Set these parameters based on the number of pods. |
| nic-maximum-target | 0 | If the number of ENIs bound to a node exceeds the value of nic-maximum-target, the system does not proactively pre-bind ENIs. If the value of this parameter is greater than or equal to the value of nic-minimum-target, the check on the maximum number of pre-bound ENIs is enabled. Otherwise, the check is disabled. The value can be a number or a percentage. Set both nic-minimum-target and nic-maximum-target to the same value type (number or percentage). | Set these parameters based on the number of pods. |
| nic-warm-target | 2 | Extra ENIs are pre-bound after the ENIs covered by nic-minimum-target have been used up by pods. The value can only be a number. When nic-warm-target + the number of bound ENIs is greater than nic-maximum-target, the system pre-binds ENIs based on the difference between nic-maximum-target and the number of bound ENIs. | Set this parameter to the number of pods that can be scaled out instantly within 10 seconds. |
| nic-max-above-warm-target | 2 | Pre-bound ENIs are unbound and reclaimed only when the number of idle ENIs on a node minus nic-warm-target is greater than this threshold. The value can only be a number. | Set this parameter based on the difference between the number of pods that are frequently scaled on most nodes within minutes and the number of pods that are instantly scaled out on most nodes within 10 seconds. |

The preceding parameters support global configuration at the cluster level and differentiated configuration at the node pool level. The node pool configuration takes priority over the cluster configuration.
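The precedence rule can be expressed as a simple override. The sketch below is a hedged Python illustration: the dictionary representation and the function name `effective_settings` are assumptions for this example and are not the CCE configuration format. The default values are the ones listed in the table above.

```python
# Cluster-level defaults, taken from the parameter table.
CLUSTER_DEFAULTS = {
    "nic-minimum-target": 10,
    "nic-maximum-target": 0,
    "nic-warm-target": 2,
    "nic-max-above-warm-target": 2,
}


def effective_settings(cluster: dict, node_pool: dict) -> dict:
    """Merge settings so that node pool values override cluster values."""
    merged = dict(cluster)
    merged.update(node_pool)
    return merged


if __name__ == "__main__":
    # Hypothetical node pool that only overrides nic-warm-target.
    print(effective_settings(CLUSTER_DEFAULTS, {"nic-warm-target": 5}))
```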

- Number of ENIs to pre-bind = min(nic-maximum-target - Number of bound ENIs, max(nic-minimum-target - Number of bound ENIs, nic-warm-target - Number of idle ENIs))

- Number of ENIs to unbind = min(Number of idle ENIs - nic-warm-target - nic-max-above-warm-target, Number of bound ENIs - nic-minimum-target)

- Minimum number of ENIs to pre-bind = min(max(nic-minimum-target - Number of bound ENIs, nic-warm-target), nic-maximum-target - Number of bound ENIs)

- Maximum number of ENIs to pre-bind = max(nic-warm-target + nic-max-above-warm-target, Number of bound ENIs - nic-minimum-target)
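To make the first two formulas concrete, the following Python sketch evaluates them for a few node states. It is a hedged illustration rather than the CCE implementation. The function names are hypothetical, parameters are assumed to be plain numbers (not percentages), results are clamped at 0 as an assumption, and the nic-maximum-target cap is applied only when it is greater than or equal to nic-minimum-target, as the parameter table describes.

```python
def enis_to_prebind(bound, idle, minimum_target=10, maximum_target=0,
                    warm_target=2):
    """min(maximum - bound, max(minimum - bound, warm - idle))."""
    wanted = max(minimum_target - bound, warm_target - idle)
    # The maximum check is enabled only if maximum >= minimum.
    if maximum_target >= minimum_target:
        wanted = min(maximum_target - bound, wanted)
    return max(wanted, 0)  # assumption: a negative count means "nothing to do"


def enis_to_unbind(bound, idle, minimum_target=10, warm_target=2,
                   max_above_warm_target=2):
    """min(idle - warm - max_above_warm, bound - minimum)."""
    surplus = min(idle - warm_target - max_above_warm_target,
                  bound - minimum_target)
    return max(surplus, 0)  # assumption: clamp at zero


if __name__ == "__main__":
    # Node with 4 bound ENIs, 1 of them idle, default parameters.
    print(enis_to_prebind(bound=4, idle=1))
    # Node with 20 bound ENIs, 8 of them idle, default parameters.
    print(enis_to_unbind(bound=20, idle=8))
```

With the defaults, a node holding 4 bound ENIs is topped up to the minimum of 10, while a node holding 20 bound ENIs with 8 idle gives back only the idle ENIs beyond nic-warm-target + nic-max-above-warm-target.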

When a pod is created, an idle ENI (the one that has been unused the longest) is preferentially allocated from the pool. If no idle ENI is available, a new sub-ENI is bound to the pod.

When the pod is deleted, the corresponding ENI is released back to the node's pool of pre-bound ENIs, enters a 2-minute cooldown period, and can be bound to another pod. If the ENI is not bound to any pod within 2 minutes, it is released.

CCE provides the following configuration parameters for the threshold algorithm. You can set them based on the service plan, the cluster scale, and the number of ENIs that can be bound to a node.

- Low threshold of the number of bound ENIs: The default value is 0. It indicates the minimum number of ENIs (unused + used) bound to a node. Minimum number of pre-bound ENIs on an ECS node = Low threshold of the number of bound ENIs × Number of sub-ENIs on the node. Minimum number of pre-bound ENIs on a BMS node = Low threshold of the number of bound ENIs × Number of ENIs on the node.

- High threshold of the number of bound ENIs: The default value is 0. It indicates the maximum number of ENIs that can be bound to a node. If the number of ENIs bound to a node exceeds this value, the system unbinds idle ENIs. Maximum number of pre-bound ENIs on an ECS node = High threshold of the number of bound ENIs × Number of sub-ENIs on the node. Maximum number of pre-bound ENIs on a BMS node = High threshold of the number of bound ENIs × Number of ENIs on the node.

The container network component maintains a scalable ENI pool for each node.

- If the number of bound ENIs (used ENIs + pre-bound ENIs) is less than the number of pre-bound ENIs at the low threshold, ENIs are bound until the two numbers are equal.

- If the number of bound ENIs (used ENIs + pre-bound ENIs) is greater than the number of pre-bound ENIs at the high threshold and the number of pre-bound ENIs is greater than 0, pre-bound ENIs that have been unused for more than 2 minutes are released periodically. Release stops when the number of bound ENIs equals the number of pre-bound ENIs at the high threshold, or when the number of used ENIs exceeds that number and no pre-bound ENIs remain on the node.
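The two rules above behave like a low and high watermark on the pool. The following Python sketch shows one reconciliation step under simplifying assumptions: the function name `reconcile` is hypothetical, the low and high values are passed in as already-computed ENI counts, and the 2-minute idle condition is ignored.

```python
def reconcile(used: int, prebound: int, low: int, high: int) -> int:
    """Return the change in pre-bound ENIs: positive = bind, negative = release.

    low and high are the ENI counts derived from the low and high thresholds.
    """
    bound = used + prebound
    if bound < low:
        # Bind ENIs until the bound count reaches the low watermark.
        return low - bound
    if bound > high and prebound > 0:
        # Release idle ENIs down to the high watermark; ENIs in use by pods
        # are never released, so at most `prebound` can go.
        return -min(prebound, bound - high)
    return 0


if __name__ == "__main__":
    print(reconcile(used=2, prebound=1, low=5, high=10))   # below low
    print(reconcile(used=8, prebound=6, low=5, high=10))   # above high
    print(reconcile(used=12, prebound=3, low=5, high=10))  # used alone > high
```

In the third call the pods alone occupy more ENIs than the high watermark, so the step can only drain the pre-bound ENIs to 0, which matches the second stop condition in the text.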

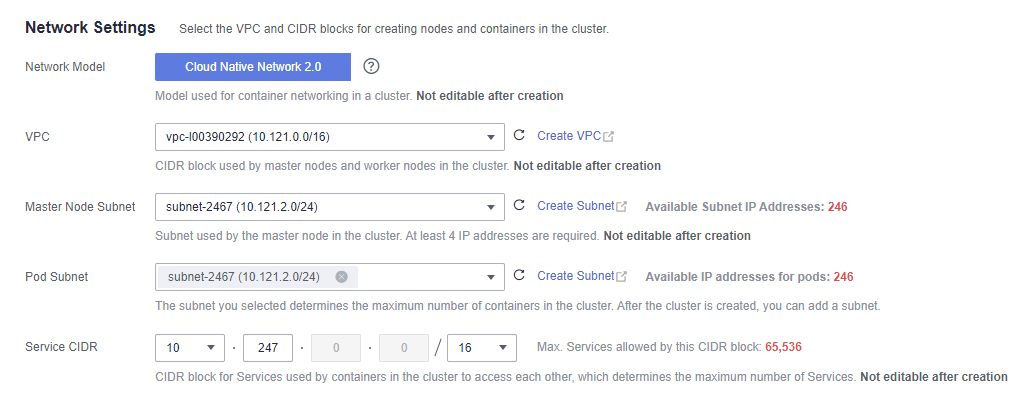

Recommendation for CIDR Block Planning

As described in Cluster Network Structure, the network addresses of a cluster can be divided into three parts: node network, container network, and Service network. When planning network addresses, consider the following aspects:

- The three CIDR blocks cannot overlap. Otherwise, a conflict occurs. No subnet (including subnets created from the secondary CIDR block) in the VPC where the cluster resides can conflict with the container and Service CIDR blocks.

- Ensure that each CIDR block has sufficient IP addresses.

- The IP addresses in the node CIDR block must match the cluster scale. Otherwise, nodes cannot be created due to insufficient IP addresses.

- The IP addresses in the container CIDR block must match the service scale. Otherwise, pods cannot be created due to insufficient IP addresses.

In the Cloud Native Network 2.0 model, the container CIDR block and the node CIDR block share the network addresses of a VPC. It is recommended that the container subnet and the node subnet be different subnets. Otherwise, containers or nodes may fail to be created due to insufficient IP resources.

In addition, a subnet can be added to the container CIDR block after a cluster is created to increase the number of available IP addresses. In this case, ensure that the added subnet does not conflict with other subnets in the container CIDR block.
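The overlap and capacity checks above can be done before a cluster is created. The following Python sketch uses the standard `ipaddress` module; the function name `find_overlaps` and all CIDR values are illustrative assumptions, not recommended settings.

```python
import ipaddress


def find_overlaps(named_cidrs: dict) -> list:
    """Return sorted pairs of names whose CIDR blocks overlap."""
    nets = {name: ipaddress.ip_network(cidr)
            for name, cidr in named_cidrs.items()}
    names = sorted(nets)
    return [(a, b)
            for i, a in enumerate(names)
            for b in names[i + 1:]
            if nets[a].overlaps(nets[b])]


if __name__ == "__main__":
    # Hypothetical plan: separate node and container subnets in one VPC.
    plan = {
        "node": "10.1.0.0/24",
        "container": "10.1.16.0/20",
        "service": "10.247.0.0/16",
    }
    print("conflicts:", find_overlaps(plan))
    # Raw size of the container block; the usable count is smaller because
    # some addresses in a subnet are reserved.
    print(ipaddress.ip_network(plan["container"]).num_addresses)
```

The same function can be rerun with an extra entry when a subnet is added to the container CIDR block after cluster creation.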

Ejemplo de acceso a Cloud Native Network 2.0

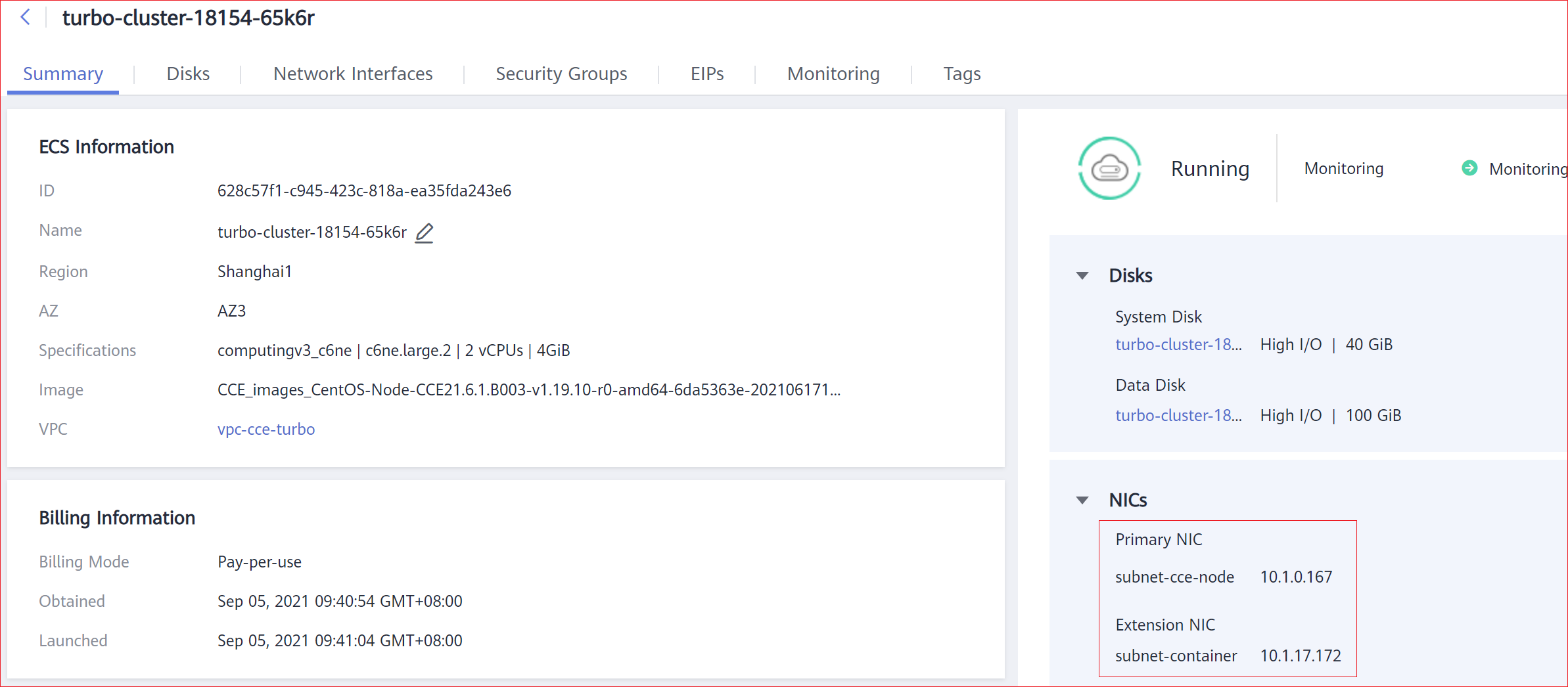

Cree un clúster de CCE Turbo, que contenga tres nodos de ECS.

Acceda a la página de detalles de un nodo. Puede ver que el nodo tiene una ENI principal y una ENI extendida, y ambas son las ENI. La ENI extendida pertenece al bloque CIDR de contenedor y se utiliza para montar un sub-ENI en el pod.

Cree una Deployment en el clúster.

    kind: Deployment
    apiVersion: apps/v1
    metadata:
      name: example
      namespace: default
    spec:
      replicas: 6
      selector:
        matchLabels:
          app: example
      template:
        metadata:
          labels:
            app: example
        spec:
          containers:
            - name: container-0
              image: 'nginx:perl'
              resources:
                limits:
                  cpu: 250m
                  memory: 512Mi
                requests:
                  cpu: 250m
                  memory: 512Mi
          imagePullSecrets:
            - name: default-secret

View the created pods.

    $ kubectl get pod -owide
    NAME                       READY   STATUS    RESTARTS   AGE   IP            NODE         NOMINATED NODE   READINESS GATES
    example-5bdc5699b7-54v7g   1/1     Running   0          7s    10.1.18.2     10.1.0.167   <none>           <none>
    example-5bdc5699b7-6dzx5   1/1     Running   0          7s    10.1.18.216   10.1.0.186   <none>           <none>
    example-5bdc5699b7-gq7xs   1/1     Running   0          7s    10.1.16.63    10.1.0.144   <none>           <none>
    example-5bdc5699b7-h9rvb   1/1     Running   0          7s    10.1.16.125   10.1.0.167   <none>           <none>
    example-5bdc5699b7-s9fts   1/1     Running   0          7s    10.1.16.89    10.1.0.144   <none>           <none>
    example-5bdc5699b7-swq6q   1/1     Running   0          7s    10.1.17.111   10.1.0.167   <none>           <none>

The IP addresses of all pods belong to sub-ENIs, which are mounted to the ENI (extended ENI) of the node.

For example, the extended ENI of node 10.1.0.167 is 10.1.17.172. On the Network Interfaces page of the Network Console, you can see that three sub-ENIs are mounted to the extended ENI 10.1.17.172, and their addresses are the IP addresses of the pods.

In the VPC, the IP addresses of the pods can be accessed successfully.
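The count of three sub-ENIs on node 10.1.0.167 can be cross-checked against the pod listing. The following Python sketch tallies pods per node from the rows shown in the example output; the helper name `pods_per_node` is hypothetical, and the rows are copied from that output with the trailing columns omitted.

```python
from collections import Counter

# Columns: NAME READY STATUS RESTARTS AGE IP NODE (from the example output).
SAMPLE_ROWS = """\
example-5bdc5699b7-54v7g 1/1 Running 0 7s 10.1.18.2 10.1.0.167
example-5bdc5699b7-6dzx5 1/1 Running 0 7s 10.1.18.216 10.1.0.186
example-5bdc5699b7-gq7xs 1/1 Running 0 7s 10.1.16.63 10.1.0.144
example-5bdc5699b7-h9rvb 1/1 Running 0 7s 10.1.16.125 10.1.0.167
example-5bdc5699b7-s9fts 1/1 Running 0 7s 10.1.16.89 10.1.0.144
example-5bdc5699b7-swq6q 1/1 Running 0 7s 10.1.17.111 10.1.0.167
"""


def pods_per_node(rows: str) -> Counter:
    """Count pods per node; each pod holds one sub-ENI on an ECS node."""
    counts = Counter()
    for line in rows.splitlines():
        fields = line.split()
        if len(fields) >= 7:
            counts[fields[6]] += 1  # seventh column is NODE
    return counts


if __name__ == "__main__":
    for node, count in sorted(pods_per_node(SAMPLE_ROWS).items()):
        print(node, count)
```

Node 10.1.0.167 hosts three of the six pods, which is consistent with the three sub-ENIs shown on its extended ENI.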