Configuring a Data Mapping Rule

Overview

This topic describes how to configure mapping information for a data integration task. Based on mappings between source data fields and destination data fields, ROMA Connect converts the obtained source data and writes it to the destination.

Constraints

- Do not use reserved keywords of the source or destination database as source or destination field names. Otherwise, data integration tasks may fail.

- If MRS Hive is used as the data source type at the destination and you need to configure partition field writing, see Mapping Configuration of MRS Hive Partition Fields.

Configuring Mapping Information

- On the Create Task page, configure mapping information in auto or manual mode.

- Auto Mapping Configuration

If metadata is defined at both the source and destination, you can use the automatic mapping mode to configure mapping information.

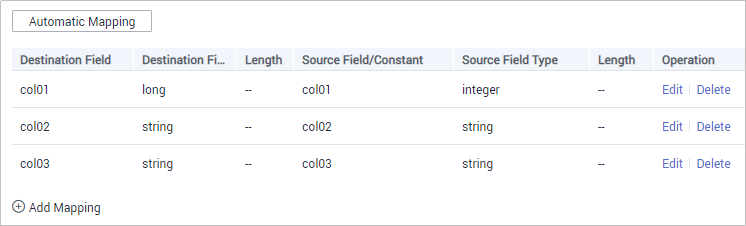

Click Automatic Mapping. A mapping rule between the source and destination data fields is automatically created. If the fields are inconsistent, click Edit to change them or click Add Mapping below.

Figure 1 Automatic mapping

- Manual Mapping Configuration

You can manually add mapping rules between source data fields and destination data fields. This mode applies to integration scenarios for all data types. You can configure each mapping rule as a key-value pair or enter a mapping rule script in the text box.

- Key-Value Pair Mode

By default, the key-value pair input mode is used. Click Add Field Mapping to add mapping rules from source data fields to destination data fields one by one.

- Text Mode

Click Text Mode and enter the mapping rule script in the text box. The format is as follows:

[{ "sourceKey": "a1", "targetKey": "b1" }, { "sourceKey": "a2", "targetKey": "b2" }]

sourceKey indicates a source data field, and targetKey indicates a destination data field. In the preceding example, source field a1 is mapped to destination field b1, and source field a2 is mapped to destination field b2.
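When there are many fields, the Text Mode script can be generated rather than typed by hand. The following is a minimal sketch in Python; the helper name build_mapping_script is hypothetical and not part of ROMA Connect, and it only assumes the sourceKey/targetKey format shown above.

```python
import json


def build_mapping_script(pairs):
    """Build a Text Mode mapping script from (source, destination) field pairs.

    Hypothetical helper for illustration; only the sourceKey/targetKey
    structure comes from the documented format.
    """
    rules = [{"sourceKey": src, "targetKey": dst} for src, dst in pairs]
    return json.dumps(rules)


# Same mapping as the documented example: a1 -> b1, a2 -> b2.
script = build_mapping_script([("a1", "b1"), ("a2", "b2")])
print(script)
```

The printed string can then be pasted into the Text Mode text box.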

- After the mapping information is configured, if you need to configure the abnormal data storage and post-integration operation, go to (Optional) Configuring Fault Information Storage and (Optional) Configuring the Post-Integration Operation. Otherwise, click Create to complete the data integration task configuration.

Mapping Configuration of MRS Hive Partition Fields

When the data source type at the destination is MRS Hive, data can be written to partition fields. Configure the partition fields as required.

The Source Field value that corresponds to a partition field must be entered manually. The requirements are as follows:

Format: {Partition field source field}.format({String parsing format},{Partition field parsing format},{year|month|day|hour|minute|second},{offset})

- If {Partition field source field} is of the String type, {String parsing format} must be specified.

- If {Partition field source field} is of the Timestamp type, {String parsing format} can be left blank.

- If {Partition field source field} is empty, the time when data is written to the destination is used as the partition field.
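The format and the three rules above can be sketched as a small string builder. This is an illustration only: the function partition_source_expr and its parameter names are hypothetical, the values come from the example in this section, and whether ROMA Connect is sensitive to spaces after the commas is not stated here, so the spacing is an assumption.

```python
# Time units allowed by the documented format.
UNITS = ("year", "month", "day", "hour", "minute", "second")


def partition_source_expr(partition_format, unit, offset,
                          source_field="", string_format=""):
    """Assemble the Source Field text for an MRS Hive partition field.

    Hypothetical helper. Leave source_field empty to use the write time,
    and leave string_format empty for a Timestamp source field.
    """
    if unit not in UNITS:
        raise ValueError(f"unit must be one of {UNITS}")
    return (f'{source_field}.format("{string_format}", '
            f'"{partition_format}", {unit}, {offset})')


# String source field: the string parsing format is required.
print(partition_source_expr("yyyyMM", "hour", 1, "createtime", "ddMMyyyy"))
# Timestamp source field: the string parsing format is left blank.
print(partition_source_expr("yyyyMM", "hour", 1, "createtime"))
# Empty source field: the write time is used as the partition value.
print(partition_source_expr("yyyyMM", "hour", 1))
```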

For example, assume that the partition field on the destination is yyyymm and the createtime field on the source supplies its value. The createtime values use the format ddMMyyyy (day, month, and year), and the partition field uses the format yyyyMM (year and month). If the partition field value must be one hour later than the source field value, set the source field of partition field yyyymm in the mapping information as follows:

- If the value of createtime is of the String type, set this parameter to createtime.format("ddMMyyyy", "yyyyMM", hour, 1).

- If the value of createtime is of the Timestamp type, set this parameter to createtime.format("","yyyyMM", hour, 1).

- If the time when data is written to the destination is used as the partition field, set this parameter to .format("","yyyyMM", hour, 1).
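To see what the hour offset does to the resulting partition value, the conversion can be reproduced outside ROMA Connect. The sketch below is not ROMA Connect's implementation: the function partition_value is hypothetical, and the translation of the two patterns into Python strftime directives is an assumption made for this example.

```python
from datetime import datetime, timedelta

# Assumed Python equivalents of the two patterns used in this section.
PATTERNS = {"ddMMyyyy": "%d%m%Y", "yyyyMM": "%Y%m"}


def partition_value(source_value, string_format, partition_format, hours):
    """Parse the source value, shift it by `hours`, and format the result."""
    if isinstance(source_value, str):
        # String source field: parse it with the string parsing format.
        moment = datetime.strptime(source_value, PATTERNS[string_format])
    else:
        # Timestamp source field: no string parsing format is needed.
        moment = source_value
    shifted = moment + timedelta(hours=hours)
    return shifted.strftime(PATTERNS[partition_format])


# String value 31 December 2023 (midnight) plus one hour stays in December.
print(partition_value("31122023", "ddMMyyyy", "yyyyMM", 1))  # 202312
# Timestamp 23:30 on 31 December 2023 plus one hour rolls into January 2024.
print(partition_value(datetime(2023, 12, 31, 23, 30), "", "yyyyMM", 1))  # 202401
```

The second call shows why the offset matters: a one-hour shift near a month boundary can place a record in a different partition.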
