Running a Hadoop Streaming Job

Hadoop Streaming is a utility provided by Apache Hadoop that lets you create and run MapReduce jobs using any executable (for example, a Python or shell script) as the mapper and/or the reducer, instead of Java code. Hadoop Streaming exchanges data with these executables through standard input (STDIN) and standard output (STDOUT). This lets developers quickly write distributed computing jobs in existing scripting languages, which makes it especially suitable for rapid prototyping and cross-language integration.
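
The STDIN/STDOUT contract can be tried out locally before a job is submitted. The following is a minimal sketch, not part of Hadoop or MRS: simulate_streaming is a hypothetical helper that pipes input through a mapper command, a sort (standing in for the sort that MapReduce performs between the map and reduce phases), and a reducer command. The sample input lines are also an assumption made for illustration.

```shell
# Hypothetical local stand-in for a streaming job: mapper | sort | reducer.
# It runs on a single machine and does not contact a Hadoop cluster.
simulate_streaming() {
  mapper=$1
  reducer=$2
  # Each stage reads STDIN and writes STDOUT, as Hadoop Streaming expects.
  sh -c "$mapper" | LC_ALL=C sort | sh -c "$reducer"
}

# Identity mapper ("cat") and a line-counting reducer ("wc -l").
printf 'Hello Hadoop\nHello Streaming\n' | simulate_streaming "cat" "wc -l"
```

Any command that behaves correctly in this pipeline reads records from STDIN and writes results to STDOUT, which is the only interface Hadoop Streaming relies on.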

MRS allows you to submit and run your own programs and get the results. This section describes how to submit a Hadoop Streaming job in an MRS cluster.

You can create and submit a job on the MRS console, or submit a job from the command line on the MRS cluster client.

Prerequisites

- You have uploaded the program packages and data files required by jobs to OBS or HDFS.

- If the job program needs to read and analyze data in the OBS file system, you need to configure storage-compute decoupling for the MRS cluster. For details, see Configuring Storage-Compute Decoupling for an MRS Cluster.

Notes and Constraints

- When the policy of the user group to which an IAM user belongs changes from MRS ReadOnlyAccess to MRS CommonOperations, MRS FullAccess, or MRS Administrator, or vice versa, wait for five minutes after user synchronization for the System Security Services Daemon (SSSD) cache of the cluster node to refresh. Submit a job on the MRS console after the new policy takes effect. Otherwise, the job submission may fail.

- If the IAM username contains spaces (for example, admin 01), you cannot create jobs on the MRS console.

Submitting a Job on the MRS Console

- Prepare the application and data.

This section uses a word count application as an example. You can obtain the sample program from the MRS cluster client (Client installation directory/HDFS/hadoop/share/hadoop/tools/lib/hadoop-streaming-XXX.jar).

To run the application, you need to specify the following parameters:

- input: Input file or directory. You can configure the path in the HDFS or OBS file system.

For example, upload data file data1.txt. The file content is as follows:

Hello Hadoop Hello Streaming Hadoop is awesome

- output: Directory where the job writes its results. Set this parameter to a directory that does not exist. The directory is created automatically when the application runs.

- mapper: An executable of a Map job, which can be a system command or a script path.

- reducer: An executable of a Reduce job, which can be a system command or a script path.
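
As an illustration of what a mapper and reducer executable might look like, the following sketch implements a word count with standard shell tools. The function names wc_mapper and wc_reducer are hypothetical, and the three-line layout of the sample data is an assumption. In a real job, each function body would be saved as its own executable script and made available to the cluster, for example with the Hadoop generic option -files.

```shell
# Hypothetical mapper: emit "word<TAB>1" for every whitespace-separated word.
wc_mapper() {
  tr -s '[:space:]' '\n' | awk 'NF { printf "%s\t1\n", $0 }'
}

# Hypothetical reducer: input arrives sorted by key, so equal words are
# adjacent; sum the counts of each run and print "word<TAB>total".
wc_reducer() {
  awk -F '\t' '
    $1 != key { if (key != "") printf "%s\t%d\n", key, sum; key = $1; sum = 0 }
    { sum += $2 }
    END { if (key != "") printf "%s\t%d\n", key, sum }
  '
}

# Local dry run; sort stands in for the MapReduce shuffle-and-sort phase.
printf 'Hello Hadoop\nHello Streaming\nHadoop is awesome\n' |
  wc_mapper | LC_ALL=C sort | wc_reducer
```

The reducer keeps only the current key and a running sum in memory, which relies on the input being sorted by key.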

- Log in to the MRS console.

- On the Active Clusters page, select a running cluster and click its name to switch to the cluster details page.

- On the Dashboard page, click Synchronize on the right side of IAM User Sync to synchronize IAM users.

Perform this step only when Kerberos authentication is enabled for the cluster.

After IAM user synchronization, wait for five minutes before submitting a job. For details about IAM user synchronization, see Synchronizing IAM Users to MRS.

- Click Job Management. On the displayed job list page, click Create.

- Set Type to HadoopStreaming. Configure job information by referring to Table 1.

Table 1 Job parameters

| Parameter | Description | Example |
| --- | --- | --- |
| Name | Job name. It can contain 1 to 64 characters. Only letters, digits, hyphens (-), and underscores (_) are allowed. | hadoop_job |
| Program Parameter | (Optional) Used to configure optimization parameters such as threads, memory, and vCPUs for the job to optimize resource usage and improve job execution performance. Table 2 describes the common parameters of a running program. | - |
| Parameters | (Optional) Key parameters for program execution. The parameters are specified by the function of the user's program. MRS is only responsible for loading the parameters. Multiple parameters are separated by spaces. The value can contain a maximum of 150,000 characters and can be left blank. The value cannot contain special characters such as ;\|&><'$ CAUTION: When entering a parameter containing sensitive information (for example, a login password), you can add an at sign (@) before the parameter name to encrypt the parameter value. This prevents the sensitive information from being persisted in plaintext. When you view job information on the MRS console, the sensitive information is displayed as *. Example: username=testuser @password=User password | -input obs://mrs-demotest/input/data1.txt -output obs://mrs-demotest/output/demo2 -mapper "cat" -reducer "wc -l" |
| Service Parameter | (Optional) Service parameters for the job. To modify the current job, change this parameter. For permanent changes to the entire cluster, refer to Modifying the Configuration Parameters of an MRS Cluster Component and modify the cluster component parameters accordingly. For example, if decoupled storage and compute is not configured for the MRS cluster and jobs need to access OBS using AK/SK, you can add the following service parameters: fs.obs.access.key (key ID for accessing OBS) and fs.obs.secret.key (key corresponding to the key ID for accessing OBS). | - |
| Command Reference | Commands submitted to the background for execution when a job is submitted. | N/A |
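
The Name and Parameters constraints can be checked locally before the values are pasted into the console. The sketch below is an assumption-laden pre-check, not an MRS tool: valid_job_name and valid_job_params are hypothetical helpers, and the rejected character set covers only the characters listed above, which the table introduces with "such as" and so may not be exhaustive.

```shell
# Hypothetical check: 1 to 64 characters; letters, digits, hyphens, underscores.
valid_job_name() {
  printf '%s' "$1" | LC_ALL=C grep -Eq '^[A-Za-z0-9_-]{1,64}$'
}

# Hypothetical check: at most 150,000 characters (blank is allowed) and none
# of the special characters ; | & > < ' $ named in the table.
valid_job_params() {
  [ "${#1}" -le 150000 ] || return 1
  if printf '%s' "$1" | grep -q "[;|&><'\$]"; then
    return 1
  fi
  return 0
}

valid_job_name "hadoop_job" && echo "name ok"
valid_job_params '-input obs://mrs-demotest/input/data1.txt -output obs://mrs-demotest/output/demo2 -mapper "cat" -reducer "wc -l"' && echo "parameters ok"
```

Double quotation marks, as used around "cat" and "wc -l", are not in the rejected set, so the example value from the table passes the check.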

Table 2 Program parameters

| Parameter | Description | Example Value |
| --- | --- | --- |
| -ytm | Memory size of each TaskManager container. (You can select a unit as required, and the default value is MB.) | 1024 |
| -yjm | Memory size of the JobManager container. (You can select a unit as required, and the default value is MB.) | 1024 |
| -yn | Number of YARN containers allocated to applications. The value is the same as the number of TaskManagers. For MRS 3.x or later, the -yn parameter is not supported. | 2 |
| -ys | Number of TaskManager cores | 2 |
| -ynm | Custom name of an application on YARN | test |
| -c | Class of the program entry method (for example, the main or getPlan() method). This parameter is required only when the JAR file does not specify the class in its manifest. | com.bigdata.mrs.test |

- Confirm job configuration information and click OK.

- After the job is submitted, you can view the job running status and execution result in the job list. After the job status changes to Completed, you can view the analysis result of related programs.

In this example, you can view the data statistics in the specified OBS output directory.

Figure 1 Viewing the job execution result

During job execution, you can click View Log or choose More > View Details to view program execution details. If the job execution is abnormal or fails, you can locate the fault based on the error information.

A created job cannot be modified. If you need to execute the job again, you can click Clone to quickly copy the created job and adjust required parameters.

Submitting a Job Using the Cluster Client

- Prepare the application and data.

This section uses a word count application as an example. You can obtain the sample program from the MRS cluster client (Client installation directory/HDFS/hadoop/share/hadoop/tools/lib/hadoop-streaming-XXX.jar).

To run the application, you need to specify the following parameters:

- input: Input file or directory. You can configure the path in the HDFS or OBS file system.

For example, upload data file data1.txt. The file content is as follows:

Hello Hadoop Hello Streaming Hadoop is awesome

- output: Directory where the job writes its results. Set this parameter to a directory that does not exist. The directory is created automatically when the application runs.

- mapper: An executable of a Map job, which can be a system command or a script path.

- reducer: An executable of a Reduce job, which can be a system command or a script path.

- If Kerberos authentication has been enabled for the current cluster, create a service user with job submission permissions on FusionInsight Manager in advance. For details, see Creating an MRS Cluster User.

In this example, create human-machine user testuser, and associate the user with user group supergroup and role System_administrator.

- Install an MRS cluster client.

For details, see Installing an MRS Cluster Client.

The MRS cluster comes with a client installed for job submission by default, which can also be used directly. In MRS 3.x or later, the default client installation path is /opt/Bigdata/client on the Master node. In versions earlier than MRS 3.x, the default client installation path is /opt/client on the Master node.

- Log in to the node where the client is located as the MRS cluster client installation user.

For details, see Logging In to an MRS Cluster Node.

- Run the following command to go to the client installation directory:

cd /opt/Bigdata/client

Run the following command to load the environment variables:

source bigdata_env

If Kerberos authentication is enabled for the current cluster, run the following command to authenticate the user. If Kerberos authentication is disabled for the current cluster, you do not need to run the kinit command.

kinit testuser
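
The three commands above can be wrapped in one helper for repeated use. This is a minimal sketch under stated assumptions: prepare_client_session is a hypothetical function name, the default directory is the MRS 3.x path mentioned earlier, and kinit is run only when a username is passed, which is how the sketch models a cluster with Kerberos authentication enabled.

```shell
# Hypothetical helper: enter the client directory, load the environment
# variables, and authenticate only if a Kerberos user is given.
prepare_client_session() {
  client_dir=${1:-/opt/Bigdata/client}
  kerberos_user=${2:-}
  cd "$client_dir" || return 1
  # Same effect as "source bigdata_env" when run from the client directory.
  . ./bigdata_env || return 1
  if [ -n "$kerberos_user" ]; then
    kinit "$kerberos_user" || return 1
  fi
}
```

For example, prepare_client_session /opt/Bigdata/client testuser would be used on a cluster with Kerberos authentication enabled, and prepare_client_session /opt/client on an earlier-version cluster without it.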

- Run the following command to submit a Hadoop Streaming job:

hadoop jar HDFS/hadoop/share/hadoop/tools/lib/hadoop-streaming-*.jar -input /tmp/input -output /tmp/output -mapper "cat" -reducer "wc -l"

In this example, the wc -l command is used to count the number of lines. After the job is executed, you can run the following command to view the result data generated in the specified HDFS directory:

hdfs dfs -cat /tmp/output/part-00000

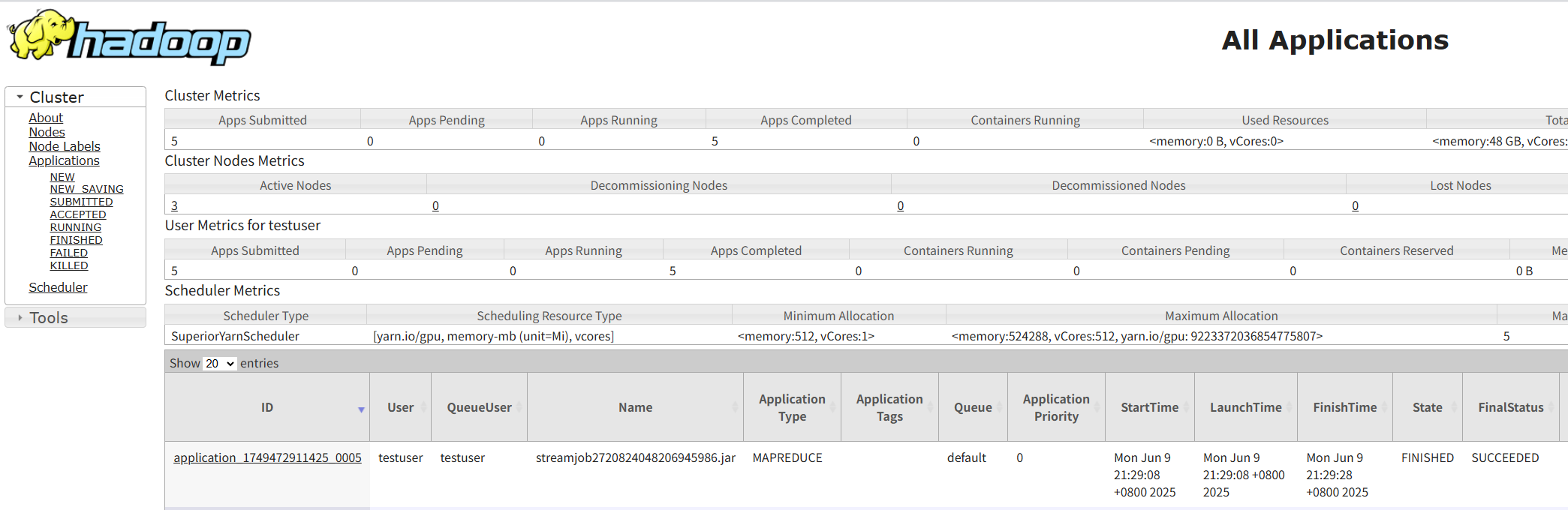
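
Because the output directory must not exist before the job runs, a submission script may check for it first. The following sketch assumes it is run from the client installation directory after the environment variables are loaded. submit_streaming_job is a hypothetical wrapper name, while the hadoop jar command is the one shown in this step and hdfs dfs -test -e is the standard HDFS shell existence check.

```shell
# Hypothetical wrapper around the submission command shown in this step.
submit_streaming_job() {
  input=$1
  output=$2
  # Refuse to submit if the output directory already exists.
  if hdfs dfs -test -e "$output"; then
    echo "Output path $output already exists. Specify a new directory." >&2
    return 1
  fi
  hadoop jar HDFS/hadoop/share/hadoop/tools/lib/hadoop-streaming-*.jar \
    -input "$input" -output "$output" -mapper "cat" -reducer "wc -l" || return 1
  # Print every part file; the quoted glob is expanded by HDFS, not the shell.
  hdfs dfs -cat "$output/part-*"
}
```

Calling submit_streaming_job /tmp/input /tmp/output reproduces the example in this step and prints the result when the job finishes.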

- Log in to FusionInsight Manager as user testuser, choose Cluster > Services > Yarn, and click the hyperlink on the right of ResourceManager Web UI to access the YARN Web UI. Click the application ID of the job to view the job running information and related logs.

Figure 2 Viewing Hadoop job details

Helpful Links

- If Kerberos authentication is enabled for a cluster but IAM user synchronization has not been performed, an error is reported when you submit a job. For details about how to handle the error, see What Can I Do If the System Displays a Message Indicating that the Current User Does Not Exist on Manager When I Submit a Job?

- For more MRS application development sample programs, see MRS Developer Guide.
