Running a Spark SQL Job

Spark SQL is a Spark module for structured data processing. It integrates relational SQL queries with the distributed computing capabilities of Spark and allows you to perform operations on data using SQL statements or DataFrame/Dataset APIs. Spark SQL jobs are characterized by high performance, ease of use, and high scalability, making them suitable for batch processing, stream processing (such as Structured Streaming), and interactive queries.

MRS allows you to submit and run your own programs, and get the results. This section will show you how to submit a Spark SQL job in an MRS cluster.

A Spark SQL job supports both SQL statements and scripts. If SQL statements contain sensitive information, you can submit the job using a script.

You can create a job online and submit it for running on the MRS console, or submit a job in CLI mode on the MRS cluster client.

Video Tutorial

This tutorial demonstrates how to submit and view a Spark SQL job on the cluster management page of the MRS console.

The UI may vary depending on the version. This tutorial is for reference only.

Prerequisites

- You have uploaded the program packages and data files required by jobs to OBS or HDFS.

- If the job program needs to read and analyze data in the OBS file system, you need to configure storage-compute decoupling for the MRS cluster. For details, see Configuring Storage-Compute Decoupling for an MRS Cluster.

Notes and Constraints

- When the policy of the user group to which an IAM user belongs changes from MRS ReadOnlyAccess to MRS CommonOperations, MRS FullAccess, or MRS Administrator, or vice versa, wait for five minutes after user synchronization for the System Security Services Daemon (SSSD) cache of the cluster node to refresh. Submit a job on the MRS console after the new policy takes effect. Otherwise, the job submission may fail.

- If the IAM username contains spaces (for example, admin 01), you cannot create jobs on the MRS console.

Submitting a Job

You can create and run jobs online using the management console or submit jobs by running commands on the cluster client.

- Develop an SQL script.

A Spark SQL job can execute SQL statements directly or execute an SQL script file.

In this section, the script for creating a Hive data table and inserting data in Spark is used as an example. Upload the developed spark_basic.sql script file to a specified directory on HDFS, OBS, or an MRS cluster node. For details, see Uploading Application Data to an MRS Cluster.

-- Create a table. CREATE TABLE IF NOT EXISTS test_table1 ( id INT, name STRING, age INT, gender STRING ) USING parquet OPTIONS ( path '/user/hive/warehouse/test_table1', compression 'snappy' ); -- Insert a single row of data. INSERT INTO test_table1 VALUES (1, 'lisi', 30, 'Male'); - Log in to the MRS console.

- On the Active Clusters page, select a running cluster and click its name to switch to the cluster details page.

- On the Dashboard page, click Synchronize on the right side of IAM User Sync to synchronize IAM users.

Perform this step only when Kerberos authentication is enabled for the cluster.

After IAM user synchronization, wait for five minutes before submitting a job. For details about IAM user synchronization, see Synchronizing IAM Users to MRS.

- Click Job Management. On the displayed job list page, click Create.

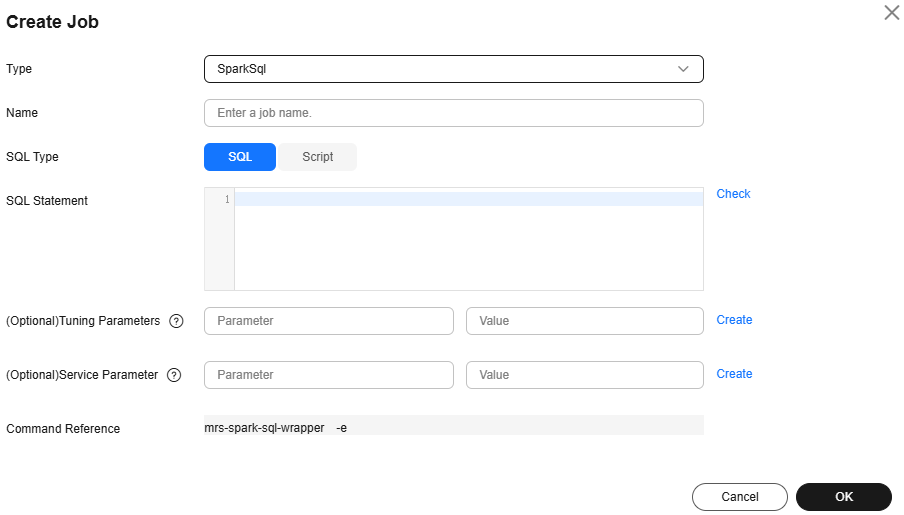

- Set Type to SparkSql and configure Spark SQL information by referring to Table 1.

Figure 1 Creating a Spark SQL job

Table 1 Job parameters Parameter

Description

Example

Name

Job name. It can contain 1 to 64 characters. Only letters, digits, hyphens (-), and underscores (_) are allowed.

spark_sql_job

SQL Type

Submission type of the SQL statement

- SQL: Run the entered SQL statement.

- Script: Load SQL scripts from HDFS or OBS to run SQL statements.

Script

SQL Statement

This parameter is valid only when SQL Type is set to SQL. Enter the SQL statement to be executed, and then click Check to check whether the SQL statement is correct.

If you want to submit and execute multiple statements at the same time, use semicolons (;) to separate them.

-

SQL File

This parameter is available only when SQL Type is set to Script. It specifies the path of the SQL script file to be executed. You can enter the path or click HDFS or OBS to select a file.

- The path can contain a maximum of 1,023 characters. It cannot contain special characters (;|&>,<'$) and cannot be left blank or all spaces.

- The OBS program path starts with obs://. The HDFS program path starts with hdfs://hacluster, for example, hdfs://hacluster/user/XXX.jar.

- The script file must end with .sql.

obs://mrs-demotest/program/spark_basic.sql

Tunning Parameters

(Optional) Used to configure optimization parameters such as threads, memory, and vCPUs for the job to optimize resource usage and improve job execution performance.

Table 2 lists the common program parameters of Spark SQL jobs. You can configure the parameters based on the execution program and cluster resources. If you do not configure the parameters, the default values of the cluster are used.

-

Service Parameter

(Optional) Service parameters for the job.

To modify the current job, change this parameter. For permanent changes to the entire cluster, refer to Modifying the Configuration Parameters of an MRS Cluster Component and modify the cluster component parameters accordingly.

For example, if decoupled storage and compute is not configured for the MRS cluster and jobs need to access OBS using AK/SK, you can add the following service parameters:

- fs.obs.access.key: key ID for accessing OBS.

- fs.obs.secret.key: key corresponding to the key ID for accessing OBS.

-

Command Reference

Commands submitted to the background for execution when a job is submitted.

N/A

Table 2 Program parameters Parameter

Description

Example

--conf

Spark job configuration in property=value format.

For more information about parameters required for submitting Spark jobs, see https://spark.apache.org/docs/latest/submitting-applications.html.

spark.executor.memory=2G

--driver-memory

Amount of memory to allocate to the driver process in a Spark job.

For example, if the allocated memory is 2 GB, set this parameter to 2g. If the allocated memory is 512 MB, set this parameter to 512m.

2g

--num-executors

Number of executors to run in a Spark job.

5

--executor-cores

Number of CPU cores to use on each executor in a Spark job.

2

--executor-memory

Amount of memory to use per executor in a Spark job.

For example, if the allocated memory is 2 GB, set this parameter to 2g. If the allocated memory is 512 MB, set this parameter to 512m.

2g

--jars

Additional dependency packages of a task, which is used to add the external dependency packages to the task.

-

- Confirm job configuration information and click OK.

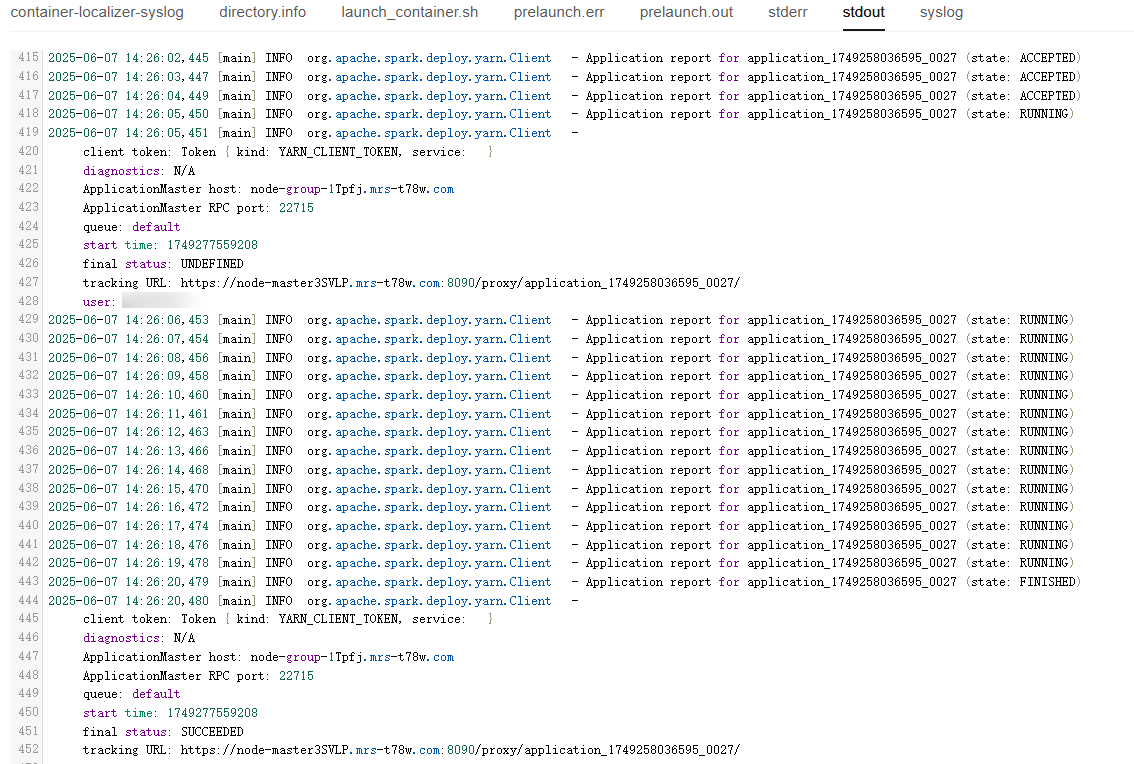

- After the job is submitted, you can view the job running status and execution result in the job list. After the job status changes to Completed, you can view the analysis result of related programs.

In this sample program, you can click View Log to view the detailed execution process of the Spark SQL job.

Figure 2 Viewing job execution details

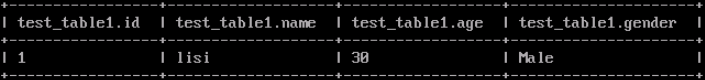

After the job is successfully executed, connect to Hive Beeline through the client to view the Hive table data.

- If Kerberos authentication has been enabled for the current cluster, create a service user with job submission permissions on FusionInsight Manager in advance. For details, see Creating an MRS Cluster User.

In this example, create human-machine user testuser, and associate the user with user group supergroup and role System_administrator.

- Install an MRS cluster client.

For details, see Installing an MRS Cluster Client.

The MRS cluster comes with a client installed for job submission by default, which can also be used directly. In MRS 3.x or later, the default client installation path is /opt/Bigdata/client on the Master node. In versions earlier than MRS 3.x, the default client installation path is /opt/client on the Master node.

- Run the following command to go to the client installation directory:

cd /opt/Bigdata/client

Run the following command to load the environment variables:

source bigdata_env

If Kerberos authentication is enabled for the current cluster, run the following command to authenticate the user. If Kerberos authentication is disabled for the current cluster, you do not need to run the kinit command.

kinit testuser

- Run the following command to access Hive Beeline and view the generated data:

beeline

Run the following command to view the generated Hive table data:

select * from test_table1;

Figure 3 Viewing Hive table data

- If Kerberos authentication has been enabled for the current cluster, create a service user with job submission permissions on FusionInsight Manager in advance. For details, see Creating an MRS Cluster User.

- Develop an SQL script.

A Spark SQL job can execute SQL statements directly or execute an SQL script file.

In this section, the script for creating a Hive data table and inserting data in Spark is used as an example. Upload the developed spark_basic.sql script file to a specified directory on HDFS, OBS, or an MRS cluster node. For details, see Uploading Application Data to an MRS Cluster.

-- Create a table. CREATE TABLE IF NOT EXISTS test_table1 ( id INT, name STRING, age INT, gender STRING ) USING parquet OPTIONS ( path '/user/hive/warehouse/test_table1', compression 'snappy' ); -- Insert a single row of data. INSERT INTO test_table1 VALUES (1, 'lisi', 30, 'Male'); - If Kerberos authentication has been enabled for the current cluster, create a service user with job submission permissions on FusionInsight Manager in advance. For details, see Creating an MRS Cluster User.

In this example, create human-machine user testuser, and associate the user with user group supergroup and role System_administrator.

- Install an MRS cluster client.

For details, see Installing an MRS Cluster Client.

The MRS cluster comes with a client installed for job submission by default, which can also be used directly. In MRS 3.x or later, the default client installation path is /opt/Bigdata/client on the Master node. In versions earlier than MRS 3.x, the default client installation path is /opt/client on the Master node.

- Log in to the node where the client is located as the MRS cluster client installation user.

For details, see Logging In to an MRS Cluster Node.

- Run the following command to go to the client installation directory:

cd /opt/Bigdata/client

Run the following command to load the environment variables:

source bigdata_env

If Kerberos authentication is enabled for the current cluster, run the following command to authenticate the user. If Kerberos authentication is disabled for the current cluster, you do not need to run the kinit command.

kinit testuser

- Run the following command to switch to the Spark component directory:

cd $SPARK_HOME

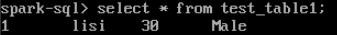

- Run a spark-sql command to execute the SQL script.

./bin/spark-sql -f /opt/spark_basic.sql

After running the spark-sql command, you can directly execute SQL statements.

spark-sql

After the script is executed, view the generated Hive table data.

select * from test_table1;

Figure 4 Viewing table data

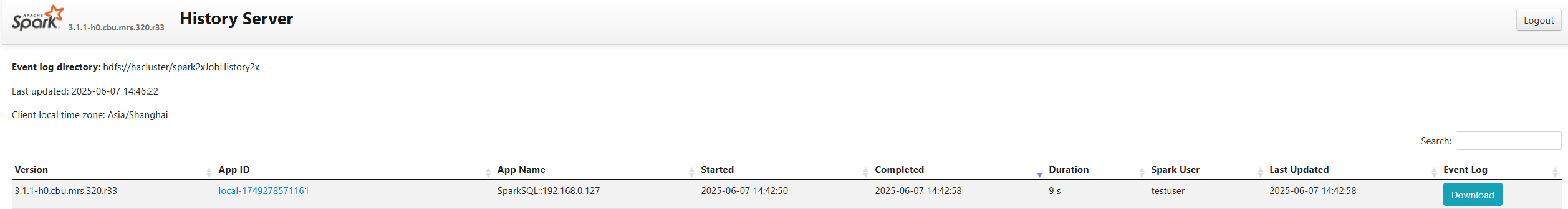

- Log in to FusionInsight Manager as user testuser, choose Cluster > Services > Spark or Spark2x, click the hyperlink on the right of Spark WebUI to access the Spark Web UI, and view Spark job running information and event logs.

Figure 5 Viewing Spark job details

Helpful Links

- For details about the differences between the client and cluster modes of Spark jobs, see What Are the Differences Between the Client Mode and Cluster Mode of Spark Jobs?

- Kerberos authentication has been enabled for a cluster and IAM user synchronization has not been performed. When you submit a job, an error is reported. For details about how to handle the error, see What Can I Do If the System Displays a Message Indicating that the Current User Does Not Exist on Manager When I Submit a Job?

- For more Spark job troubleshooting cases, see Job Management FAQs and Spark Troubleshooting.

- For more MRS application development sample programs, see MRS Developer Guide.

Feedback

Was this page helpful?

Provide feedbackThank you very much for your feedback. We will continue working to improve the documentation.See the reply and handling status in My Cloud VOC.

For any further questions, feel free to contact us through the chatbot.

Chatbot