Self-Hosted Redis Cluster Migration with redis-shake (RDB)

redis-shake is an open-source tool for migrating data online or offline (by importing backup files) between Redis Clusters. Data can be migrated to DCS Redis Cluster instances seamlessly because DCS Redis Cluster inherits the native Redis Cluster design. If the source Redis and the target Redis cannot be connected, or the source Redis is deployed on other clouds, you can migrate data by importing backup files.

The following describes how to use redis-shake on Linux to migrate a self-hosted Redis Cluster to a DCS Redis Cluster instance offline.

Notes and Constraints

To migrate to an instance with SSL enabled, disable the SSL setting first. For details, see Transmitting DCS Redis Data with Encryption Using SSL.

Prerequisites

- A DCS Redis Cluster instance has been created. For details about how to create one, see Buying a DCS Redis Instance.

The memory of the target Redis instance cannot be smaller than that of the source Redis.

- An Elastic Cloud Server (ECS) has been created. For details about how to create an ECS, see Purchasing an ECS. Select the same VPC, subnet, and security group as the DCS Redis Cluster instance.

Obtaining Information of the Source and Target Redis Nodes

- Connect to the source and target Redis instances separately by referring to Connecting to Redis on redis-cli.

- In online migration of Redis Clusters, the migration must be performed node by node. Run the following command to query the IP addresses and ports of all nodes in both the source and target Redis Clusters.

redis-cli -h {redis_address} -p {redis_port} -a {redis_password} cluster nodes

{redis_address} indicates the Redis connection address, {redis_port} indicates the Redis port, and {redis_password} indicates the Redis connection password.

In the output, obtain the IP addresses and ports of all the master nodes.
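Picking the master nodes out of the cluster nodes output by hand is error-prone on larger clusters. The following is a minimal sketch, not part of redis-shake, that filters the output for masters and joins their addresses with semicolons. The function name masters_from_cluster_nodes is hypothetical, and the sketch assumes the standard cluster nodes layout (address in the second field, flags in the third).

```shell
# Read `cluster nodes` output on stdin and print the master addresses
# as one semicolon-separated line.
masters_from_cluster_nodes() {
    awk '
        # Field 3 holds the flags (for example "myself,master").
        # Nodes whose flags contain "fail" (fail or fail?) are skipped.
        $3 ~ /master/ && $3 !~ /fail/ {
            split($2, addr, "@")   # drop the "@cluster-bus-port" suffix
            print addr[1]
        }
    ' | paste -sd ';' -
}
```

For example, pipe the command above into it: redis-cli -h {redis_address} -p {redis_port} -a {redis_password} cluster nodes | masters_from_cluster_nodes. The resulting line has the same form as the address lists used later in redis-shake.conf.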

Installing RedisShake

- Log in to the ECS.

- Run the following command on the ECS to download redis-shake. This section uses v2.1.2 as an example. You can also download other redis-shake versions as required.

wget https://github.com/tair-opensource/RedisShake/releases/download/release-v2.1.2-20220329/release-v2.1.2-20220329.tar.gz

- Decompress the redis-shake file.

tar -xvf release-v2.1.2-20220329.tar.gz

If the source cluster is deployed in the data center intranet, install redis-shake on the intranet server. Export the source cluster backup file by referring to Exporting the Backup File. Upload the backup to the ECS.

Exporting the Backup File

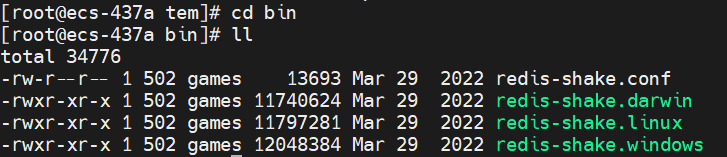

- Go to the redis-shake directory.

cd bin

- Edit the redis-shake.conf file by providing the following information about all the masters of the source:

vim redis-shake.conf

The modification is as follows:

source.type = cluster
# If there is no password, skip the following parameter.
source.password_raw = {source_redis_password}
# IP addresses and port numbers of all masters of the source Redis Cluster, which are separated by semicolons (;).
source.address = {master1_ip}:{master1_port};{master2_ip}:{master2_port}...{masterN_ip}:{masterN_port}

Press Esc to exit the editing mode and enter :wq!. Press Enter to save the configuration and exit the editing interface.

- Run the following command to export the RDB file:

./redis-shake -type dump -conf redis-shake.conf

If the following information is displayed in the execution log, the backup file is exported successfully:

execute runner[*run.CmdDump] finished!
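If the export runs unattended, the success message can be checked with a script instead of by eye. The sketch below assumes the redis-shake output was saved to a file (for example by redirecting it); the function name dump_finished and the log path are hypothetical.

```shell
# Return success (exit status 0) if the given log file contains the
# completion message of the dump step.
dump_finished() {
    # -F treats the pattern as a fixed string, so "[" and "*" are literal.
    grep -qF 'execute runner[*run.CmdDump] finished!' "$1"
}
```

A call such as dump_finished dump.log && echo "export complete" then reports success only when the message is present in the file.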

Importing the Backup File

- Upload the RDB file (or files) to the ECS. The ECS must be able to connect to the target DCS instance.

- Edit the redis-shake.conf file by providing the following information about all the masters of the target:

vim redis-shake.conf

The modification is as follows:

target.type = cluster
# If there is no password, skip the following parameter.
target.password_raw = {target_redis_password}
# IP addresses and port numbers of all masters of the target instance, which are separated by semicolons (;).
target.address = {master1_ip}:{master1_port};{master2_ip}:{master2_port}...{masterN_ip}:{masterN_port}
# List the RDB files to be imported, separated by semicolons (;).
rdb.input = {local_dump.0};{local_dump.1};{local_dump.2};{local_dump.3}

Press Esc to exit the editing mode and enter :wq!. Press Enter to save the configuration and exit the editing interface.

- Run the following command to import the RDB file to the target instance:

./redis-shake -type restore -conf redis-shake.conf

If the following information is displayed in the execution log, the backup file is imported successfully:

Enabled http stats, set status (incr), and wait forever.
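When the source cluster has many masters, typing the rdb.input value in the configuration step by hand is tedious. The following sketch builds the semicolon-separated list from the files on disk. It assumes the exported files share a common prefix followed by a dot and a suffix, as in the {local_dump.N} placeholders above; the function name rdb_input_list is hypothetical.

```shell
# Print the files matching <prefix>.* as one semicolon-separated line,
# the form used for the rdb.input value.
rdb_input_list() {
    prefix=$1
    list=
    for f in "$prefix".*; do
        [ -e "$f" ] || continue        # the glob matched nothing
        list="${list:+$list;}$f"       # add ";" only between entries
    done
    printf '%s\n' "$list"
}
```

For example, rdb_input_list ./local_dump prints a line that can be pasted after "rdb.input = " in redis-shake.conf. Check the list against the exported files before running the import.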

Verifying the Migration

- After the import is complete, connect to the DCS Redis Cluster instance by referring to Connecting to Redis on redis-cli.

- Run the info command to check whether the data has been successfully imported as required.

If the data has not been fully imported, run the flushall or flushdb command to clear the cached data in the target instance, and migrate data again.

- After the verification is complete, clear the redis-shake configuration promptly.
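One way to check the import is to compare key counts between the source and the target. The sketch below sums the keys= values from info keyspace output. It is an illustration under assumptions: the function name count_keys is hypothetical, and because each master in a Redis Cluster reports only its own keys, the output of every master has to be fed in. Counts can also differ slightly if keys expire between the export and the check.

```shell
# Read `info keyspace` output (from one or more nodes) on stdin and
# print the total number of keys across all dbN lines.
count_keys() {
    awk -F'[=,]' '
        # Lines look like "db0:keys=3,expires=0,avg_ttl=0";
        # with "=" and "," as separators, field 2 is the key count.
        /^db[0-9]+:keys=/ { total += $2 }
        END { print total + 0 }        # prints 0 when no dbN line was seen
    '
}
```

For example, run redis-cli -h {master_ip} -p {master_port} -a {redis_password} info keyspace against each master of one cluster, concatenate the outputs, and pipe them into count_keys. Repeat for the other cluster and compare the two totals.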
