Kafka Application Development

Kafka is a distributed message publish-subscribe system. With features similar to JMS, Kafka processes active streaming data.

Kafka applies to many scenarios, such as message queuing, behavior tracing, O&M data monitoring, log collection, stream processing, event tracing, and log persistence.

Kafka has the following features:

- High throughput

- Message persistence to disks

- Scalable distributed system

- High fault tolerance

- Support for online and offline scenarios

MRS provides sample application development projects based on Kafka. This practice shows how to obtain and import a sample project after creating an MRS cluster, and then build and commission it locally. The sample project implements processing of streaming data.

The guidelines for the sample project in this practice are as follows:

- Create two topics on the Kafka client to serve as the input and output topics.

- Develop a Kafka Streams application that reads messages from the input topic, counts the words in each message, and writes the results to the output topic as key-value pairs. You can then view the results by consuming data from the output topic.

Creating an MRS Cluster

- Create and purchase an MRS cluster that contains Kafka. For details, see Buying a Custom Cluster.

In this practice, an MRS 3.1.0 cluster, with Hadoop and Kafka installed and with Kerberos authentication disabled, is used as an example.

- After the cluster is purchased, install the client on any node in the cluster. For details, see Installing and Using the Cluster Client.

For example, install the client in the /opt/client directory on the active management node.

- After the client is installed, create the lib directory on the client to store related JAR packages.

Copy the Kafka JAR packages in the directory decompressed during client installation to lib.

For example, if the download path of the client software package is /tmp/FusionInsight-Client on the active management node, run the following commands:

mkdir /opt/client/lib

cd /tmp/FusionInsight-Client/FusionInsight_Cluster_1_Services_ClientConfig

scp Kafka/install_files/kafka/libs/* /opt/client/lib

Developing the Application

- Obtain the sample project from Huawei Mirrors.

Download the Maven project source code and configuration files of the sample project, and configure related development tools on your local PC. For details, see Obtaining Sample Projects from Huawei Mirrors.

Select a branch based on the cluster version and download the required MRS sample project.

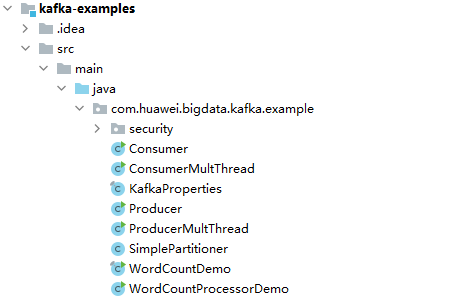

For example, the sample project suitable for this practice is WordCountDemo, which can be obtained at https://github.com/huaweicloud/huaweicloud-mrs-example/tree/mrs-3.1.0/src/kafka-examples.

- Use IDEA to import the sample project and wait for the Maven project to download the dependency packages.

After you configure Maven and SDK parameters on the local PC, the sample project automatically loads related dependency packages. For details, see Configuring and Importing Sample Projects.

The WordCountDemo sample project calls the Kafka Streams API to read records from the input topic, split each record into words, group the records by word, and count the occurrences of each word. The code snippet is as follows:

    ...
    static Properties getStreamsConfig() {
        final Properties props = new Properties();
        KafkaProperties kafkaProc = KafkaProperties.getInstance();
        // Set broker addresses based on site requirements.
        props.put(BOOTSTRAP_SERVERS, kafkaProc.getValues(BOOTSTRAP_SERVERS, "node-group-1kLFk.mrs-rbmq.com:9092"));
        props.put(SASL_KERBEROS_SERVICE_NAME, "kafka");
        props.put(KERBEROS_DOMAIN_NAME, kafkaProc.getValues(KERBEROS_DOMAIN_NAME, "hadoop.hadoop.com"));
        props.put(APPLICATION_ID, kafkaProc.getValues(APPLICATION_ID, "streams-wordcount"));
        // Set the protocol type, which can be SASL_PLAINTEXT or PLAINTEXT.
        props.put(SECURITY_PROTOCOL, kafkaProc.getValues(SECURITY_PROTOCOL, "PLAINTEXT"));
        // Disable record caching so that every count update is forwarded downstream.
        props.put(CACHE_MAX_BYTES_BUFFERING, 0);
        props.put(DEFAULT_KEY_SERDE, Serdes.String().getClass().getName());
        props.put(DEFAULT_VALUE_SERDE, Serdes.String().getClass().getName());
        props.put(ConsumerConfig.AUTO_OFFSET_RESET_CONFIG, "earliest");
        return props;
    }

    static void createWordCountStream(final StreamsBuilder builder) {
        // Receive input records from the input topic.
        final KStream<String, String> source = builder.stream(INPUT_TOPIC_NAME);
        // Split each value into words, group the records by word, and count each word.
        final KTable<String, Long> counts = source
            .flatMapValues(value -> Arrays.asList(value.toLowerCase(Locale.getDefault()).split(REGEX_STRING)))
            .groupBy((key, value) -> value)
            .count();
        // Write the resulting key-value pairs to the output topic.
        counts.toStream().to(OUTPUT_TOPIC_NAME, Produced.with(Serdes.String(), Serdes.Long()));
    }
    ...

- Set BOOTSTRAP_SERVERS to the host name and port number of the Kafka broker node based on site requirements. You can choose Cluster > Services > Kafka > Instance on FusionInsight Manager to view the broker instance information.

- Set SECURITY_PROTOCOL to the protocol for connecting to Kafka. In this example, set this parameter to PLAINTEXT.

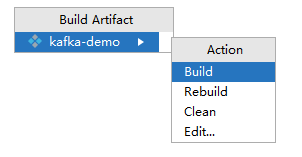
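To make the parameter settings more concrete, the following is a minimal plain-Java sketch of how such a configuration could be assembled for a cluster with Kerberos disabled. It is not the sample project's code. The class name StreamsConfigSketch, the buildConfig method, and the placeholder address broker-host:9092 are hypothetical, and the sketch assumes that the sample's constants (BOOTSTRAP_SERVERS, SECURITY_PROTOCOL, and so on) map to the standard Kafka configuration keys shown here.

```java
import java.util.Properties;

class StreamsConfigSketch {
    // Builds a Kafka Streams style configuration, taking site-specific values
    // from "overrides" and falling back to defaults when a key is absent.
    static Properties buildConfig(Properties overrides) {
        Properties props = new Properties();
        // Hypothetical placeholder; replace with the host name and port of a broker instance.
        props.setProperty("bootstrap.servers",
                overrides.getProperty("bootstrap.servers", "broker-host:9092"));
        props.setProperty("application.id",
                overrides.getProperty("application.id", "streams-wordcount"));
        // PLAINTEXT matches a cluster with Kerberos authentication disabled.
        props.setProperty("security.protocol",
                overrides.getProperty("security.protocol", "PLAINTEXT"));
        // 0 disables record caching, so every count update is emitted.
        props.setProperty("cache.max.bytes.buffering", "0");
        props.setProperty("default.key.serde",
                "org.apache.kafka.common.serialization.Serdes$StringSerde");
        props.setProperty("default.value.serde",
                "org.apache.kafka.common.serialization.Serdes$StringSerde");
        props.setProperty("auto.offset.reset", "earliest");
        return props;
    }

    public static void main(String[] args) {
        Properties site = new Properties();
        site.setProperty("bootstrap.servers", "192.168.0.13:9092");
        buildConfig(site).forEach((k, v) -> System.out.println(k + "=" + v));
    }
}
```

Keeping site-specific values in a separate Properties object means the broker address can be changed without touching the defaults.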

- After confirming that the parameters in WordCountDemo.java are correct, build the project and package it into a JAR file.

For details about how to build a JAR file, see Commissioning an Application in Linux.

For example, the JAR file is kafka-demo.jar.

Uploading the JAR Package and Source Data

- Upload the JAR package to a directory, for example, /opt/client/lib, on the client node.

If you cannot directly access the client node to upload files through the local network, upload the JAR package or source data to OBS, import it to HDFS on the Files tab of the MRS console, and run the hdfs dfs -get command on the HDFS client to download it to the client node.

Running a Job and Viewing the Result

- Log in as user root to the node where the cluster client is installed, and run the following commands to load the environment variables:

cd /opt/client

source bigdata_env

- Create an input topic and an output topic. Ensure that the topic names are the same as those specified in the sample code. Set the cleanup policy of the output topic to compact.

kafka-topics.sh --create --zookeeper IP address of the quorumpeer instance:ZooKeeper client connection port/kafka --replication-factor 1 --partitions 1 --topic Topic name

To query the IP address of the quorumpeer instance, log in to FusionInsight Manager of the cluster, choose Cluster > Services > ZooKeeper, and click the Instance tab. Use commas (,) to separate multiple IP addresses. You can obtain the ZooKeeper client connection port by querying the ZooKeeper configuration parameter clientPort. The default value is 2181.

For example, run the following commands:

kafka-topics.sh --create --zookeeper 192.168.0.17:2181/kafka --replication-factor 1 --partitions 1 --topic streams-wordcount-input

kafka-topics.sh --create --zookeeper 192.168.0.17:2181/kafka --replication-factor 1 --partitions 1 --topic streams-wordcount-output --config cleanup.policy=compact
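The compact cleanup policy suits the output topic because each record is an updated count for a word, and only the latest count per word needs to be retained. The following plain-Java sketch illustrates the idea of compaction on an in-memory list of records. It is a conceptual illustration only: the class and method names are hypothetical, and a real broker compacts log segments asynchronously in the background, so consumers may still see older records for a key.

```java
import java.util.LinkedHashMap;
import java.util.Map;

class LogCompactionSketch {
    // Keeps only the most recent value for each key, ordered by the
    // position of that most recent record in the original log.
    static Map<String, Long> compact(String[] keys, long[] values) {
        Map<String, Long> latest = new LinkedHashMap<>();
        for (int i = 0; i < keys.length; i++) {
            // Remove first so the re-inserted key moves to the end of the map.
            latest.remove(keys[i]);
            latest.put(keys[i], values[i]);
        }
        return latest;
    }

    public static void main(String[] args) {
        String[] keys = {"test", "kafka", "test", "test"};
        long[] values = {1L, 1L, 2L, 3L};
        // Prints {kafka=1, test=3}
        System.out.println(compact(keys, values));
    }
}
```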

- After the topics are created, run the following command to run the application:

java -cp .:/opt/client/lib/* com.huawei.bigdata.kafka.example.WordCountDemo

- Open a new client window and run the following commands to use kafka-console-producer.sh to write messages to the input topic:

cd /opt/client

source bigdata_env

kafka-console-producer.sh --broker-list IP address of the broker instance:Kafka connection port (for example, 192.168.0.13:9092) --topic streams-wordcount-input --producer.config /opt/client/Kafka/kafka/config/producer.properties

- Open a new client window and run the following commands to use kafka-console-consumer.sh to consume data from the output topic and view the result:

cd /opt/client

source bigdata_env

kafka-console-consumer.sh --topic streams-wordcount-output --bootstrap-server IP address of the broker instance:Kafka connection port --consumer.config /opt/client/Kafka/kafka/config/consumer.properties --from-beginning --property print.key=true --property print.value=true --property key.deserializer=org.apache.kafka.common.serialization.StringDeserializer --property value.deserializer=org.apache.kafka.common.serialization.LongDeserializer --formatter kafka.tools.DefaultMessageFormatter
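The consumer command specifies LongDeserializer for values because the sample writes counts with Serdes.Long(), which encodes each count as 8 bytes in big-endian order rather than as text. The following plain-Java sketch reproduces that encoding with ByteBuffer to show why a string deserializer would not display the counts correctly. The class and method names are hypothetical and the code does not use the Kafka libraries.

```java
import java.nio.ByteBuffer;
import java.nio.charset.StandardCharsets;

class LongValueSketch {
    // Encodes a long as 8 big-endian bytes (ByteBuffer's default byte order).
    static byte[] serialize(long value) {
        return ByteBuffer.allocate(Long.BYTES).putLong(value).array();
    }

    // Decodes 8 big-endian bytes back into a long.
    static long deserialize(byte[] bytes) {
        return ByteBuffer.wrap(bytes).getLong();
    }

    public static void main(String[] args) {
        byte[] raw = serialize(3L);
        System.out.println("bytes: " + raw.length);
        System.out.println("as long: " + deserialize(raw));
        // Interpreting the same bytes as text yields control characters, not "3".
        String asText = new String(raw, StandardCharsets.UTF_8);
        System.out.println("equals \"3\" as text: " + asText.equals("3"));
    }
}
```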

Write messages to the input topic in the producer window:

    >This is Kafka Streams test
    >test starting
    >now Kafka Streams is running
    >test end

The output is as follows:

    this 1
    is 1
    kafka 1
    streams 1
    test 1
    test 2
    starting 1
    now 1
    kafka 2
    streams 2
    is 2
    running 1
    test 3
    end 1
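A word such as test appears several times in the output (test 1, test 2, test 3) because the sample sets CACHE_MAX_BYTES_BUFFERING to 0, so every count update is forwarded to the output topic instead of being batched. The following plain-Java sketch mimics that behavior without a Kafka cluster. It is not the sample project's code: the class name is hypothetical, the whitespace pattern used for REGEX_STRING is an assumption, and Locale.ROOT replaces Locale.getDefault() to keep the result independent of the machine's locale.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Locale;
import java.util.Map;

class WordCountChangelogSketch {
    // Assumption: the sample's REGEX_STRING splits on whitespace.
    static final String REGEX_STRING = "\\s+";

    // Returns one "word count" entry per count update, in arrival order.
    static List<String> process(List<String> messages) {
        Map<String, Long> counts = new HashMap<>();
        List<String> changelog = new ArrayList<>();
        for (String message : messages) {
            for (String word : message.toLowerCase(Locale.ROOT).split(REGEX_STRING)) {
                // Increment the running count and emit the update immediately.
                long updated = counts.merge(word, 1L, Long::sum);
                changelog.add(word + " " + updated);
            }
        }
        return changelog;
    }

    public static void main(String[] args) {
        List<String> messages = Arrays.asList(
                "This is Kafka Streams test",
                "test starting",
                "now Kafka Streams is running",
                "test end");
        process(messages).forEach(System.out::println);
    }
}
```

Run against the four messages above, the sketch emits the same sequence of word and count pairs as the consumer output.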
