Experience in Rewriting SQL Statements

- Replace UNION with UNION ALL.

UNION removes duplicate rows when merging two result sets, whereas UNION ALL merges them without deduplication and so avoids the extra work of detecting duplicates. Therefore, replace UNION with UNION ALL if the service logic guarantees that the two result sets contain no duplicate rows.
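The following is a minimal sketch of this rewrite. The tables orders_2023 and orders_2024 are hypothetical, and the example assumes that order_id is unique within each table and never repeated across them, so no duplicate rows can appear.

```sql
-- Hypothetical tables: each holds one year of orders, and order_id is
-- assumed unique across both, so the merged result has no duplicates.

-- Before: UNION deduplicates the merged result even though nothing can repeat.
SELECT order_id, amount FROM orders_2023
UNION
SELECT order_id, amount FROM orders_2024;

-- After: UNION ALL returns the same rows without the deduplication step.
SELECT order_id, amount FROM orders_2023
UNION ALL
SELECT order_id, amount FROM orders_2024;
```

If either input could contain repeated rows, the two statements return different results and the rewrite does not apply.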

- Add an IS NOT NULL filter on the join columns.

If the JOIN columns contain many NULL values, you can add an IS NOT NULL filter condition to remove those rows in advance and improve JOIN efficiency.
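A minimal sketch of such a filter is shown below. The tables t1 and t2 and the columns c1 and d2 are illustrative. The example uses an inner join, where a NULL join key can never match, so the added filter does not change the result.

```sql
-- Illustrative tables t1(c1, ...) and t2(d2, ...), joined on c1 = d2.
-- In an inner join, rows whose join key is NULL never match, so they
-- can be filtered out before the join without changing the result.
SELECT t1.*, t2.*
FROM t1
JOIN t2 ON t1.c1 = t2.d2
WHERE t1.c1 IS NOT NULL
  AND t2.d2 IS NOT NULL;
```

For an outer join, filtering NULL values on the preserved side would drop rows that the join is meant to keep, so check the join type before applying this rewrite.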

- Convert NOT IN to NOT EXISTS.

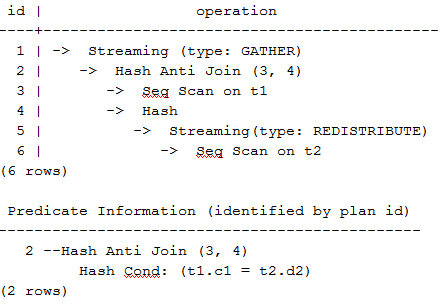

NOT IN must be implemented with a nestloop anti join, whereas NOT EXISTS can be implemented with a hash anti join. If the JOIN columns contain no NULL values, NOT IN is equivalent to NOT EXISTS. Therefore, if you are sure that no NULL value exists, you can convert NOT IN to NOT EXISTS so that a hash join is generated and query performance improves.

As shown in the following statement, the t2.d2 column does not contain null values (it is set to NOT NULL) and NOT EXISTS is used for the query.

SELECT * FROM t1 WHERE NOT EXISTS (SELECT * FROM t2 WHERE t1.c1=t2.d2);

The generated execution plan is as follows:

Figure 1 NOT EXISTS execution plan
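For comparison, the sketch below places a NOT IN form next to the NOT EXISTS form. The table definitions are assumptions made for illustration: only the NOT NULL constraint on t2.d2 comes from the text above, and the other columns are hypothetical.

```sql
-- Assumed definitions for illustration; only "t2.d2 is NOT NULL" is given.
CREATE TABLE t1 (c1 int, c2 int);
CREATE TABLE t2 (d1 int, d2 int NOT NULL);

-- Before: NOT IN.
SELECT * FROM t1 WHERE t1.c1 NOT IN (SELECT t2.d2 FROM t2);

-- After: NOT EXISTS.
-- The two forms return the same rows only when neither join column holds
-- NULL. A row with t1.c1 IS NULL is rejected by NOT IN (when t2 is not
-- empty) but accepted by NOT EXISTS.
SELECT * FROM t1 WHERE NOT EXISTS (SELECT * FROM t2 WHERE t1.c1 = t2.d2);
```

Running EXPLAIN on both statements is a simple way to confirm which anti join the optimizer chooses in a given environment.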

- Use hashagg.

If a GROUP BY statement generates a plan with groupAgg and SORT operations that performs poorly, you can set work_mem to a larger value so that a hashagg plan is generated. A hashagg plan does not require sorting, which improves performance.
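A minimal sketch of this adjustment at session level follows. The value 256MB and the query on t1 are illustrative only. Whether the optimizer actually switches to hashagg depends on its estimates, so check the plan with EXPLAIN.

```sql
-- Illustrative value; choose one that fits the memory available per query.
SET work_mem = '256MB';

-- Check whether the aggregation is now planned as a hash aggregate
-- instead of a sort followed by a group aggregate.
EXPLAIN SELECT c1, count(*) FROM t1 GROUP BY c1;

-- Restore the default for the rest of the session.
RESET work_mem;
```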

- Replace functions with CASE statements.

GaussDB performance deteriorates greatly if a large number of functions are called. In this case, you can rewrite the pushdown functions as CASE statements.
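The sketch below illustrates the idea. The function grade_label, the table exam_results, and the score thresholds are all hypothetical. The example assumes the function contains only simple branching logic that can be expressed inline.

```sql
-- Before: grade_label is a hypothetical user-defined function
-- that is called once for every row.
SELECT id, grade_label(score) AS grade
FROM exam_results;

-- After: the same branching logic written inline as a CASE expression.
-- A NULL score falls through to the ELSE branch here, so confirm that
-- this matches what the original function returns.
SELECT id,
       CASE
           WHEN score >= 90 THEN 'A'
           WHEN score >= 60 THEN 'B'
           ELSE 'C'
       END AS grade
FROM exam_results;
```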

- Do not apply functions or expressions to indexed columns.

Applying a function or expression to an indexed column in a query condition prevents the index from being used, and the query scans the full table instead.
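As a minimal sketch, assume an ordinary index on the illustrative column t1.c1. The index name idx_t1_c1 is hypothetical.

```sql
-- Hypothetical index on an illustrative column.
CREATE INDEX idx_t1_c1 ON t1 (c1);

-- Avoid: the expression wraps the indexed column, so the condition
-- cannot be matched against the index on c1.
SELECT * FROM t1 WHERE c1 + 1 = 10;

-- Prefer: move the arithmetic to the constant side and leave the
-- indexed column bare.
SELECT * FROM t1 WHERE c1 = 9;
```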

- Avoid the != or <> operator, NULL, OR, and implicit parameter conversion in WHERE clauses.

- If the >= and <= conditions on a column use the same value in a WHERE condition, change them to a single = condition, because range equivalence class derivation is not supported currently.

For example, change SELECT * FROM t1 WHERE c1 >= 1 AND c1 <= 1 to SELECT * FROM t1 WHERE c1 = 1.

The optimizer estimates selectivity less accurately for range queries than for equality queries. Therefore, change range queries to equality queries wherever possible.

- Split complex SQL statements.

You can split an SQL statement into several ones and save the execution result to a temporary table if the SQL statement is too complex to be tuned using the solutions above, including but not limited to the following scenarios:

- The same subquery is involved in multiple SQL statements of a job and the subquery contains large amounts of data.

- An inaccurate plan cost estimate causes too small a hash bucket for a subquery. For example, the actual number of rows is 10 million, but the hash bucket is sized for only 1000 rows.

- Functions such as substr and to_number cause inaccurate estimates for subqueries containing large amounts of data.

- BROADCAST is performed for subqueries on large tables in a multi-DN environment.
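The splitting approach can be sketched as follows. The tables sales and customers, their columns, and the temporary table name tmp_customer_total are hypothetical. The ANALYZE step is an assumption added so that the optimizer sees the real row count of the intermediate result.

```sql
-- Step 1: materialize the expensive subquery once in a temporary table.
CREATE TEMPORARY TABLE tmp_customer_total AS
SELECT customer_id, sum(amount) AS total
FROM sales
GROUP BY customer_id;

-- Step 2: collect statistics so that later plans are based on the
-- actual size of the intermediate result rather than an estimate.
ANALYZE tmp_customer_total;

-- Step 3: use the temporary table in place of the subquery.
SELECT c.name, s.total
FROM customers c
JOIN tmp_customer_total s ON s.customer_id = c.customer_id;
```

Other statements in the same session that need the same subquery can read tmp_customer_total instead of recomputing it.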
