CCE AI Suite (NVIDIA GPU)

Introduction

The CCE AI Suite (NVIDIA GPU) add-on helps you use and manage GPUs in your clusters. It supports access to GPUs in containers and helps you efficiently run and maintain GPU-based compute-intensive workloads in cloud native environments. With this add-on, both CCE standard and Turbo clusters can handle GPU scheduling, install drivers automatically, manage runtimes, and monitor performance. This means you get full support for GPU workloads throughout their entire lifecycle. To run GPU nodes in a cluster, you must install this add-on.

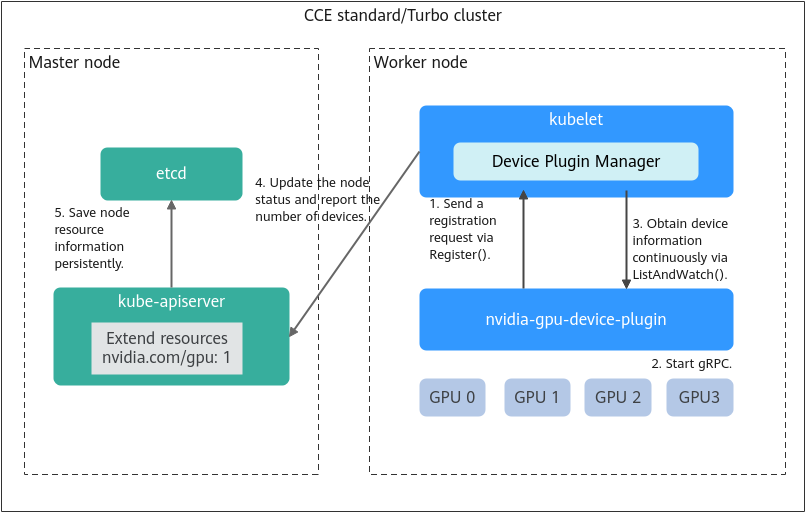

How nvidia-gpu-device-plugin Works

nvidia-gpu-device-plugin is one of the core components of CCE AI Suite (NVIDIA GPU). As a bridge between the container platform and GPU hardware, nvidia-gpu-device-plugin abstracts physical GPUs into resources that can be identified and scheduled by the container platform. This addresses the GPU allocation and usage problems in containerized environments.

- Sending a registration request: nvidia-gpu-device-plugin sends a registration request to kubelet as a client along with:

- Device name (nvidia.com/gpu): identifies the type of hardware resource managed by the add-on for kubelet to identify and schedule.

- Unix socket: enables local gRPC communication between the component and kubelet to ensure that kubelet can properly call the correct services.

- API version: specifies the version of the Device Plugin API protocol. It ensures that the communication protocols of both parties are compatible.

- Starting a service: After registration, nvidia-gpu-device-plugin starts a gRPC server to provide services for external systems. The gRPC server handles kubelet requests, including device list queries, health status reporting, and resource allocation. The listening address (Unix socket path) of the gRPC server and supported Device Plugin API version have been reported to kubelet during registration. This ensures that kubelet can properly establish connections and call the correct APIs based on the registration information.

- Health monitoring: After the gRPC server is started, kubelet establishes a persistent connection with nvidia-gpu-device-plugin through the ListAndWatch API to continuously listen to the device IDs and their health. If a device becomes unhealthy, nvidia-gpu-device-plugin reports the error to kubelet through the connection.

- Information reporting: kubelet integrates the device information into the node statuses and reports resource details such as the number of devices to Kubernetes API server. The scheduler (kube-scheduler or Volcano) uses these details to make scheduling decisions.

- Persistent storage: CCE stores the GPU device information (such as quantity and status) reported by nodes in etcd for cluster-level resource persistence. This ensures that GPU data is retained after a cluster component fails or restarts, and gives components such as the scheduler and controller a consistent data source for reliable resource scheduling and management.
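The registration, ListAndWatch, and reporting steps above can be sketched as a toy model. This is a minimal illustration, not the add-on's implementation: no real gRPC is involved, the class names `ToyDevicePlugin` and `ToyKubelet` and the socket name `nvidia-gpu.sock` are hypothetical, and `v1beta1` is assumed as the Device Plugin API version.

```python
from dataclasses import dataclass


@dataclass
class Device:
    device_id: str
    healthy: bool = True


@dataclass
class RegisterRequest:
    # The three items sent to kubelet during registration.
    resource_name: str  # device name, e.g. "nvidia.com/gpu"
    endpoint: str       # Unix socket of the plugin's gRPC server (hypothetical name)
    version: str        # Device Plugin API version


class ToyDevicePlugin:
    """Stands in for nvidia-gpu-device-plugin in this sketch."""

    def __init__(self, devices):
        self.devices = {d.device_id: d for d in devices}

    def registration(self):
        return RegisterRequest("nvidia.com/gpu", "nvidia-gpu.sock", "v1beta1")

    def list_and_watch(self):
        # Snapshot of device IDs and health, as kubelet would receive it.
        return [Device(d.device_id, d.healthy) for d in self.devices.values()]

    def mark_unhealthy(self, device_id):
        self.devices[device_id].healthy = False


class ToyKubelet:
    SUPPORTED_VERSIONS = {"v1beta1"}

    def __init__(self):
        self.plugins = {}

    def register(self, request, plugin):
        # Registration fails if the API versions are incompatible.
        if request.version not in self.SUPPORTED_VERSIONS:
            raise ValueError(f"unsupported API version: {request.version}")
        self.plugins[request.resource_name] = plugin

    def node_allocatable(self):
        # Only healthy devices count toward what the scheduler can use.
        return {
            name: sum(1 for d in plugin.list_and_watch() if d.healthy)
            for name, plugin in self.plugins.items()
        }


plugin = ToyDevicePlugin([Device("GPU-0"), Device("GPU-1")])
kubelet = ToyKubelet()
kubelet.register(plugin.registration(), plugin)
before = kubelet.node_allocatable()
plugin.mark_unhealthy("GPU-1")
after = kubelet.node_allocatable()
print(before, after)
```

In the sketch, marking a device unhealthy reduces the count that kubelet would report for the node, which is the value the scheduler relies on.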

GPU Device Plugin DRA

Starting with v2.15.0, the GPU Device Plugin supports Dynamic Resource Allocation (DRA). DRA is implemented through the open-source NVIDIA DRA driver (v25.12.0), enabling declarative and flexible GPU resource allocation.

- Core Features and Updates

- Architecture: With DRA enabled, GPU resource scheduling and allocation are handled entirely by gpu-kubelet-plugin. The native GPU Device Plugin retains only automatic driver installation. All other resource management functions are disabled.

- Feature scope: This release focuses on basic GPU compute resource management. GPU sharing and virtualization are not supported. Extended components for compute domains and network management from the community version have been removed.

- Enabling DRA: Set dra_mode to true in the YAML configuration file.

- Prerequisites

- Cluster version: Kubernetes v1.34 or later

- Driver version: 580 or later in DRA mode

- Scheduler: Volcano v1.22.1 or later (if applicable)

- Container runtime: containerd or any CRI-compatible runtime. Docker is not supported.

- API migration: DRA is mutually exclusive with the native Device Plugin scheduling mechanism. After DRA is enabled, the traditional resources.requests/limits fields no longer support GPU resources in pods. Use the DRA-compliant ResourceClaim API to request GPU resources.
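To illustrate the API migration item, the following sketch builds a ResourceClaimTemplate and a pod that references it, then prints them as JSON (which kubectl also accepts). It assumes the upstream `resource.k8s.io/v1` API available in Kubernetes v1.34 and the device class name `gpu.nvidia.com` used by the open-source NVIDIA DRA driver; the class name exposed in your cluster, the object names, and the image are assumptions to verify before use.

```python
import json

# Assumption: device class name published by the NVIDIA DRA driver.
DEVICE_CLASS = "gpu.nvidia.com"


def gpu_claim_template(name, count=1):
    """Build a ResourceClaimTemplate that asks for `count` GPUs."""
    return {
        "apiVersion": "resource.k8s.io/v1",
        "kind": "ResourceClaimTemplate",
        "metadata": {"name": name},
        "spec": {"spec": {"devices": {"requests": [{
            "name": "gpu",
            "exactly": {
                "deviceClassName": DEVICE_CLASS,
                "allocationMode": "ExactCount",
                "count": count,
            },
        }]}}},
    }


def gpu_pod(name, image, template_name):
    """Build a pod that gets its GPU through a claim, not requests/limits."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "resourceClaims": [
                {"name": "gpu", "resourceClaimTemplateName": template_name}
            ],
            "containers": [{
                "name": "main",
                "image": image,
                # The container references the pod-level claim by name.
                "resources": {"claims": [{"name": "gpu"}]},
            }],
        },
    }


template = gpu_claim_template("single-gpu")
# Hypothetical image name, for illustration only.
pod = gpu_pod("cuda-demo", "example.com/cuda-app:latest", "single-gpu")
print(json.dumps([template, pod], indent=2))
```

Note that the pod carries no `nvidia.com/gpu` entry under requests or limits; the GPU is requested only through the claim.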

- Migration from Device Plugin to DRA

After a cluster switches from Device Plugin to DRA, previously provisioned devices are incorrectly identified as idle. This may lead to repeated device provisioning, runtime conflicts, or service interruptions. Before performing the switchover, fully evaluate the risks and strictly follow the recommendations in this section.

- Core risk: In Kubernetes clusters, the traditional Device Plugin and DRA use two completely independent and mutually unaware device resource accounting mechanisms. After a cluster switches from Device Plugin to DRA, DRA does not inherit the historical allocation records from Device Plugin. As a result, physical GPUs already occupied by running pods are incorrectly marked as available or idle in DRA mode. These GPUs will be rescheduled, causing repeated device allocation. This can lead to runtime conflicts, CUDA memory contention, or service interruption.

- Typical fault symptom: If old services are not completely cleared before the switchover, a newly scheduled pod can be assigned a GPU whose memory is still held by a running old process. The new service then triggers a CUDA out-of-memory (OOM) error during initialization or GPU memory pre-allocation, and the pod repeatedly fails to start or remains in the CrashLoopBackOff state.
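The core risk can be reproduced with two independent ledgers. This is a toy model under stated assumptions: the `Allocator` class is hypothetical and only mimics the fact that each mechanism knows about its own allocations and nothing else.

```python
class Allocator:
    """A ledger that only knows about allocations it made itself."""

    def __init__(self, name, gpus):
        self.name = name
        self.gpus = list(gpus)
        self.allocations = {}  # GPU ID -> pod name

    def free(self):
        return [g for g in self.gpus if g not in self.allocations]

    def allocate(self, pod):
        free = self.free()
        if not free:
            raise RuntimeError(f"{self.name}: no free GPU for {pod}")
        self.allocations[free[0]] = pod
        return free[0]


gpus = ["GPU-0"]

# Before the switchover: Device Plugin hands the only GPU to a running pod.
device_plugin = Allocator("device-plugin", gpus)
old_gpu = device_plugin.allocate("old-pod")

# After the switchover: the DRA ledger starts empty and sees GPU-0 as idle.
dra = Allocator("dra", gpus)
new_gpu = dra.allocate("new-pod")

# Merge both ledgers to reveal which physical GPUs have more than one user.
users = {}
for ledger in (device_plugin, dra):
    for gpu, pod in ledger.allocations.items():
        users.setdefault(gpu, []).append(pod)
conflicts = {gpu: pods for gpu, pods in users.items() if len(pods) > 1}
print(conflicts)
```

Both pods end up on the same physical GPU even though neither ledger reports an over-allocation, which is why the old workloads must be cleared first.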

- Migration Recommendations and Best Practices

- Clear allocations before transition: Before the switchover, ensure all workloads that depend on the target devices have been gracefully terminated or migrated.

kubectl get pods -A -o wide | grep <device_label>

- Isolate nodes by scheduling policy: Add labels (such as device-mgmt=legacy or device-mgmt=dra) to nodes, and use nodeSelector or affinity rules to prevent co-location of old and new workloads.

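As a complement to the grep-based check, the sketch below lists pods that still request GPUs through the Device Plugin resource name. It assumes the input is the parsed JSON from `kubectl get pods -A -o json`; the function name and the sample data are hypothetical.

```python
def pods_requesting_gpu(pod_list, node_name=None, resource="nvidia.com/gpu"):
    """Return (namespace, name) of active pods that request `resource`."""
    found = []
    for pod in pod_list.get("items", []):
        spec = pod.get("spec", {})
        if node_name is not None and spec.get("nodeName") != node_name:
            continue
        # Finished pods no longer hold a device.
        if pod.get("status", {}).get("phase") in ("Succeeded", "Failed"):
            continue
        containers = spec.get("containers", []) + spec.get("initContainers", [])
        for container in containers:
            resources = container.get("resources", {})
            amount = (resources.get("limits", {}).get(resource)
                      or resources.get("requests", {}).get(resource))
            if amount and str(amount) != "0":
                meta = pod["metadata"]
                found.append((meta.get("namespace", "default"), meta["name"]))
                break
    return found


# Hand-written sample in the shape of `kubectl get pods -A -o json` output.
sample = {"items": [
    {"metadata": {"namespace": "ml", "name": "train-0"},
     "spec": {"nodeName": "gpu-node-1",
              "containers": [{"name": "c",
                              "resources": {"limits": {"nvidia.com/gpu": "1"}}}]},
     "status": {"phase": "Running"}},
    {"metadata": {"namespace": "web", "name": "nginx"},
     "spec": {"nodeName": "gpu-node-1", "containers": [{"name": "c"}]},
     "status": {"phase": "Running"}},
    {"metadata": {"namespace": "ml", "name": "done"},
     "spec": {"nodeName": "gpu-node-1",
              "containers": [{"name": "c",
                              "resources": {"limits": {"nvidia.com/gpu": "1"}}}]},
     "status": {"phase": "Succeeded"}},
]}
remaining = pods_requesting_gpu(sample, node_name="gpu-node-1")
print(remaining)
```

An empty result for a node suggests that no active pod on it still holds a GPU through the traditional mechanism; it does not replace a check for processes that use the GPU outside Kubernetes.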

Notes and Constraints

- The driver to be downloaded must be a .run file.

- Only NVIDIA Tesla drivers are supported, not GRID drivers.

- When installing or reinstalling the add-on, ensure that the driver download address is correct and accessible. CCE does not verify the address validity.

- This add-on only enables you to download the driver and execute the installation script. The add-on status only indicates how the add-on is running, not whether the driver is successfully installed.

- CCE does not guarantee compatibility between the GPU driver version and the CUDA library version of your application. Verify the compatibility yourself.

- If a GPU driver is preinstalled in a custom OS image, CCE cannot ensure that the driver is compatible with other GPU components, such as the monitoring components used in CCE.

- If the GPU driver version you use is not listed in Supported GPU Drivers, the driver may be incompatible with the OS, ECS type, or container runtime. As a result, the driver installation may fail or the CCE AI Suite (NVIDIA GPU) add-on may be abnormal. If you use a custom GPU driver, verify its availability yourself.
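Because CCE does not validate the driver address, a quick local check of the link can catch obvious mistakes before installation. The sketch below is a heuristic under stated assumptions: it expects the file naming used by NVIDIA's public downloads (`NVIDIA-Linux-x86_64-<version>.run`), treats a file name containing "grid" as a GRID driver, and does not test whether the address is reachable. The function name is hypothetical.

```python
import re
from urllib.parse import urlparse

# Assumed file naming, based on NVIDIA's public Tesla driver downloads.
_RUN_FILE = re.compile(r"^NVIDIA-Linux-x86_64-(\d+(?:\.\d+)+)\.run$")


def check_driver_url(url):
    """Return (version or None, list of problems) for a driver download link."""
    parsed = urlparse(url)
    problems = []
    if parsed.scheme not in ("http", "https"):
        problems.append("scheme must be http or https")
    filename = parsed.path.rsplit("/", 1)[-1]
    if not filename.endswith(".run"):
        problems.append("driver package must be a .run file")
    if "grid" in filename.lower():
        problems.append("GRID drivers are not supported")
    match = _RUN_FILE.match(filename)
    version = match.group(1) if match else None
    return version, problems


version, problems = check_driver_url(
    "https://us.download.nvidia.com/tesla/470.103.01/"
    "NVIDIA-Linux-x86_64-470.103.01.run"
)
print(version, problems)
```

A clean result only means the link is well formed. Whether the driver installs successfully still has to be verified on the node.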

Installing the Add-on

- Log in to the CCE console and click the cluster name to access the cluster console.

- In the navigation pane, choose Add-ons. In the right pane, find the CCE AI Suite (NVIDIA GPU) add-on and click Install.

- Determine whether to enable Use DCGM-Exporter to Observe DCGM Metrics. After this function is enabled, DCGM-Exporter is deployed on the GPU node.

If the add-on version is 2.7.40 or later, DCGM-Exporter can be deployed. DCGM-Exporter maintains the community capability and does not support the sharing mode or GPU virtualization.

After DCGM-Exporter is enabled, if you need to report the collected GPU monitoring data to AOM, see Comprehensive Monitoring of DCGM Metrics.

- Configure the add-on parameters.

Table 1 Add-on parameters

Parameter: Default Cluster Driver

Description: All GPU nodes in a cluster use the same driver. You can select a GPU driver version, or specify a custom driver link by entering the download link of the NVIDIA driver.

NOTICE:

- If the download link is an Internet address, for example, https://us.download.nvidia.com/tesla/470.103.01/NVIDIA-Linux-x86_64-470.103.01.run, bind an EIP to each GPU node. For details about how to obtain the driver link, see From the Internet.

- If the download link is an OBS URL, you do not need to bind an EIP to GPU nodes. For details about how to obtain the driver link, see From the OBS Link.

- Ensure that the NVIDIA driver version matches the GPU nodes. For details about the version mapping, see Supported GPU Drivers.

- If the driver version is changed, restart the nodes to apply the change.

- For Linux kernel 5.x, use driver version 470 or later for Huawei Cloud EulerOS 2.0, and driver 515 or later for Ubuntu 22.04.

After the add-on is installed, you can configure GPU virtualization and node pool drivers on the Heterogeneous Resources tab in Settings.

- (Optional) Enable DRA.

On the Install Add-on page, click Installation Using YAML, and change the dra_mode value in the YAML configuration file to true to enable DRA. For details about how to deploy a workload and declare resources, see Installing the NVIDIA DRA Driver.

- Click Install.

If the add-on is uninstalled, GPU pods newly scheduled to the nodes cannot run properly, but GPU pods already running on the nodes will not be affected.
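For the optional DRA step, the prerequisites listed in the GPU Device Plugin DRA section can be checked before dra_mode is set to true. The following is a minimal sketch; the function names are hypothetical and the version strings must be supplied by you, for example from the cluster details page and from nvidia-smi.

```python
import re


def parse_version(text):
    """Turn 'v1.34.2' or '580.126.20' into a tuple of ints for comparison."""
    return tuple(int(part) for part in re.findall(r"\d+", text))


def dra_prerequisite_problems(cluster, driver, runtime, volcano=None):
    """Return a list of unmet DRA prerequisites (empty if all are met)."""
    problems = []
    if parse_version(cluster)[:2] < (1, 34):
        problems.append("cluster must be Kubernetes v1.34 or later")
    if parse_version(driver)[:1] < (580,):
        problems.append("driver must be 580 or later in DRA mode")
    if runtime.lower() == "docker":
        problems.append("Docker is not supported; use containerd")
    # Volcano is only checked when it is used as the scheduler.
    if volcano is not None and parse_version(volcano) < (1, 22, 1):
        problems.append("Volcano must be v1.22.1 or later")
    return problems


ok = dra_prerequisite_problems("v1.34.1", "580.126.20", "containerd", "1.22.1")
bad = dra_prerequisite_problems("v1.33.0", "570.86.15", "docker")
print(ok, bad)
```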

Verifying the Add-on

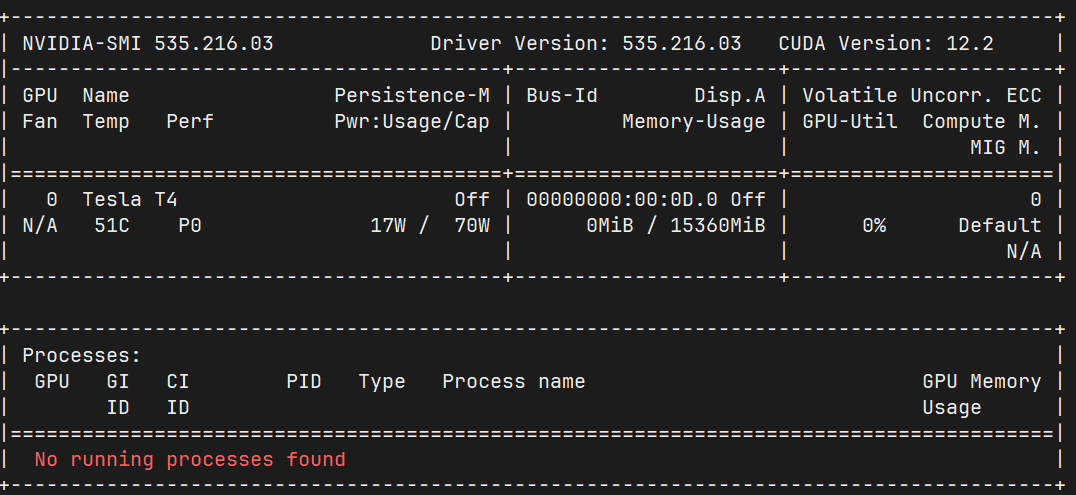

After the add-on is installed, run the nvidia-smi command on the GPU node and in a container that has been allocated GPU resources to verify that the GPU device and driver are available.

- GPU node:

- If the add-on version is earlier than 2.0.0, run the following command:

cd /opt/cloud/cce/nvidia/bin && ./nvidia-smi

- If the add-on version is 2.0.0 or later, run the following command:

cd /usr/local/nvidia/bin && ./nvidia-smi


- Pod:

- If the cluster version is v1.27 or earlier, run the following command:

cd /usr/local/nvidia/bin && ./nvidia-smi

- If the cluster version is v1.28 or later, run the following command:

cd /usr/bin && ./nvidia-smi


If GPU information is returned, the device is available and the add-on has been installed.
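The version rules above can be captured in a small helper when the check is scripted. This is a sketch; the function names are hypothetical and the paths are the ones listed in this section.

```python
import re


def _version(text):
    """Turn '2.7.40' or 'v1.28' into a tuple of ints."""
    return tuple(int(part) for part in re.findall(r"\d+", text))


def nvidia_smi_dir_on_node(addon_version):
    """Directory that holds nvidia-smi on a GPU node."""
    # "Earlier than 2.0.0" is equivalent to a major version below 2.
    if _version(addon_version)[:1] >= (2,):
        return "/usr/local/nvidia/bin"
    return "/opt/cloud/cce/nvidia/bin"


def nvidia_smi_dir_in_pod(cluster_version):
    """Directory that holds nvidia-smi inside a GPU pod."""
    if _version(cluster_version)[:2] >= (1, 28):
        return "/usr/bin"
    return "/usr/local/nvidia/bin"


node_cmd = f"cd {nvidia_smi_dir_on_node('2.13.3')} && ./nvidia-smi"
pod_cmd = f"cd {nvidia_smi_dir_in_pod('v1.27')} && ./nvidia-smi"
print(node_cmd)
print(pod_cmd)
```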

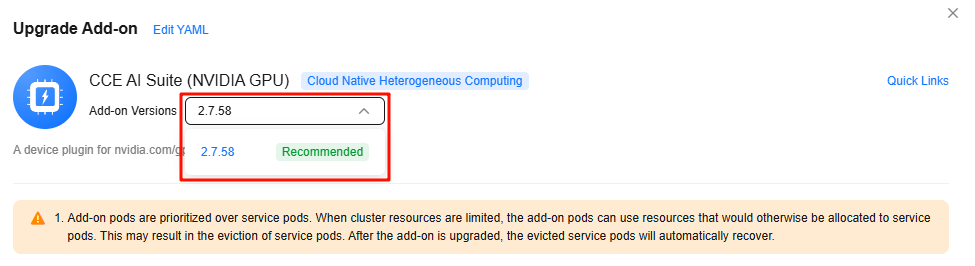

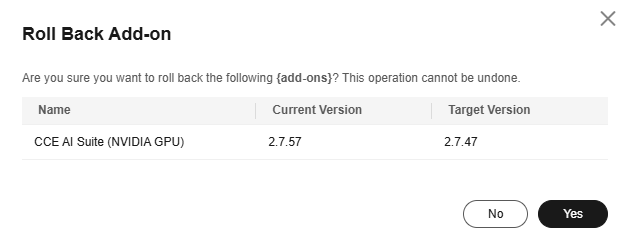

Managing the Add-on

Once the add-on is installed, you can upgrade or roll it back as needed. Before upgrading or rolling back the CCE AI Suite (NVIDIA GPU) add-on, make sure that no GPU virtualization workloads are running on the GPU node. If the GPU node has GPU virtualization workloads, you need to drain the node when you upgrade or roll back the add-on. For details, see How Can I Drain a GPU Node After Upgrading or Rolling Back the CCE AI Suite (NVIDIA GPU) Add-on?

Supported GPU Drivers

- The list of supported GPU drivers applies only to CCE AI Suite (NVIDIA GPU) of 1.2.28 or later.

- To use the latest GPU driver, upgrade your CCE AI Suite (NVIDIA GPU) to the latest version.

- The compatibility of the OSs, GPU drivers, and GPU models listed in Table 3 Supported GPU drivers has been tested and verified. To ensure excellent performance and stability, use these GPU drivers. If the deployment is performed in an unverified environment, perform full tests based on the environment to ensure the compatibility and stability.

- NVIDIA no longer provides updates or security patches for GPU drivers that have reached their end of life (EOL). For details, see Driver Lifecycle. For example, a Production Branch (PB) provides one-year support from the date of release, and a Long-Term Support Branch (LTSB) provides three-year support.

According to this policy, CCE does not provide technical support for GPU drivers that have reached EOL, including driver installation and updates. The following drivers have reached EOL: 510.47.03, 470.141.03, and 470.57.02.

- When installing a GPU driver on Ubuntu and CentOS, pay attention to the OS version. For details, see Table 3. For more information, see NVIDIA Driver Documentation.

| GPU Model | Supported Cluster Type | Specification | Huawei Cloud EulerOS 3.0 | Huawei Cloud EulerOS 2.0 | Ubuntu 22.04 | CentOS Linux release 7.6 | EulerOS release 2.9 | EulerOS release 2.5 | Ubuntu 18.04 (EOM) | EulerOS release 2.3 (EOM) |
|---|---|---|---|---|---|---|---|---|---|---|
| Tesla T4 | CCE Turbo cluster, CCE standard cluster | g6, pi2 | 570.86.15 | 580.126.20, 570.86.15, 535.216.03, 535.161.08, 535.54.03, 510.47.03, 470.57.02 | 580.126.20, 570.86.15, 535.216.03, 535.161.08, 535.54.03 | 535.216.03, 535.161.08, 535.54.03, 510.47.03, 470.141.03, 470.57.02 | 535.54.03, 470.141.03 | 535.54.03, 470.141.03 | 470.141.03 | 470.141.03 |
| Tesla V100 | CCE Turbo cluster, CCE standard cluster | p2s, p2vs, p2v | 570.86.15 | 580.126.20, 570.86.15, 535.216.03, 535.161.08, 535.54.03, 510.47.03, 470.57.02 | 580.126.20, 570.86.15, 535.216.03, 535.161.08, 535.54.03 | 535.216.03, 535.161.08, 535.54.03, 510.47.03, 470.141.03, 470.57.02 | 535.54.03, 470.141.03 | 535.54.03, 470.141.03 | 470.141.03 | 470.141.03 |

| Driver Version | Ubuntu 22.04 | CentOS Linux release 7.6 | Ubuntu 18.04 (EOM) |
|---|---|---|---|
| | Ubuntu 22.04.z LTS (where z ≤ 5) | Not supported | Not supported |
| | Ubuntu 22.04.z LTS (where z ≤ 4) | CentOS 7.y (where y ≤ 9) | Not supported |
| | Ubuntu 22.04.z LTS (where z ≤ 3) | CentOS 7.y (where y ≤ 9) | Not supported |
| | Ubuntu 22.04.z LTS (where z ≤ 2) | CentOS 7.y (where y ≤ 9) | Not supported |
| | Not supported | CentOS 7.y (where y ≤ 9) | Ubuntu 18.04.z LTS (where z ≤ 6) |
| | Not supported | CentOS 7.y (where y ≤ 9) | Ubuntu 18.04.z LTS (where z ≤ 6) |
| | Not supported | CentOS 7.y (where y ≤ 9) | Ubuntu 18.04.z LTS (where z ≤ 5) |
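When a driver is chosen by script, the supported-driver list and the EOL list can be combined so that EOL versions are filtered out. The sketch below copies a subset of the values from the supported GPU drivers table (the entries are the same for Tesla T4 and Tesla V100) and the EOL versions named in this section; the dictionary and function names are hypothetical, and the data must be kept in sync with the table by hand.

```python
# EOL drivers named in this section.
EOL_DRIVERS = {"510.47.03", "470.141.03", "470.57.02"}

# Subset of the supported GPU drivers table, keyed by OS.
SUPPORTED = {
    "Huawei Cloud EulerOS 3.0": ["570.86.15"],
    "Huawei Cloud EulerOS 2.0": [
        "580.126.20", "570.86.15", "535.216.03", "535.161.08",
        "535.54.03", "510.47.03", "470.57.02",
    ],
    "Ubuntu 22.04": [
        "580.126.20", "570.86.15", "535.216.03", "535.161.08", "535.54.03",
    ],
    "CentOS Linux release 7.6": [
        "535.216.03", "535.161.08", "535.54.03",
        "510.47.03", "470.141.03", "470.57.02",
    ],
}


def recommended_drivers(os_name):
    """Supported drivers for an OS, excluding versions that reached EOL."""
    return [v for v in SUPPORTED.get(os_name, []) if v not in EOL_DRIVERS]


centos = recommended_drivers("CentOS Linux release 7.6")
print(centos)
```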

Obtaining a Driver Link

To install a driver using a custom driver link, obtain the link either from the Internet or from OBS.

- From the Internet

- Log in to the CCE console and click the cluster name to access the cluster console.

- Create a node. In the Specifications area, select the GPU node flavor. The GPU models are displayed in the lower part of the area.

Figure 4 Viewing the GPU models

- Go to the NVIDIA driver download page and search for the driver information. The OS must be Linux 64-bit.

Figure 5 Selecting parameters

- After confirming the driver information, click Find. On the displayed page, find the driver to be downloaded and click View.

Figure 6 Viewing the driver information

- Click Download and copy the download link.

Figure 7 Obtaining the link

- From the OBS Link

- Upload the driver to OBS and set the driver file to public read. For details, see Uploading an Object.

When the node is restarted, the driver will be downloaded and installed again. Ensure that the OBS bucket link of the driver is valid.

- In the bucket list, click the bucket name to go to the Objects page.

- Click the target object name and copy the driver link on the Basic Information tab.

Figure 8 Copying an OBS link

Components

| Component | Description | Resource Type |

|---|---|---|

| nvidia-driver-installer | A workload for installing the NVIDIA GPU driver on a node, which only uses resources during the installation (Once it has been installed, it no longer uses resources.) | DaemonSet |

| nvidia-gpu-device-plugin | A Kubernetes device plugin that provides NVIDIA GPU heterogeneous compute for containers | DaemonSet |

| nvidia-operator | A component that provides NVIDIA GPU node management capabilities for clusters | Deployment |

| dcgm-exporter | A component that is installed when DCGM-Exporter is enabled to observe DCGM metrics. It is used to collect GPU metrics. | DaemonSet |
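The components in the table can be checked for readiness from their Kubernetes status fields. The sketch below assumes the input is the parsed JSON of a single object, as returned by `kubectl get daemonset <name> -n <namespace> -o json` or the Deployment equivalent; the function name and sample objects are hypothetical. A ready workload shows only that the component is running, not that the GPU driver was installed successfully.

```python
def workload_ready(obj):
    """Return True if a DaemonSet or Deployment reports all pods ready."""
    kind = obj["kind"]
    status = obj.get("status", {})
    if kind == "DaemonSet":
        desired = status.get("desiredNumberScheduled", 0)
        return status.get("numberReady", 0) >= desired
    if kind == "Deployment":
        desired = obj.get("spec", {}).get("replicas", 1)
        return status.get("readyReplicas", 0) >= desired
    raise ValueError(f"unsupported kind: {kind}")


# Hand-written samples in the shape of `kubectl get ... -o json` output.
device_plugin = {
    "kind": "DaemonSet",
    "metadata": {"name": "nvidia-gpu-device-plugin"},
    "status": {"desiredNumberScheduled": 2, "numberReady": 1},
}
operator = {
    "kind": "Deployment",
    "metadata": {"name": "nvidia-operator"},
    "spec": {"replicas": 1},
    "status": {"readyReplicas": 1},
}
report = {o["metadata"]["name"]: workload_ready(o) for o in (device_plugin, operator)}
print(report)
```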

Helpful Links

- CCE AI Suite (NVIDIA GPU) provides GPU monitoring metrics. For details about GPU metrics, see GPU Metrics.

- After DCGM-Exporter is enabled, if you need to report the collected GPU monitoring data to AOM, see Comprehensive Monitoring of DCGM Metrics.

- To further use GPU virtualization, see GPU Virtualization.

Release History

| Add-on Version | Supported Cluster Version | New Feature |

|---|---|---|

| 2.13.3 | v1.28 v1.29 v1.30 v1.31 v1.32 v1.33 v1.34 | Fixed the compatibility issues. |

| 2.12.0 | v1.28 v1.29 v1.30 v1.31 v1.32 v1.33 v1.34 | Fixed the compatibility issues. |

| 2.11.1 | v1.28 v1.29 v1.30 v1.31 v1.32 v1.33 v1.34 | CCE clusters v1.34 are supported. |

| 2.10.3 | v1.28 v1.29 v1.30 v1.31 v1.32 v1.33 | Fixed some issues. |

| 2.10.2 | v1.28 v1.29 v1.30 v1.31 v1.32 v1.33 | Supported NVIDIA driver 570.86.15. |

| 2.8.4 | v1.28 v1.29 v1.30 v1.31 v1.32 | Fixed CVE-2025-23266 and CVE-2025-23267. |

| 2.8.1 | v1.28 v1.29 v1.30 v1.31 v1.32 | Fixed some issues. |

| 2.7.84 | v1.28 v1.29 v1.30 v1.31 v1.32 | CCE clusters v1.32 are supported. |

| 2.7.66 | v1.28 v1.29 v1.30 v1.31 | Fixed some issues. |

| 2.7.63 | v1.28 v1.29 v1.30 v1.31 | Fixed the security vulnerabilities. |

| 2.7.47 | v1.28 v1.29 v1.30 v1.31 | Added the NVIDIA 535.216.03 drivers that support xGPUs. |

| 2.7.42 | v1.28 v1.29 v1.30 v1.31 | Added the NVIDIA 535.216.03 drivers that support xGPUs. |

| 2.7.41 | v1.28 v1.29 v1.30 v1.31 | Added the NVIDIA 535.216.03 drivers that support xGPUs. |

| 2.7.40 | v1.28 v1.29 v1.30 v1.31 | Integrated with DCGM-Exporter to observe the DCGM metrics of NVIDIA GPU nodes in clusters. |

| 2.7.19 | v1.28 v1.29 v1.30 | Fixed the nvidia-container-toolkit CVE-2024-0132 container escape vulnerability. |

| 2.7.13 | v1.28 v1.29 v1.30 | |

| 2.6.4 | v1.28 v1.29 | Updated the isolation logic of GPU cards. |

| 2.6.1 | v1.28 v1.29 | Upgraded the base images of the add-on. |

| 2.5.6 | v1.28 | Fixed an issue that occurred during the installation of the driver. |

| 2.5.4 | v1.28 | Clusters v1.28 are supported. |

| 2.2.4 | v1.25 v1.27 | Fixed CVE-2025-23266 and CVE-2025-23267. |

| 2.2.1 | v1.25 v1.27 | Fixed some issues. |

| 2.1.67 | v1.25 v1.27 | Supported the nvidia-peermem module. |

| 2.1.49 | v1.25 v1.27 | Added the NVIDIA 535.216.03 drivers that support xGPUs. |

| 2.1.47 | v1.25 v1.27 | Added the NVIDIA 535.216.03 drivers that support xGPUs. |

| 2.1.26 | v1.21 v1.23 v1.25 v1.27 | Added the NVIDIA 535.216.03 drivers that support xGPUs. |

| 2.1.14 | v1.21 v1.23 v1.25 v1.27 | Fixed the nvidia-container-toolkit CVE-2024-0132 container escape vulnerability. |

| 2.1.8 | v1.21 v1.23 v1.25 v1.27 | Fixed some issues. |

| 2.0.69 | v1.21 v1.23 v1.25 v1.27 | Upgraded the base images of the add-on. |

| 2.0.46 | v1.21 v1.23 v1.25 v1.27 | |

| 2.0.18 | v1.21 v1.23 v1.25 v1.27 | Supported Huawei Cloud EulerOS 2.0. |

| 1.2.28 | v1.19 v1.21 v1.23 v1.25 | |

| 1.2.24 | v1.19 v1.21 v1.23 v1.25 | |

| 1.2.20 | v1.19 v1.21 v1.23 v1.25 | Set the add-on alias to gpu. |

| 1.2.17 | v1.15 v1.17 v1.19 v1.21 v1.23 | Added the nvidia-driver-install pod limit configuration. |

| 1.2.15 | v1.15 v1.17 v1.19 v1.21 v1.23 | CCE clusters v1.23 are supported. |

| 1.2.11 | v1.15 v1.17 v1.19 v1.21 | Supported EulerOS 2.10. |

| 1.2.10 | v1.15 v1.17 v1.19 v1.21 | CentOS supports the GPU driver of the new version. |

| 1.2.9 | v1.15 v1.17 v1.19 v1.21 | CCE clusters v1.21 are supported. |

| 1.2.2 | v1.15 v1.17 v1.19 | Supported the new EulerOS kernel. |

| 1.2.1 | v1.15 v1.17 v1.19 | |

| 1.1.13 | v1.13 v1.15 v1.17 | Supported kernel-3.10.0-1127.19.1.el7.x86_64 for CentOS 7.6. |

| 1.1.11 | v1.15 v1.17 | |
