Adding a New Disk to an MRS Cluster

Issue

MRS HBase is unavailable.

Symptom

High disk usage on the user's host causes service faults.

Cause Analysis

The service becomes unavailable because the core node does not have enough disk capacity.

Procedure

For a pay-per-use MRS cluster, the billing mode cannot be changed to yearly/monthly after the disk capacity is expanded.

- Purchase an EVS disk. For details, see Purchasing an EVS Disk.

- Attach the EVS disk. For details, see Attaching a Non-Shared Disk.

- Log in to the ECS console and click the name of the ECS to which the new disk is to be attached.

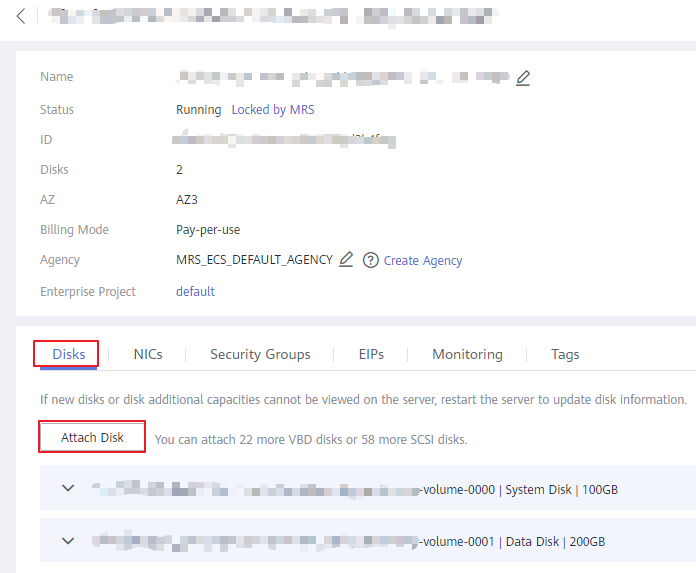

- On the Disks tab, click Attach Disk.

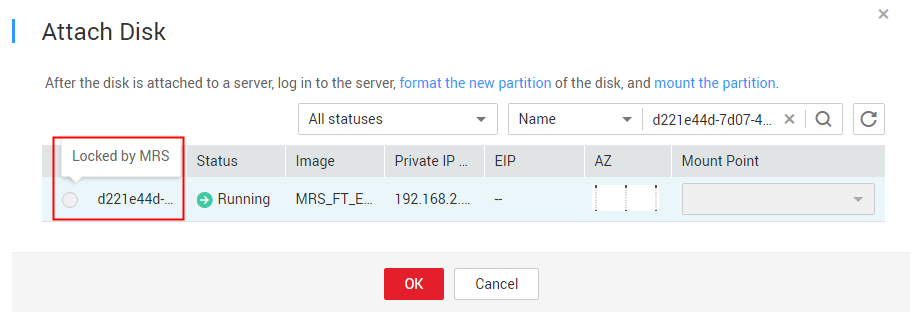

Figure 2 Attaching an EVS disk to the ECS

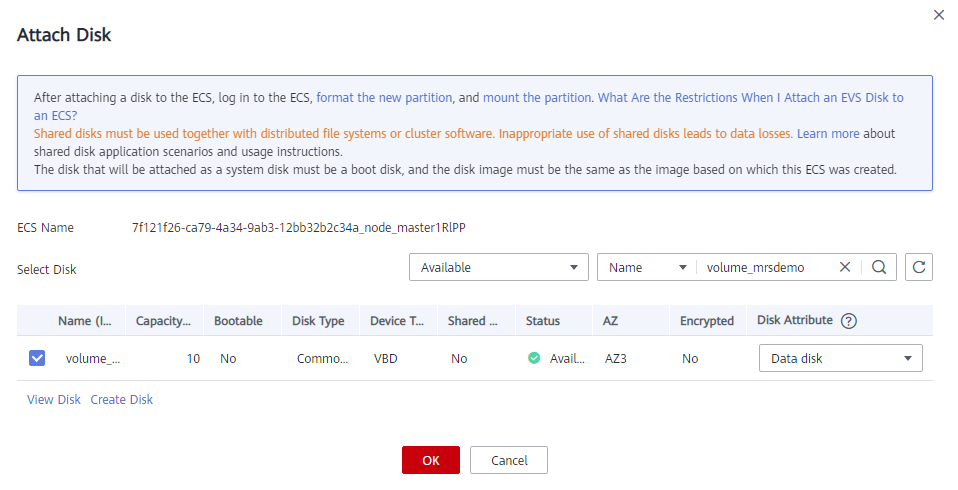

- Select the new disk to be attached and click OK.

Figure 3 Attaching a disk

- Initialize a Linux data disk. For details, see Initializing a Linux Data Disk (fdisk).

- The new mount point directory is named with the number of the last existing data directory plus one. For example, if you run the df -h command and find that the existing directory is /srv/BigData/hadoop/data1, the new mount point is /srv/BigData/hadoop/data2. When initializing the Linux data disk, create the mount point /srv/BigData/hadoop/data2 and mount the new partition to it. For example:

mkdir /srv/BigData/hadoop/data2
mount /dev/xvdb1 /srv/BigData/hadoop/data2

About the /srv/BigData/hadoop/data2 path: adjust the /srv/BigData/hadoop/data2 path used in this procedure according to the cluster version:

- In 3.x: Change it to /srv/BigData/data2.

- In versions earlier than 3.x: Keep it as /srv/BigData/hadoop/data2.
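You can check the naming rule above from the shell. The sketch below is a hypothetical helper (next_data_dir is not an MRS tool). It reads df -h output and prints the next dataN mount point under a given base directory. It assumes that existing data disks are mounted directly as <base>/data1, <base>/data2, and so on.

```shell
# Hypothetical helper, not part of MRS: print the next dataN mount point.
# Assumes data disks are mounted as <base>/data1, <base>/data2, ...
# <base> is /srv/BigData/hadoop before 3.x and /srv/BigData in 3.x.
next_data_dir() {
  local base="${1:-/srv/BigData/hadoop}"
  # The mount point is the last field of every df line.
  awk -v base="$base" '
    {
      prefix = base "/data"
      mp = $NF
      if (index(mp, prefix) == 1) {
        n = substr(mp, length(prefix) + 1)
        # Only count plain numeric suffixes such as data1 or data12.
        if (n ~ /^[0-9]+$/ && n + 0 > max) max = n + 0
      }
    }
    # With no matching mount point, max is unset (0) and data1 is printed.
    END { print base "/data" (max + 1) }
  '
}

# Usage on a core node:
#   df -h | next_data_dir /srv/BigData/hadoop
```

The helper only suggests a name. Still confirm in the df output that the suggested directory is not already in use.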

- Run the following command to make user omm (group wheel) the owner of the new mount point, so that omm can access the new disk:

chown omm:wheel <New mount point>

Example: chown omm:wheel /srv/BigData/hadoop/data2

- Run the following command to set the access permissions on the new mount point directory:

chmod 701 <New mount point>

Example: chmod 701 /srv/BigData/hadoop/data2

In this command, 701 is only an example. Replace it with the permission value of the existing data disk directory data1.
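The owner and mode of the new mount point should match data1, so you can copy them directly from data1 instead of typing them. The sketch below assumes GNU coreutils, which provides the --reference option of chown and chmod and the -c option of stat. copy_data_dir_perms is a hypothetical helper name. On a cluster node it normally needs to run as root.

```shell
# Hypothetical helper: give NEW the same owner, group, and mode as REF.
# Requires GNU coreutils (chown/chmod --reference, stat -c).
copy_data_dir_perms() {
  local ref="$1" new="$2"
  if [ ! -d "$ref" ] || [ ! -d "$new" ]; then
    echo "usage: copy_data_dir_perms EXISTING_DIR NEW_DIR (both must exist)" >&2
    return 1
  fi
  chown --reference="$ref" "$new" || return 1   # for example, omm:wheel
  chmod --reference="$ref" "$new" || return 1   # for example, 701
  # Print owner:group, octal mode, and path of both directories for comparison.
  stat -c '%U:%G %a %n' "$ref" "$new"
}

# Usage (as root on the core node):
#   copy_data_dir_perms /srv/BigData/hadoop/data1 /srv/BigData/hadoop/data2
```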

- Log in to Manager and add data disks to DataNode and NodeManager instances.

- Modify the DataNode instance configuration.

MRS Manager: Log in to MRS Manager, choose Services > HDFS > Instance, click the target DataNode instance, and click Instance Configuration. On the displayed page, set Type to All.

FusionInsight Manager: Log in to FusionInsight Manager and choose Cluster. Click the name of the desired cluster and choose Service > HDFS > Instance. Click the target DataNode instance, click Instance Configuration, and select All Configurations.

- Method 1: Manually modify the DataNode instance configuration on the current node.

- Enter dfs.datanode.fsdataset.volume.choosing.policy in the search box and change the parameter value to org.apache.hadoop.hdfs.server.datanode.fsdataset.AvailableSpaceVolumeChoosingPolicy.

- Enter dfs.datanode.data.dir in the search box and change the parameter value to /srv/BigData/hadoop/data1/dn,/srv/BigData/hadoop/data2/dn.

After changing the values of the two parameters, click Save Configuration and select Restart role instance to restart the DataNode instance.

- Method 2: Automatically synchronize the DataNode instance configuration on the current node.

- Click Synchronize Configuration to apply the new configuration to the HDFS service.

- After the synchronization is complete, restart the instance for the configuration to take effect.

- If HDFS is not in use and you want to restart the instance quickly, select Restart role instance.

- If a task is using HDFS, you must select rolling restart to prevent data exceptions or task failures.

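If a node already has several data directories, you can derive the new dfs.datanode.data.dir value from the current one: every entry under the data1 mount point gets a sibling entry under the new mount point. In the sketch below, add_sibling_dirs is a hypothetical helper, and you still enter the resulting value in Manager. The sketch assumes that entries contain no commas, spaces, or glob characters.

```shell
# Hypothetical helper: for every entry of a comma-separated directory list
# that lives under OLD_MOUNT, append the same path under NEW_MOUNT.
# Entries that are already present are not added twice.
add_sibling_dirs() {
  local value="$1" old="$2" new="$3"
  local result="$1" entry candidate
  local IFS=','
  for entry in $value; do
    case "$entry" in
      "$old"/*)
        candidate="$new${entry#"$old"}"
        case ",$result," in
          *",$candidate,"*) ;;                 # already listed
          *) result="$result,$candidate" ;;
        esac
        ;;
    esac
  done
  printf '%s\n' "$result"
}

# Usage: compute the new dfs.datanode.data.dir value for the example paths.
#   add_sibling_dirs /srv/BigData/hadoop/data1/dn \
#       /srv/BigData/hadoop/data1 /srv/BigData/hadoop/data2
```

Running the helper again on its own output leaves the value unchanged. This makes it safe to apply more than once.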

- Modify the Yarn NodeManager instance configuration.

MRS Manager: Log in to MRS Manager, choose Services > Yarn > Instance, click the target NodeManager instance, and click Instance Configuration. On the displayed page, set Type to All.

FusionInsight Manager: Log in to FusionInsight Manager and choose Cluster. Click the name of the desired cluster and choose Service > Yarn > Instance. Click the target NodeManager instance, click Instance Configuration, and select All Configurations.

- Method 1: Manually modify the Yarn NodeManager instance configuration on the current node.

- Enter yarn.nodemanager.local-dirs in the search box and change the parameter value to /srv/BigData/hadoop/data1/nm/localdir,/srv/BigData/hadoop/data2/nm/localdir.

- Enter yarn.nodemanager.log-dirs in the search box and change the parameter value to /srv/BigData/hadoop/data1/nm/containerlogs,/srv/BigData/hadoop/data2/nm/containerlogs.

After changing the values of the two parameters, click Save Configuration and select Restart role instance to restart the NodeManager instance.

- Method 2: Automatically synchronize the Yarn NodeManager instance configuration on the current node.

- Click Synchronize Configuration to apply the new configuration to the Yarn service.

- After the synchronization is complete, restart the instance for the configuration to take effect.

- If Yarn is not in use and you want to restart the instance quickly, select Restart role instance.

- If a task is using Yarn, you must select rolling restart to prevent data exceptions or task failures.

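Both NodeManager values follow the same pattern: one entry per data mount point, each with a fixed suffix. The sketch below builds them from a list of mount points. nm_dir_value is a hypothetical helper. The mount points are the example paths from this procedure and must be adjusted for 3.x as described in the path note.

```shell
# Hypothetical helper: join "<mount point><suffix>" entries with commas.
# Arguments: suffix, then one or more mount points.
nm_dir_value() {
  local suffix="$1"
  shift
  local value="" mount_point
  for mount_point in "$@"; do
    # Add a comma only when value already holds an entry.
    value="${value:+$value,}$mount_point$suffix"
  done
  printf '%s\n' "$value"
}

# Example: print both parameter values for the two data mount points.
echo "yarn.nodemanager.local-dirs=$(nm_dir_value /nm/localdir /srv/BigData/hadoop/data1 /srv/BigData/hadoop/data2)"
echo "yarn.nodemanager.log-dirs=$(nm_dir_value /nm/containerlogs /srv/BigData/hadoop/data1 /srv/BigData/hadoop/data2)"
```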

- Check whether the capacity expansion is successful.

MRS Manager: Log in to MRS Manager, choose Services > HDFS > Instance, and click the target DataNode instance.

FusionInsight Manager: Log in to FusionInsight Manager and choose Cluster. Click the name of the desired cluster, choose Service > HDFS > Instance, and click the target DataNode instance.

In the Chart area, check whether the total disk capacity shown in the real-time monitoring item DataNode Storage has increased. If DataNode Storage is not displayed in the Chart area, click Customize to add it.

- If the total disk capacity has increased, the capacity expansion is complete.

- If the total disk capacity has not increased, contact technical support.
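As an optional command-line cross-check, you can read the capacity that a DataNode reports to the NameNode from hdfs dfsadmin -report before and after the change. The sketch assumes an HDFS client already authenticated with HDFS administrator rights. It also assumes the report lists each DataNode under a "Name: <IP>:<port>" line followed by a "Configured Capacity: <bytes> (...)" line, as recent Hadoop releases do. datanode_capacity is a hypothetical helper.

```shell
# Hypothetical helper: print the Configured Capacity (bytes) reported for
# the DataNode with the given IP address, reading `hdfs dfsadmin -report`
# output from stdin.
datanode_capacity() {
  awk -v ip="$1" '
    # A "Name: <ip>:<port> (<host>)" line starts each DataNode section.
    /^Name: / { in_node = (index($0, " " ip ":") > 0) }
    # The first Configured Capacity line in that section is the node total.
    in_node && /^Configured Capacity:/ { print $3; exit }
  '
}

# Usage (replace the IP address with that of the expanded core node):
#   before=$(hdfs dfsadmin -report | datanode_capacity 192.168.0.12)
#   (add the new directory and restart the DataNode)
#   after=$(hdfs dfsadmin -report | datanode_capacity 192.168.0.12)
#   [ "$after" -gt "$before" ] && echo "DataNode capacity increased"
```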

- (Optional) Add data disks to a Kafka instance.

Modify the Kafka instance configuration.

- Navigate to the parameter settings of the target Kafka Broker node.

MRS Manager: Log in to MRS Manager, choose Services > Kafka > Instance, click the target Broker instance, and click Instance Configuration. On the displayed page, set Type to All.

FusionInsight Manager: Log in to FusionInsight Manager and choose Cluster. Click the name of the desired cluster and choose Service > Kafka > Instance. Click the target Broker instance, click Instance Configuration, and select All Configurations.

- Enter log.dirs in the search box and append the data directories on the new disks, separating the entries with commas (,).

For example, if there is only one Kafka data disk and you add another, change /srv/BigData/kafka/data1/kafka-logs to /srv/BigData/kafka/data1/kafka-logs,/srv/BigData/kafka/data2/kafka-logs.

- Save the configuration and select Restart role instance to restart the instance as prompted.

- Check whether the capacity expansion is successful.

MRS Manager: Log in to MRS Manager, choose Services > Kafka > Instance, and click the target Broker instance.

FusionInsight Manager: Log in to FusionInsight Manager and choose Cluster. Click the name of the desired cluster, choose Service > Kafka > Instance, and click the target Broker instance.

Check whether the total disk capacity shown in the real-time monitoring item Capacity of Broker Disks has increased.

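After the Broker restarts, you can confirm from the node that every log.dirs entry exists. A Kafka broker creates missing log directories at startup when it has permission to do so, so a missing entry usually points to a mount or permission problem. missing_log_dirs is a hypothetical helper. It prints each entry that is not a directory and returns a non-zero status if it finds one.

```shell
# Hypothetical helper: report log.dirs entries that are not existing directories.
# Takes the comma-separated log.dirs value as its only argument.
missing_log_dirs() {
  local value="$1" dir status=0
  local IFS=','
  for dir in $value; do
    if [ ! -d "$dir" ]; then
      printf 'missing: %s\n' "$dir"
      status=1
    fi
  done
  return "$status"
}

# Usage on the Broker node after the restart:
#   missing_log_dirs /srv/BigData/kafka/data1/kafka-logs,/srv/BigData/kafka/data2/kafka-logs
```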

- Configure the new partition to be mounted automatically at system startup, for example, by adding an entry for it to /etc/fstab.
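Automatic mounting usually comes down to one /etc/fstab line per new partition. The sketch below only prints that line. It assumes the partition is identified by its UUID, obtained with blkid, and formatted as ext4, as is typical after fdisk-based initialization. fstab_entry is a hypothetical helper name. Check the line before appending it to /etc/fstab, because a bad entry can stop the node from booting normally.

```shell
# Hypothetical helper: print an /etc/fstab line for a data partition.
# Arguments: UUID, mount point, file system type (defaults to ext4).
fstab_entry() {
  local uuid="$1" mount_point="$2" fstype="${3:-ext4}"
  # Fields: device, mount point, type, options, dump flag, fsck pass.
  printf 'UUID=%s %s %s defaults 0 2\n' "$uuid" "$mount_point" "$fstype"
}

# Usage as root, where /dev/xvdb1 is the partition mounted earlier:
#   uuid=$(blkid -s UUID -o value /dev/xvdb1)
#   fstab_entry "$uuid" /srv/BigData/hadoop/data2 ext4 | tee -a /etc/fstab
#   findmnt --verify   # util-linux: checks /etc/fstab without remounting
```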

If a new node is added to the cluster after the disk capacity of existing nodes has been expanded, add disks to the new node by following the preceding procedure. Otherwise, data may be lost.

Summary and Suggestions

- If disk usage exceeds 85%, expand the disk capacity by purchasing new disks and attaching them to the ECSs of the cluster.

- The procedure for attaching disks and setting parameters may vary depending on the site environment.
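To find the disks that have crossed the 85% threshold, you can filter the capacity column of df. The sketch below is a hypothetical helper. It reads df -P output (POSIX format, one line per file system) and prints each mount point whose usage exceeds the given percentage. It assumes that mount point paths contain no spaces.

```shell
# Hypothetical helper: print "<mount point> <use%>" for file systems whose
# usage is above the given percentage (default 85). Reads `df -P` output.
disks_over_threshold() {
  awk -v limit="${1:-85}" '
    NR > 1 {
      use = $5
      sub(/%$/, "", use)   # "91%" -> "91"
      if (use + 0 > limit + 0) print $6 " " $5
    }
  '
}

# Usage on a cluster node:
#   df -P | disks_over_threshold 85
```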
