Configuring Spark SQL to Enable the Adaptive Execution Feature

Scenarios

The Spark SQL adaptive execution feature, also called Adaptive Query Execution (AQE), lets Spark SQL re-optimize the remaining stages of a query based on intermediate results, which improves overall execution efficiency. The following features have been implemented:

- Automatic configuration of the number of shuffle partitions

Before adaptive execution is enabled, Spark SQL uses the spark.sql.shuffle.partitions parameter to set the number of partitions for every shuffle. This single setting is inflexible when an application runs multiple SQL queries and cannot ensure optimal performance in all scenarios. After adaptive execution is enabled, Spark SQL chooses the number of partitions for each shuffle automatically instead of using the general configuration, so each shuffle runs with a suitable number of partitions.

- Dynamic adjustment of the join execution plan

Before adaptive execution is enabled, Spark SQL creates the execution plan from the results of rule-based optimization (RBO) and cost-based optimization (CBO). This approach ignores how result sets change while the query runs. For example, when a view created from a large table is joined with other large tables, the execution plan cannot be switched to BroadcastJoin even if the result set of the view is small. After adaptive execution is enabled, Spark SQL can adjust the execution plan dynamically based on the execution result of the previous stage to obtain better performance.

- Automatic processing of data skew

If data skew occurs during SQL statement execution, an executor may run out of memory or tasks may run slowly. After adaptive execution is enabled, Spark SQL handles data skew automatically. It starts multiple tasks for each partition where data skew occurs. Each task reads several of the output files produced by the shuffle for that partition, and the join results of these tasks are combined with a union operation to eliminate the skew.
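To make the first feature above more concrete, the following sketch shows one greedy way to merge contiguous shuffle partitions up to a target size. It is an illustration only and not Spark's actual implementation: the function name coalesce_partitions, the sample partition sizes, and the 64 MB target are assumptions chosen for the example.

```python
from typing import List


def coalesce_partitions(sizes: List[int], target_size: int) -> List[List[int]]:
    """Greedily group contiguous partition indices up to target_size.

    Hypothetical helper for illustration; not Spark's real algorithm.
    """
    groups: List[List[int]] = []
    current: List[int] = []
    current_size = 0
    for index, size in enumerate(sizes):
        # Close the current group when adding this partition would exceed the target.
        if current and current_size + size > target_size:
            groups.append(current)
            current, current_size = [], 0
        current.append(index)
        current_size += size
    if current:
        groups.append(current)
    return groups


MB = 1024 * 1024
# Assumed shuffle output sizes for six partitions of one stage.
sizes = [10 * MB, 20 * MB, 30 * MB, 70 * MB, 5 * MB, 5 * MB]
groups = coalesce_partitions(sizes, target_size=64 * MB)
print(groups)
```

In this sketch a partition that is already larger than the target stays in its own group, so six fixed partitions become three tasks of more even size.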

Parameters

- Log in to FusionInsight Manager.

For details, see Accessing FusionInsight Manager.

- Choose Cluster > Services > Spark2x or Spark, click Configurations and then All Configurations. Search for the following parameters and adjust their values.

| Parameter | Description | Example Value |
| --- | --- | --- |
| spark.sql.adaptive.enabled | Whether to enable adaptive execution. true: Adaptive execution is enabled. This is the default value. For adaptive execution to take effect, disable dynamic partition pruning by setting spark.sql.optimizer.dynamicPartitionPruning.enabled to false. false: Adaptive execution is disabled. Note: If AQE and dynamic partition pruning (DPP) are enabled at the same time, DPP takes precedence over AQE during Spark SQL task execution. As a result, AQE does not take effect. | true |
| spark.sql.optimizer.dynamicPartitionPruning.enabled | Whether to enable dynamic partition pruning (DPP) optimization. This parameter is particularly useful for queries on partitioned tables and can help improve query performance. true: DPP is enabled. This is the default value. Spark prunes unnecessary partitions during query execution, which reduces I/O operations and improves overall query performance. false: DPP is disabled. | true |
| spark.sql.adaptive.coalescePartitions.enabled | Whether AQE dynamically coalesces contiguous shuffle partitions during query execution. true: When spark.sql.adaptive.enabled is also true, Spark coalesces contiguous shuffle partitions according to the target size (specified by spark.sql.adaptive.advisoryPartitionSizeInBytes) to avoid too many small tasks. false: This optimization is disabled. | true |
| spark.sql.adaptive.coalescePartitions.initialPartitionNum | Initial number of shuffle partitions before coalescing. The default value is the same as the value of spark.sql.shuffle.partitions. This parameter is optional and is valid only when spark.sql.adaptive.enabled and spark.sql.adaptive.coalescePartitions.enabled are both set to true. The value must be a positive number. | 200 |
| spark.sql.adaptive.coalescePartitions.minPartitionNum | Minimum number of shuffle partitions after coalescing. If this parameter is not set, the default degree of parallelism (DOP) of the Spark cluster is used. This parameter is optional and is valid only when spark.sql.adaptive.enabled and spark.sql.adaptive.coalescePartitions.enabled are both set to true. The value must be a positive number. | 1 |
| spark.sql.adaptive.shuffle.targetPostShuffleInputSize | Target size of a partition after shuffling. Spark 3.0 and later versions do not support this parameter. | 64 MB |
| spark.sql.adaptive.advisoryPartitionSizeInBytes | Advisory size, in bytes, of a shuffle partition during adaptive optimization (when spark.sql.adaptive.enabled is set to true). This parameter takes effect when Spark coalesces small shuffle partitions or splits skewed shuffle partitions. | 64 MB |
| spark.sql.adaptive.fetchShuffleBlocksInBatch | Whether to fetch contiguous shuffle blocks in batches. For the same map task, fetching contiguous shuffle blocks in one batch instead of one by one reduces I/O and improves performance. true: Batch fetching is enabled. This is the default value. false: Batch fetching is disabled. Note: A single fetch request contains multiple contiguous blocks only when spark.sql.adaptive.enabled and spark.sql.adaptive.coalescePartitions.enabled are both set to true. This feature also depends on a relocatable serializer, a compression codec that supports concatenation, and the new version of the shuffle fetch protocol. | true |
| spark.sql.adaptive.localShuffleReader.enabled | Whether Spark attempts to use a local shuffle reader when AQE is enabled. true: When spark.sql.adaptive.enabled is also true, Spark tries to use the local shuffle reader to read shuffle data when shuffle partitioning is not needed, for example, after a sort-merge join is converted to a broadcast hash join. false: This function is disabled. | true |
| spark.sql.adaptive.skewJoin.enabled | Whether AQE dynamically handles data skew in join operations. true: This function is enabled. This is the default value. Spark detects data skew during join operations and splits the skewed partitions, which improves resource utilization and reduces memory and disk I/O overhead. false: This function is disabled. | true |
| spark.sql.adaptive.skewJoin.skewedPartitionFactor | Multiplier used to decide whether a partition is skewed. A partition is considered skewed if its size exceeds this value multiplied by the median partition size and also exceeds the value of spark.sql.adaptive.skewJoin.skewedPartitionThresholdInBytes. | 5 |
| spark.sql.adaptive.skewJoin.skewedPartitionThresholdInBytes | Size threshold, in bytes, for a skewed partition. A partition is considered skewed if its size exceeds this threshold and also exceeds the value of spark.sql.adaptive.skewJoin.skewedPartitionFactor multiplied by the median partition size. Ideally, the value of this parameter should be greater than that of spark.sql.adaptive.advisoryPartitionSizeInBytes. | 256 MB |
| spark.sql.adaptive.nonEmptyPartitionRatioForBroadcastJoin | When two tables are joined, a table whose ratio of non-empty partitions is lower than this value is not considered for broadcast hash join, regardless of its size. This parameter is valid only when spark.sql.adaptive.enabled is set to true. | 0.2 |
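The skew rule described for spark.sql.adaptive.skewJoin.skewedPartitionFactor and spark.sql.adaptive.skewJoin.skewedPartitionThresholdInBytes can be sketched in a few lines. This is a simplified illustration, not Spark's code: the function name is_skewed and the sample partition sizes are assumptions, the defaults mirror the example values 5 and 256 MB, and the median is taken over all partitions for simplicity.

```python
from statistics import median
from typing import List

MB = 1024 * 1024


def is_skewed(size: int, all_sizes: List[int], factor: float = 5,
              threshold: int = 256 * MB) -> bool:
    """Return True only if size exceeds both factor * median and the threshold."""
    return size > factor * median(all_sizes) and size > threshold


# Assumed partition sizes for one side of a join.
sizes = [40 * MB, 50 * MB, 60 * MB, 55 * MB, 900 * MB]
skewed = [i for i, s in enumerate(sizes) if is_skewed(s, sizes)]
print(skewed)
```

Both conditions must hold. A partition that is large relative to the median but below the byte threshold is not treated as skewed, and neither is a partition above the threshold that is close to the median.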

- After the parameter settings are modified, click Save, perform operations as prompted, and wait until the settings are saved successfully.

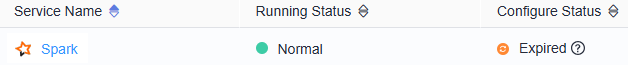

- After the Spark server configurations are updated, if Configure Status is Expired, restart the component for the configurations to take effect.

Figure 1 Modifying Spark configurations

On the Spark dashboard page, choose More > Restart Service or Service Rolling Restart, enter the administrator password, and wait until the service restarts.

If you use the Spark client to submit tasks, after the cluster parameters are modified, you need to download the client again for the configuration to take effect. For details, see Using an MRS Client.

Components are unavailable during the restart, which affects upper-layer services in the cluster. To minimize the impact, perform this operation during off-peak hours or after confirming that it has no adverse impact.
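For tasks submitted from a Spark client, it can help to see the adaptive execution settings as spark-submit arguments. The sketch below builds `--conf key=value` pairs from a dictionary. It is an illustration under assumptions, not a documented procedure for this product: the helper name to_conf_args is hypothetical, the values mirror the example values listed above, and whether per-application overrides are appropriate in a given cluster should be verified first.

```python
from typing import Dict, List


def to_conf_args(settings: Dict[str, str]) -> List[str]:
    """Turn a settings mapping into a flat list of spark-submit --conf arguments."""
    args: List[str] = []
    for key, value in settings.items():
        args.extend(["--conf", f"{key}={value}"])
    return args


# Illustrative values that mirror the example values of the parameters above.
aqe_settings = {
    "spark.sql.adaptive.enabled": "true",
    "spark.sql.adaptive.coalescePartitions.enabled": "true",
    "spark.sql.adaptive.advisoryPartitionSizeInBytes": "64MB",
    "spark.sql.adaptive.skewJoin.enabled": "true",
    "spark.sql.adaptive.skewJoin.skewedPartitionFactor": "5",
    "spark.sql.adaptive.skewJoin.skewedPartitionThresholdInBytes": "256MB",
}

conf_args = to_conf_args(aqe_settings)
print(" ".join(["spark-submit", *conf_args]))
```

The printed command line is incomplete on purpose: the application JAR or script and any other options still need to be appended.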
