Restarting an MRS Cluster

While an MRS cluster is running, restart its components if you have modified component settings, encountered infrastructure resource faults, or detected service process errors.

Components in an MRS cluster support two restart modes: standard restart and rolling restart.

- Standard restart: restarts all components or instances in the cluster concurrently, which may interrupt services.

- Rolling restart: restarts the required components or instances in batches to minimize or eliminate service interruption. Compared with a standard restart, a rolling restart takes longer and may reduce service throughput and performance. For instances in active/standby mode, the standby instance is restarted first, followed by the active instance.
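The following Python sketch illustrates the batching and active/standby ordering described above by planning the restart order for the instances of a single role. It is a simplified model, not how MRS actually schedules restarts. The `Instance` class and the `plan_role_batches` function are hypothetical. The sketch also assumes that instances in active/standby mode are restarted one per batch.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Instance:
    """Hypothetical model of one role instance, such as a NameNode or DataNode."""
    name: str
    ha_state: Optional[str] = None  # "active", "standby", or None if not in active/standby mode


def plan_role_batches(instances: List[Instance], batch_size: int = 1) -> List[List[str]]:
    """Split the instances of one role into rolling-restart batches.

    Instances without an active/standby state are restarted in batches of at most
    batch_size. Instances in active/standby mode are restarted one per batch
    (an assumption of this sketch), with every standby instance before the active one.
    """
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    standby = [inst.name for inst in instances if inst.ha_state == "standby"]
    active = [inst.name for inst in instances if inst.ha_state == "active"]
    others = [inst.name for inst in instances if inst.ha_state is None]

    batches = [others[i:i + batch_size] for i in range(0, len(others), batch_size)]
    batches += [[name] for name in standby]  # within an active/standby role, standby restarts first...
    batches += [[name] for name in active]   # ...followed by the active instance
    return batches


if __name__ == "__main__":
    namenodes = [Instance("nn-1", "active"), Instance("nn-2", "standby")]
    print(plan_role_batches(namenodes))
    # [['nn-2'], ['nn-1']]

    datanodes = [Instance(f"dn-{n}") for n in range(1, 6)]
    print(plan_role_batches(datanodes, batch_size=2))
    # [['dn-1', 'dn-2'], ['dn-3', 'dn-4'], ['dn-5']]
```

Restarting the standby instance first means that an already-restarted instance is available to take over when the active instance goes down.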

Table 2 describes the impact on services when a rolling restart is performed on components.

Restarting a cluster can stop cluster components from providing services, which adversely affects upper-layer applications and jobs. You are advised to perform rolling restarts during off-peak hours.

Notes and Constraints

- Not all components in an MRS cluster support rolling restarts. When you perform a rolling restart on a cluster, components that do not support rolling restarts will be restarted in standard restart mode. For details, see Component Restart Reference Information.

- For configurations that must take effect immediately, such as server port configurations, a standard restart is generally recommended over a rolling restart.

Impact on the System

Table 2 describes the impact on each component during a rolling restart of the cluster.

Restarting an MRS Cluster

Restart an MRS cluster on the MRS console or on FusionInsight Manager of the cluster.

To perform a rolling restart of a cluster on the MRS console:

- Log in to the MRS console.

- Choose Active Clusters and click a cluster name to go to the cluster details page.

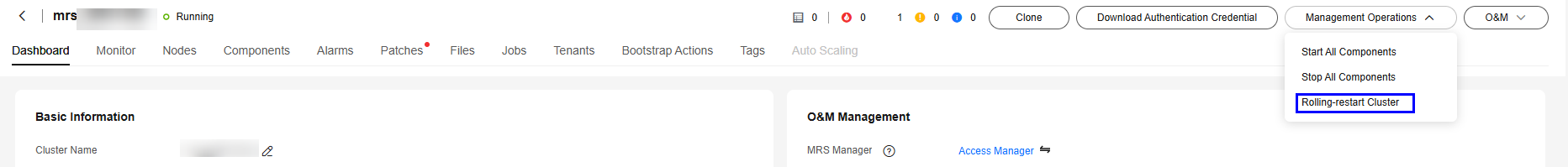

- In the upper right corner of the page, choose Management Operations > Perform Rolling Cluster Restart.

Figure 1 Performing rolling cluster restart

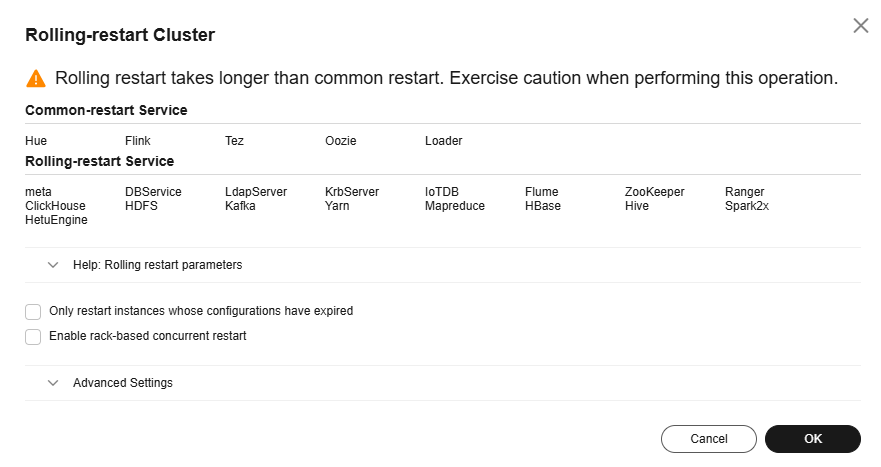

- On the Rolling-restart Cluster page that is displayed, select Only restart instances whose configurations have expired and click OK to start the rolling restart of the cluster.

- After the rolling restart task is complete, click Finish.

To restart a cluster on FusionInsight Manager:

- Log in to FusionInsight Manager of the MRS cluster.

  For details about how to log in to FusionInsight Manager, see Accessing MRS Manager.

- Choose Cluster > Dashboard. In the upper right corner, click More > Restart.

  - For MRS 3.3.0 or later, the Cluster > Dashboard page has been removed from FusionInsight Manager. Instead, click More in the upper right corner of Homepage to access cluster maintenance and management functions.

  - When restarting a cluster, you can choose either a standard restart or a rolling restart. A rolling restart takes longer but has less impact on services.

- In the displayed dialog box, enter the password of the currently logged-in user and click OK.

- If you select a rolling restart, set the parameters described in Table 1 based on site requirements.

Figure 2 Rolling restart

Table 1 Rolling restart parameters

| Parameter | Example Value | Description |
|---|---|---|
| Restart only instances with expired configurations in the cluster | - | Whether to restart only the instances in the cluster whose configurations have been modified. |
| Enable rack strategy | - | Whether to enable the concurrent rack rolling restart strategy. This parameter takes effect only for roles that meet the rack rolling restart strategy, that is, roles that support rack awareness and whose instances belong to two or more racks. It is available only when a rolling restart is performed on HDFS or YARN. |
| Data Nodes to Be Batch Restarted | 1 | Number of instances restarted in each batch when the batch rolling restart strategy is used. The default value is 1. This parameter is valid only when the batch rolling restart strategy is used and the instance type is DataNode. It is invalid when the rack strategy is enabled. In that case, the cluster uses the maximum number of instances configured in the rack strategy (20 by default) as the maximum number of instances restarted concurrently in a rack. It is configurable only when a rolling restart is performed on HDFS, HBase, YARN, Kafka, Storm, or Flume. For HBase RegionServers, it cannot be configured manually and is adjusted automatically based on the number of RegionServer nodes: 1 if there are fewer than 30 nodes, 2 if there are at least 30 but fewer than 300 nodes, and 1% of the node count (rounded down) if there are 300 or more nodes. |
| Batch Interval | 0 | Interval between two consecutive batches of instances in the rolling restart. The default value is 0. |
| Decommissioning Timeout Interval | 1800 | Decommissioning timeout interval for role instances during a rolling restart. The default value is 1800s. Before the rolling restart, some roles (such as HiveServer and JDBCServer) stop providing services: new clients cannot connect to the stopped instances, and existing connections are given time to complete. An appropriate timeout interval helps ensure service continuity. This parameter is configurable only when a rolling restart is performed on Hive, Spark, or Spark2x. |
| Batch Fault Tolerance Threshold | 0 | Number of failed batches tolerated during the rolling restart. The default value is 0, which means that the rolling restart task ends as soon as any batch of instances fails to restart. |

Configure advanced parameters, such as Data Nodes to Be Batch Restarted, Batch Interval, and Batch Fault Tolerance Threshold, based on site requirements. Improper values may interrupt services or severely degrade cluster performance.

For example:

- If Data Nodes to Be Batch Restarted is set to an unnecessarily large value, too many instances are restarted concurrently and too few instances remain in service. As a result, services may be interrupted or cluster performance may be severely degraded.

- If Batch Fault Tolerance Threshold is set to an unnecessarily large value, services may be interrupted, because the next batch of instances is still restarted even after a previous batch fails to restart.

- Click OK.
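The following Python sketch models the batch-size rules and the Batch Fault Tolerance Threshold behavior described in Table 1. It illustrates only the documented behavior. It is not the FusionInsight Manager implementation and does not call any MRS API. The function names are hypothetical, Batch Interval is assumed to be in seconds, and `restart_batch` is a placeholder for whatever actually restarts a batch of instances.

```python
import time
from typing import Callable, List, Tuple


def regionserver_batch_size(node_count: int) -> int:
    """Automatic HBase RegionServer batch size, following the rule in Table 1."""
    if node_count < 30:
        return 1
    if node_count < 300:
        return 2
    return node_count // 100  # 1% of the node count, rounded down


def effective_batch_size(role: str, configured: int = 1, rack_strategy: bool = False,
                         rack_max: int = 20, regionserver_nodes: int = 0) -> int:
    """Hypothetical helper: instances restarted concurrently per batch (or per rack)."""
    if role == "RegionServer":
        # Not manually configurable; derived from the number of RegionServer nodes.
        return regionserver_batch_size(regionserver_nodes)
    if rack_strategy:
        # The configured value is ignored; the rack strategy's per-rack maximum applies.
        return rack_max
    return configured


def run_rolling_restart(
    batches: List[List[str]],
    restart_batch: Callable[[List[str]], bool],
    fault_tolerance: int = 0,
    interval_s: float = 0.0,
) -> Tuple[List[List[str]], bool]:
    """Restart batches in order; return (attempted batches, ran to completion).

    The task ends as soon as the number of failed batches exceeds fault_tolerance.
    """
    attempted: List[List[str]] = []
    failures = 0
    for index, batch in enumerate(batches):
        if index > 0 and interval_s > 0:
            time.sleep(interval_s)  # Batch Interval between two batches (assumed seconds)
        attempted.append(batch)
        if not restart_batch(batch):
            failures += 1
            if failures > fault_tolerance:
                return attempted, False  # too many failed batches: the task ends
    return attempted, True


if __name__ == "__main__":
    print(regionserver_batch_size(12), regionserver_batch_size(120), regionserver_batch_size(450))
    # 1 2 4

    def dn2_fails(batch: List[str]) -> bool:
        """Pretend that only the batch containing dn-2 fails to restart."""
        return "dn-2" not in batch

    batches = [["dn-1"], ["dn-2"], ["dn-3"]]
    print(run_rolling_restart(batches, dn2_fails, fault_tolerance=0))
    # ([['dn-1'], ['dn-2']], False)
    print(run_rolling_restart(batches, dn2_fails, fault_tolerance=1))
    # ([['dn-1'], ['dn-2'], ['dn-3']], True)
```

With the default threshold of 0, the sketch stops at the first failed batch. With a threshold of 1, dn-3 is still restarted after dn-2 fails. This is the risk that the note on Batch Fault Tolerance Threshold describes.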

Reference

Table 2 describes the impact on the system during the rolling restart of components and instances.

| Component | Service Interruption | Impact on System |
|---|---|---|
| ClickHouse | During the rolling restart, there is no impact if submitted workloads can be completed within the timeout period (30 minutes by default). | Nodes undergoing a rolling restart reject all new requests. This affects single-replica services, ON CLUSTER operations, and workloads that depend on the instances being restarted. A request that is not completed within the timeout period (30 minutes by default) fails. |
| DBService | All services are normal during the rolling restart. | Alarms indicating a heartbeat interruption between the active and standby DBService nodes may be reported during the rolling restart. |
| Doris | Doris services are not interrupted during the rolling restart only if the following conditions are met: | During the rolling restart, total resources decrease, which reduces the maximum memory and CPU resources available to jobs. In extreme cases, jobs may fail due to insufficient resources. If a job times out (30 minutes by default), retry it. |
| Flink | All services are normal during the rolling restart. | The FlinkServer UI cannot be accessed during the rolling restart. |
| Flume | To prevent service interruptions and data loss, the following conditions must be met: | |
| Guardian | All services are normal during the rolling restart. | None |
| HBase | HBase read and write services are normal during the rolling restart. | |
| HDFS | | If a third-party client is used, its reliability during the rolling restart cannot be guaranteed. |
| HetuEngine | | |
| Hive | During the rolling restart, services whose execution time exceeds the decommissioning timeout interval may fail. | |
| Kafka | During the rolling restart, reads and writes on Kafka topics with multiple replicas are normal, but operations on topics with only a single replica are interrupted. | |
| KrbServer | All services are normal during the rolling restart. | Kerberos authentication in the cluster may take longer during the rolling restart. |
| LdapServer | All services are normal during the rolling restart. | Kerberos authentication in the cluster may take longer during the rolling restart. |
| MapReduce | None | |
| Ranger | All services are normal during the rolling restart. | The RangerAdmin, RangerKMS, and PolicySync instances of Ranger are deployed in active-active mode, so they can take turns providing services during the rolling restart. UserSync supports only a single instance, so users cannot be synchronized while it restarts. Because the user synchronization period is 5 minutes and UserSync restarts quickly, its restart has little impact on user synchronization. |
| Spark | Except for the listed items, other services are not affected. | |
| YARN | | During the rolling restart of YARN, tasks running on YARN may experience exceptions due to excessive retries. |
| ZooKeeper | ZooKeeper read and write operations are normal during the rolling restart. | |
