Creating an ELB Ingress on the Console

Prerequisites

- A workload is available in the cluster. An ingress provides network access to workloads, so at least one workload is required. If no workload is available, deploy one by referring to Creating a Workload.

- A Service has been configured for the workload.

Precautions

- Do not use the load balancer automatically created by an ingress for other resources. Otherwise, residual resources will remain after the ingress is deleted.

- After an ingress is created, you can only upgrade and maintain the configuration of each load balancer on the CCE console. Do not modify the configuration on the ELB console. If you modify the configuration on the ELB console, the ingress may be abnormal.

- The URL specified in an ingress forwarding policy must be the same as that used to access the backend Service. If the URL is not the same, 404 will be returned.

- Dedicated load balancers must be of the application type (HTTP/HTTPS) and must have a private IP address bound.

- If multiple ingresses are used to connect to the same ELB port in the same cluster, the listener configuration items (such as the certificate associated with the listener and the HTTP/2 attribute of the listener) are subject to the configuration of the first ingress.
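The last precaution can be illustrated with a small Python model. The model keeps whichever listener configuration is registered first for a port and ignores later requests for the same port. `ListenerConfig`, `LoadBalancer`, and `attach_ingress` are hypothetical names used only for illustration. They are not part of any CCE or ELB API.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass(frozen=True)
class ListenerConfig:
    """Listener-level settings that several ingresses on one port might each specify."""
    certificate: Optional[str] = None
    http2: bool = False


@dataclass
class LoadBalancer:
    """Hypothetical model: maps an ELB port to the listener configuration on that port."""
    listeners: Dict[int, ListenerConfig] = field(default_factory=dict)

    def attach_ingress(self, port: int, requested: ListenerConfig) -> ListenerConfig:
        """Return the listener configuration that actually takes effect for an ingress."""
        # setdefault stores `requested` only if the port has no listener yet, so the
        # first ingress on a port decides the configuration and later ones are ignored.
        return self.listeners.setdefault(port, requested)


lb = LoadBalancer()
lb.attach_ingress(443, ListenerConfig(certificate="cert-a", http2=True))
effective = lb.attach_ingress(443, ListenerConfig(certificate="cert-b", http2=False))
print(effective)  # ListenerConfig(certificate='cert-a', http2=True)
```

The second ingress asks for `cert-b` without HTTP/2. It still gets the first ingress's settings because both ingresses share the listener on port 443.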

Adding an ELB Ingress

An Nginx workload is used as an example to describe how to add an ELB ingress.

- Log in to the CCE console and click the cluster name to access the cluster console.

- In the navigation pane on the left, choose Services & Ingresses. On the Ingresses tab, click Create Ingress in the upper right corner.

- Configure the parameters.

- Name: Enter a name for the ingress, for example, ingress-demo.

- Load Balancer: Select the load balancer type and whether to use an existing load balancer or create a new one.

Only dedicated load balancers are allowed. A dedicated load balancer must be of the application (HTTP/HTTPS) type and work on a private network.

Select either Use existing or Auto create. For more information, see Table 1.

Table 1 Load balancer configurations

Use existing: Only the load balancers in the same VPC as the cluster can be selected. If no load balancer is available, click Create Load Balancer to create one on the ELB console.

Auto create: Configure the following parameters.

- Instance Name: Enter a load balancer name.

- Enterprise Project: This parameter is available only for enterprise users who have enabled an enterprise project. Enterprise projects facilitate project-level management and grouping of cloud resources and users.

- AZ: This parameter is available only for dedicated load balancers. You can create load balancers in multiple AZs to improve service availability. If disaster recovery is required, select multiple AZs.

- Frontend Subnet: This subnet is used to allocate IP addresses to load balancers for receiving traffic from clients.

- Backend Subnet: This subnet is used to allocate IP addresses to load balancers for routing traffic to pods.

- Network/Application-oriented Specifications: Elastic specifications apply to fluctuating traffic and are billed based on total traffic. Fixed specifications apply to stable traffic and are billed based on specifications.

- EIP: If you select Auto create, you can select a bandwidth billing option and set the bandwidth.

- Resource Tag: You can add resource tags to classify resources. You can create predefined tags on the TMS console. Predefined tags are available to all resources that support tags and help improve tag creation and resource migration efficiency. Resource tags are supported by clusters of v1.27.5-r0, v1.28.3-r0, and later versions.

- Listener: The ingress configures a listener for the load balancer. The listener receives requests and distributes them. After the configuration is complete, a listener is created on the load balancer. The default listener name is in the format of k8s_<Protocol>_<Port>, for example, k8s_HTTP_80.

- External Protocol: HTTP and HTTPS are available.

- External Port: Port for the load balancer to receive requests. You can specify any port.

- Access Control

- Allow all IP addresses: No access control is configured.

- Trustlist: Only the selected IP address group can access the load balancer.

- Blocklist: The selected IP address group cannot access the load balancer.

- Certificate Source: TLS secrets and ELB server certificates are supported.

- Server Certificate: When an HTTPS listener is added to the load balancer, bind a certificate to the load balancer to encrypt HTTPS data in transit.

- TLS secret: For details about how to create a TLS secret, see Creating a Secret.

- ELB server certificate: Use the certificate created in the ELB service.

If an HTTPS ingress already exists for the chosen port on the load balancer, the new HTTPS ingress must use the same certificate as the existing ingress, because a listener can have only one certificate. If two ingresses add different certificates to the same listener of the same load balancer, only the certificate added first takes effect.

- SNI: Server Name Indication (SNI) is an extension to TLS. It allows multiple TLS-compliant domain names to be accessed externally through the same IP address and port, with a different certificate for each domain name. If SNI is enabled, the client includes the requested domain name when it initiates the TLS handshake. After receiving the TLS request, the load balancer looks up the certificate for that domain name. If a matching certificate is found, the load balancer returns it for authentication. Otherwise, the default certificate (server certificate) is returned.

- SNI is available only when HTTPS is selected.

- You need to specify the domain name for the SNI certificate. Only one domain name can be specified for each certificate. Wildcard-domain certificates are supported.
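The access control and SNI behavior described above can be modeled in a few Python functions. This is a simplified, hypothetical sketch with these assumptions:

- The function names, the mode strings (`all`, `trustlist`, `blocklist`), and the dictionary of SNI certificates are illustrative only.
- The single-label wildcard rule follows common TLS practice rather than a documented ELB rule.

```python
import ipaddress
from typing import Dict, Optional, Sequence


def is_allowed(client_ip: str, mode: str, ip_group: Sequence[str]) -> bool:
    """Apply the listener's access control: 'all', 'trustlist', or 'blocklist'."""
    if mode == "all":
        return True
    addr = ipaddress.ip_address(client_ip)
    # The address is in the IP address group if it falls inside any listed CIDR block.
    in_group = any(addr in ipaddress.ip_network(cidr, strict=False) for cidr in ip_group)
    if mode == "trustlist":
        return in_group
    if mode == "blocklist":
        return not in_group
    raise ValueError(f"unknown access control mode: {mode}")


def select_certificate(server_name: Optional[str], sni_certs: Dict[str, str],
                       default_cert: str) -> str:
    """Choose the certificate returned during the TLS handshake."""
    if server_name:
        name = server_name.lower().rstrip(".")
        if name in sni_certs:  # exact domain match
            return sni_certs[name]
        labels = name.split(".")
        if len(labels) > 1:
            # A wildcard such as *.example.com covers exactly one leftmost label.
            wildcard = "*." + ".".join(labels[1:])
            if wildcard in sni_certs:
                return sni_certs[wildcard]
    # No SNI name was sent, or no SNI certificate matched: use the server certificate.
    return default_cert


def handle_connection(client_ip: str, server_name: Optional[str], *, mode: str,
                      ip_group: Sequence[str], sni_certs: Dict[str, str],
                      default_cert: str) -> Optional[str]:
    """Return the certificate served to the client, or None if access is denied."""
    if not is_allowed(client_ip, mode, ip_group):
        return None
    return select_certificate(server_name, sni_certs, default_cert)


certs = {"shop.example.com": "cert-shop", "*.example.com": "cert-wildcard"}
print(handle_connection("192.168.1.10", "api.example.com", mode="trustlist",
                        ip_group=["192.168.1.0/24"], sni_certs=certs,
                        default_cert="cert-default"))  # cert-wildcard
```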

- Advanced Options: The following options are available.

Transfer Listener Port Number: If this option is enabled, the listening port of the load balancer is passed to backend servers in an HTTP header of the request.

Transfer Port Number in the Request: If this option is enabled, the source port of the client is passed to backend servers in an HTTP header of the request.

Rewrite X-Forwarded-Host: If this option is enabled, X-Forwarded-Host is rewritten using the Host header of the client request and passed to backend servers.

Idle Timeout: Timeout for an idle client connection. If no request reaches the load balancer within the timeout duration, the load balancer closes the connection to the client and establishes a new connection when the next request arrives.

Request Timeout: Timeout for waiting for a request from a client. There are two cases:

- If the client does not send a request header to the load balancer within the timeout duration, the request is interrupted.

- If the interval between two consecutive request bodies reaching the load balancer exceeds the timeout duration, the connection is closed.

Response Timeout: Timeout for waiting for a response from a backend server. After a request is forwarded to a backend server, if the server does not respond within the timeout duration, the load balancer stops waiting and returns HTTP 504 Gateway Timeout.

HTTP2: Whether clients communicate with the load balancer over HTTP/2. HTTP/2 improves access performance between your application and the load balancer. However, the load balancer still uses HTTP/1.x to forward requests to backend servers.
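Of the timeouts above, Response Timeout is the easiest to illustrate. The sketch below models it with Python's asyncio:

- The backend call is wrapped in a deadline.
- If the deadline passes, the model stops waiting and reports 504.

`forward`, `slow_backend`, and `fast_backend` are hypothetical names. In practice, the load balancer enforces the timeout, not code that you write.

```python
import asyncio


async def forward(backend, response_timeout: float):
    """Forward a request to a backend coroutine and return (status, body)."""
    try:
        body = await asyncio.wait_for(backend(), timeout=response_timeout)
    except asyncio.TimeoutError:
        # The backend did not respond in time: stop waiting and report a gateway timeout.
        return 504, "Gateway Timeout"
    return 200, body


async def slow_backend():
    await asyncio.sleep(0.2)  # simulates a backend that takes 200 ms to respond
    return "hello"


async def fast_backend():
    return "hello"


print(asyncio.run(forward(fast_backend, response_timeout=0.5)))   # (200, 'hello')
print(asyncio.run(forward(slow_backend, response_timeout=0.05)))  # (504, 'Gateway Timeout')
```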

- Forwarding Policy: When the access address of a request matches a forwarding policy (which consists of a domain name and a URL, for example, 10.117.117.117:80/helloworld), the request is forwarded to the corresponding destination Service for processing. You can add multiple forwarding policies.

- Domain Name: Enter the domain name used for access. Ensure that the domain name has been registered and licensed. Once a forwarding policy is configured with a domain name specified, you must use the domain name for access.

- URL Matching Rule

- Prefix match: If the URL is set to /healthz, any URL that starts with /healthz can be accessed, for example, /healthz/v1 and /healthz/v2.

- Exact match: The URL can be accessed only when it is fully matched. For example, if the URL is set to /healthz, only /healthz can be accessed.

- RegEX match: The URL is matched based on the regular expression. If the regular expression is /[A-Za-z0-9_.-]+/test, all URLs that comply with this rule can be accessed, for example, /abcA9/test and /v1-Ab/test. Two regular expression standards are supported: POSIX and Perl.

- URL: access path, for example, /healthz.

The access path added here must exist in the backend application. Otherwise, requests cannot be forwarded.

For example, the default web root directory of the Nginx application is /usr/share/nginx/html. When adding /test to the ingress forwarding policy, ensure that your Nginx application contains /usr/share/nginx/html/test. Otherwise, 404 is returned.

- Destination Service: Select an existing Service or create a Service. Services that do not meet search criteria are automatically filtered out.

- Destination Service Port: Select the access port of the destination Service.
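The forwarding policy fields above (domain name, URL matching rule, URL, destination Service, and destination Service port) can be modeled as a small routing table. This sketch makes several assumptions:

- Prefix match is a plain string prefix. Kubernetes' `Prefix` pathType matches whole path elements, and ELB's exact behavior may differ.
- Regex match uses Python's `re` module, which is Perl-style rather than POSIX.
- Policies are evaluated in list order, which may differ from ELB's priority rules.

`ForwardingPolicy`, `url_matches`, and `route` are hypothetical names. A `None` result stands for the 404 a client would receive.

```python
import re
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple


@dataclass(frozen=True)
class ForwardingPolicy:
    domain: Optional[str]  # None means no domain name is configured
    url: str               # a path, or a regular expression for "regex"
    match: str             # "prefix", "exact", or "regex"
    service: str
    port: int


def url_matches(policy: ForwardingPolicy, path: str) -> bool:
    if policy.match == "exact":
        return path == policy.url
    if policy.match == "prefix":
        return path.startswith(policy.url)
    if policy.match == "regex":
        return re.fullmatch(policy.url, path) is not None
    raise ValueError(f"unknown match type: {policy.match}")


def route(policies: Sequence[ForwardingPolicy], host: str,
          path: str) -> Optional[Tuple[str, int]]:
    """Return (service, port) of the first matching policy, or None (HTTP 404)."""
    for policy in policies:
        # A policy with a domain name only matches requests that use that domain name.
        if policy.domain is not None and policy.domain != host:
            continue
        if url_matches(policy, path):
            return policy.service, policy.port
    return None


policies = [
    ForwardingPolicy("www.example.com", "/healthz", "exact", "svc-a", 80),
    ForwardingPolicy(None, "/[A-Za-z0-9_.-]+/test", "regex", "svc-b", 8080),
    ForwardingPolicy(None, "/api", "prefix", "svc-c", 80),
]
print(route(policies, "www.example.com", "/healthz"))  # ('svc-a', 80)
print(route(policies, "any.host", "/v1-Ab/test"))       # ('svc-b', 8080)
print(route(policies, "any.host", "/missing"))          # None
```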

- Set ELB:

- Algorithm: Three algorithms are available: weighted round robin, weighted least connections, and source IP hash.

- Weighted round robin: Requests are forwarded to different servers based on their weights, which indicate server processing performance. Backend servers with higher weights receive proportionately more requests, whereas equal-weighted servers receive the same number of requests. This algorithm is often used for short connections, such as HTTP services.

- Weighted least connections: In addition to the weight assigned to each server, this algorithm considers the number of connections each backend server is processing. Requests are forwarded to the server with the lowest connections-to-weight ratio. Building on least connections, it assigns each server a weight based on its processing capability. This algorithm is often used for persistent connections, such as database connections.

- Source IP hash: The source IP address of each request is hashed to obtain a hash key, and all backend servers are numbered. The key determines which server the client is allocated to. This distributes requests from different clients across servers while ensuring that requests from the same client are always forwarded to the same server. This algorithm applies to TCP connections without cookies.
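The three algorithms can be sketched in Python. For weighted round robin, the sketch uses the smooth weighted round robin variant popularized by Nginx. It is one possible implementation and not necessarily what ELB uses. The class and function names are hypothetical, and all weights are assumed to be positive integers. Source IP hashing uses SHA-256 so that the mapping stays stable across processes.

```python
import hashlib
from typing import Dict, List


class SmoothWeightedRoundRobin:
    """Smooth weighted round robin: each server is picked `weight` times per cycle."""

    def __init__(self, weights: Dict[str, int]):
        self.weights = dict(weights)
        self.current = {server: 0 for server in weights}
        self.total = sum(weights.values())

    def pick(self) -> str:
        # Raise every server's current weight by its configured weight...
        for server, weight in self.weights.items():
            self.current[server] += weight
        # ...choose the server with the highest current weight...
        best = max(self.current, key=self.current.get)
        # ...and lower it by the total so the other servers get their turn.
        self.current[best] -= self.total
        return best


def weighted_least_connections(weights: Dict[str, int], connections: Dict[str, int]) -> str:
    """Pick the server with the lowest connections-to-weight ratio."""
    return min(weights, key=lambda server: connections[server] / weights[server])


def source_ip_hash(client_ip: str, servers: List[str]) -> str:
    """Map a client IP to a server with a stable hash, so a client always hits the same server."""
    digest = hashlib.sha256(client_ip.encode()).digest()
    return servers[int.from_bytes(digest[:8], "big") % len(servers)]


rr = SmoothWeightedRoundRobin({"a": 5, "b": 1, "c": 1})
print([rr.pick() for _ in range(7)])  # ['a', 'a', 'b', 'a', 'c', 'a', 'a']
```

The smooth variant interleaves lighter servers between picks of heavier ones instead of sending five requests to `a` in a row. It still gives each server exactly its weight's share per cycle.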

- Sticky Session: This feature is disabled by default. There are two options:

- Load balancer cookie: Enter the Stickiness Duration, which ranges from 1 to 1440 minutes.
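Load balancer cookie stickiness can be modeled as follows:

- While the cookie is unexpired and still names a current backend, the same server is reused.
- Otherwise, the normal algorithm picks a server and a new cookie is issued that expires after the Stickiness Duration.

This is a hypothetical sketch. `sticky_pick`, the `(server, expiry)` cookie tuple, and the `choose` callback are assumptions, and the actual ELB cookie name and format are not described on this page.

```python
import time
from typing import Callable, List, Optional, Tuple

Cookie = Tuple[str, float]  # (backend server, expiry as a UNIX timestamp)


def sticky_pick(cookie: Optional[Cookie], servers: List[str], choose: Callable[[], str],
                duration_minutes: int, now: Optional[float] = None) -> Tuple[str, Cookie]:
    """Return (server, cookie), reusing the cookie's server while the cookie is valid."""
    if not 1 <= duration_minutes <= 1440:
        raise ValueError("Stickiness Duration must be 1 to 1440 minutes")
    now = time.time() if now is None else now
    if cookie is not None:
        server, expires_at = cookie
        # Reuse the server named in the cookie while it is unexpired and still a backend.
        if now < expires_at and server in servers:
            return server, cookie
    # No usable cookie: pick a server with the normal algorithm and issue a new cookie.
    server = choose()
    return server, (server, now + duration_minutes * 60)


server, cookie = sticky_pick(None, ["a", "b"], lambda: "b", 30, now=1000.0)
print(server, cookie)  # b ('b', 2800.0)
```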

- Health Check: Set the health check configuration of the load balancer. If this feature is enabled, configure the following parameters.

Protocol: When the protocol of the target Service is TCP, gRPC and HTTP health checks are supported.

- Check Path: This parameter is available only for HTTP and gRPC health checks. It specifies the URL for health checks. The check path must start with a slash (/) and contain 1 to 80 characters.

Port: By default, the service port (node port or container port of the Service) is used for health checks. You can also specify another port for health checks. If a port is specified, a service port named cce-healthz is added to the Service.

- Node Port: If this parameter is not specified, a random port is used. The value ranges from 30000 to 32767.

- Container Port: When a dedicated load balancer has an elastic network interface associated, the container port is used for health checks. The value ranges from 1 to 65535.

Check Period (s): Maximum interval between health checks. The value ranges from 1 to 50.

Timeout (s): Maximum timeout duration for each health check. The value ranges from 1 to 50.

Max. Retries: Maximum number of health check retries. The value ranges from 1 to 10.
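The value ranges in the health check settings above can be checked before you submit the form. The following Python sketch encodes only the ranges stated on this page. `HealthCheck` and `validate` are hypothetical names, and the values 5, 3, and 3 in the example are arbitrary.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class HealthCheck:
    """Hypothetical container for the health check fields described on this page."""
    protocol: str                     # e.g. "HTTP" or "gRPC"
    period_s: int                     # Check Period (s)
    timeout_s: int                    # Timeout (s)
    max_retries: int                  # Max. Retries
    check_path: Optional[str] = None  # only used for HTTP and gRPC checks
    node_port: Optional[int] = None
    container_port: Optional[int] = None


def validate(hc: HealthCheck) -> List[str]:
    """Return a list of problems; an empty list means all values are within range."""
    errors: List[str] = []
    if hc.protocol in ("HTTP", "gRPC"):
        path = hc.check_path
        if not path or not path.startswith("/") or len(path) > 80:
            errors.append("check path must start with / and contain 1 to 80 characters")
    ranges = (
        ("check period", hc.period_s, 1, 50),
        ("timeout", hc.timeout_s, 1, 50),
        ("max retries", hc.max_retries, 1, 10),
    )
    for name, value, low, high in ranges:
        if not low <= value <= high:
            errors.append(f"{name} must be between {low} and {high}")
    if hc.node_port is not None and not 30000 <= hc.node_port <= 32767:
        errors.append("node port must be between 30000 and 32767")
    if hc.container_port is not None and not 1 <= hc.container_port <= 65535:
        errors.append("container port must be between 1 and 65535")
    return errors


print(validate(HealthCheck("HTTP", period_s=5, timeout_s=3, max_retries=3,
                           check_path="/healthz")))  # []
```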


- Operation: Click Delete to delete the configuration.

- Annotation: Ingresses provide some advanced CCE functions, which are implemented using annotations. Annotations are used when you create an ingress using kubectl. For details, see Creating an Ingress - Automatically Creating a Load Balancer and Creating an Ingress - Interconnecting with an Existing Load Balancer.

- Click OK. After the ingress is created, it is displayed in the ingress list.

On the ELB console, you can view the load balancer automatically created by CCE. The default name is cce-lb-ingress.UID. Click the load balancer name to access its details page. On the Listeners tab, view the route settings of the ingress, such as the URL, listener port, and backend port.

After an ingress is created, upgrade and maintain the selected load balancer on the CCE console. Do not modify the configuration on the ELB console. Otherwise, the ingress service may be abnormal.

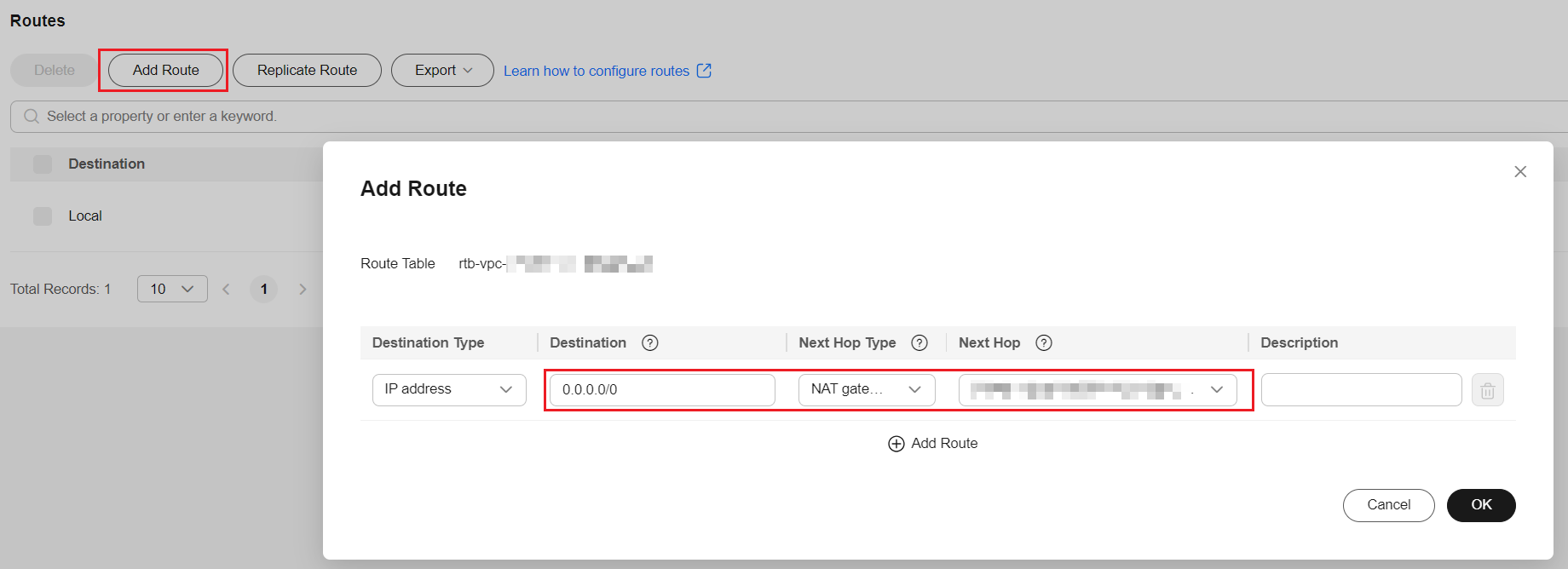

Figure 1 ELB routing configuration

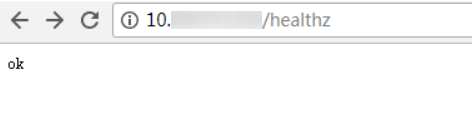

- Access the /healthz interface of the workload, for example, the defaultbackend workload.

- Obtain the access address of the /healthz interface of the workload. The access address consists of the load balancer IP address, external port, and mapping URL, for example, 10.**.**.**:80/healthz.

- Enter the URL of the /healthz interface, for example, http://10.**.**.**:80/healthz, in the address box of the browser to access the workload.

Figure 2 Accessing the /healthz interface of defaultbackend
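The access address in the steps above combines the load balancer IP address, the external port, and the mapping URL. The Python sketch below builds such a URL and can optionally request it with the standard library's `urllib`. `healthz_url` and `get_status` are hypothetical helper names. The address 192.0.2.10 is a placeholder from the IPv4 documentation range and stands in for the masked address on this page.

```python
import urllib.request


def healthz_url(lb_ip: str, external_port: int, path: str = "/healthz",
                scheme: str = "http") -> str:
    """Build the access address: load balancer IP + external port + mapping URL."""
    return f"{scheme}://{lb_ip}:{external_port}{path}"


def get_status(url: str, timeout: float = 5.0) -> int:
    """Send a GET request and return the HTTP status code.

    urlopen raises urllib.error.HTTPError for error statuses such as 404 (for example,
    when no forwarding policy matches) and URLError if the address is unreachable.
    """
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return response.status


url = healthz_url("192.0.2.10", 80)  # placeholder IP, replace with your load balancer IP
print(url)                           # http://192.0.2.10:80/healthz
# print(get_status(url))             # uncomment when pointing at a real load balancer
```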

Related Operations

The Kubernetes ingress structure does not contain the property field. Therefore, ingresses created by calling the API through client-go do not contain the property field either. CCE provides a solution to ensure compatibility with Kubernetes client-go. For details, see How Can I Achieve Compatibility Between Ingress's property and Kubernetes client-go?
