ALM-38001 Insufficient Kafka Disk Capacity

Description

The system checks the Kafka disk usage every 60 seconds and compares it with the alarm threshold, which has a default value. This alarm is generated when the disk usage exceeds the threshold.

You can change the threshold in O&M > Alarm > Thresholds. Under the service list, choose Kafka > Disk > Broker Disk Usage (Broker) and change the threshold.

When the Trigger Count is 1, this alarm is cleared when the Kafka disk usage is less than or equal to the threshold. When the Trigger Count is greater than 1, this alarm is cleared when the Kafka disk usage is less than or equal to 90% of the threshold.
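The raise and clear rule above works like a hysteresis band when Trigger Count is greater than 1. The following is a minimal bash sketch of that rule for a single 60-second check. The function name alarm_state is hypothetical and is not a FusionInsight tool. The sketch makes one simplifying assumption: a single sample above the threshold raises the alarm. It ignores any other effect Trigger Count may have on raising the alarm.

```shell
#!/usr/bin/env bash
# Hypothetical helper illustrating the documented raise/clear rule.
# alarm_state USAGE THRESHOLD TRIGGER_COUNT RAISED
#   USAGE, THRESHOLD: disk usage and threshold in percent (decimals allowed)
#   TRIGGER_COUNT:    the Trigger Count configured for the threshold
#   RAISED:           "yes" if the alarm is currently active, otherwise "no"
# Prints "raised" or "cleared" for one check.
alarm_state() {
  awk -v u="$1" -v t="$2" -v n="$3" -v r="$4" 'BEGIN {
    u += 0; t += 0; n += 0
    if (r != "yes") {
      # Inactive alarm: raise it when usage is greater than the threshold.
      # (Simplification: one sample above the threshold is assumed enough.)
      if (u > t) state = "raised"; else state = "cleared"
    } else {
      # Active alarm: clear at the threshold when Trigger Count is 1,
      # otherwise only at 90% of the threshold.
      if (n == 1) clear_at = t; else clear_at = 0.9 * t
      if (u <= clear_at) state = "cleared"; else state = "raised"
    }
    print state
  }'
}
```

For example, with a threshold of 85% and a Trigger Count of 2, an active alarm stays raised at 80% usage, because the clearing point is 76.5%.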

Attribute

| Alarm ID | Alarm Severity | Automatically Cleared |
|---|---|---|
| 38001 | Major | Yes |

Parameters

| Name | Meaning |
|---|---|
| Source | Specifies the cluster for which the alarm is generated. |
| ServiceName | Specifies the service for which the alarm is generated. |
| RoleName | Specifies the role for which the alarm is generated. |
| HostName | Specifies the host for which the alarm is generated. |
| PartitionName | Specifies the disk partition where the alarm is generated. |
| Trigger Condition | Specifies the threshold triggering the alarm. If the current indicator value exceeds this threshold, the alarm is generated. |

Impact on the System

Kafka data write operations are affected.

Possible Causes

- The number or size of the disks that store Kafka data cannot accommodate the current service traffic, so the disk usage reaches the upper limit.

- The data retention time is too long, so the data disk usage reaches the upper limit.

- The service plan does not distribute data evenly, so the usage of some disks reaches the upper limit.

Procedure

Check the disk configuration of Kafka data.

1. On the FusionInsight Manager portal, choose O&M > Alarm > Alarms.

2. In the alarm list, locate the alarm and obtain HostName from Location.

3. Choose Cluster > Name of the desired cluster > Hosts.

4. In the host list, click the host name obtained in Step 2.

5. Check whether the Disk area contains the partition name in the alarm.

   - If yes, go to Step 6.

   - If no, manually clear the alarm. No further operation is required.

6. In the Disk area, check whether the usage of the disk partition in the alarm has reached 100%.

   - If yes, handle the alarm by following the instructions in Related Information.

   - If no, go to Step 7.
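The partition usage check above can also be done from a shell on the host. The following is a minimal bash sketch, assuming you are logged in to the host from the alarm. It uses `df -P` instead of `df -lh` because the POSIX output format keeps each file system on one line, which makes the Use% column easy to parse. The function names usage_of and check_partition are hypothetical.

```shell
#!/usr/bin/env bash
# usage_of PATH: print the Use% (integer, without the "%" sign) of the
# file system that contains PATH.
usage_of() {
  df -P -- "$1" | awk 'NR == 2 { sub(/%$/, "", $5); print $5 }'
}

# check_partition PATH: mirror the 100% decision for one data partition.
check_partition() {
  local pct
  pct=$(usage_of "$1")
  [ -n "$pct" ] || return 1   # df failed or printed nothing usable
  if [ "$pct" -ge 100 ]; then
    echo "$1: ${pct}% used, follow Related Information"
  else
    echo "$1: ${pct}% used, continue with Step 7"
  fi
}
```

For example, `check_partition "${BIGDATA_DATA_HOME}/kafka/data1"` reports the usage of the file system that holds that Kafka data directory.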

Check the Kafka data storage duration.

7. Choose Cluster > Name of the desired cluster > Services > Kafka > Configurations.

8. Check whether disk.adapter.enable is set to true.

9. Set disk.adapter.enable to true, and check whether adapter.topic.min.retention.hours is set properly.

   - If yes, go to Step 10.

   - If no, adjust the data retention period based on service requirements.

   When disk auto-adaptation is enabled, some historical data of the specified topics is deleted. If the retention period of some topics must not be adjusted, click All Configurations and add those topics to the value of disk.adapter.topic.blacklist.

10. Wait 10 minutes and check whether the usage of the faulty disk has decreased.

    - If yes, wait until the alarm is cleared.

    - If no, go to Step 11.

Check the Kafka data plan.

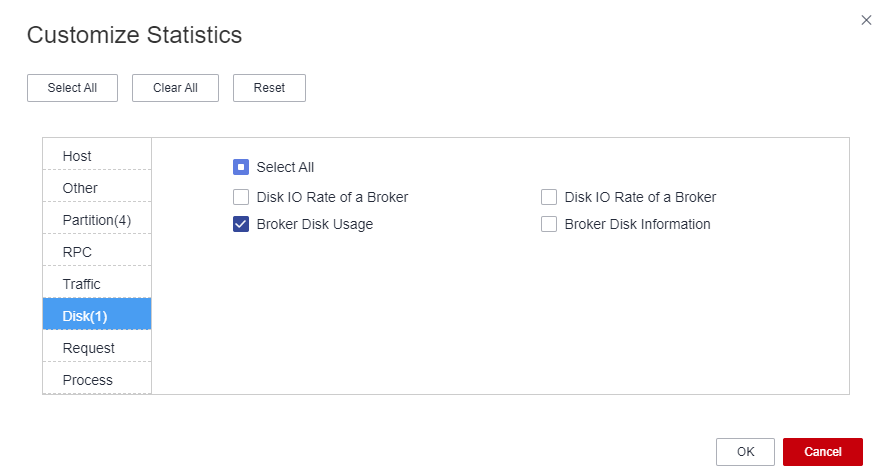

- In the Instance area, click Broker. In the Real Time area of Broker, Click the drop-down menu in the Chart area and choose Customize to customize monitoring items.

- In the dialog box, select Disk > Broker Disk Usage and click OK.

The Kafka disk usage information is displayed.

Figure 1 Broker Disk Usage

- View the information in Step 12 to check whether there is only the disk parathion for which the alarm is generated in Step 2.

- Perform disk planning and mount a new disk again. Go to the Instance Configurations page of the node for which the alarm is generated, modify log.dirs, add other disk directories, and restart the Kafka instance.

If the current topic has multiple replicas during the instance rolling restart, there is no impact on the Kafka service. Otherwise, the Kafka service will be unavailable during the restart, and upper-layer services that depend on the service will be affected.

- Determine whether to shorten the data retention time configured on Kafka based on service requirements and service traffic.

- Log in to FusionInsight Manager, select Cluster > Name of the desired cluster > Services > Kafka > Configurations, and click All Configurations. In the search box on the right, enter log.retention.hours. The value of the parameter indicates the default data retention time of the topic. You can change the value to a smaller one.

- For a topic whose data retention time is configured alone, the modification of the data retention time on the Kafka Service Configuration page does not take effect.

- Check whether the usage of some disks reaches the upper limit due to unreasonable configuration of the partitions of some topics. For example, the number of partitions configured for a topic with large data volume is smaller than the number of disks. In this case, the data is not evenly allocated to disks.

If you do not know which topics have a large amount of service data, perform the following steps:

- In the Kafka client CLI, run the following command to perform partition capacity expansion for the topic:

kafka-topics.sh --zookeeper "ZooKeeper IP address:2181/kafka" --alter --topic "Topic name" --partitions="New number of partitions"

- You are advised to set the new number of partitions to a multiple of the number of Kafka data disks.

- The step may not quickly clear the alarm, and you need to modify the data retention time in Step 11 to gradually balance data allocation.

- Determine whether to perform capacity expansion.

You are advised to perform capacity expansion for Kafka when the current disk usage exceeds 80%.

- Expand the disk capacity and check whether the alarm is cleared after capacity expansion.

- If yes, no further action is required.

- If no, go to Step 22.

- Check whether the alarm is cleared.

- If yes, no further action is required.

- If no, go to Step 22.
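The advice to set the partition count to a multiple of the number of data disks can be made concrete with a small calculation. Kafka can only increase a topic's partition count, so the new value must be greater than the current one. The following is a minimal bash sketch that returns the smallest multiple of the disk count that is strictly greater than the current partition count. The function name next_partition_count is hypothetical.

```shell
#!/usr/bin/env bash
# next_partition_count CURRENT DISKS
# Print the smallest multiple of DISKS that is strictly greater than CURRENT.
next_partition_count() {
  local current=$1 disks=$2
  if [ "$disks" -le 0 ]; then
    echo "disk count must be positive" >&2
    return 1
  fi
  echo $(( (current / disks + 1) * disks ))
}

# Usage (placeholders as in the command above):
#   new=$(next_partition_count 3 4)
#   kafka-topics.sh --zookeeper "ZooKeeper IP address:2181/kafka" --alter \
#     --topic "Topic name" --partitions="$new"
```

Adding partitions does not move data that already exists. Only new messages can land in the new partitions. This is why the partition change alone clears the alarm slowly.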

Collect fault information.

22. On the FusionInsight Manager portal, choose O&M > Log > Download.

23. Select Kafka in the required cluster from the Service drop-down list.

24. Click the icon in the upper right corner, and set Start Date and End Date for log collection to 10 minutes before and 10 minutes after the alarm generation time, respectively. Then, click Download.

25. Send the collected fault logs to O&M personnel for help.
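The log collection window above is simply the alarm generation time minus and plus 10 minutes. The following is a minimal bash sketch that prints both values, assuming GNU date (for the `-d` option). It also assumes the alarm time is given in the host's local time zone. The function name log_window is hypothetical.

```shell
#!/usr/bin/env bash
# log_window "ALARM_TIME": print the Start Date and End Date for log
# collection, 10 minutes (600 s) before and after the alarm time.
log_window() {
  local ts
  ts=$(date -d "$1" +%s) || return 1
  date -d "@$((ts - 600))" '+Start Date: %Y-%m-%d %H:%M:%S'
  date -d "@$((ts + 600))" '+End Date:   %Y-%m-%d %H:%M:%S'
}
```

For example, `log_window "2024-05-01 12:00:00"` prints a window from 11:50:00 to 12:10:00 on the same day.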

Alarm Clearing

After the fault is rectified, the system automatically clears this alarm.

Related Information

1. Log in to FusionInsight Manager, choose Cluster > Name of the desired cluster > Services > Kafka > Instance, and stop the Broker instance whose status is Restoring. Record the management IP address of the node where the Broker instance resides, and record its broker.id. To obtain broker.id, click the role name, select All Configurations on the Configurations page, and search for the broker.id parameter.

2. Log in to the recorded management IP address as user root, and run the df -lh command to find the mounted directory whose disk usage is 100%, for example, ${BIGDATA_DATA_HOME}/kafka/data1.

3. Go to the directory and run the du -sh * command to view the size of each file and subdirectory. Check whether the directory contains files other than the kafka-logs directory, and determine whether these files can be deleted or migrated.

4. Go to the kafka-logs directory, run the du -sh * command, and select a partition directory to be moved. Partition directories are named Topic name-Partition ID. Record the topic and partition.

5. Modify the recovery-point-offset-checkpoint and replication-offset-checkpoint files in the kafka-logs directory as follows:

   - Decrease the number in the second line of each file by one. (When removing multiple partition directories, decrease it by the number of directories to be removed.)

   - Delete the line of the partition to be removed. (Each line has the format "Topic name Partition ID Offset". Save the line before deleting it, because it must later be added to the file of the same name in the destination directory.)

6. Modify the recovery-point-offset-checkpoint and replication-offset-checkpoint files in the destination data directory, for example, ${BIGDATA_DATA_HOME}/kafka/data2/kafka-logs, as follows:

   - Increase the number in the second line of each file by one. (When moving multiple partition directories, increase it by the number of directories to be moved.)

   - Append the line of the partition to be moved to the end of the file. (Each line has the format "Topic name Partition ID Offset". You can copy the line saved in Step 5.)

7. Move the partition directory to the destination directory. Then run the chown omm:wheel -R Partition directory command to change the owner and group of the partition directory.

8. Log in to FusionInsight Manager, choose Cluster > Name of the desired cluster > Services > Kafka > Instance, and start the Broker instance.

9. Wait 5 to 10 minutes and check whether the health status of the Broker instance is Normal.

   - If yes, after the alarm is cleared, resolve the insufficient disk capacity by following the handling procedure of "ALM-38001 Insufficient Kafka Disk Capacity".

   - If no, contact the O&M personnel.
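The checkpoint edits described above can be scripted. One edit removes a partition's line from the source files and decrements their count. The other appends that line to the destination files and increments their count. The following is a minimal bash sketch, assuming the checkpoint layout implied by those steps: a version number on line 1, the entry count on line 2, then one "Topic name Partition ID Offset" line per partition. The function names are hypothetical and are not FusionInsight tools. Run them only while the Broker instance is stopped, and back up both files first.

```shell
#!/usr/bin/env bash
# split_partition_dir DIR: "Topic name-Partition ID" -> "topic partition".
# Splits at the last hyphen because topic names may contain hyphens.
split_partition_dir() {
  local name=${1%/}
  name=${name##*/}
  printf '%s %s\n' "${name%-*}" "${name##*-}"
}

# remove_entry FILE TOPIC PARTITION: delete the partition's line, decrement
# the count on line 2, and print the removed line so it can be re-added.
remove_entry() {
  local file=$1 topic=$2 part=$3 line tmp
  line=$(awk -v t="$topic" -v p="$part" 'NR > 2 && $1 == t && $2 == p' "$file")
  [ -n "$line" ] || { echo "no entry for $topic-$part in $file" >&2; return 1; }
  tmp=$(mktemp) || return 1
  if awk -v t="$topic" -v p="$part" '
       NR == 2 { print $1 - 1; next }
       NR > 2 && $1 == t && $2 == p { next }
       { print }' "$file" > "$tmp"; then
    cat "$tmp" > "$file"   # rewrite in place to keep owner and permissions
  else
    rm -f "$tmp"; return 1
  fi
  rm -f "$tmp"
  printf '%s\n' "$line"
}

# add_entry FILE LINE: append the saved line and increment the count.
add_entry() {
  local file=$1 line=$2 tmp
  tmp=$(mktemp) || return 1
  if awk 'NR == 2 { print $1 + 1; next } { print }' "$file" > "$tmp" &&
     printf '%s\n' "$line" >> "$tmp"; then
    cat "$tmp" > "$file"
  else
    rm -f "$tmp"; return 1
  fi
  rm -f "$tmp"
}
```

A typical call loops over both file names. In the loop below, `$SRC` and `$DST` are placeholders for the source and destination kafka-logs directories:
`for f in recovery-point-offset-checkpoint replication-offset-checkpoint; do saved=$(remove_entry "$SRC/$f" topic 3) && add_entry "$DST/$f" "$saved"; done`.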
