Adding Fields

Scenario

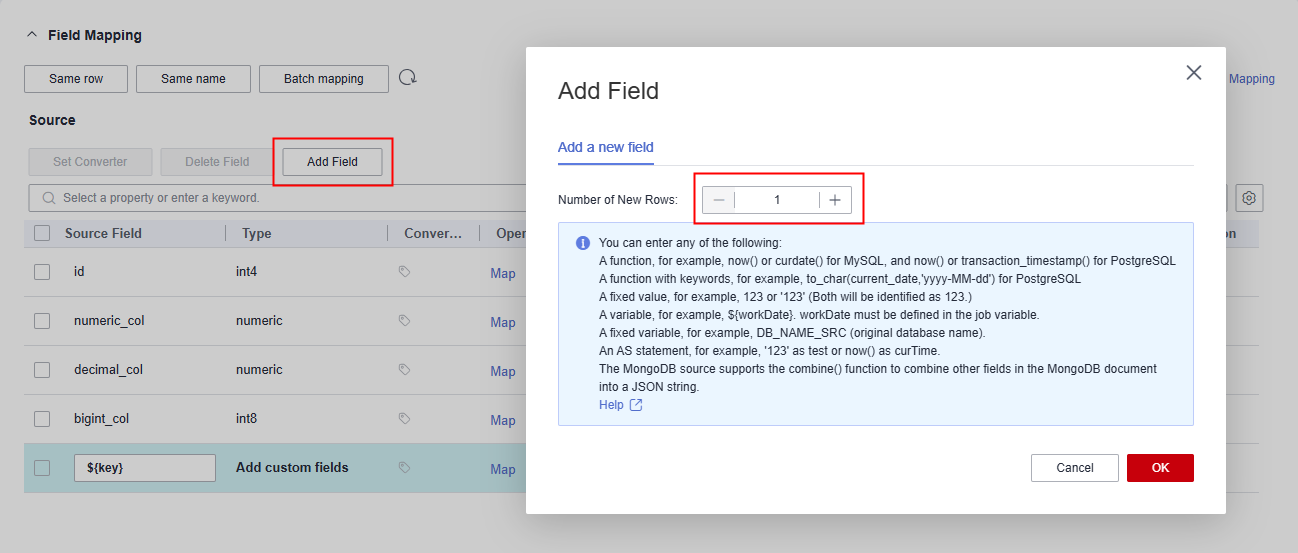

- After configuring job parameters, configure field mapping. To add a custom field, click Add Field on the Field Mapping page.

- If files are migrated between FTP, SFTP, OBS, and HDFS and File Format of the migration source is set to Binary, the files are transferred directly without field mapping.

- In other scenarios, CDM automatically maps fields of the source table and the destination table. You need to check whether the mapping and time format are correct. For example, check whether the source field type can be converted into the destination field type.

Currently, the following field types are supported:

Function

- Functions, for example, now() or transaction_timestamp() for PostgreSQL

- Functions with keywords, for example, to_char(current_date,'yyyy-MM-dd') for PostgreSQL

- For a MongoDB source: a JSON string consisting of the combine() function and other fields in a MongoDB document
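To make the expected values of the PostgreSQL function examples concrete, the sketch below approximates them in Python. It is only an illustration, assuming the functions are evaluated by the PostgreSQL source: pg_now and pg_to_char_current_date are hypothetical names, and the values actually written to the destination come from the database.

```python
from datetime import date, datetime, timezone
from typing import Optional


def pg_now() -> datetime:
    """Rough analogue of PostgreSQL now() / transaction_timestamp().

    PostgreSQL returns the start time of the current transaction in the
    session time zone; this sketch returns the current time in UTC.
    """
    return datetime.now(timezone.utc)


def pg_to_char_current_date(today: Optional[date] = None) -> str:
    """Analogue of to_char(current_date,'yyyy-MM-dd'): the date as yyyy-MM-dd text."""
    return (today or date.today()).strftime("%Y-%m-%d")


if __name__ == "__main__":
    print(pg_now().isoformat())
    print(pg_to_char_current_date(date(2024, 3, 5)))  # 2024-03-05
```

Note the type difference: now() yields a timestamp value, while the to_char() example yields a string, so the destination field type should match whichever form you choose.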

Variable value

Variable values, for example, ${workDate} (workDate must be defined as a job variable)

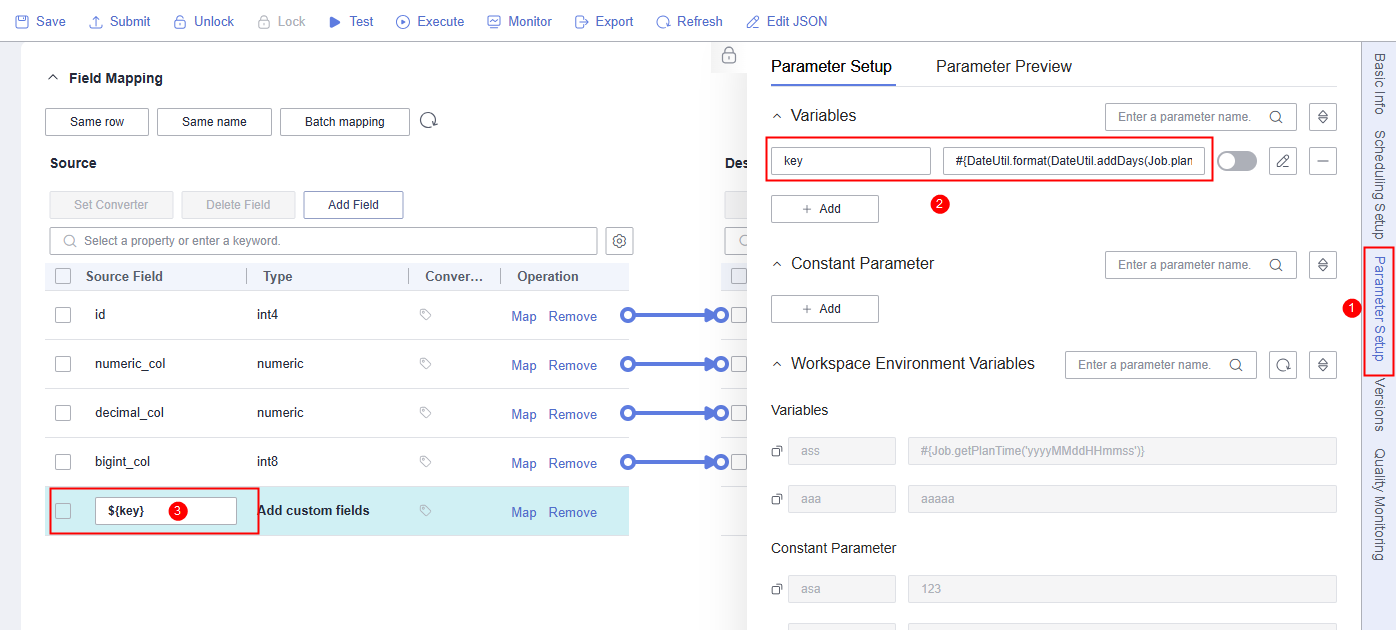

You can use the variables and constants configured in job parameters. For example, if a job parameter named key has been set to #{DateUtil.format(DateUtil.addDays(Job.planTime,-1),"yyyy-MM-dd")}, you can set a custom field to ${key} to use the value of that parameter.

For how to set job parameters, see EL Expression Reference.
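The job-parameter example can be emulated in Python to see what ${key} resolves to. This is a sketch under assumptions, not CDM's implementation: previous_day approximates the EL expression by subtracting one day from a hypothetical planned time and formatting it as yyyy-MM-dd, and resolve_custom_field uses Python's string.Template, whose ${name} placeholder syntax happens to match the custom field syntax.

```python
from datetime import datetime, timedelta
from string import Template


def previous_day(plan_time: datetime) -> str:
    """Approximates #{DateUtil.format(DateUtil.addDays(Job.planTime,-1),"yyyy-MM-dd")}."""
    return (plan_time - timedelta(days=1)).strftime("%Y-%m-%d")


def resolve_custom_field(field_value: str, job_params: dict) -> str:
    """Replace ${name} references with job parameter values (hypothetical helper).

    Template.substitute raises KeyError when a referenced name is missing,
    similar in spirit to the rule that the variable must be defined in the job.
    """
    return Template(field_value).substitute(job_params)


if __name__ == "__main__":
    plan_time = datetime(2024, 3, 1, 2, 0)  # hypothetical planned run time
    params = {"key": previous_day(plan_time)}
    print(resolve_custom_field("${key}", params))  # 2024-02-29 (2024 is a leap year)
```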

Fixed value

- Fixed values, such as 123 and '123' (both are treated as the string 123)

- Fixed variable values for JDBC, for example, DB_NAME_SRC (source database name)
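The rule that 123 and '123' produce the same string can be illustrated with a small normalizer. This is a hypothetical helper written for illustration, assuming a fixed value is a plain literal optionally wrapped in single quotes; it is not how CDM parses the field.

```python
def fixed_value(literal: str) -> str:
    """Hypothetical normalizer: 123 and '123' both yield the string "123"."""
    literal = literal.strip()
    # Drop one pair of surrounding single quotes, if present.
    if len(literal) >= 2 and literal[0] == literal[-1] == "'":
        return literal[1:-1]
    return literal


if __name__ == "__main__":
    print(fixed_value("123") == fixed_value("'123'"))  # True
```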

Expression

AS statements, such as '123' as test and now() as curTime
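In SQL terms, an AS statement adds a computed column with an alias to a query result. The sketch below shows that effect with Python's built-in sqlite3 module, assuming (for illustration only) that a custom expression field behaves like an extra item in the SELECT list of the source query. select_with_custom_fields and the orders table are hypothetical, and because SQLite has no now(), CURRENT_TIMESTAMP stands in for it.

```python
import sqlite3

# Hypothetical source table standing in for the table selected in a CDM job.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, amount REAL)")
conn.executemany("INSERT INTO orders VALUES (?, ?)", [(1, 9.5), (2, 20.0)])


def select_with_custom_fields(table: str, columns: list, expressions: list) -> str:
    """Append 'expr AS alias' items to the SELECT list of a source query.

    Identifiers are interpolated directly, so only pass trusted names.
    """
    select_list = ", ".join(columns + expressions)
    return f"SELECT {select_list} FROM {table}"


sql = select_with_custom_fields(
    "orders", ["id", "amount"], ["'123' AS test", "CURRENT_TIMESTAMP AS curTime"]
)
cursor = conn.execute(sql)
print([d[0] for d in cursor.description])  # ['id', 'amount', 'test', 'curTime']
for row in cursor.fetchall():
    print(row)  # each row carries the constant '123' in the test column
```

The alias after AS becomes the column name, which is the name you would then map to a destination field.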
