Planning HDFS Capacity

Scenario

In HDFS, DataNodes store user data as blocks, and the NameNode generates file objects that map each file, directory, and block.

The file objects on the NameNode consume memory, and memory consumption increases linearly with the number of file objects. As more files and directories are stored on the DataNodes, the number of file objects on the NameNode grows, and so does the memory they consume. Eventually the existing hardware may no longer meet service requirements, and the cluster becomes difficult to scale out.

Capacity planning for an HDFS cluster that stores a large number of files means planning the capacity specifications of the NameNode and DataNodes and setting parameters according to those plans.

During the configuration, check whether alarms 14007, 14008, and 14009 are generated. If yes, change the alarm thresholds based on service requirements.

Concepts

- Name Quotas

The name quota is a hard limit on the number of file and directory names in a directory tree.

- File and directory creation fails if the quota would be exceeded. Renaming a directory does not change the name quota of the directory, and a rename operation fails if it would result in a quota violation. A quota can still be set on a directory even if the directory already violates the new quota. Newly created directories have no associated quota. A directory with a quota of 1 must remain empty because the directory itself counts against its own quota.

- Quotas are persisted with fsimage. When the system is started, if the fsimage is in violation of the quota (possibly due to fsimage changes), the system will report an exception. Setting or deleting a quota generates a log record.

- Space Quotas

The space quota is a hard limit on the number of bytes used by files in a directory tree.

- If the configured quota does not allow writing a full block, the block allocation fails. Each replica of a data block counts against the quota. Renaming a directory has no effect on the original space quota of the directory. The renaming operation will fail if the operation results in a quota violation. Files can still be created when the quota is 0, but no block can be added to the files. Directories do not use the host file system space and do not count against the space quota. The host file system space used to store file metadata does not count against the quota.

- Quotas are persisted with fsimage. When the system is started, if the fsimage is in violation of the quota (possibly due to fsimage changes), the system will report an exception. Setting or deleting a quota generates a log record.

- Storage Type Quotas

The storage type quota is a hard limit on the use of a specific storage type (SSD, DISK, or ARCHIVE) by files in a directory tree. It works similarly to space quotas in many ways but provides fine-grained control over cluster space usage. Before setting a storage type quota for a directory, you must configure a storage policy for the directory first. In this way, files can be stored in different storage types based on the storage policy. For more information, see HDFS Storage Policy Documentation.

You can combine storage type quotas with space quotas and name quotas to effectively manage cluster storage usage. For example:

- For directories configured with storage policies, the administrator should set storage type quotas for storage types with limited resources (such as SSD) and leave the quotas for other storage types and the overall space quota at less restrictive limits or at the default of no limit. HDFS deducts usage from both the quota of the target storage type, as determined by the storage policy, and the overall space quota.

- For directories without storage policies configured, the administrator should not configure storage type quotas. You can configure storage type quotas even if a specific storage type is unavailable (or available but the storage type information is incorrectly configured). However, storage type quota in this case may not be effective due to unavailable or inaccurate storage type. Therefore, the overall space quota is recommended.

- For clusters with DISK as the primary storage type, the storage type quota is not recommended.

For more information, see HDFS Quotas Guide.
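The three quota types described above are set with the standard hdfs dfsadmin commands. The following is a minimal sketch that only builds the command lines. The helper names and the /data/project directory are hypothetical, and running the commands requires HDFS administrator rights on a node where the client is installed.

```python
from typing import List, Optional


def set_name_quota_cmd(directory: str, max_names: int) -> List[str]:
    """Build the command that sets a name quota on a directory."""
    if max_names < 1:
        # The directory itself counts against the quota, so 1 is the minimum.
        raise ValueError("name quota must be a positive integer")
    return ["hdfs", "dfsadmin", "-setQuota", str(max_names), directory]


def set_space_quota_cmd(directory: str, max_bytes: int,
                        storage_type: Optional[str] = None) -> List[str]:
    """Build the command that sets a space quota in bytes.

    If storage_type (for example "SSD") is given, the command sets a
    storage type quota instead of the overall space quota.
    """
    if max_bytes < 0:
        raise ValueError("space quota must not be negative")
    cmd = ["hdfs", "dfsadmin", "-setSpaceQuota", str(max_bytes)]
    if storage_type is not None:
        cmd += ["-storageType", storage_type]
    cmd.append(directory)
    return cmd


def show_quota_cmd(directory: str) -> List[str]:
    """Build the command that reports quotas and current usage."""
    return ["hdfs", "dfs", "-count", "-q", "-v", directory]


if __name__ == "__main__":
    # Hypothetical directory used only for illustration.
    for cmd in (set_name_quota_cmd("/data/project", 100000),
                set_space_quota_cmd("/data/project", 10 * 1024**4),
                set_space_quota_cmd("/data/project", 1024**4, "SSD"),
                show_quota_cmd("/data/project")):
        print(" ".join(cmd))
```

To apply a command rather than print it, pass the list to subprocess.run(cmd, check=True). Because each replica counts against the space quota, the byte value should be sized for the replicated data, not the logical file size.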

NameNode Capacity Specifications and Parameters

- NameNode capacity specifications

Each file object on the NameNode corresponds to a file, directory, or block on the DataNode.

A file occupies at least one block. The default size of each block is 134,217,728 bytes (128 MB). You can set the block size by setting the dfs.blocksize parameter in the Client installation directory/HDFS/hadoop/etc/hadoop/hdfs-site.xml file. For details about the parameter, see Table 2.

By default, a file no larger than 128 MB occupies exactly one block. A larger file occupies the file size divided by 128 MB, rounded up to a whole number of blocks (Number of occupied blocks = File size in MB/128, rounded up). Directories do not occupy any blocks.

Based on dfs.blocksize, the number of file objects on the NameNode is calculated as follows:

Table 1 Number of NameNode file objects

| Size of a File | Number of File Objects |
| --- | --- |
| < 128 MB | 1 (File) + 1 (Block) = 2 |
| > 128 MB (for example, 128 GB) | 1 (File) + 1,024 (128 GB/128 MB = 1,024 blocks) = 1,025 |
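The calculation in Table 1 can be sketched as a short function. The function names are illustrative only, and the sketch assumes that a partially filled final block still counts as one block.

```python
DEFAULT_BLOCK_SIZE = 134_217_728  # default dfs.blocksize, 128 MB in bytes


def blocks_for_file(file_size_bytes: int,
                    block_size: int = DEFAULT_BLOCK_SIZE) -> int:
    """Number of blocks a file occupies (at least one, rounded up)."""
    if file_size_bytes < 0 or block_size <= 0:
        raise ValueError("sizes must be non-negative and block size positive")
    # Integer ceiling division avoids floating-point rounding errors.
    return max(1, -(-file_size_bytes // block_size))


def file_objects_for_file(file_size_bytes: int,
                          block_size: int = DEFAULT_BLOCK_SIZE) -> int:
    """NameNode file objects for one file: 1 for the file plus its blocks."""
    return 1 + blocks_for_file(file_size_bytes, block_size)


if __name__ == "__main__":
    print(file_objects_for_file(10 * 1024**2))   # a 10 MB file
    print(file_objects_for_file(128 * 1024**3))  # a 128 GB file
```

Each directory adds one more file object and no blocks, so a directory tree's total is the sum over its files plus the number of directories.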

The maximum number of file objects supported by the active and standby NameNodes is 300,000,000 (equivalent to 150,000,000 small files). dfs.namenode.max.objects specifies the number of file objects that can be generated in the current system. To check the number, log in to FusionInsight Manager, choose Cluster > Services > HDFS > Configurations > All Configurations, and search for this parameter. The default value is 0, indicating that the number of file objects is not limited. For details about the parameter, see Table 2.

Table 2 HDFS parameters

| Parameter | Description | Default Value |
| --- | --- | --- |
| dfs.blocksize | Default block size of a new file. The unit is bytes. The value must be a complete number of bytes. For example, if the block size is 128 MB, the value must be 134217728. The value must be a multiple of 512. Value range: 512 to 1073741824 | 134217728 |
| dfs.namenode.max.objects | Maximum number of files, directories, and blocks supported by HDFS. If this parameter is set to 0, the number of objects supported by HDFS is not limited. Value range: 0 to 1000000000 | 0 |
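Before changing the parameters in Table 2, the constraints stated there can be checked with a small helper. This is a sketch under the rules given in the table only. The function name is hypothetical, and the cluster may enforce further checks that are not modeled here.

```python
from typing import List


def check_hdfs_parameters(blocksize: int, max_objects: int) -> List[str]:
    """Return a list of problems found in the planned parameter values."""
    problems = []
    # dfs.blocksize: 512 to 1073741824 bytes, and a multiple of 512.
    if not 512 <= blocksize <= 1_073_741_824:
        problems.append("dfs.blocksize must be between 512 and 1073741824")
    elif blocksize % 512 != 0:
        problems.append("dfs.blocksize must be a multiple of 512")
    # dfs.namenode.max.objects: 0 (unlimited) to 1000000000.
    if not 0 <= max_objects <= 1_000_000_000:
        problems.append("dfs.namenode.max.objects must be between 0 and 1000000000")
    return problems


if __name__ == "__main__":
    print(check_hdfs_parameters(134_217_728, 0))  # the default values
    print(check_hdfs_parameters(1000, 0))         # in range, not a multiple of 512
```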

- Configuration rules of the NameNode JVM parameter

For details about the NameNode JVM parameter GC_OPTS, see Table 3. You can perform the following operations to set the parameter:

- Log in to FusionInsight Manager.

- Choose Cluster > Services > HDFS > Configurations > All Configurations.

- Search for the GC_OPTS parameter and set a value for it.

Table 3 NameNode parameter GC_OPTS

| Parameter | Description | Default Value |
| --- | --- | --- |
| GC_OPTS | Parameter used by the JVM for GC. This parameter is valid only when GC_PROFILE is set to custom. Ensure that the GC_OPTS parameter is set correctly. Otherwise, the process will fail to be started. | -Xms2G -Xmx4G -XX:NewSize=128M -XX:MaxNewSize=256M -XX:MetaspaceSize=128M -XX:MaxMetaspaceSize=128M -XX:+UseConcMarkSweepGC -XX:+CMSParallelRemarkEnabled -XX:CMSInitiatingOccupancyFraction=65 -XX:+PrintGCDetails -Dsun.rmi.dgc.client.gcInterval=0x7FFFFFFFFFFFFFE -Dsun.rmi.dgc.server.gcInterval=0x7FFFFFFFFFFFFFE -XX:-OmitStackTraceInFastThrow -XX:+PrintGCDateStamps -XX:+UseGCLogFileRotation -XX:NumberOfGCLogFiles=10 -XX:GCLogFileSize=1M -Djdk.tls.ephemeralDHKeySize=3072 -Djdk.tls.rejectClientInitiatedRenegotiation=true -Djava.io.tmpdir=${Bigdata_tmp_dir} |

The memory used by the NameNode is proportional to the number of file objects. When the number of file objects changes, change -Xms2G -Xmx4G -XX:NewSize=128M -XX:MaxNewSize=256M in the default value accordingly. The following table lists the reference values.

Table 4 NameNode JVM configuration

| Number of File Objects | Reference Value |
| --- | --- |
| 10,000,000 | -Xms6G -Xmx6G -XX:NewSize=512M -XX:MaxNewSize=512M |
| 20,000,000 | -Xms12G -Xmx12G -XX:NewSize=1G -XX:MaxNewSize=1G |
| 50,000,000 | -Xms32G -Xmx32G -XX:NewSize=3G -XX:MaxNewSize=3G |
| 100,000,000 | -Xms64G -Xmx64G -XX:NewSize=6G -XX:MaxNewSize=6G |
| 200,000,000 | -Xms96G -Xmx96G -XX:NewSize=9G -XX:MaxNewSize=9G |
| 300,000,000 | -Xms164G -Xmx164G -XX:NewSize=12G -XX:MaxNewSize=12G |

- Click Save. Confirm the operation impact and click OK.

- Wait until the message "Operation succeeded" is displayed. Click Finish. The configuration is modified.

Check whether there is any service whose configuration has expired in the cluster. If yes, restart the corresponding service or role instance for the configuration to take effect.

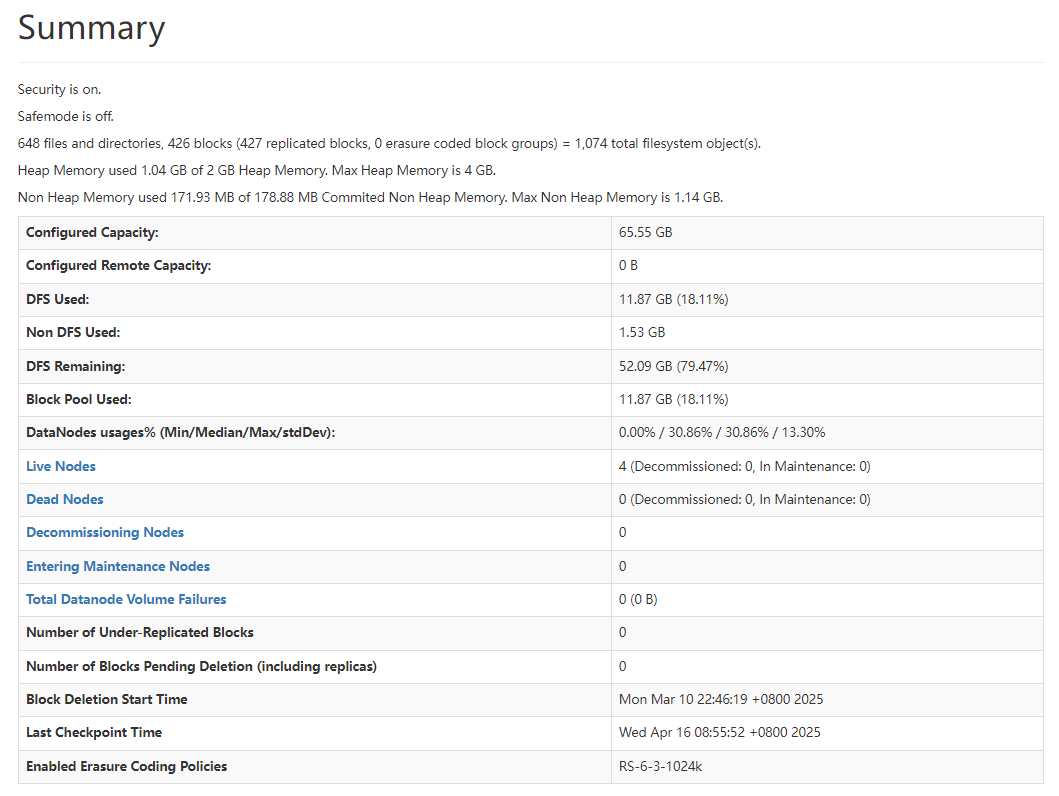
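The reference values in Table 4 can be looked up programmatically when planning the NameNode heap. The sketch below assumes that an object count falling between two rows should use the next larger row. That rounding rule is an assumption, not something the table states, and the function name is illustrative.

```python
# (maximum number of file objects, reference JVM options) from Table 4
NAMENODE_JVM_REFERENCE = [
    (10_000_000, "-Xms6G -Xmx6G -XX:NewSize=512M -XX:MaxNewSize=512M"),
    (20_000_000, "-Xms12G -Xmx12G -XX:NewSize=1G -XX:MaxNewSize=1G"),
    (50_000_000, "-Xms32G -Xmx32G -XX:NewSize=3G -XX:MaxNewSize=3G"),
    (100_000_000, "-Xms64G -Xmx64G -XX:NewSize=6G -XX:MaxNewSize=6G"),
    (200_000_000, "-Xms96G -Xmx96G -XX:NewSize=9G -XX:MaxNewSize=9G"),
    (300_000_000, "-Xms164G -Xmx164G -XX:NewSize=12G -XX:MaxNewSize=12G"),
]


def namenode_jvm_reference(file_objects: int) -> str:
    """Return the reference options of the first row that covers the count."""
    if file_objects < 0:
        raise ValueError("file_objects must not be negative")
    for limit, options in NAMENODE_JVM_REFERENCE:
        if file_objects <= limit:  # assumption: round up to the next row
            return options
    raise ValueError("more than 300,000,000 file objects is not supported")


if __name__ == "__main__":
    print(namenode_jvm_reference(15_000_000))
```

The returned string replaces only the heap and new-generation options in GC_OPTS. The remaining options of the default value stay unchanged.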

- Viewing NameNode capacity information

- Log in to FusionInsight Manager as an HDFS service user.

- Choose Cluster > Services > HDFS > NameNode (xxx,Active). The HDFS web UI is displayed.

- Click the Overview tab. In the Summary module, check the number of files, directories, blocks, and HDFS file objects.

Figure 1 Information on the Summary module
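The same counters can also be read from the NameNode JMX output instead of the web page. The sketch below parses a JMX response that was already fetched, for example from the /jmx?qry=Hadoop:service=NameNode,name=FSNamesystem path of the NameNode HTTP address in stock Apache Hadoop. Whether that endpoint is reachable, and which authentication it requires in a given cluster, is an assumption. The sample payload contains made-up numbers.

```python
import json

FSNAMESYSTEM_BEAN = "Hadoop:service=NameNode,name=FSNamesystem"


def summarize_fsnamesystem(jmx_json: str) -> dict:
    """Extract file, directory, and block counts from a JMX response."""
    for bean in json.loads(jmx_json).get("beans", []):
        if bean.get("name") == FSNAMESYSTEM_BEAN:
            files_and_dirs = bean["FilesTotal"]  # files plus directories
            blocks = bean["BlocksTotal"]
            return {
                "files_and_directories": files_and_dirs,
                "blocks": blocks,
                "file_objects": files_and_dirs + blocks,
            }
    raise KeyError("FSNamesystem bean not found in JMX response")


if __name__ == "__main__":
    # Illustrative payload only; real responses contain many more fields.
    sample = json.dumps({"beans": [{
        "name": FSNAMESYSTEM_BEAN,
        "FilesTotal": 1200,
        "BlocksTotal": 3400,
    }]})
    print(summarize_fsnamesystem(sample))
```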

DataNode Capacity Specifications and Parameters

- DataNode capacity specifications

In HDFS, each block is stored on the DataNodes as multiple copies. The default number of copies is 3, which can be changed using the dfs.replication parameter.

Table 5 dfs.replication parameter description

| Parameter | Description | Default Value |
| --- | --- | --- |
| dfs.replication | Default number of block copies. The actual number of block copies can be specified during file creation. If the number is not specified, the default value is used. Value range: 1–16. Exercise caution when setting this parameter. If this parameter is set to 1, file storage reliability degrades, which may cause data loss or HDFS service unavailability. If the number of lost blocks exceeds the tolerance threshold specified by dfs.namenode.safemode.threshold-pct, HDFS fails to be restarted. If this parameter must be set to 1, you must enable dfs.single.replication.enable and set dfs.namenode.decommission.force.replication.min to 1. Otherwise, the HDFS service may be unavailable. | 3 |

With the default of 3 copies, the number of blocks stored on all DataNode role instances in the cluster = Number of HDFS blocks x 3. The average number of blocks stored on each DataNode = Number of HDFS blocks x 3/Number of DataNodes.

Table 6 DataNode specifications

| Item | Specification |
| --- | --- |
| Maximum number of blocks supported by a DataNode instance | 5,000,000 |
| Maximum number of blocks supported by a disk on a DataNode instance | 500,000 |
| Minimum number of disks required when the number of blocks supported by a DataNode instance reaches the maximum | 10 |

Table 7 Number of DataNodes

| Number of HDFS Blocks | Minimum Number of DataNode Roles |
| --- | --- |
| 10,000,000 | 10,000,000 x 3/5,000,000 = 6 |
| 50,000,000 | 50,000,000 x 3/5,000,000 = 30 |
| 100,000,000 | 100,000,000 x 3/5,000,000 = 60 |
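The DataNode sizing arithmetic above can be sketched as follows. The function names are illustrative, and the sketch assumes that fractional results are rounded up to whole DataNodes and disks.

```python
MAX_BLOCKS_PER_DATANODE = 5_000_000  # per DataNode instance
MAX_BLOCKS_PER_DISK = 500_000        # per disk on a DataNode instance


def _ceil_div(a: int, b: int) -> int:
    """Integer division rounded up."""
    return -(-a // b)


def min_datanodes(hdfs_blocks: int, replication: int = 3) -> int:
    """Minimum number of DataNode roles for a given number of HDFS blocks."""
    return _ceil_div(hdfs_blocks * replication, MAX_BLOCKS_PER_DATANODE)


def avg_blocks_per_datanode(hdfs_blocks: int, datanodes: int,
                            replication: int = 3) -> float:
    """Average number of block copies stored on each DataNode."""
    return hdfs_blocks * replication / datanodes


def min_disks(blocks_on_datanode: int) -> int:
    """Minimum number of disks for the blocks held by one DataNode."""
    return _ceil_div(blocks_on_datanode, MAX_BLOCKS_PER_DISK)


if __name__ == "__main__":
    for blocks in (10_000_000, 50_000_000, 100_000_000):
        print(blocks, min_datanodes(blocks))
    print(min_disks(MAX_BLOCKS_PER_DATANODE))
```

These values are lower bounds derived from block counts only. Raw disk capacity and throughput requirements may call for more DataNodes than this minimum.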

- Configuration rules of the DataNode JVM parameter

For details about the DataNode JVM parameter GC_OPTS, see Table 8. You can perform the following operations to set the parameter:

- Log in to FusionInsight Manager.

- Choose Cluster > Services > HDFS > Configurations > All Configurations.

- Search for the GC_OPTS parameter and set a value for it.

Table 8 DataNode parameter GC_OPTS

| Parameter | Description | Default Value |
| --- | --- | --- |
| GC_OPTS | Parameter used by the JVM for GC. This parameter is valid only when GC_PROFILE is set to custom. Ensure that the GC_OPTS parameter is set correctly. Otherwise, the process will fail to be started. | -Xms2G -Xmx4G -XX:NewSize=128M -XX:MaxNewSize=256M -XX:MetaspaceSize=128M -XX:MaxMetaspaceSize=128M -XX:+UseConcMarkSweepGC -XX:+CMSParallelRemarkEnabled -XX:CMSInitiatingOccupancyFraction=65 -XX:+PrintGCDetails -Dsun.rmi.dgc.client.gcInterval=0x7FFFFFFFFFFFFFE -Dsun.rmi.dgc.server.gcInterval=0x7FFFFFFFFFFFFFE -XX:-OmitStackTraceInFastThrow -XX:+PrintGCDateStamps -XX:+UseGCLogFileRotation -XX:NumberOfGCLogFiles=10 -XX:GCLogFileSize=1M -Djdk.tls.ephemeralDHKeySize=3072 -Djdk.tls.rejectClientInitiatedRenegotiation=true -Djava.io.tmpdir=${Bigdata_tmp_dir} |

The average number of blocks stored in each DataNode instance in the cluster is: Number of HDFS blocks x 3/Number of DataNodes. If the average number of blocks changes, you need to change -Xms2G -Xmx4G -XX:NewSize=128M -XX:MaxNewSize=256M in the default value. The following table lists the reference values.

Table 9 DataNode JVM configuration

| Average Number of Blocks in a DataNode Instance | Reference Value |
| --- | --- |
| 2,000,000 | -Xms6G -Xmx6G -XX:NewSize=512M -XX:MaxNewSize=512M |
| 5,000,000 | -Xms12G -Xmx12G -XX:NewSize=1G -XX:MaxNewSize=1G |

The -Xmx value sets the maximum heap memory, which limits the number of blocks a DataNode can hold: each GB of memory supports a maximum of 500,000 DataNode blocks. Set the memory as required.

- Click Save. Confirm the operation impact and click OK.

- Wait until the message "Operation succeeded" is displayed. Click Finish. The configuration is modified.

Check whether there is any service whose configuration has expired in the cluster. If yes, restart the corresponding service or role instance for the configuration to take effect.

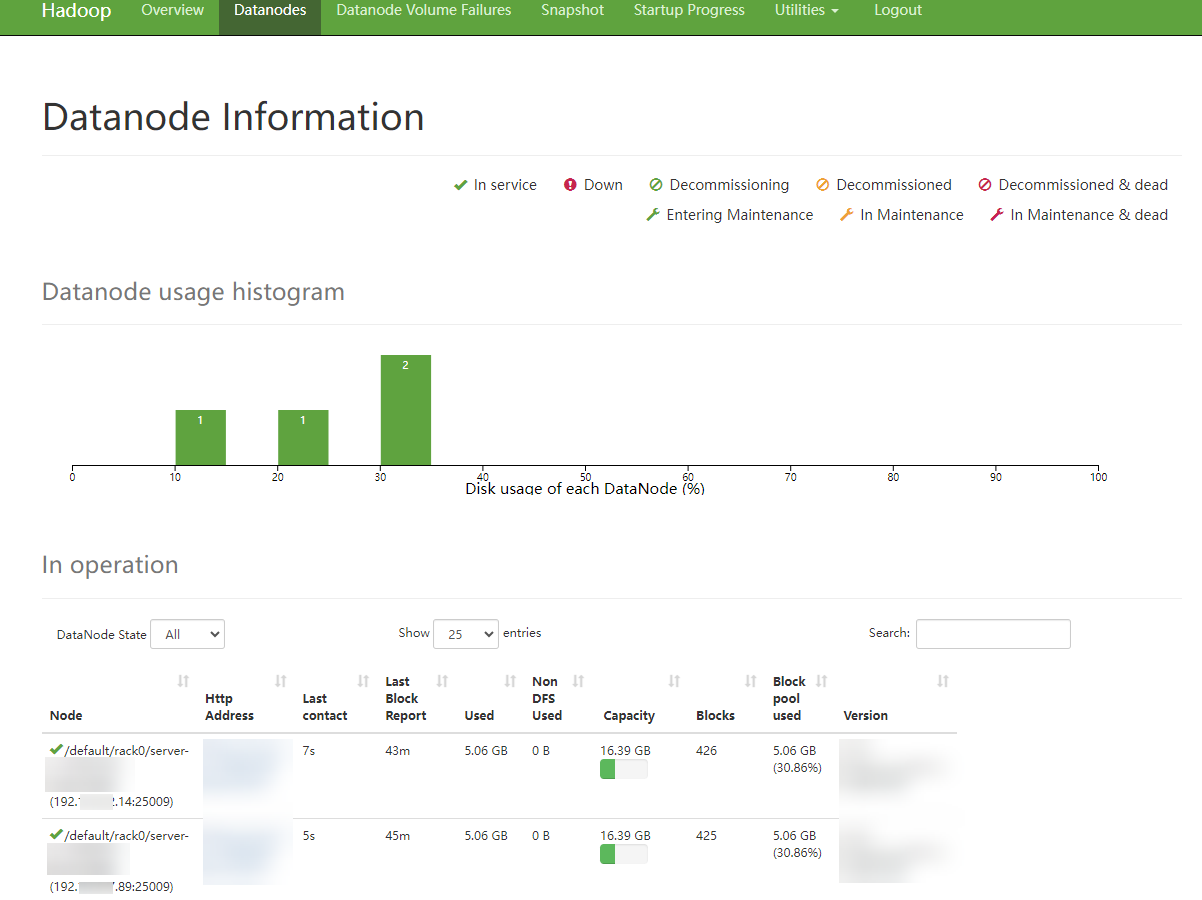
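The DataNode heap guidance can be sketched in the same way as the NameNode lookup. The sketch combines the Table 9 reference values with the rule of at most 500,000 blocks per GB of memory. Treating that rule as a lower bound for -Xmx and rounding block counts up to the next table row are both assumptions, and the function names are illustrative.

```python
import math

# (average blocks per DataNode instance, reference JVM options) from Table 9
DATANODE_JVM_REFERENCE = [
    (2_000_000, "-Xms6G -Xmx6G -XX:NewSize=512M -XX:MaxNewSize=512M"),
    (5_000_000, "-Xms12G -Xmx12G -XX:NewSize=1G -XX:MaxNewSize=1G"),
]
MAX_BLOCKS_PER_GB = 500_000  # each GB of memory supports at most this many blocks


def datanode_jvm_reference(avg_blocks: float) -> str:
    """Return the reference options of the first row that covers the average."""
    for limit, options in DATANODE_JVM_REFERENCE:
        if avg_blocks <= limit:  # assumption: round up to the next row
            return options
    raise ValueError("a DataNode instance supports at most 5,000,000 blocks")


def min_xmx_gb(avg_blocks: float) -> int:
    """Smallest whole-GB -Xmx allowed by the 500,000 blocks per GB rule."""
    return math.ceil(avg_blocks / MAX_BLOCKS_PER_GB)


if __name__ == "__main__":
    # 20,000,000 HDFS blocks, 3 copies, 30 DataNodes: 2,000,000 blocks each.
    avg = 20_000_000 * 3 / 30
    print(datanode_jvm_reference(avg), min_xmx_gb(avg))
```

The reference values in Table 9 are larger than the per-GB minimum, so when both apply the table value is the safer starting point.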

- Viewing DataNode capacity information

Log in to FusionInsight Manager, choose Cluster > Services > HDFS, click NameNode (Active), and click DataNodes to view the number of blocks on all DataNodes for which the alarm is generated.

- Log in to FusionInsight Manager as an HDFS service user.

- Choose Cluster > Services > HDFS > NameNode (xxx,Active). The HDFS web UI is displayed.

- Click Datanodes to view the number of blocks on all DataNodes for which the alarm is generated.

Figure 2 Datanodes information

Helpful Links

- After a quota is set for a directory, files fail to be written to the directory, and the error message "The DiskSpace quota of /tmp/tquota2 is exceeded" is displayed. To rectify the fault, see Failed to Write Files Because the HDFS Directory Quota Is Insufficient.

- HBase fails to start after the quota is set on the HDFS. To rectify the fault, see HBase Failed to Start Due to a Quota Set on HDFS.
