Interconnecting Hive with External Self-Built Relational Databases

Scenarios

After an external metadata database is deployed in a cluster with Hive data, the original metadata tables will not be automatically synchronized. Before installing Hive, determine whether to store metadata in an external database or DBService. For the former, deploy an external database when installing Hive or when there is no Hive data. After Hive installation, the metadata storage location cannot be changed. Otherwise, the original metadata will be lost.

Hive supports open-source MySQL and PostgreSQL metadata databases. This section explains how to connect to open-source MySQL and PostgreSQL databases.

Notes and Constraints

- After external metadata is imported to the MySQL database, Hive supports only table names, field names, and table description in Chinese.

- This topic is available for MRS 3.x and later versions.

Step 1: Install the Database and Upload the Driver Package

- Install the open source MySQL or PostgreSQL database.

The node where the database is installed must be in the same network segment as the cluster, so that they can access each other.

- Upload the driver package.

- PostgreSQL:

Use the open source driver package to replace the cluster's existing one. Download the open source PostgreSQL driver package postgresql-42.2.5.jar at https://repo1.maven.org/maven2/org/postgresql/postgresql/42.2.5/ and upload it to the ${BIGDATA_HOME}/third_lib/Hive directory on all MetaStore nodes.

Run the following commands on all MetaStore nodes to modify the permission on the driver package:

cd ${BIGDATA_HOME}/third_lib/Hive

chown omm:wheel postgresql-42.2.5.jar

chmod 600 postgresql-42.2.5.jar

- MySQL:

Visit the MySQL official website at https://www.mysql.com/, choose DOWNLOADS > MySQL Community(GPL) Downloads > Connector/J, and download the driver package of the required version.

- For versions earlier than MRS 8.2.0, upload the MySQL driver package of the required version to the /opt/Bigdata/FusionInsight_HD_*/install/FusionInsight-Hive-*/hive-*/lib/ directory on all Metastore nodes.

- For MRS 8.2.0 and later versions, upload the MySQL driver package of the required version to the ${BIGDATA_HOME}/third_lib/Hive directory on all Metastore nodes.

Run the following commands on all MetaStore nodes to modify the permission on the driver package:

cd /opt/Bigdata/FusionInsight_HD_*/install/FusionInsight-Hive-*/hive-*/lib/

chown omm:wheel mysql-connector-java-*.jar

chmod 600 mysql-connector-java-*.jar

- PostgreSQL:

Step 2: Create Tables and Grant Permissions in the User-built Database

- Create a user and metadata database in the user-built database and assign all permissions on the database to the user. The following are examples:

- Run the following commands as the database administrator in PostgreSQL to create database test and user testuser, and assign all permissions on test to testuser:

create user testuser with password 'password';

create database test owner testuser;

grant all privileges on database test to testuser;

- Run the following commands as the database administrator in MySQL to create database test and user testuser, and assign all permissions on test to testuser:

create database test;

create user 'testuser'@'%' identified by 'password';

grant all privileges on test.* to 'testuser';

flush privileges;

- Run the following commands as the database administrator in PostgreSQL to create database test and user testuser, and assign all permissions on test to testuser:

- Import the SQL statements for creating metadata tables.

- SQL script path in the PostgreSQL database: ${BIGDATA_HOME}/FusionInsight_HD_*/install/FusionInsight-Hive-*/hive-*/scripts/metastore/upgrade/postgres/hive-schema-3.1.0.postgres.sql

Run the following command to import the SQL file to Postgres:

./bin/psql -U username -d databasename -f hive-schema-3.1.0.postgres.sql

Configure the parameters by referring to the following information:

./bin/psql is in the Postgres installation directory.

username indicates the username for logging in to Postgres.

databasename indicates the database name.

- SQL script path in the MySQL database: ${BIGDATA_HOME}/FusionInsight_HD_*/install/FusionInsight-Hive-*/hive-*/scripts/metastore/upgrade/mysql/hive-schema-3.1.0.mysql.sql

Run the following command to import the SQL file to the MySQL database:

./bin/mysql -u username -p -D databasename<hive-schema-3.1.0.mysql.sql

Configure the parameters by referring to the following information:

./bin/mysql is in the MySQL installation directory.

username indicates the user name for logging in to MySQL.

databasename indicates the database name.

- SQL script path in the PostgreSQL database: ${BIGDATA_HOME}/FusionInsight_HD_*/install/FusionInsight-Hive-*/hive-*/scripts/metastore/upgrade/postgres/hive-schema-3.1.0.postgres.sql

Step 3: Configure MRS Cluster Parameters for Database Interconnection

- Log in to FusionInsight Manager, choose Cluster > Services, and click Hive. On the displayed page, click Configuration > All Configurations, and choose Hive (Service) > MetaDB. Modify the parameters in the following table, and save the modification so that the Hive configuration can be connected to the open-source database.

Table 1 Parameters Parameter

Default Value

Description

javax.jdo.option.ConnectionDriverName

org.postgresql.Driver

Driver class for connecting metadata on MetaStore

javax.jdo.option.ConnectionURL

jdbc:postgresql://%{DBSERVICE_FLOAT_IP}%{DBServer}:%{DBSERVICE_CPORT}/hivemeta?socketTimeout=600

URL of the JDBC link of the MetaStore metadata

- If an external MySQL database is used, the value is:

jdbc:mysql://IP address of the MySQL database:Port number of the MySQL database/test?characterEncoding=utf-8&socketTimeout=300000

- If an external Postgres database is used, the value is:

jdbc:postgresql://PostgreSQL IP address:PostgreSQL port/test?socketTimeout=600

NOTE:test is the name of the database created in MySQL or PostgreSQL in 1.

javax.jdo.option.ConnectionUserName

hive${SERVICE_INDEX}${SERVICE_INDEX}

Username for connecting to the external metadata database on Metastore.

- If an external MySQL database is used, the value is:

- Change the Postgres database password in MetaStore. Choose Cluster > Services > Hive. On the displayed page, click Configurations > All Configurations and choose MetaStore(Role) > MetaDB, modify the following parameters, and click Save.

Table 2 Parameters Parameter

Default Value

Description

javax.jdo.option.extend.ConnectionPassword

******

Password for connecting to the external metadata database on Metastore. The password is encrypted in the background.

- In MRS 3.2.0-LTS or later, if multiple MRS clusters are connected to the same relational database, choose MetaStore(Role) > Customization in the navigation pane on the left, add the metastore.whether.query.all.tokens configuration item to the custom parameter hive.metastore.customized.configs, set the value to false, and click Save.

- Log in to each MetaStore background node and check whether the local directory /opt/Bigdata/tmp exists.

- If it exists, go to 5.

- If it does not exist, run the following commands to create one:

chmod 755 /opt/Bigdata/tmp

- Choose Dashboard > More > Restart Service, and enter the password to restart the Hive service.

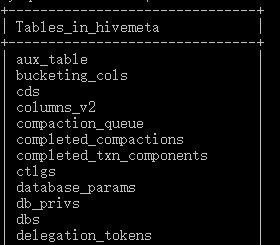

- Log in to the MySQL or PostgreSQL database and view metadata tables generated in the metadata database created in 1.

Step 4: Verify If the Hive Metabase is Externally Connected.

- Log in to the node where the Hive client is installed as the client installation user.

cd Client installation directory

source bigdata_env

kinit Component service user (Skip this step for clusters with Kerberos authentication disabled.)

- Run the following command to log in to the Hive client CLI:

- Run the following command to create the test table:

create table test(id int,str1 string,str2 string);

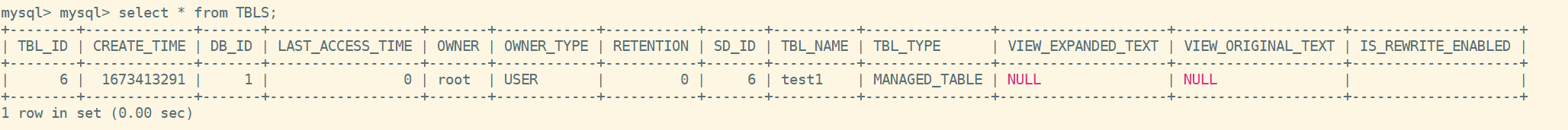

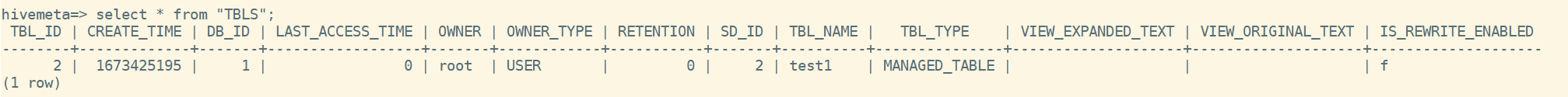

- Run the following command in the test database of the MySQL or PostgreSQL database to check whether there is any information about the test table:

select * from TBLS;

If information about the test table is displayed, the external database is successfully deployed. For example:

Feedback

Was this page helpful?

Provide feedbackThank you very much for your feedback. We will continue working to improve the documentation.See the reply and handling status in My Cloud VOC.

For any further questions, feel free to contact us through the chatbot.

Chatbot