Interconnecting Sqoop with OBS Using an IAM Agency

After connecting the Sqoop client to an OBS file system by referring to Interconnecting an MRS Cluster with OBS Using an IAM Agency, you can import tables from a relational database to OBS or export tables from OBS to a relational database on the Sqoop client.

Prerequisites

The RDS for MySQL instance and the MRS cluster where Sqoop is located are in the same VPC.
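Because the import depends on the Sqoop node being able to reach the MySQL instance, a quick reachability probe from that node can confirm the prerequisite before any data is moved. The following is a minimal sketch, not part of the official procedure: the function name can_reach is hypothetical, the address in the usage comment is a placeholder, and port 3306 is taken from the JDBC URL used later in this section. It assumes bash and the GNU timeout utility are available on the node.

```shell
# Hypothetical helper: returns 0 if a TCP connection to host:port opens within 5 seconds.
can_reach() {
    local host="$1"
    local port="$2"
    # /dev/tcp is a bash feature, so the probe runs in an explicit bash subprocess.
    timeout 5 bash -c 'exec 3<>"/dev/tcp/$1/$2"' _ "$host" "$port" 2>/dev/null
}

# Example usage (placeholder address, replace with the IP address of the RDS for MySQL instance):
# can_reach 192.168.0.10 3306 && echo "MySQL port reachable" || echo "MySQL port not reachable"
```

A failed probe usually points to a VPC, subnet, or security group setting rather than to Sqoop itself, so it is worth checking before troubleshooting the import command.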

Importing MySQL Data to OBS Using Sqoop

- Log in to the RDS console and choose Instances. Locate the row that contains the target RDS for MySQL instance, and click Log In in the Operation column to log in to the instance as user root.

- On the homepage, click Create Database to create a database, for example, test. In the database list, click the name of the newly created database to go to the database management page. On the Tables page, click Create Table to create a table, for example, sourcetable, which contains the id and name fields.

- Click Query SQL Statements in the row where the new table is located. On the displayed page, switch to the database where the table is located and run the following SQL statements to insert data into the table:

INSERT INTO sourcetable (id,name) VALUES (11,"A");
INSERT INTO sourcetable (id,name) VALUES (22,"B");

Query the table data.

select * from `sourcetable`;

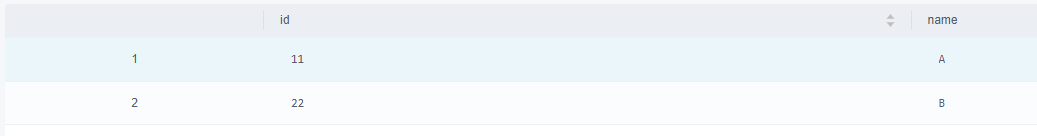

The queried table data is as follows:

Figure 1 Querying table data

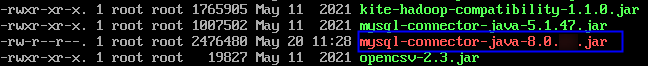

- Download the MySQL driver package of the required version from the MySQL official website https://downloads.mysql.com/archives/c-j/, decompress the package, and upload the extracted driver JAR file to the Client installation directory/Sqoop/sqoop/lib directory on the node where the Sqoop client is installed.

Figure 2 Uploading the MySQL driver file

- Log in to the node where the client is installed as the client installation user.

For details about how to download and install the cluster client, see Installing an MRS Cluster Client.

- Configure environment variables and authenticate the user.

Go to the client installation directory.

cd Client installation directory

Load the environment variables.

source bigdata_env

Authenticate the user. Skip this step for clusters with Kerberos authentication disabled.

kinit Component service user

- Import MySQL table data to OBS using sqoop import.

sqoop import --connect jdbc:mysql://MySQL IP address:3306/test --username root --password xxx --table sourcetable --target-dir obs://Parallel file system name/xxx --delete-target-dir --fields-terminated-by "," -m 1 --as-textfile

For more sqoop import and sqoop export operations and related parameters, see Using Sqoop from Scratch.

- Log in to the OBS console, choose Parallel File System, and go to the parallel file system path configured in Step 7 for storing the MySQL data. Download the data file and check whether its content matches the data inserted into the MySQL table in Step 3.

11,A
22,B
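The final comparison can also be scripted once the data file has been downloaded to a local machine. The following is a minimal sketch under stated assumptions: verify_import is a hypothetical helper name, the expected rows are the two records inserted earlier in this section, and the file path is whatever name the downloaded file has locally (with a single map task, Sqoop typically writes one file such as part-m-00000, but the name is not confirmed by this page).

```shell
# Hypothetical helper: succeeds only if the file contains exactly the two expected rows.
verify_import() {
    local file="$1"
    local expected
    # Rows inserted into sourcetable, in comma-separated form.
    expected="$(printf '11,A\n22,B')"
    # Sort the file content so that row order does not affect the comparison.
    [ "$(sort "$file")" = "$expected" ]
}

# Example usage (placeholder file name):
# verify_import ./part-m-00000 && echo "import matches source table" || echo "import differs"
```

The check is deliberately strict: an extra row, a missing row, or a different field delimiter all cause it to fail.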

Helpful Links

If the error message "Could not load db driver class: com.mysql.jdbc.Driver" is displayed after you run the sqoop import or sqoop export command, the MySQL driver package is missing. For details about how to rectify the fault, see What Should I Do If the MySQL Driver Package Is Missing When Data Is Imported or Exported Using Sqoop?.
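Before rerunning the command, it can help to confirm whether a driver file is actually present in the Sqoop lib directory. The sketch below is illustrative only: has_mysql_driver is a hypothetical helper, the path in the usage comment is a placeholder for the real client installation directory, and the file name pattern mysql-connector-*.jar is an assumption about how the downloaded Connector/J file is named.

```shell
# Hypothetical helper: succeeds if at least one MySQL connector JAR exists in the given directory.
has_mysql_driver() {
    local lib_dir="$1"
    local jar
    for jar in "$lib_dir"/mysql-connector-*.jar; do
        # If the glob matches nothing, the literal pattern is kept and the -f test fails.
        [ -f "$jar" ] && return 0
    done
    return 1
}

# Example usage (placeholder path, replace with Client installation directory/Sqoop/sqoop/lib):
# has_mysql_driver /opt/client/Sqoop/sqoop/lib || echo "MySQL driver JAR not found"
```

If the file exists but the error persists, the file permissions and the driver version are worth checking next.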
