Accessing OBS Using Spark Through Guardian

After interconnecting Guardian with OBS by referring to Disabling Ranger OBS Path Authentication for Guardian or Enabling Ranger OBS Path Authentication for Guardian, you can use the Spark client to create a table whose location is an OBS path.

Prerequisites

If you interconnected Guardian with OBS by referring to Enabling Ranger OBS Path Authentication for Guardian, ensure that you have the read and write permissions on the OBS path in Ranger. For details about how to obtain the permissions, see Configuring Ranger Permissions.

Interconnecting Spark with OBS

In an MRS cluster, Spark can access OBS in two ways: you can set Location to an OBS file system path when creating a Spark table, or you can connect Spark to OBS through Hive Metastore.

- Setting the location to an OBS path during table creation:

- Log in to the node where the client is installed as the client installation user and access the spark-sql client.

Go to the client installation directory.

cd Client installation directory

Load the environment variables.

source bigdata_env

Authenticate the user. Skip this step for clusters with Kerberos authentication disabled.

kinit User performing operations on the component

Log in to the spark-sql client.

spark-sql --master yarn

- Set Location to the OBS file system path when creating a table.

For example, to create a table named testspark whose Location is obs://obs-test/test/Database name/Table name, run the following command:

create external table testspark(name string) location "obs://obs-test/test/Database name/Table name";
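If you generate such statements from scripts, a small helper can assemble the DDL and catch the kind of stray whitespace that makes a quoted path differ from the intended OBS location. This is a minimal sketch: build_external_table_ddl is a hypothetical helper, not part of MRS or Spark, and it deliberately rejects any whitespace inside the path (real database and table names contain none).

```python
import re
from typing import Mapping


def build_external_table_ddl(table: str, columns: Mapping[str, str], location: str) -> str:
    """Return a CREATE EXTERNAL TABLE statement whose LOCATION is an OBS path (hypothetical helper)."""
    location = location.strip()  # drop leading/trailing spaces such as " obs://..."
    if not location.startswith("obs://"):
        raise ValueError(f"expected an obs:// path, got {location!r}")
    if any(ch.isspace() for ch in location):
        # Spaces inside the quoted path would point Spark at a different path.
        raise ValueError(f"OBS path must not contain whitespace: {location!r}")
    if not re.fullmatch(r"[A-Za-z_][A-Za-z0-9_]*", table):
        raise ValueError(f"unsupported table name: {table!r}")
    cols = ", ".join(f"{name} {dtype}" for name, dtype in columns.items())
    return f'create external table {table}({cols}) location "{location}";'
```

For example, with table testspark, a single name string column, and the hypothetical location obs://obs-test/test/db1/testspark, the helper returns create external table testspark(name string) location "obs://obs-test/test/db1/testspark"; which can then be run in spark-sql.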


- Interconnecting Spark with OBS through Hive Metastore:

- Complete the configurations by referring to Interconnecting Hive with OBS using MetaStore.

- Log in to FusionInsight Manager, choose Cluster > Services > Spark and choose Configurations > All Configurations.

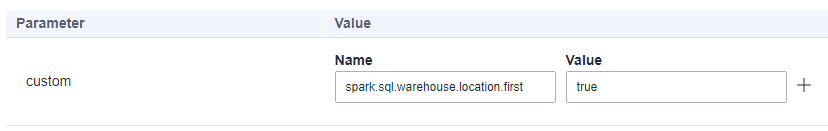

- In the navigation pane on the left, choose SparkResource > Customization. In the custom configuration items, add a custom parameter named spark.sql.warehouse.location.first and set its value to true.

Figure 1 spark.sql.warehouse.location.first configuration

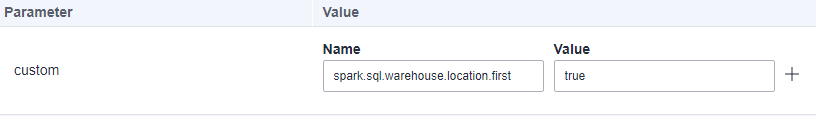

- In the navigation pane on the left, choose JDBCServer > Customization. In the custom configuration items, add a custom parameter named spark.sql.warehouse.location.first and set its value to true.

Figure 2 spark.sql.warehouse.location.first configuration

- Click Save to save the configuration. Click the Dashboard tab, choose More > Restart Service, enter the password, click OK, and click OK again to restart Spark.

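- After Spark is restarted, choose More > Download Client to download and install the Spark client again. Then, skip to the step for configuring the OBS directory permission for the user who performs operations on the component.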

If you do not download and install the client again, you can perform the following steps to update the Spark client configuration file (for example, the client directory is /opt/client):

- Log in to the node where the Spark client is deployed as user root and switch to the client installation directory.

cd /opt/client

- Run the following command to modify hive-site.xml in the configuration file directory of the Spark client:

vi Spark/spark/conf/hive-site.xml

Change the value of hive.metastore.warehouse.dir to the corresponding OBS path, for example, obs://hivetest/user/hive/warehouse/.

<property>
  <name>hive.metastore.warehouse.dir</name>
  <value>obs://hivetest/user/hive/warehouse/</value>
</property>

- Run the following command to modify the spark-defaults.conf file in the configuration file directory of the Spark client and set spark.sql.warehouse.location.first to true:

vi Spark/spark/conf/spark-defaults.conf
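If you maintain several client nodes, the two manual edits above can also be scripted. The following is a minimal sketch, assuming the client directory layout used above (Spark/spark/conf under the client installation directory) and that the files are writable by the current user. The function names are hypothetical. The spark-defaults.conf line is written in whitespace-separated "key value" form; existing keys written as "key=value" are also recognized. ElementTree does not preserve XML comments, processing instructions, or the XML declaration when it rewrites hive-site.xml, so back the files up first.

```python
import re
import xml.etree.ElementTree as ET
from pathlib import Path


def set_hive_property(xml_text: str, name: str, value: str) -> str:
    """Set (or add) a <property> entry in a Hadoop-style *-site.xml document."""
    root = ET.fromstring(xml_text)
    for prop in root.findall("property"):
        if (prop.findtext("name") or "").strip() == name:
            value_el = prop.find("value")
            if value_el is None:
                value_el = ET.SubElement(prop, "value")
            value_el.text = value
            break
    else:
        # Property not present yet: append a new <property> block.
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = value
    return ET.tostring(root, encoding="unicode")


def set_spark_default(conf_text: str, key: str, value: str) -> str:
    """Set (or append) a key in spark-defaults.conf, leaving other lines untouched."""
    lines = conf_text.splitlines()
    new_line = f"{key} {value}"
    for i, line in enumerate(lines):
        stripped = line.strip()
        if not stripped or stripped.startswith("#"):
            continue
        # A key ends at the first whitespace, '=' or ':' (Java-properties style).
        current_key = re.split(r"[\s=:]", stripped, maxsplit=1)[0]
        if current_key == key:
            lines[i] = new_line
            break
    else:
        lines.append(new_line)
    return "\n".join(lines) + "\n"


def update_client_config(client_dir: str, warehouse_dir: str) -> None:
    """Apply both edits under <client_dir>/Spark/spark/conf."""
    conf_dir = Path(client_dir) / "Spark" / "spark" / "conf"
    hive_site = conf_dir / "hive-site.xml"
    hive_site.write_text(
        set_hive_property(hive_site.read_text(), "hive.metastore.warehouse.dir", warehouse_dir)
    )
    defaults = conf_dir / "spark-defaults.conf"
    defaults.write_text(
        set_spark_default(defaults.read_text(), "spark.sql.warehouse.location.first", "true")
    )
```

With the example values from this section, the call would be update_client_config("/opt/client", "obs://hivetest/user/hive/warehouse/"), run as a user allowed to modify the client configuration files.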


- For a cluster with Kerberos authentication enabled, configure the OBS directory permission for the user who performs operations on the component. For details, see Configuring Ranger Permissions.

- Go to the SparkSQL CLI and spark-beeline, create a table, and check whether the location of the table is an OBS path.

Load the environment variables.

source bigdata_env

Authenticate the user. Skip this step for clusters with Kerberos authentication disabled.

kinit Service user

- Go to the SparkSQL CLI.

spark-sql

Create a table.

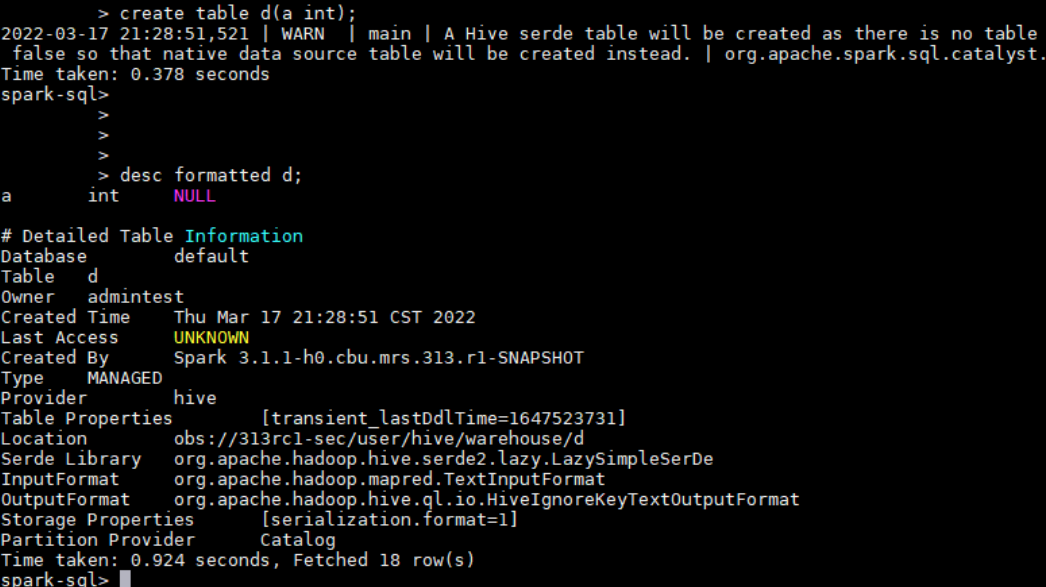

create table d(a int);Check the table location.

desc formatted d;As shown in the following figure, the location of table d is in the specified OBS path.

Figure 3 Viewing the location of the d table

- Go to spark-beeline.

spark-beeline

Create a table.

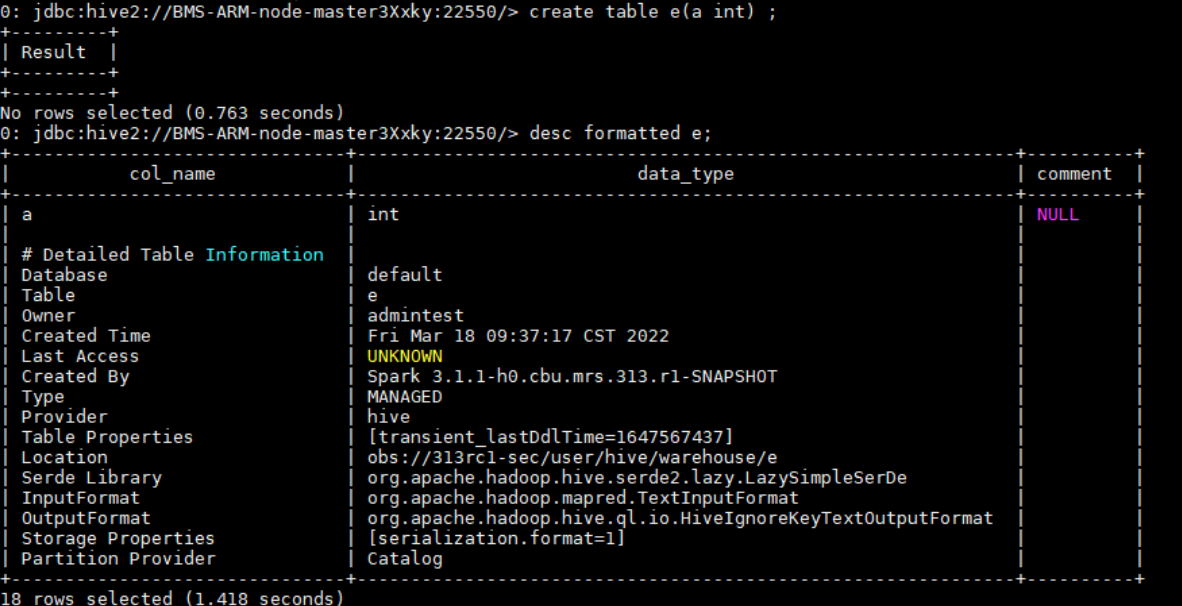

create table e(a int);Check the table location.

desc formatted e;As shown in the following figure, the location of table e is in the specified OBS path.

Figure 4 Viewing the location of the e table
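To automate the location check instead of reading it from the screen, you can parse the output of desc formatted. This is a minimal sketch under stated assumptions: it assumes spark-sql prints rows as tab-separated columns and spark-beeline prints them as pipe-delimited rows, and that the location appears in a row whose first column is Location. The function names are hypothetical; the output could be captured, for example, from spark-sql -e "desc formatted d;".

```python
from typing import Optional


def find_table_location(desc_output: str) -> Optional[str]:
    """Return the value of the Location row in 'desc formatted' output, if any."""
    for raw in desc_output.splitlines():
        line = raw.strip()
        if line.startswith("|"):
            # Assumed spark-beeline layout: | col_name | data_type | comment |
            fields = [f.strip() for f in line.strip("|").split("|")]
        else:
            # Assumed spark-sql layout: tab-separated columns.
            fields = [f.strip() for f in line.split("\t")]
        if len(fields) >= 2 and fields[0].rstrip(":") == "Location":
            return fields[1]
    return None


def is_obs_location(desc_output: str) -> bool:
    """True if the table described by desc_output is stored under an obs:// path."""
    location = find_table_location(desc_output)
    return location is not None and location.startswith("obs://")
```

If is_obs_location returns False after the configuration steps, the warehouse settings were most likely not picked up by the client you used.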


Configuring Ranger Permissions

- Log in to MRS Manager and choose System > Permission > User Group. On the displayed page, click Create User Group.

For details about how to log in to MRS Manager, see Accessing MRS Manager.

- Create a user group without a role, for example, obs_spark, and bind the user group to the corresponding user.

- Log in to the Ranger management page as user rangeradmin.

- On the home page, click the OBS component plug-in in the EXTERNAL AUTHORIZATION area.

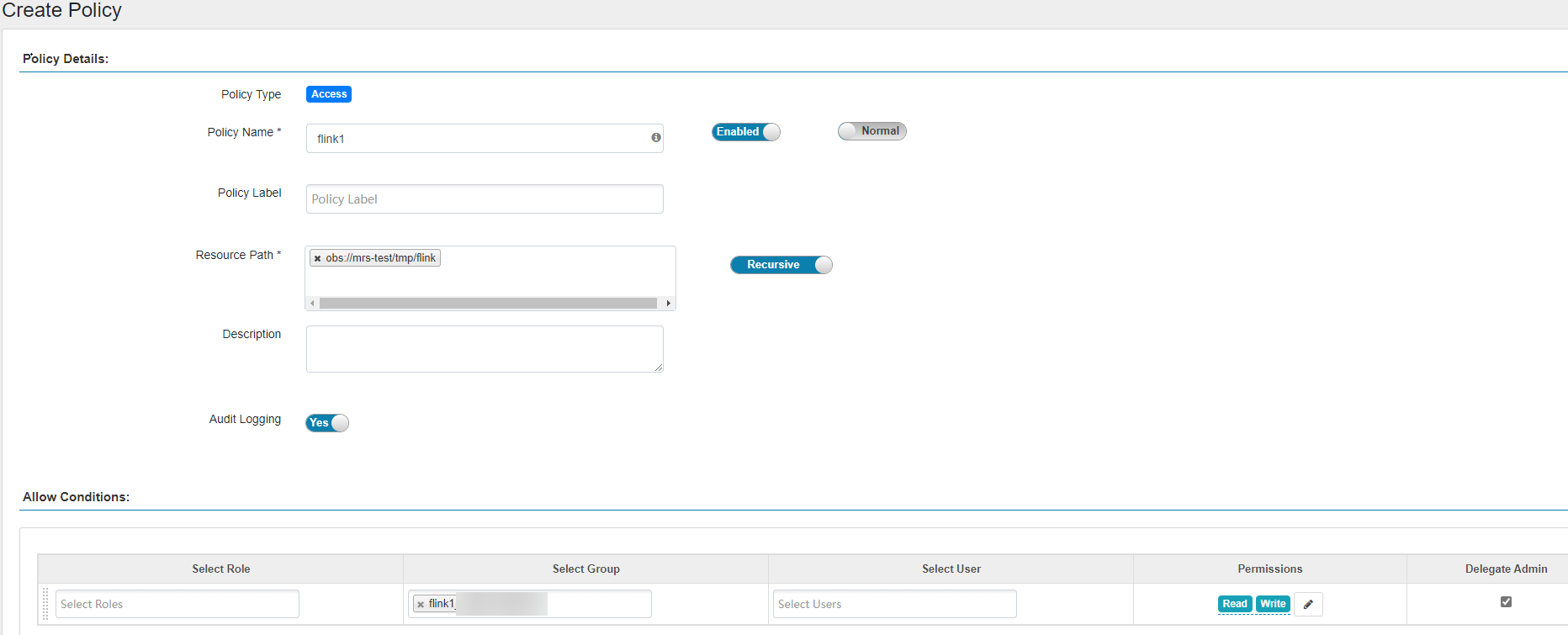

- Click Add New Policy to add the Read and Write permissions on OBS paths to the user group created above (for example, obs_spark). If no OBS path exists yet, create one in advance (the wildcard character * is not allowed).

Figure 5 Granting the Spark user group permissions for reading and writing OBS paths
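The policy above is created in the Ranger web UI. Apache Ranger also exposes a public REST API (POST /service/public/v2/api/policy) that accepts a policy as JSON, which can help when the same policy must be created repeatedly. The following is a hedged sketch that only builds such a payload. The Ranger service name for the OBS plug-in, the resource key "path", the isRecursive flag, and the access types "read" and "write" are all assumptions modeled on the HDFS plug-in; compare them with an existing OBS policy on your cluster before using the payload. build_obs_policy is a hypothetical helper.

```python
import json
from typing import List


def build_obs_policy(service: str, policy_name: str, obs_paths: List[str], group: str) -> str:
    """Build a Ranger policy JSON granting read/write on OBS paths to a user group."""
    for path in obs_paths:
        if not path.startswith("obs://"):
            raise ValueError(f"expected an obs:// path, got {path!r}")
        if "*" in path:
            # The wildcard character * is not allowed in OBS paths for this policy.
            raise ValueError(f"wildcard * is not allowed: {path!r}")
    policy = {
        "service": service,  # ASSUMPTION: name of the OBS service defined in Ranger
        "name": policy_name,
        "isEnabled": True,
        "resources": {
            # ASSUMPTION: resource key and flags copied from the HDFS plug-in layout.
            "path": {"values": list(obs_paths), "isRecursive": True, "isExcludes": False},
        },
        "policyItems": [
            {
                "groups": [group],
                # ASSUMPTION: access type names for the OBS plug-in.
                "accesses": [
                    {"type": "read", "isAllowed": True},
                    {"type": "write", "isAllowed": True},
                ],
                "delegateAdmin": False,
            }
        ],
    }
    return json.dumps(policy, indent=2)
```

For the Spark example, the group would be obs_spark; the service name and policy name passed in are hypothetical values you choose.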

- To authorize a view, grant both the permission on the view and the permission on the physical table path that the view is based on.

- Cascading authorization can be performed only on databases and tables, not on partitions. If a partition path is not under the table path, you need to authorize the partition path manually.

- Cascading authorization is not supported for Deny Conditions in Hive Ranger policies. That is, a Deny Condition restricts only the table permission and does not generate a corresponding permission on the HDFS/OBS storage source.

- Permissions from HDFS Ranger policies take precedence over the HDFS/OBS storage source permissions generated by cascading authorization. If an HDFS Ranger permission has already been set for the HDFS storage source of a table, the cascaded permission does not take effect.

- Cascading authorization alone does not allow ALTER operations on tables whose storage source is OBS. To perform ALTER operations, grant the Read and Write permissions on the parent directory of the OBS table path to the corresponding user group.

- Before configuring permission policies for OBS paths on Ranger, ensure that the AccessLabel function has been enabled for OBS. For how to enable it, contact OBS O&M personnel.
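Two of the notes above come down to path arithmetic: finding partition paths that lie outside the table path (these need manual authorization) and finding the parent directory of an OBS table path (needed for ALTER operations). The following is a minimal sketch that assumes you already have the table and partition locations as obs:// strings, for example from desc formatted output. The function names are hypothetical.

```python
from typing import Iterable, List

OBS_SCHEME = "obs://"


def _normalize(path: str) -> str:
    """Drop trailing slashes so that 'obs://b/t/' and 'obs://b/t' compare equal."""
    return path.rstrip("/")


def partitions_outside_table(table_location: str, partition_locations: Iterable[str]) -> List[str]:
    """Return partition paths not under the table path (not covered by cascading authorization)."""
    prefix = _normalize(table_location) + "/"
    # Compare with a trailing '/' so that .../t10 is not mistaken for a child of .../t1.
    return [p for p in partition_locations if not (_normalize(p) + "/").startswith(prefix)]


def parent_directory(table_location: str) -> str:
    """Return the parent directory of an OBS table path."""
    trimmed = _normalize(table_location)
    if not trimmed.startswith(OBS_SCHEME):
        raise ValueError(f"expected an obs:// path, got {table_location!r}")
    rest = trimmed[len(OBS_SCHEME):]
    if "/" not in rest:
        raise ValueError(f"{table_location!r} is a file system root and has no parent")
    return OBS_SCHEME + rest.rsplit("/", 1)[0]
```

Each path returned by partitions_outside_table would need its own Read and Write policy, and the path returned by parent_directory is the one to authorize before running ALTER.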

Configuring Permissions for CDL Service Users

If Kerberos authentication is enabled for a cluster (security mode) and you want to use CDL to import data to OBS in real time after the cluster is connected to OBS, perform the following steps to grant corresponding users Read and Write permissions on the corresponding OBS path:

- Log in to MRS Manager and choose System > Permission > User Group. On the displayed page, click Create User Group.

For details about how to log in to MRS Manager, see Accessing MRS Manager.

- Create a user group with no assigned role, for example, obs_cdl, and bind it to the relevant CDL service user, like cdluser.

- Log in to the Ranger management page as user rangeradmin.

- On the home page, click the OBS component plug-in in the EXTERNAL AUTHORIZATION area.

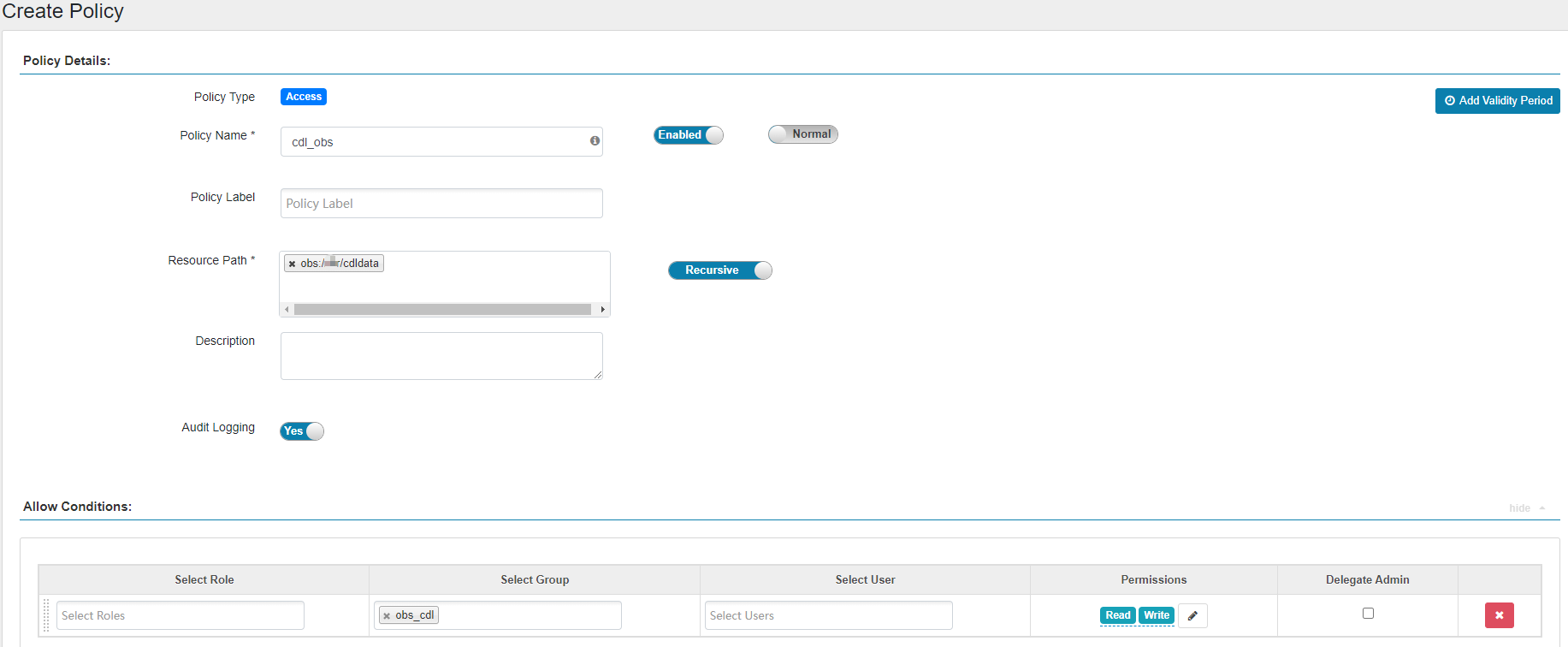

- Click Add New Policy to add the Read and Write permissions on OBS paths to the created user group. If there are no OBS paths, create one in advance (wildcard character * is not allowed).

The following figure shows the configurations needed for adding the Read and Write permissions on obs://OBS parallel file system name/cdldata to user group obs_cdl.

Figure 6 Granting the CDL user group permissions for reading and writing OBS paths
