Flume Log Collection

Flume is a distributed, reliable, and highly available system for aggregating massive logs. It can efficiently collect, aggregate, and move large volumes of log data from different data sources and store the data in a centralized data storage system. Custom data senders can be configured in the system to collect data. In addition, Flume provides simple data processing capabilities and writes data to customizable data receivers.

Flume consists of a client and a server, both of which are FlumeAgents. The server corresponds to the FlumeServer instance and is deployed directly in a cluster. The client can be deployed inside or outside the cluster. The client-side and server-side FlumeAgents work independently and provide the same functions.

The client-side FlumeAgent must be installed separately. It can import data directly into components such as HDFS and Kafka. The client-side and server-side FlumeAgents can also work together to provide services.

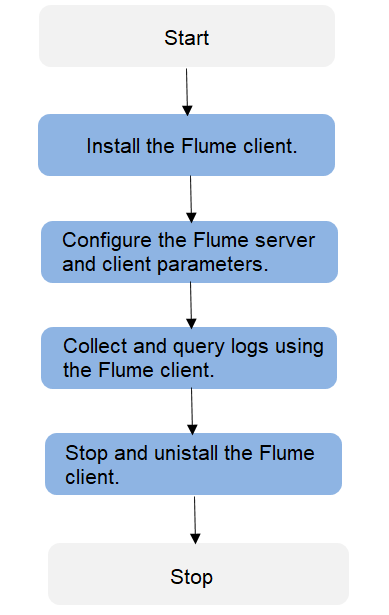

Process

Create a cascading Flume task that uses both the Flume server and the Flume client. To collect logs, perform the following operations:

- Installing the Flume client

- Configuring the Flume server and client parameters

- Collecting and querying logs using the Flume client

- Stopping and uninstalling the Flume client

Flume Modules

The Flume client or server consists of one or more agents. Each agent consists of three modules: source, channel, and sink. Data enters the source, is passed to the channel, and is then sent by the sink to the next agent or to a destination outside the client. Table 1 describes the Flume modules.

| Name | Description |
|---|---|
| Source | A source receives or generates data and sends it to one or more channels. A source works in either data-driven or polling mode. Typical sources include the Avro, Thrift, Exec, Spooling Directory, Syslog, and Kafka sources. A source must be associated with at least one channel. |
| Channel | A channel buffers data between a source and a sink. After the sink delivers the data to the next hop or the final destination, the data is automatically removed from the channel. Persistence depends on the channel type: a Memory Channel does not persist data, a File Channel persists data by using a write-ahead log, and a JDBC Channel persists data in an embedded database. Channels support transactions, which keep operations simple and sequential. A channel can be connected to any number of sources and sinks. |
| Sink | A sink sends data to the next hop or the final destination and removes the data from the channel after the data is sent successfully. Typical sinks include the HDFS, HBase, Kafka, Avro, Thrift, and Logger sinks. A sink must be associated with exactly one channel. |
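The source/channel/sink hand-off, and the rule that a sink removes data from the channel only after a successful send, can be illustrated with a small Python model. This is a hypothetical sketch, not Flume's implementation: `Channel` and `sink_deliver` are invented names, the channel is an in-memory buffer with no persistence (comparable to a Memory Channel), and `send` is assumed to be any callable that returns `True` when the next hop accepts the batch.

```python
from collections import deque
from itertools import islice


class Channel:
    """Hypothetical in-memory channel: a bounded FIFO buffer with no persistence."""

    def __init__(self, capacity=100):
        self.capacity = capacity
        self.events = deque()

    def put(self, event):
        """Called by the source. Returns False when the buffer is full."""
        if len(self.events) >= self.capacity:
            return False
        self.events.append(event)
        return True

    def __len__(self):
        return len(self.events)


def sink_deliver(channel, send, batch_size=10):
    """Hypothetical sink step: send a batch, then remove it only if the send succeeded.

    Assumes a single sink drains the channel. Returns the number of events delivered.
    """
    batch = list(islice(channel.events, batch_size))  # peek without removing
    if not batch or not send(batch):
        return 0  # nothing buffered, or delivery failed: events stay in the channel
    for _ in batch:
        channel.events.popleft()  # "commit": drop the delivered events
    return len(batch)


if __name__ == "__main__":
    ch = Channel()
    for line in ["log line 1", "log line 2"]:
        ch.put(line)  # source -> channel
    print(sink_deliver(ch, lambda batch: False), len(ch))  # prints: 0 2
    received = []
    print(sink_deliver(ch, lambda batch: received.extend(batch) or True), len(ch))  # prints: 2 0
```

Real Flume channels expose explicit transactions (begin, commit, rollback); the peek-then-remove step above only approximates that behavior for a single consumer.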

Each Flume agent can be configured with multiple sources, channels, and sinks. For example, one source can send data to multiple channels, and multiple sinks can then send the data to the next agent or to different destinations.
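The fan-out pattern can be sketched as follows: one source copies every event into each of its channels (the behavior of Flume's default replicating channel selector), and each sink drains the one channel it is bound to. The sink and channel names and the `run_fan_out` helper are hypothetical, and the channels are plain in-memory queues.

```python
from collections import deque


def replicate(event, channels):
    """Source side: copy one event into every channel bound to the source."""
    for channel in channels:
        channel.append(event)


def run_fan_out(events, sink_bindings):
    """Fan events out to all channels, then let each sink drain its own channel.

    sink_bindings maps a (hypothetical) sink name to the channel it reads from.
    Returns the events each sink delivered.
    """
    channels = {name: deque() for name in set(sink_bindings.values())}
    for event in events:
        replicate(event, channels.values())

    delivered = {sink: [] for sink in sink_bindings}
    for sink, channel_name in sink_bindings.items():
        channel = channels[channel_name]
        while channel:
            delivered[sink].append(channel.popleft())
    return delivered


if __name__ == "__main__":
    bindings = {"hdfs_sink": "channel1", "kafka_sink": "channel2"}
    print(run_fan_out(["e1", "e2"], bindings))
```

In this sketch, as in Flume, an event taken by one sink is no longer available to another sink on the same channel, so sending the same data to several destinations requires separate channels.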

Multiple Flume agents can be cascaded: the sink of one agent sends data to the source of another agent.
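A cascade can be modelled as a chain in which each agent's sink calls the next agent's source. In this hypothetical sketch, `Agent`, `receive`, and `flush` are invented names; a real cascade sends events over the network (for example with the Avro or Thrift protocol), whereas here each hop is a direct function call.

```python
from collections import deque


class Agent:
    """Hypothetical agent: its source fills a channel and its sink forwards to next_hop."""

    def __init__(self, name, next_hop):
        self.name = name
        self.channel = deque()
        self.next_hop = next_hop  # next agent's receive(), or a final destination

    def receive(self, event):
        """Source: accept an event (for example, from an upstream agent's sink)."""
        self.channel.append(event)

    def flush(self):
        """Sink: forward every buffered event to the next hop, oldest first."""
        while self.channel:
            self.next_hop(self.channel.popleft())


if __name__ == "__main__":
    storage = []                               # stands in for HDFS, Kafka, and so on
    server = Agent("server", storage.append)   # aggregation agent
    client = Agent("client", server.receive)   # client agent cascades into the server
    client.receive("app started")
    client.flush()   # client sink -> server source
    server.flush()   # server sink -> storage
    print(storage)
```

Data only reaches the final destination after every agent in the chain has forwarded it, which is why each hop buffers events in its own channel.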

Supplementary Information

- Flume provides the following reliability measures:

  - The transaction mechanism is implemented between sources and channels, and between channels and sinks.

  - The sink processor supports the failover and load balancing (load_balance) mechanisms.

- Observe the following precautions when aggregating and cascading multiple Flume clients:

  - Either the Avro or the Thrift protocol can be used for cascading.

  - When the aggregation end contains multiple nodes, distribute the clients evenly across these nodes. Do not connect all the clients to a single node.

- The Flume client can contain multiple independent data flows. That is, multiple sources, channels, and sinks can be configured in the properties.properties configuration file and linked to form multiple flows.

For example, to configure two data flows in one configuration file, add the following settings:

```properties
server.sources = source1 source2
server.sinks = sink1 sink2
server.channels = channel1 channel2

# dataflow1
server.sources.source1.channels = channel1
server.sinks.sink1.channel = channel1

# dataflow2
server.sources.source2.channels = channel2
server.sinks.sink2.channel = channel2
```
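To check that the flows in a two-flow configuration like the one above are wired as intended, the file can be parsed and each channel grouped with the sources that write to it and the sinks that drain it. This is a hypothetical helper, not part of Flume: it understands only simple `key = value` lines and `#` comments (not the full Java properties syntax), and it assumes the agent name `server` used in the example.

```python
CONFIG = """\
server.sources = source1 source2
server.sinks = sink1 sink2
server.channels = channel1 channel2
# dataflow1
server.sources.source1.channels = channel1
server.sinks.sink1.channel = channel1
# dataflow2
server.sources.source2.channels = channel2
server.sinks.sink2.channel = channel2
"""


def parse_properties(text):
    """Parse simple 'key = value' lines, skipping blank lines and '#' comments."""
    props = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        key, _, value = line.partition("=")
        props[key.strip()] = value.strip()
    return props


def data_flows(props, agent):
    """Map each declared channel to the sources feeding it and the sinks draining it."""
    flows = {ch: {"sources": [], "sinks": []} for ch in props[f"{agent}.channels"].split()}

    def check(component, channel):
        if channel not in flows:
            raise ValueError(f"{component} refers to undeclared channel {channel}")

    for source in props.get(f"{agent}.sources", "").split():
        # A source may write to several channels (space-separated list).
        for channel in props[f"{agent}.sources.{source}.channels"].split():
            check(source, channel)
            flows[channel]["sources"].append(source)
    for sink in props.get(f"{agent}.sinks", "").split():
        # A sink reads from exactly one channel.
        channel = props[f"{agent}.sinks.{sink}.channel"]
        check(sink, channel)
        flows[channel]["sinks"].append(sink)
    return flows


if __name__ == "__main__":
    for channel, ends in data_flows(parse_properties(CONFIG), "server").items():
        print(channel, ends["sources"], "->", ends["sinks"])
```

Each printed line corresponds to one independent flow; a channel with sources but no sinks (or the reverse) would indicate a wiring mistake.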
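The two sink processor policies named under the reliability measures, failover and load balancing, can be sketched as selection rules over a group of sinks. This is an illustrative model rather than Flume's implementation: `failover_pick` and `RoundRobinBalancer` are hypothetical names, sink health is supplied by the caller as a dictionary, and a larger priority number is assumed to mean a preferred sink.

```python
def failover_pick(sinks, priority, healthy):
    """Failover: return the healthy sink with the highest priority, or None if all are down."""
    candidates = [s for s in sinks if healthy.get(s, False)]
    if not candidates:
        return None
    return max(candidates, key=lambda s: priority[s])


class RoundRobinBalancer:
    """Load balancing: hand out sinks in turn, skipping sinks marked unhealthy."""

    def __init__(self, sinks):
        self.sinks = list(sinks)
        self._next = 0  # index of the sink to try first on the next call

    def pick(self, healthy):
        for _ in range(len(self.sinks)):
            sink = self.sinks[self._next]
            self._next = (self._next + 1) % len(self.sinks)
            if healthy.get(sink, False):
                return sink
        return None  # no healthy sink in the group


if __name__ == "__main__":
    up = {"sink1": False, "sink2": True, "sink3": True}
    print(failover_pick(["sink1", "sink2"], {"sink1": 10, "sink2": 5}, up))  # prints: sink2
    lb = RoundRobinBalancer(["sink1", "sink2", "sink3"])
    print([lb.pick(up) for _ in range(4)])  # prints: ['sink2', 'sink3', 'sink2', 'sink3']
```

Failover keeps all traffic on one preferred sink and switches only when it fails, while load balancing spreads traffic across every healthy sink. Flume's load_balance processor also offers a random selector; round robin is shown here because it is the default.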
