Using Flink Jobs to Process OBS Data

Application Scenarios

MRS supports decoupled storage and compute in scenarios where a large storage capacity is required and compute resources need to be scaled on demand. This allows you to store your data in OBS and use an MRS cluster only for data computing.

This practice instructs you on how to run Flink jobs in an MRS cluster to process data stored in OBS.

Solution Architecture

Flink is a unified computing framework that supports both batch processing and stream processing. It provides a stream data processing engine that supports data distribution and parallel computing. Flink features stream processing and is a top open-source stream processing engine in the industry.

Flink provides high-concurrency pipeline data processing, millisecond-level latency, and high reliability, making it suitable for low-latency data processing.

In this example, the Flink WordCount job program built in the MRS cluster is used to analyze the source data stored in the OBS file system and compute the frequency of words in the source data.

You can also obtain MRS sample code project and develop other Flink stream job programs by referring to Flink Development Guide.

Procedure

The operation process is as follows:

Step 1: Creating an MRS Cluster

Create and purchase an MRS cluster that contains the Flink component. For details, see Buying a Custom Cluster.

In this practice, an MRS 3.1.0 cluster with Kerberos authentication disabled is used as an example.

In this example, before you analyze data stored in OBS, bind an IAM agency to the MRS cluster so that cluster components can connect to the OBS file system and have operation permissions on file system directories.

You can select the default MRS_ECS_DEFAULT_AGENCY agency or create a custom agency that has the permission to operate the OBS file system.

After the cluster is purchased, install the cluster client on any node of the cluster as user omm. For details, see Installing and Using the Cluster Client.

Assume that the client is installed in /opt/client.

Step 2: Preparing Test Data

Before you create a Flink job for data analysis, prepare test data to be analyzed and upload the data to OBS.

- Create a file named mrs_flink_test.txt on your local PC. For example, the file content is as follows:

This is a test demo for MRS Flink. Flink is a unified computing framework that supports both batch processing and stream processing. It provides a stream data processing engine that supports data distribution and parallel computing.

- Choose Service List > Storage > Object Storage Service.

- On the OBS management console that is displayed, choose Parallel File Systems in the navigation pane on the left. On the page displayed, click Create Parallel File System and set required parameters to create a parallel file system. After the system is created, upload the test data to it.

For example, if the created file system is named mrs-demo-data, click the system name, and click the Files tab. On this tab page, click Create Folder to create a folder named flink and upload the test data to the folder.

In this example, the complete path of the test data is obs://mrs-demo-data/flink/mrs_flink_test.txt.

Figure 1 Uploading test data

- (Optional) Uploading Data Analysis Applications

You can upload the JAR files of the Flink applications developed by yourself to OBS or HDFS of the MRS cluster.

In this example, the Flink WordCount sample program built in the MRS cluster is used. You can obtain the sample program from the MRS cluster client installation directory, that is, /opt/client/Flink/flink/examples/batch/WordCount.jar.

Upload WordCount.jar to the mrs-demo-data/program directory.

Step 3: Creating and Running a Flink Job

Method 1: Submit a job online on the console.

- Log in to the MRS management console and click the cluster name to go to the cluster details page.

- On the Dashboard tab page, click Synchronize next to IAM User Sync to synchronize IAM users.

- Click the Jobs tab.

- Click Create. In the Create Job dialog box that is displayed, set the following parameters to create a Flink job.

- Type: Select Flink.

- Name: Customize a job name, for example, flink_obs_test.

- Program Path: In this example, the WordCount program of the Flink client is used.

- Program Parameter: Use the default value.

- Parameters: Enter the input and output parameters of the application. The input parameter indicates the test data to be analyzed, and the output parameter indicates the result output file.

In this example, set this parameter to --input obs://mrs-demo-data/flink/mrs_flink_test.txt --output obs://mrs-demo-data/flink/output.

- Service Parameter: Use the default values. For details about how to manually configure job parameters, see Running a Flink Job.

- Confirm the job configuration information and click OK.

Method 2: Submit a job using the cluster client.

- Log in to the node where the cluster client is installed as user root and go to the client installation directory.

su - omm

cd /opt/client

source bigdata_env

- Run the following command to check whether the cluster can access OBS:

hdfs dfs -ls obs://mrs-demo-data/flink

- Submit a Flink job and specify the source file data for consumption.

flink run -m yarn-cluster /opt/client/Flink/flink/examples/batch/WordCount.jar --input obs://mrs-demo-data/flink/mrs_flink_test.txt --output obs://mrs-demo/data/flink/output2

... Cluster started: Yarn cluster with application id application_1654672374562_0011 Job has been submitted with JobID a89b561de5d0298cb2ba01fbc30338bc Program execution finished Job with JobID a89b561de5d0298cb2ba01fbc30338bc has finished. Job Runtime: 1200 ms

Step 4: Viewing Job Execution Results

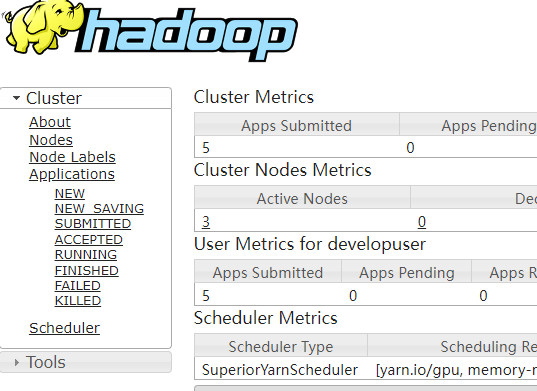

- After the job is submitted, log in to FusionInsight Manager of the MRS cluster and choose Cluster > Services > Yarn.

- Click the link next to ResourceManager WebUI to access the native Yarn web UI. On the All Applications page that is displayed, choose Applications on the left, and view the job running status and run logs.

- After the job execution is complete, you can view the data analysis result in the specified result output file in the OBS file system.

Download the output file to your local PC and open the file to view the analysis result.

a 3 and 2 batch 1 both 1 computing 2 data 2 demo 1 distribution 1 engine 1 flink 2 for 1 framework 1 is 2 it 1 mrs 1 parallel 1 processing 3 provides 1 stream 2 supports 2 test 1 that 2 this 1 unified 1

If you do not specify the output directory when submitting a job using the cluster client CLI, you can view the data analysis result on the job running page.

Job with JobID xxx has finished. Job Runtime: xxx ms Accumulator Results: - e6209f96ffa423974f8c7043821814e9 (java.util.ArrayList) [31 elements] (a,3) (and,2) (batch,1) (both,1) (computing,2) (data,2) (demo,1) (distribution,1) (engine,1) (flink,2) (for,1) (framework,1) (is,2) (it,1) (mrs,1) (parallel,1) (processing,3) (provides,1) (stream,2) (supports,2) (test,1) (that,2) (this,1) (unified,1)

Feedback

Was this page helpful?

Provide feedbackThank you very much for your feedback. We will continue working to improve the documentation.